
Capsule Neural Network

A capsule neural network (CapsNet) is a type of artificial neural network (ANN) that can be used to better model hierarchical relationships. The approach is an attempt to more closely mimic biological neural organization. The idea is to add structures called "capsules" to a convolutional neural network (CNN), and to reuse output from several of those capsules to form representations for higher capsules that are more stable with respect to various perturbations. The output is a vector consisting of the probability of an observation and a pose for that observation. This vector is similar to the output produced when doing classification with localization in CNNs. Among other benefits, CapsNets address the "Picasso problem" in image recognition: images that have all the right parts but not in the correct spatial relationship (e.g., in a "face", the positions of the mouth and one eye are switched). For image recognition, CapsNets explo ...

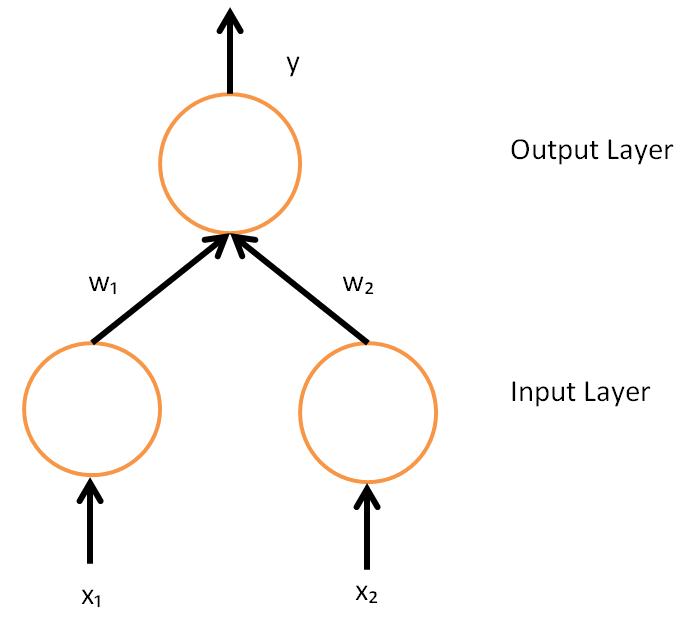

Artificial Neural Network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains. An ANN is based on a collection of connected units or nodes called artificial neurons, which loosely model the neurons in a biological brain. Each connection, like the synapses in a biological brain, can transmit a signal to other neurons. An artificial neuron receives signals, processes them, and can signal the neurons connected to it. The "signal" at a connection is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs. The connections are called edges. Neurons and edges typically have a weight that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Neurons may have a threshold such that a signal is sent only if the aggregate signal crosses that threshold. Typically ...
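
As a minimal sketch of the single-neuron computation described above (a weighted sum of inputs passed through a non-linear function, with an optional threshold), the following may help. The function name `neuron_output`, the `bias` parameter, and the choice of the logistic sigmoid as the non-linearity are assumptions made for illustration; the text does not prescribe them.

```python
import math

def neuron_output(inputs, weights, bias=0.0, threshold=None):
    """Illustrative artificial neuron (hypothetical helper, not a library API).

    Computes the weighted sum of the inputs, optionally suppresses the
    signal when the sum is below `threshold`, and otherwise applies a
    logistic sigmoid as the non-linear function.
    """
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    if threshold is not None and total < threshold:
        return 0.0  # aggregate signal did not cross the threshold
    return 1.0 / (1.0 + math.exp(-total))
```

Raising a weight strengthens the contribution of the corresponding input to the sum, which is the sense in which learning "adjusts" the signal at a connection.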

Hough Transform

The Hough transform is a feature extraction technique used in image analysis, computer vision, and digital image processing. The purpose of the technique is to find imperfect instances of objects within a certain class of shapes by a voting procedure. This voting procedure is carried out in a parameter space, from which object candidates are obtained as local maxima in a so-called accumulator space that is explicitly constructed by the algorithm for computing the Hough transform. The classical Hough transform was concerned with the identification of lines in the image, but the transform was later extended to identifying positions of arbitrary shapes, most commonly circles or ellipses. The Hough transform as it is universally used today was invented by Richard Duda and Peter Hart in 1972, who called it a "generalized Hough transform" after the related 1962 patent of Paul Hough. The transform was popularized in the computer vision community by Dana H. Ballard thro ...
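
The voting procedure can be sketched for the classical line-detection case. This example assumes the common normal-form parameterization of a line, rho = x cos(theta) + y sin(theta), and uses a dictionary-style accumulator; the function name `hough_lines` and the parameters `n_theta` and `rho_step` are hypothetical choices for illustration, not part of any particular library.

```python
import math
from collections import Counter

def hough_lines(points, n_theta=180, rho_step=1.0):
    """Build a (rho, theta) accumulator for a set of (x, y) points.

    Each point casts one vote per sampled angle theta, in the bin of the
    line rho = x*cos(theta) + y*sin(theta) that passes through it.
    Keys are (rho_bin, theta_index); values are vote counts.
    """
    accumulator = Counter()
    for x, y in points:
        for t in range(n_theta):
            theta = math.pi * t / n_theta
            rho = x * math.cos(theta) + y * math.sin(theta)
            accumulator[(round(rho / rho_step), t)] += 1
    return accumulator

# Ten points on the horizontal line y = 5.
points = [(x, 5) for x in range(10)]
accumulator = hough_lines(points)
# Line candidates are the bins with the most votes (maxima of the accumulator).
candidates = accumulator.most_common(3)
```

With coarse bins and a short segment, several neighbouring bins can tie for the maximum, which is why practical implementations look for local maxima rather than a single global peak.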

Stellate Cell

Stellate cells are neurons in the central nervous system, named for their star-like shape formed by dendritic processes radiating from the cell body. Many stellate cells are GABAergic and are located in the molecular layer of the cerebellum. Stellate cells are derived from dividing progenitor cells in the white matter of the postnatal cerebellum. Dendritic trees can vary between neurons. The cerebral cortex contains two types of dendritic trees: those of pyramidal cells, which are pyramid-shaped, and those of stellate cells, which are star-shaped. Dendrites can also aid neuron classification. Dendrites with spines are classified as spiny; those without spines are classified as aspinous. Stellate cells can be spiny or aspinous, while pyramidal cells are always spiny. The most common stellate cells are the inhibitory interneurons found within the upper half of the molecular layer in the cerebellum. Cerebellar stellate cells synapse onto the dendritic trees of Purkinje cells and send inhibit ...

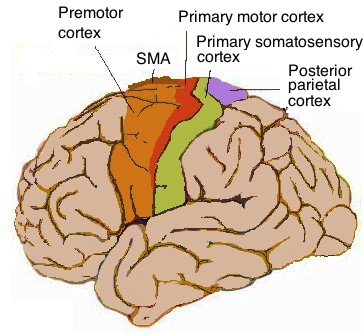

Cerebral Cortex

The cerebral cortex, also known as the cerebral mantle, is the outer layer of neural tissue of the cerebrum of the brain in humans and other mammals. The cerebral cortex mostly consists of the six-layered neocortex, with just 10% consisting of allocortex. It is separated into two cortices by the longitudinal fissure that divides the cerebrum into the left and right cerebral hemispheres. The two hemispheres are joined beneath the cortex by the corpus callosum. The cerebral cortex is the largest site of neural integration in the central nervous system. It plays a key role in attention, perception, awareness, thought, memory, language, and consciousness. The cerebral cortex is the part of the brain responsible for cognition. In most mammals, apart from small mammals that have small brains, the cerebral cortex is folded, providing a greater surface area in the confined volume of the cranium. Apart from minimising brain and cranial volume, cortical folding is crucial for the brain ...

Chandelier Cell

Chandelier neurons or chandelier cells are a subset of GABAergic cortical interneurons. They are described as parvalbumin-containing and fast-spiking to distinguish them from other subtypes of GABAergic neurons, although more recent work has suggested that only a subset of chandelier cells test positive for parvalbumin by immunostaining. The name comes from the specific shape of their axon arbors, with the terminals forming distinct arrays called "cartridges". The cartridges are immunoreactive to an isoform of the GABA membrane transporter, GAT-1, and this serves as their identifying feature. GAT-1 is involved in the process of GABA reuptake into nerve terminals, thus helping to terminate its synaptic activity. Chandelier neurons synapse exclusively onto the axon initial segment of pyramidal neurons, near the site where the action potential is generated. It is believed that they provide inhibitory input to the pyramidal neurons, but there are data showing that in some circumstances ...

Winner-take-all (computing)

Winner-take-all is a computational principle applied in computational models of neural networks by which neurons compete with each other for activation. In the classical form, only the neuron with the highest activation stays active while all other neurons shut down; however, other variations allow more than one neuron to be active, for example the soft winner-take-all, by which a power function is applied to the neurons. In the theory of artificial neural networks, winner-take-all networks are a case of competitive learning in recurrent neural networks. Output nodes in the network mutually inhibit each other, while simultaneously activating themselves through reflexive connections. After some time, only one node in the output layer will be active, namely the one corresponding to the strongest input. Thus the network uses nonlinear inhibition to pick out the largest of a set of inputs. Winner-take-all is a general computational primitive that can be implemented usin ...
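
The end result of the classical and soft forms can be sketched as follows. This is only an illustration of the outcome, not of the recurrent inhibition dynamics that produce it. The function names are hypothetical, and the soft variant shown here (raise each activation to a power, then normalize so the values sum to 1) is one assumed reading of "a power function is applied to the neurons"; it also assumes non-negative activations that are not all zero.

```python
def winner_take_all(activations):
    """Classical form: keep the highest activation, silence all others."""
    winner = max(range(len(activations)), key=lambda i: activations[i])
    return [a if i == winner else 0.0 for i, a in enumerate(activations)]

def soft_winner_take_all(activations, power=4.0):
    """Assumed soft form: power function followed by normalization.

    Larger `power` values push the result closer to the classical form.
    Expects non-negative activations with at least one positive value.
    """
    powered = [a ** power for a in activations]
    total = sum(powered)
    return [p / total for p in powered]
```

In the soft variant every neuron may remain partly active, but the strongest input dominates increasingly as `power` grows.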

Prior Probability

In Bayesian statistical inference, a prior probability distribution, often simply called the prior, of an uncertain quantity is the probability distribution that would express one's beliefs about this quantity before some evidence is taken into account. For example, the prior could be the probability distribution representing the relative proportions of voters who will vote for a particular politician in a future election. The unknown quantity may be a parameter of the model or a latent variable rather than an observable variable. Bayes' theorem calculates the renormalized pointwise product of the prior and the likelihood function, to produce the posterior probability distribution, which is the conditional distribution of the uncertain quantity given the data. Similarly, the prior probability of a random event or an uncertain proposition is the unconditional probability that is assigned before any relevant evidence is taken into account. Priors can be created using a num ...

Log Probability

In probability theory and computer science, a log probability is simply a logarithm of a probability. The use of log probabilities means representing probabilities on a logarithmic scale, instead of the standard [0, 1] unit interval. Since the probabilities of independent events multiply, and logarithms convert multiplication to addition, log probabilities of independent events add. Log probabilities are thus practical for computations, and have an intuitive interpretation in terms of information theory: the negative of the average log probability is the information entropy of an event. Similarly, likelihoods are often transformed to the log scale, and the corresponding log-likelihood can be interpreted as the degree to which an event supports a statistical model. The log probability is widely used in implementations of computations with probability, and is studied as a concept in its own right in some applications of ...
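
A short sketch of why adding log probabilities is practical: the sum of the logs equals the log of the product, and it remains finite where the direct product of many small probabilities underflows in floating point. The helper name `joint_log_prob` is an assumption for illustration, and the natural logarithm is used.

```python
import math

def joint_log_prob(probs):
    """Log probability of independent events all occurring.

    Sums natural-log probabilities instead of multiplying probabilities.
    """
    return sum(math.log(p) for p in probs)

# Two independent events with probabilities 0.5 and 0.25:
# log(0.5) + log(0.25) equals log(0.5 * 0.25) up to rounding.
small = joint_log_prob([0.5, 0.25])

# 200 independent events of probability 0.01: the direct product is far
# below the smallest representable double, but the log-scale sum is an
# ordinary finite number.
many = joint_log_prob([0.01] * 200)
```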

Logit

In statistics, the logit function is the quantile function associated with the standard logistic distribution. It has many uses in data analysis and machine learning, especially in data transformations. Mathematically, the logit is the inverse of the standard logistic function $\sigma(x) = 1/(1+e^{-x})$, so the logit is defined as $\operatorname{logit} p = \sigma^{-1}(p) = \ln \frac{p}{1-p}$ for $p \in (0,1)$. Because of this, the logit is also called the log-odds, since it is equal to the logarithm of the odds $\frac{p}{1-p}$, where $p$ is a probability. Thus, the logit is a type of function that maps probability values from $(0, 1)$ to real numbers in $(-\infty, +\infty)$, akin to the probit function. If $p$ is a probability, then $p/(1-p)$ is the corresponding odds; the logit of the probability is the logarithm of the odds, i.e.: $\operatorname{logit}(p) = \ln\left(\frac{p}{1-p}\right) = \ln(p) - \ln(1-p) = -\ln\left(\frac{1}{p}-1\right) = 2\operatorname{atanh}(2p-1)$. The base of the logarithm function used is of little importance in t ...
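
The inverse relationship between the logit and the standard logistic function can be checked numerically. This is a minimal sketch using the natural logarithm; the function names `sigmoid` and `logit` are chosen for illustration.

```python
import math

def sigmoid(x):
    """Standard logistic function: 1 / (1 + e^(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def logit(p):
    """Log-odds of a probability p in the open interval (0, 1)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    return math.log(p / (1.0 - p))

# Round trip: applying sigmoid to logit(p) recovers p up to rounding.
p = 0.8
recovered = sigmoid(logit(p))
```

A probability of 0.5 corresponds to even odds and therefore to a logit of exactly 0.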

Softmax Function

The softmax function, also known as softargmax or normalized exponential function, converts a vector of real numbers into a probability distribution of possible outcomes. It is a generalization of the logistic function to multiple dimensions, and is used in multinomial logistic regression. The softmax function is often used as the last activation function of a neural network to normalize the output of a network to a probability distribution over predicted output classes, based on Luce's choice axiom. The softmax function takes as input a vector of real numbers, and normalizes it into a probability distribution consisting of probabilities proportional to the exponentials of the input numbers. That is, prior to applying softmax, some vector components could be negative, or greater than one, and might not sum to 1; but after applying softmax, each component will be in the interval (0, 1), and the components will add up to 1, so that they can be interpreted as probab ...
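
A minimal sketch of the normalization described above. Subtracting the maximum input before exponentiating is an implementation choice added here (an assumption, not something the text states) to avoid overflow; it does not change the result, because the common factor cancels in the ratio.

```python
import math

def softmax(values):
    """Map a list of real numbers to probabilities proportional to exp(value)."""
    shift = max(values)  # stability shift; cancels out after normalization
    exps = [math.exp(v - shift) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

# Inputs may be negative or greater than one and need not sum to 1.
probabilities = softmax([-1.0, 0.5, 3.0])
```

Each output lies in the interval (0, 1), the outputs sum to 1, and larger inputs receive larger probabilities.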

Dot Product

In mathematics, the dot product or scalar product (the term "scalar product" means literally "product with a scalar as a result"; it is also sometimes used for other symmetric bilinear forms, for example in a pseudo-Euclidean space) is an algebraic operation that takes two equal-length sequences of numbers (usually coordinate vectors), and returns a single number. In Euclidean geometry, the dot product of the Cartesian coordinates of two vectors is widely used. It is often called the inner product (or rarely projection product) of Euclidean space, even though it is not the only inner product that can be defined on Euclidean space (see Inner product space for more). Algebraically, the dot product is the sum of the products of the corresponding entries of the two sequences of numbers. Geometrically, it is the product of the Euclidean magnitudes of the two vectors and the cosine of the angle between them. These definitions are equivalent when using Cartesian coordinates. In mo ...
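
The algebraic and geometric definitions can be compared on a small example. The helper names `dot` and `norm` are illustrative; the vectors are plain Python lists of Cartesian coordinates.

```python
import math

def dot(a, b):
    """Algebraic definition: sum of products of corresponding entries."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean magnitude of a vector."""
    return math.sqrt(dot(a, a))

# Geometric definition for two vectors with a known 45-degree angle:
# |a| * |b| * cos(angle) should agree with dot(a, b).
a, b = [1.0, 0.0], [1.0, 1.0]
geometric = norm(a) * norm(b) * math.cos(math.pi / 4)
algebraic = dot(a, b)
```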

Backpropagation

In machine learning, backpropagation (backprop, BP) is a widely used algorithm for training feedforward artificial neural networks. Generalizations of backpropagation exist for other artificial neural networks (ANNs), and for functions generally. These classes of algorithms are all referred to generically as "backpropagation". In fitting a neural network, backpropagation computes the gradient of the loss function with respect to the weights of the network for a single input–output example, and does so efficiently, unlike a naive direct computation of the gradient with respect to each weight individually. This efficiency makes it feasible to use gradient methods for training multilayer networks, updating weights to minimize loss; gradient descent, or variants such as stochastic gradient descent, are commonly used. The backpropagation algorithm works by ...
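
To make "the gradient of the loss function with respect to the weights ... for a single input–output example" concrete, here is a minimal sketch on a deliberately tiny network: one input, one tanh hidden unit, one linear output, and a squared-error loss. The architecture, the loss, and all names (`forward`, `loss`, `backprop`, `w1`, `w2`) are assumptions chosen for illustration; the gradients are obtained by applying the chain rule from the output back toward the input.

```python
import math

def forward(w1, w2, x):
    """Forward pass: hidden activation h = tanh(w1 * x), output y = w2 * h."""
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y

def loss(w1, w2, x, target):
    """Squared-error loss for a single input-output example."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2

def backprop(w1, w2, x, target):
    """Gradient of the loss with respect to (w1, w2) via the chain rule."""
    h, y = forward(w1, w2, x)
    dL_dy = y - target                  # derivative of 0.5 * (y - target)^2
    dL_dw2 = dL_dy * h                  # y = w2 * h
    dL_dh = dL_dy * w2                  # propagate the error back to h
    dL_dw1 = dL_dh * (1.0 - h * h) * x  # tanh'(z) = 1 - tanh(z)^2
    return dL_dw1, dL_dw2

# One gradient-descent update with a hypothetical learning rate of 0.1.
w1, w2, x, target = 0.5, -1.2, 2.0, 0.3
g1, g2 = backprop(w1, w2, x, target)
w1_new, w2_new = w1 - 0.1 * g1, w2 - 0.1 * g2
```

The intermediate quantity `dL_dy` is computed once and reused for both weights, which is the kind of sharing that distinguishes backpropagation from differentiating with respect to each weight separately.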