|

Viterbi Algorithm

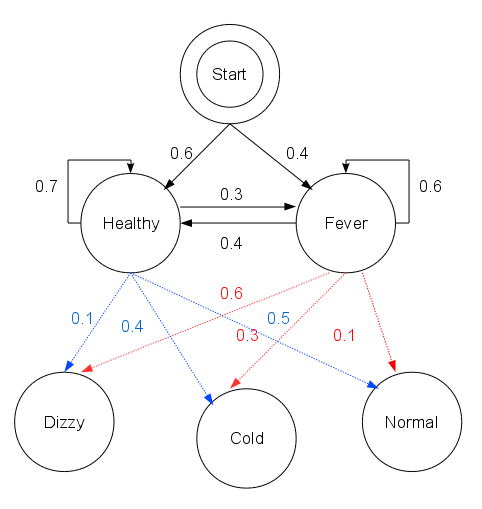

The Viterbi algorithm is a dynamic programming algorithm for obtaining the maximum a posteriori probability estimate of the most likely sequence of hidden states—called the Viterbi path—that results in a sequence of observed events. This is done especially in the context of Markov information sources and hidden Markov models (HMM). The algorithm has found universal application in decoding the convolutional codes used in both CDMA and GSM digital cellular, dial-up modems, satellite, deep-space communications, and 802.11 wireless LANs. It is now also commonly used in speech recognition, speech synthesis, diarization, keyword spotting, computational linguistics, and bioinformatics. For example, in speech-to-text (speech recognition), the acoustic signal is treated as the observed sequence of events, and a string of text is considered to be the "hidden cause" of the acoustic signal. The Viterbi algorithm finds the most likely string of text given the acoustic signal. His ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Convolution Code

In telecommunication, a convolutional code is a type of error-correcting code that generates parity symbols via the sliding application of a boolean polynomial function to a data stream. The sliding application represents the 'convolution' of the encoder over the data, which gives rise to the term 'convolutional coding'. The sliding nature of the convolutional codes facilitates trellis decoding using a time-invariant trellis. Time invariant trellis decoding allows convolutional codes to be maximum-likelihood soft-decision decoded with reasonable complexity. The ability to perform economical maximum likelihood soft decision decoding is one of the major benefits of convolutional codes. This is in contrast to classic block codes, which are generally represented by a time-variant trellis and therefore are typically hard-decision decoded. Convolutional codes are often characterized by the base code rate and the depth (or memory) of the encoder ,k,K/math>. The base code rate is ty ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Graphical Model

A graphical model or probabilistic graphical model (PGM) or structured probabilistic model is a probabilistic model for which a graph expresses the conditional dependence structure between random variables. Graphical models are commonly used in probability theory, statistics—particularly Bayesian statistics—and machine learning. Types of graphical models Generally, probabilistic graphical models use a graph-based representation as the foundation for encoding a distribution over a multi-dimensional space and a graph that is a compact or factorized representation of a set of independences that hold in the specific distribution. Two branches of graphical representations of distributions are commonly used, namely, Bayesian networks and Markov random fields. Both families encompass the properties of factorization and independences, but they differ in the set of independences they can encode and the factorization of the distribution that they induce. Undirected Graphical Model ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Latent Variable

In statistics, latent variables (from Latin: present participle of ) are variables that can only be inferred indirectly through a mathematical model from other observable variables that can be directly observed or measured. Such '' latent variable models'' are used in many disciplines, including engineering, medicine, ecology, physics, machine learning/artificial intelligence, natural language processing, bioinformatics, chemometrics, demography, economics, management, political science, psychology and the social sciences. Latent variables may correspond to aspects of physical reality. These could in principle be measured, but may not be for practical reasons. Among the earliest expressions of this idea is Francis Bacon's polemic the ''Novum Organum'', itself a challenge to the more traditional logic expressed in Aristotle's Organon: In this situation, the term ''hidden variables'' is commonly used, reflecting the fact that the variables are meaningful, but not observable ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Trellis Diagram

In telecommunication, a convolutional code is a type of error-correcting code that generates parity symbols via the sliding application of a boolean polynomial function to a data stream. The sliding application represents the 'convolution' of the encoder over the data, which gives rise to the term 'convolutional coding'. The sliding nature of the convolutional codes facilitates trellis decoding using a time-invariant trellis. Time invariant trellis decoding allows convolutional codes to be maximum-likelihood soft-decision decoded with reasonable complexity. The ability to perform economical maximum likelihood soft decision decoding is one of the major benefits of convolutional codes. This is in contrast to classic block codes, which are generally represented by a time-variant trellis and therefore are typically hard-decision decoded. Convolutional codes are often characterized by the base code rate and the depth (or memory) of the encoder ,k,K/math>. The base code rate is ty ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Markov Chain

In probability theory and statistics, a Markov chain or Markov process is a stochastic process describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event. Informally, this may be thought of as, "What happens next depends only on the state of affairs ''now''." A countably infinite sequence, in which the chain moves state at discrete time steps, gives a discrete-time Markov chain (DTMC). A continuous-time process is called a continuous-time Markov chain (CTMC). Markov processes are named in honor of the Russian mathematician Andrey Markov. Markov chains have many applications as statistical models of real-world processes. They provide the basis for general stochastic simulation methods known as Markov chain Monte Carlo, which are used for simulating sampling from complex probability distributions, and have found application in areas including Bayesian statistics, biology, chemistry, economics, fin ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Amortized Analysis

In computer science, amortized analysis is a method for analyzing a given algorithm's complexity, or how much of a resource, especially time or memory, it takes to execute. The motivation for amortized analysis is that looking at the worst-case run time can be too pessimistic. Instead, amortized analysis averages the running times of operations in a sequence over that sequence. As a conclusion: "Amortized analysis is a useful tool that complements other techniques such as worst-case and average-case analysis." For a given operation of an algorithm, certain situations (e.g., input parametrizations or data structure contents) may imply a significant cost in resources, whereas other situations may not be as costly. The amortized analysis considers both the costly and less costly operations together over the whole sequence of operations. This may include accounting for different types of input, length of the input, and other factors that affect its performance. History Amortize ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Arg Max

In mathematics, the arguments of the maxima (abbreviated arg max or argmax) and arguments of the minima (abbreviated arg min or argmin) are the input points at which a Function (mathematics), function output value is Maxima and minima, maximized and minimized, respectively.For clarity, we refer to the input (''x'') as ''points'' and the output (''y'') as ''values;'' compare critical point (mathematics), critical point and critical value (critical point), critical value. While the argument of a function, arguments are defined over the domain of a function, the output is part of its codomain. Definition Given an arbitrary set (mathematics), set a totally ordered set and a function, the \operatorname over some subset S of X is defined by :\operatorname_S f := \underset\, f(x) := \. If S = X or S is clear from the context, then S is often left out, as in \underset\, f(x) := \. In other words, \operatorname is the Set (mathematics), set of points x for which f(x) attains the f ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Optical Motion Tracking

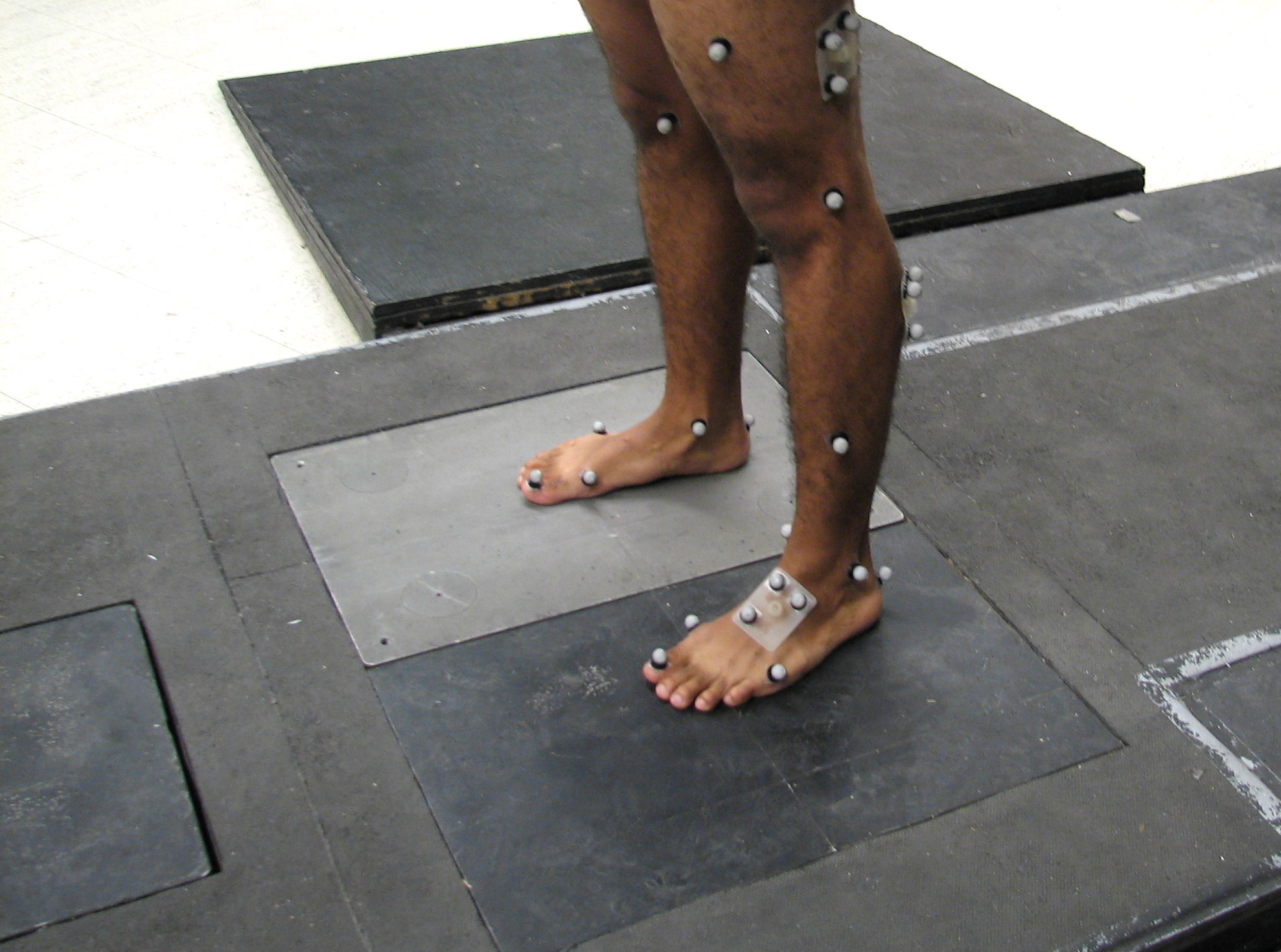

Motion capture (sometimes referred as mocap or mo-cap, for short) is the process of recording high-resolution movement of objects or people into a computer system. It is used in military, entertainment, sports, medical applications, and for validation of computer vision and robots. In films, television shows and video games, motion capture refers to recording actions of human actors and using that information to animate digital character models in 2D or 3D computer animation. When it includes face and fingers or captures subtle expressions, it is often referred to as performance capture. In many fields, motion capture is sometimes called motion tracking, but in filmmaking and games, motion tracking usually refers more to match moving. In motion capture sessions, movements of one or more actors are sampled many times per second. Whereas early techniques used images from multiple cameras to calculate 3D positions, often the purpose of motion capture is to record only the movem ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Part-of-speech Tagging

In corpus linguistics, part-of-speech tagging (POS tagging, PoS tagging, or POST), also called grammatical tagging, is the process of marking up a word in a text ( corpus) as corresponding to a particular part of speech, based on both its definition and its context. A simplified form of this is commonly taught to school-age children, in the identification of words as nouns, verbs, adjectives, adverbs, etc. Once performed by hand, POS tagging is now done in the context of computational linguistics, using algorithms which associate discrete terms, as well as hidden parts of speech, by a set of descriptive tags. POS-tagging algorithms fall into two distinctive groups: rule-based and stochastic. E. Brill's tagger, one of the first and most widely used English POS taggers, employs rule-based algorithms. Principle Part-of-speech tagging is harder than just having a list of words and their parts of speech, because some words can represent more than one part of speech at different t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Natural Language Processing

Natural language processing (NLP) is a subfield of computer science and especially artificial intelligence. It is primarily concerned with providing computers with the ability to process data encoded in natural language and is thus closely related to information retrieval, knowledge representation and computational linguistics, a subfield of linguistics. Major tasks in natural language processing are speech recognition, text classification, natural-language understanding, natural language understanding, and natural language generation. History Natural language processing has its roots in the 1950s. Already in 1950, Alan Turing published an article titled "Computing Machinery and Intelligence" which proposed what is now called the Turing test as a criterion of intelligence, though at the time that was not articulated as a problem separate from artificial intelligence. The proposed test includes a task that involves the automated interpretation and generation of natural language ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |