|

Spiking Neural Networks

Spiking neural networks (SNNs) are artificial neural networks that more closely mimic natural neural networks. In addition to neuronal A neuron, neurone, or nerve cell is an electrically excitable cell that communicates with other cells via specialized connections called synapses. The neuron is the main component of nervous tissue in all animals except sponges and placozoa. No ... and Electrical synapse, synaptic state, SNNs incorporate the concept of time into their Operating Model, operating model. The idea is that Artificial neuron, neurons in the SNN do not transmit information at each propagation cycle (as it happens with typical multi-layer perceptron, perceptron networks), but rather transmit information only when a membrane potential – an intrinsic quality of the neuron related to its membrane electrical charge – reaches a specific value, called the threshold. When the membrane potential reaches the threshold, the neuron fires, and generates a signal th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Neurotransmitter

A neurotransmitter is a signaling molecule secreted by a neuron to affect another cell across a synapse. The cell receiving the signal, any main body part or target cell, may be another neuron, but could also be a gland or muscle cell. Neurotransmitters are released from synaptic vesicles into the synaptic cleft where they are able to interact with neurotransmitter receptors on the target cell. The neurotransmitter's effect on the target cell is determined by the receptor it binds. Many neurotransmitters are synthesized from simple and plentiful precursors such as amino acids, which are readily available and often require a small number of biosynthetic steps for conversion. Neurotransmitters are essential to the function of complex neural systems. The exact number of unique neurotransmitters in humans is unknown, but more than 100 have been identified. Common neurotransmitters include glutamate, GABA, acetylcholine, glycine and norepinephrine. Mechanism and cycle Synthes ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Backpropagation

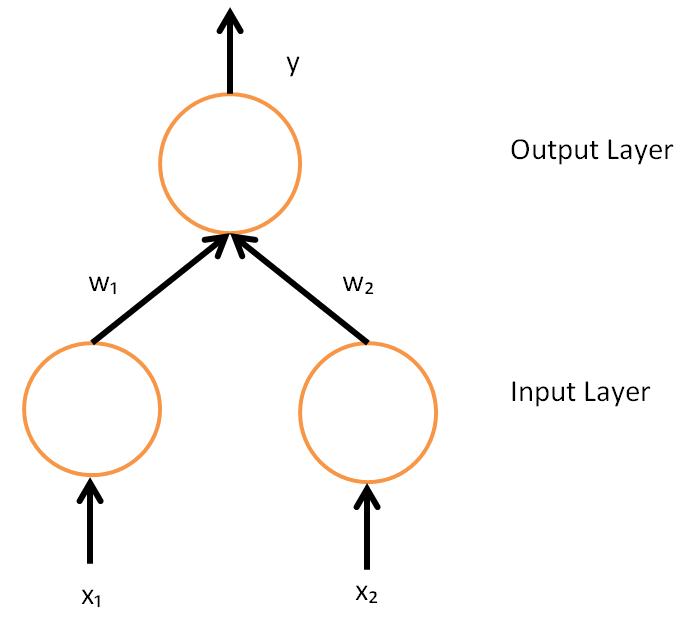

In machine learning, backpropagation (backprop, BP) is a widely used algorithm for training feedforward neural network, feedforward artificial neural networks. Generalizations of backpropagation exist for other artificial neural networks (ANNs), and for functions generally. These classes of algorithms are all referred to generically as "backpropagation". In Artificial neural network#Learning, fitting a neural network, backpropagation computes the gradient of the loss function with respect to the Glossary of graph theory terms#weight, weights of the network for a single input–output example, and does so Algorithmic efficiency, efficiently, unlike a naive direct computation of the gradient with respect to each weight individually. This efficiency makes it feasible to use gradient methods for training multilayer networks, updating weights to minimize loss; gradient descent, or variants such as stochastic gradient descent, are commonly used. The backpropagation algorithm works by ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Gradient Descent

In mathematics, gradient descent (also often called steepest descent) is a first-order iterative optimization algorithm for finding a local minimum of a differentiable function. The idea is to take repeated steps in the opposite direction of the gradient (or approximate gradient) of the function at the current point, because this is the direction of steepest descent. Conversely, stepping in the direction of the gradient will lead to a local maximum of that function; the procedure is then known as gradient ascent. Gradient descent is generally attributed to Augustin-Louis Cauchy, who first suggested it in 1847. Jacques Hadamard independently proposed a similar method in 1907. Its convergence properties for non-linear optimization problems were first studied by Haskell Curry in 1944, with the method becoming increasingly well-studied and used in the following decades. Description Gradient descent is based on the observation that if the multi-variable function F(\mathbf) is def ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Spike-timing-dependent Plasticity

Spike-timing-dependent plasticity (STDP) is a biological process that adjusts the strength of connections between neurons in the brain. The process adjusts the connection strengths based on the relative timing of a particular neuron's output and input action potentials (or spikes). The STDP process partially explains the activity-dependent development of nervous systems, especially with regard to long-term potentiation and long-term depression. Process Under the STDP process, if an input spike to a neuron tends, on average, to occur immediately ''before'' that neuron's output spike, then that particular input is made somewhat stronger. If an input spike tends, on average, to occur immediately ''after'' an output spike, then that particular input is made somewhat weaker hence: "spike-timing-dependent plasticity". Thus, inputs that might be the cause of the post-synaptic neuron's excitation are made even more likely to contribute in the future, whereas inputs that are not the cause ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hebbian Theory

Hebbian theory is a neuroscientific theory claiming that an increase in synaptic efficacy arises from a presynaptic cell's repeated and persistent stimulation of a postsynaptic cell. It is an attempt to explain synaptic plasticity, the adaptation of brain neurons during the learning process. It was introduced by Donald Hebb in his 1949 book ''The Organization of Behavior.'' The theory is also called Hebb's rule, Hebb's postulate, and cell assembly theory. Hebb states it as follows: Let us assume that the persistence or repetition of a reverberatory activity (or "trace") tends to induce lasting cellular changes that add to its stability. ... When an axon of cell ''A'' is near enough to excite a cell ''B'' and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that ''A''’s efficiency, as one of the cells firing ''B'', is increased. The theory is often summarized as "Cells that fire together wire toget ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Recurrent Neural Network

A recurrent neural network (RNN) is a class of artificial neural networks where connections between nodes can create a cycle, allowing output from some nodes to affect subsequent input to the same nodes. This allows it to exhibit temporal dynamic behavior. Derived from feedforward neural networks, RNNs can use their internal state (memory) to process variable length sequences of inputs. This makes them applicable to tasks such as unsegmented, connected handwriting recognition or speech recognition. Recurrent neural networks are theoretically Turing complete and can run arbitrary programs to process arbitrary sequences of inputs. The term "recurrent neural network" is used to refer to the class of networks with an infinite impulse response, whereas "convolutional neural network" refers to the class of finite impulse response. Both classes of networks exhibit temporal dynamic behavior. A finite impulse recurrent network is a directed acyclic graph that can be unrolled and replace ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Convolutional Neural Network

In deep learning, a convolutional neural network (CNN, or ConvNet) is a class of artificial neural network (ANN), most commonly applied to analyze visual imagery. CNNs are also known as Shift Invariant or Space Invariant Artificial Neural Networks (SIANN), based on the shared-weight architecture of the convolution kernels or filters that slide along input features and provide translation-equivariant responses known as feature maps. Counter-intuitively, most convolutional neural networks are not invariant to translation, due to the downsampling operation they apply to the input. They have applications in image and video recognition, recommender systems, image classification, image segmentation, medical image analysis, natural language processing, brain–computer interfaces, and financial time series. CNNs are regularized versions of multilayer perceptrons. Multilayer perceptrons usually mean fully connected networks, that is, each neuron in one layer is connected to all neuro ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Temporal Coding

Neural coding (or Neural representation) is a neuroscience field concerned with characterising the hypothetical relationship between the stimulus and the individual or ensemble neuronal responses and the relationship among the electrical activity of the neurons in the ensemble. Based on the theory that sensory and other information is represented in the brain by networks of neurons, it is thought that neurons can encode both digital and analog information. Overview Neurons are remarkable among the cells of the body in their ability to propagate signals rapidly over large distances. They do this by generating characteristic electrical pulses called action potentials: voltage spikes that can travel down axons. Sensory neurons change their activities by firing sequences of action potentials in various temporal patterns, with the presence of external sensory stimuli, such as light, sound, taste, smell and touch. It is known that information about the stimulus is encoded in this pa ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Neural Coding

Neural coding (or Neural representation) is a neuroscience field concerned with characterising the hypothetical relationship between the stimulus and the individual or ensemble neuronal responses and the relationship among the electrical activity of the neurons in the ensemble. Based on the theory that sensory and other information is represented in the brain by networks of neurons, it is thought that neurons can encode both digital and analog information. Overview Neurons are remarkable among the cells of the body in their ability to propagate signals rapidly over large distances. They do this by generating characteristic electrical pulses called action potentials: voltage spikes that can travel down axons. Sensory neurons change their activities by firing sequences of action potentials in various temporal patterns, with the presence of external sensory stimuli, such as light, sound, taste, smell and touch. It is known that information about the stimulus is encoded in this pa ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hindmarsh–Rose Model

The Hindmarsh–Rose model of neuronal activity is aimed to study the spiking-bursting behavior of the membrane potential observed in experiments made with a single neuron. The relevant variable is the membrane potential, ''x''(''t''), which is written in dimensionless units. There are two more variables, ''y''(''t'') and ''z''(''t''), which take into account the transport of ions across the membrane through the ion channels. The transport of sodium and potassium ions is made through fast ion channels and its rate is measured by ''y''(''t''), which is called the spiking variable. ''z''(''t'') corresponds to an adaptation current, which is incremented at every spike, leading to a decrease in the firing rate. Then, the Hindmarsh–Rose model has the mathematical form of a system of three nonlinear ordinary differential equations on the dimensionless dynamical variables ''x''(''t''), ''y''(''t''), and ''z''(''t''). They read: : \begin \frac &= y+\phi(x)-z+I, \\ \frac &= \psi(x)-y ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |