Simple Rational Approximation

Simple rational approximation (SRA) is a subset of interpolation methods that use rational functions. Specifically, SRA interpolates a given function with a rational function whose poles and zeros are simple, meaning that no pole or zero has multiplicity greater than one. In some usages the term requires only the poles to be simple. The main application of SRA is finding the zeros of secular functions. Divide-and-conquer algorithms that compute the eigenvalues and eigenvectors of various kinds of matrices are well known in numerical analysis, and in a strict sense SRA refers to a specific interpolation with simple rational functions used as one step of such an algorithm. Since a secular function is a sum of rational terms with simple poles, SRA is the best candidate for interpolating it in order to locate its zeros. Moreover, previous research shows that a simple zero lying between two adjacent poles can be interpolated quite accurately by a two-dominant-pole rat ...
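To make the two-pole idea concrete, here is a minimal Python sketch under several assumptions not stated in the text. It assumes the common rank-one-update setting, in which the eigenvalues of D + ρzzᵀ (D diagonal with sorted, distinct entries d_i, ρ > 0, every z_i nonzero) are the zeros of the secular function f(λ) = 1 + ρ Σ z_i²/(d_i − λ), with exactly one zero between each pair of adjacent poles. At each step f is replaced by a model c + s/(d_k − x) + S/(d_{k+1} − x) that matches f and f′ at the current iterate, with s and S taken from the derivatives of the terms on either side of the interval. This is a simplified two-pole variant chosen for illustration, not necessarily the exact SRA scheme the text refers to; the names `secular_parts`, `two_pole_root`, and `sra_secular_root` are hypothetical, and the bisection fallback is an added safeguard.

```python
import math


def secular_parts(lam, d, z, rho, k):
    """Return f(lam), psi'(lam), phi'(lam) for the (assumed) secular function
    f(lam) = 1 + rho * sum_i z[i]**2 / (d[i] - lam).
    psi collects the terms with i <= k, phi the terms with i > k."""
    f, dpsi, dphi = 1.0, 0.0, 0.0
    for i, (di, zi) in enumerate(zip(d, z)):
        t = rho * zi * zi / (di - lam)
        f += t
        dt = t / (di - lam)  # d/dlam of rho*z_i^2/(d_i - lam)
        if i <= k:
            dpsi += dt
        else:
            dphi += dt
    return f, dpsi, dphi


def two_pole_root(lam, d_lo, d_hi, f, dpsi, dphi):
    """Zero in (d_lo, d_hi) of the hypothetical two-pole model
    g(x) = c + s/(d_lo - x) + S/(d_hi - x),
    fitted so that g(lam) = f(lam) and g'(lam) = psi'(lam) + phi'(lam)."""
    s = (d_lo - lam) ** 2 * dpsi
    S = (d_hi - lam) ** 2 * dphi
    c = f - s / (d_lo - lam) - S / (d_hi - lam)
    gap = d_hi - d_lo
    # Substituting y = x - d_lo and clearing denominators gives
    # A*y**2 + B*y + C = 0, whose relevant root lies in (0, gap).
    A, B, C = -c, c * gap + s + S, -s * gap
    if A == 0.0:
        candidates = [-C / B]
    else:
        disc = max(B * B - 4.0 * A * C, 0.0)
        q = -0.5 * (B + math.copysign(math.sqrt(disc), B))  # cancellation-free form
        candidates = [q / A, C / q] if q != 0.0 else []
    for y in candidates:
        if 0.0 < y < gap:
            return d_lo + y
    return None


def sra_secular_root(d, z, rho, k, tol=1e-14, max_iter=60):
    """Zero of f in (d[k], d[k+1]), assuming d ascending, rho > 0, z[i] != 0."""
    lo, hi = d[k], d[k + 1]
    lam = 0.5 * (lo + hi)
    for _ in range(max_iter):
        f, dpsi, dphi = secular_parts(lam, d, z, rho, k)
        if f == 0.0:
            return lam
        # f increases on (d[k], d[k+1]), so its sign shrinks the bracket.
        if f < 0.0:
            lo = lam
        else:
            hi = lam
        new = two_pole_root(lam, d[k], d[k + 1], f, dpsi, dphi)
        if new is not None and abs(new - lam) <= tol * max(1.0, abs(new)):
            return new  # model step is negligible: converged
        if new is None or not lo < new < hi:
            new = 0.5 * (lo + hi)  # safeguard: bisection step
        if hi - lo <= tol * max(1.0, abs(new)):
            return new  # bracket has collapsed
        lam = new
    return lam
```

For example, `sra_secular_root([1.0, 2.0, 3.0, 4.0], [0.5] * 4, 1.0, 0)` returns the eigenvalue of D + ρzzᵀ that lies in (1, 2). The largest eigenvalue, which lies beyond the last pole, is not covered by this sketch.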

Interpolation

In the mathematical field of numerical analysis, interpolation is a type of estimation, a method of constructing (finding) new data points based on the range of a discrete set of known data points. In engineering and science, one often has a number of data points, obtained by sampling or experimentation, which represent the values of a function for a limited number of values of the independent variable. It is often required to interpolate, that is, to estimate the value of that function for an intermediate value of the independent variable. A closely related problem is the approximation of a complicated function by a simple function. Suppose the formula for some given function is known, but too complicated to evaluate efficiently. A few data points from the original function can be interpolated to produce a simpler function which is still fairly close to the original. The resulting gai ...
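As a small illustration of building a simpler function from a few data points, the Python sketch below uses barycentric Lagrange interpolation. This is a polynomial scheme chosen only for brevity, not the rational interpolation discussed above, and the function names are illustrative.

```python
import math


def barycentric_weights(xs):
    """Weights w_j = 1 / prod_{m != j} (x_j - x_m) for distinct nodes xs."""
    weights = []
    for j, xj in enumerate(xs):
        prod = 1.0
        for m, xm in enumerate(xs):
            if m != j:
                prod *= xj - xm
        weights.append(1.0 / prod)
    return weights


def interpolate(xs, ys, x):
    """Evaluate the polynomial through (xs[j], ys[j]) at x (second barycentric form)."""
    weights = barycentric_weights(xs)
    num = den = 0.0
    for xj, yj, wj in zip(xs, ys, weights):
        if x == xj:
            return yj  # exactly on a node: return the known value
        t = wj / (x - xj)
        num += t * yj
        den += t
    return num / den


# Sample sin at five equally spaced points on [0, pi/2] and estimate in between.
nodes = [i * (math.pi / 2) / 4 for i in range(5)]
values = [math.sin(x) for x in nodes]
estimate = interpolate(nodes, values, 0.3)
```

Because every derivative of sin is bounded by 1, the standard error bound for this degree-4 interpolant keeps the error at x = 0.3 below about 1.3e-4.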

Rational Function

In mathematics, a rational function is any function that can be defined by a rational fraction, which is an algebraic fraction such that both the numerator and the denominator are polynomials. The coefficients of the polynomials need not be rational numbers; they may be taken in any field K. In this case, one speaks of a rational function and a rational fraction over K. The values of the variables may be taken in any field L containing K. Then the domain of the function is the set of the values of the variables for which the denominator is not zero, and the codomain is L. The set of rational functions over a field K is a field, the field of fractions of the ring of the polynomial functions over K.

A function f is called a rational function if it can be written in the form f(x) = \frac{P(x)}{Q(x)}, where P and Q are polynomial functions of x and Q is not the zero function. The domain of f is the set of all values of x for which the denominator Q(x) is not zero. How ...
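Over the field of rational numbers, a rational function can be evaluated exactly with Python's `fractions.Fraction`. The sketch below (the helper names `horner` and `make_rational` are illustrative) evaluates P(x)/Q(x) and treats the zeros of Q as points outside the domain.

```python
from fractions import Fraction


def horner(coeffs, x):
    """Evaluate a polynomial given its coefficients, highest degree first."""
    acc = 0
    for c in coeffs:
        acc = acc * x + c
    return acc


def make_rational(p, q):
    """Return f(x) = P(x)/Q(x); raise if x is outside the domain (Q(x) == 0)."""
    if all(c == 0 for c in q):
        raise ValueError("Q must not be the zero polynomial")

    def f(x):
        den = horner(q, x)
        if den == 0:
            raise ZeroDivisionError(f"x = {x} is not in the domain: Q(x) = 0")
        return Fraction(horner(p, x)) / den

    return f


# f(x) = (x^2 - 1) / (x - 2), with rational coefficients and arguments
f = make_rational([1, 0, -1], [1, -2])
```

Here `f(3)` gives `Fraction(8, 1)`, `f(Fraction(1, 2))` gives `Fraction(1, 2)`, and `f(2)` raises `ZeroDivisionError` because 2 is a zero of Q.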

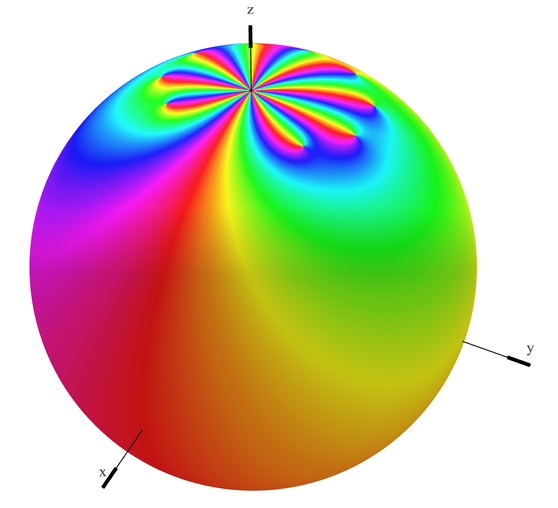

Pole (Complex Analysis)

In complex analysis (a branch of mathematics), a pole is a certain type of singularity of a complex-valued function of a complex variable. It is the simplest type of non-removable singularity of such a function (see essential singularity). Technically, a point z_0 is a pole of a function f if it is a zero of the function 1/f and 1/f is holomorphic (i.e. complex differentiable) in some neighbourhood of z_0. A function f is meromorphic in an open set U if for every point z of U there is a neighbourhood of z in which at least one of f and 1/f is holomorphic. If f is meromorphic in U, then a zero of f is a pole of 1/f, and a pole of f is a zero of 1/f. This induces a duality between zeros and poles that is fundamental for the study of meromorphic functions. For example, if a function is meromorphic on the whole complex plane plus the point at infinity, then the sum of the ...
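Two small examples illustrate the duality, for a fixed complex number a. The order of a pole equals the multiplicity of the corresponding zero of 1/f, which is the sense in which a pole with no multiplicity is called simple:

```latex
\begin{aligned}
f(z) &= \frac{1}{z-a}, & \frac{1}{f(z)} &= z-a
  &&\Rightarrow\ a \text{ is a simple pole of } f,\\
g(z) &= \frac{1}{(z-a)^2}, & \frac{1}{g(z)} &= (z-a)^2
  &&\Rightarrow\ a \text{ is a pole of order } 2 \text{ of } g.
\end{aligned}
```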

Root Of A Function

In mathematics, a zero (also sometimes called a root) of a real-, complex-, or generally vector-valued function f is a member x of the domain of f such that f vanishes at x; that is, the function f attains the value of 0 at x, or equivalently, x is a solution to the equation f(x) = 0. A "zero" of a function is thus an input value that produces an output of 0. A root of a polynomial is a zero of the corresponding polynomial function. The fundamental theorem of algebra shows that any non-zero polynomial has a number of roots at most equal to its degree, and that the number of roots and the degree are equal when one considers the complex roots (or more generally, the roots in an algebraically closed extension) counted with their multiplicities. For example, the polynomial f of degree two, defined by f(x)=x^2-5x+6=(x-2)(x-3), has the two roots (or zeros) 2 and 3, since f(2)=2^2-5\times 2+6=0 and f(3)=3^2-5\times 3+6=0. If the function maps real numbers to real n ...
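For a continuous real function, a sign change between two points brackets a zero. A minimal bisection sketch (the names `bisect_root` and `quadratic` are illustrative) recovers both roots of the example polynomial from brackets around each of them.

```python
def bisect_root(f, lo, hi, tol=1e-12, max_iter=200):
    """Find a zero of f in [lo, hi], assuming f is continuous and
    f(lo), f(hi) have opposite signs."""
    flo, fhi = f(lo), f(hi)
    if flo == 0.0:
        return lo
    if fhi == 0.0:
        return hi
    if (flo < 0.0) == (fhi < 0.0):
        raise ValueError("f(lo) and f(hi) must have opposite signs")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        fmid = f(mid)
        if fmid == 0.0 or hi - lo <= tol:
            return mid
        # Keep the half-interval on which the sign change persists.
        if (fmid < 0.0) == (flo < 0.0):
            lo, flo = mid, fmid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def quadratic(x):
    return x * x - 5 * x + 6  # = (x - 2)(x - 3)


root = bisect_root(quadratic, 2.5, 4.0)
```

Bisection converges slowly but unconditionally once a bracket is known, which is why root finders for functions with poles often fall back to it.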

Secular Function

In linear algebra, the characteristic polynomial of a square matrix is a polynomial which is invariant under matrix similarity and has the eigenvalues as roots. It has the determinant and the trace of the matrix among its coefficients. The characteristic polynomial of an endomorphism of a finite-dimensional vector space is the characteristic polynomial of the matrix of that endomorphism over any basis (that is, the characteristic polynomial does not depend on the choice of a basis). The characteristic equation, also known as the determinantal equation, is the equation obtained by equating the characteristic polynomial to zero. In spectral graph theory, the characteristic polynomial of a graph is the characteristic polynomial of its adjacency matrix.

In linear algebra, eigenvalues and eigenvectors play a fundamental role, since, given a linear transformation, an eigenvector is a vector whose direction is not changed by the transformation, and the corresponding eigenv ...
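For a 2 × 2 matrix the role of the trace and determinant is explicit: det(A − λI) = λ² − tr(A)λ + det(A). The sketch below (function names are illustrative) builds these coefficients and solves the characteristic equation, using `cmath` so that complex eigenvalues are returned as well.

```python
import cmath


def char_poly_2x2(a):
    """Coefficients of det(A - lam*I) = lam^2 - tr(A)*lam + det(A) for a 2x2 matrix."""
    (p, q), (r, s) = a
    trace = p + s
    det = p * s - q * r
    return 1.0, -trace, det


def eigenvalues_2x2(a):
    """Eigenvalues as the roots of the characteristic equation (may be complex)."""
    _, b, c = char_poly_2x2(a)
    root = cmath.sqrt(b * b - 4.0 * c)
    return (-b + root) / 2.0, (-b - root) / 2.0


l1, l2 = eigenvalues_2x2([[2.0, 1.0], [1.0, 2.0]])  # characteristic polynomial lam^2 - 4 lam + 3
```

The symmetric example has eigenvalues 3 and 1, while a 90-degree rotation such as [[0, -1], [1, 0]] has characteristic polynomial λ² + 1 and therefore the complex eigenvalues ±i.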

Divide-and-conquer Algorithm

In computer science, divide and conquer is an algorithm design paradigm. A divide-and-conquer algorithm recursively breaks down a problem into two or more sub-problems of the same or related type, until these become simple enough to be solved directly. The solutions to the sub-problems are then combined to give a solution to the original problem. The divide-and-conquer technique is the basis of efficient algorithms for many problems, such as sorting (e.g., quicksort, merge sort), multiplying large numbers (e.g., the Karatsuba algorithm), finding the closest pair of points, syntactic analysis (e.g., top-down parsers), and computing the discrete Fourier transform (FFT). Designing efficient divide-and-conquer algorithms can be difficult. As in mathematical induction, it is often necessary to generalize the problem to make it amenable to a recursive solution. The correctness of a divide-and-conquer algorithm is usually proved by mathematical induction, and its computational cos ...
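Merge sort, one of the examples listed above, shows the three phases in a few lines: split the list, sort each half recursively, and merge the sorted halves. This is a plain illustrative sketch, not an optimized implementation.

```python
def merge_sort(items):
    """Return a new sorted list using top-down merge sort."""
    if len(items) <= 1:  # base case: simple enough to solve directly
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid])  # divide, then solve each half recursively
    right = merge_sort(items[mid:])
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):  # combine: merge two sorted lists
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```

Using `<=` in the merge keeps equal elements in their original order, so the sort is stable.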

Eigenvalues

In linear algebra, an eigenvector or characteristic vector is a vector that has its direction unchanged (or reversed) by a given linear transformation. More precisely, an eigenvector \mathbf v of a linear transformation T is scaled by a constant factor \lambda when the linear transformation is applied to it: T\mathbf v=\lambda \mathbf v. The corresponding eigenvalue, characteristic value, or characteristic root is the multiplying factor \lambda (possibly a negative or complex number). Geometrically, vectors are multi-dimensional quantities with magnitude and direction, often pictured as arrows. A linear transformation rotates, stretches, or shears the vectors upon which it acts. A linear transformation's eigenvectors are those vectors that are only stretched or shrunk, with neither rotation nor shear. The corresponding eigenvalue is the factor by which an eigenvector is stretched or shrunk. If the eigenvalue is negative, the eigenvector's direction is reversed. The ...
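The relation T\mathbf v=\lambda \mathbf v can also be checked numerically. The sketch below uses power iteration, a standard method not mentioned in the text, to estimate the eigenvalue of largest magnitude of a small real symmetric matrix. It assumes that this eigenvalue is strictly dominant and that the starting vector is not orthogonal to its eigenvector; the helper names are illustrative.

```python
import math


def matvec(a, v):
    """Multiply the matrix a (list of rows) by the vector v."""
    return [sum(a_ij * v_j for a_ij, v_j in zip(row, v)) for row in a]


def power_iteration(a, iters=200):
    """Estimate the dominant eigenpair (lam, v) of a real symmetric matrix a."""
    v = [1.0] * len(a)  # assumed start vector with a component along the dominant eigenvector
    lam = 0.0
    for _ in range(iters):
        w = matvec(a, v)
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]  # renormalise to avoid overflow
        lam = sum(vi * avi for vi, avi in zip(v, matvec(a, v)))  # Rayleigh quotient
    return lam, v


A = [[2.0, 1.0], [1.0, 3.0]]
lam, v = power_iteration(A)
```

For this matrix the dominant eigenvalue is (5 + √5)/2 ≈ 3.618, and the returned unit vector v satisfies A v ≈ λ v.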

Matrix (mathematics)

In mathematics, a matrix (plural: matrices) is a rectangular array or table of numbers, symbols, or expressions, with elements or entries arranged in rows and columns, which is used to represent a mathematical object or property of such an object. For example, \begin{bmatrix}1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix} is a matrix with two rows and three columns. This is often referred to as a "two-by-three matrix", a "2 × 3 matrix", or a matrix of dimension 2 × 3. Matrices are commonly used in linear algebra, where they represent linear maps. In geometry, matrices are widely used for specifying and representing geometric transformations (for example rotations) and coordinate changes. In numerical analysis, many computational problems are solved by reducing them to a matrix computation, and this often involves computing with matrices of huge dimensions. Matrices are used in most areas of mathematics and scientific fields, either directly ...

Numerical Analysis

Numerical analysis is the study of algorithms that use numerical approximation (as opposed to symbolic manipulations) for the problems of mathematical analysis (as distinguished from discrete mathematics). It is the study of numerical methods that attempt to find approximate solutions of problems rather than exact ones. Numerical analysis finds application in all fields of engineering and the physical sciences, and in the 21st century also the life and social sciences like economics, medicine, business and even the arts. Current growth in computing power has enabled the use of more complex numerical analysis, providing detailed and realistic mathematical models in science and engineering. Examples of numerical analysis include: ordinary differential equations as found in celestial mechanics (predicting the motions of planets, stars and galaxies), numerical linear algebra in data analysis, and stochastic differential equations and Markov chains for simulati ...

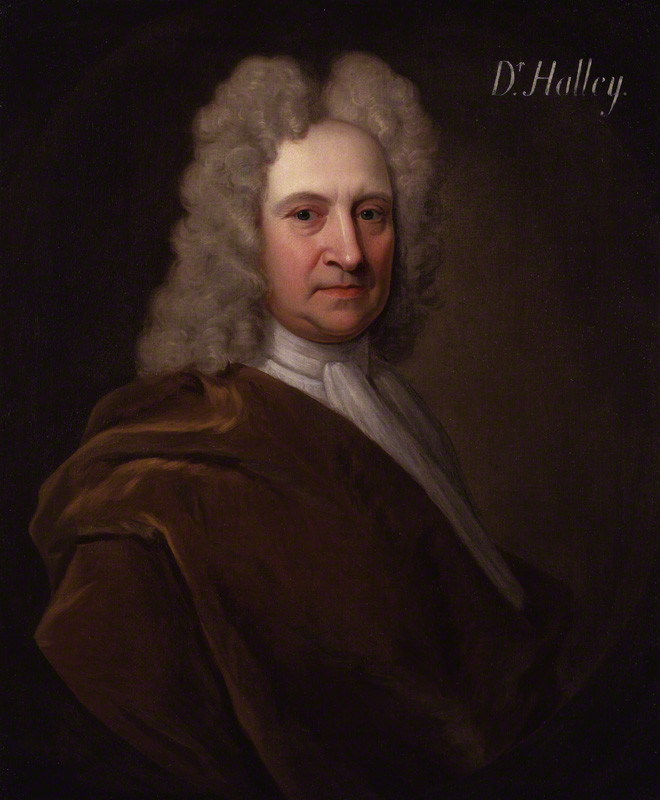

Edmond Halley

Edmond (or Edmund) Halley was an English astronomer, mathematician and physicist. He was the second Astronomer Royal in Britain, succeeding John Flamsteed in 1720. From an observatory he constructed on Saint Helena in 1676–77, Halley catalogued the southern celestial hemisphere and recorded a transit of Mercury across the Sun. He realised that a similar transit of Venus could be used to determine the distances between Earth, Venus, and the Sun. Upon his return to England, he was made a fellow of the Royal Society, and with the help of King Charles II was granted a master's degree from Oxford. Halley encouraged and helped fund the publication of Isaac Newton's influential *Philosophiæ Naturalis Principia Mathematica* (1687). From observations Halley made in September 1682, he used Newton's law of universal gravitation to compute the periodicity of Halley's Comet in his 1705 *Synopsis of the Astronomy of Comets*. It ...