
Ruzzo–Tompa Algorithm

The Ruzzo–Tompa algorithm, or RT algorithm, is a linear-time algorithm for finding all non-overlapping, contiguous, maximal scoring subsequences in a sequence of real numbers. The Ruzzo–Tompa algorithm was proposed by Walter L. Ruzzo and Martin Tompa. This algorithm is an improvement over previously known quadratic-time algorithms. The maximum scoring subsequence from the set produced by the algorithm is also a solution to the maximum subarray problem. The Ruzzo–Tompa algorithm has applications in bioinformatics, web scraping, and information retrieval. Applications in bioinformatics: The Ruzzo–Tompa algorithm has been used in bioinformatics tools to study biological data. The problem of finding disjoint maximal subsequences is of practical importance in the analysis of DNA. Maximal subsequence algorithms have been used in the identification of transmembrane segments and the evaluation of sequence homology. The algorithm is used in sequence align ...
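
The procedure summarized above can be sketched in Python as follows. This is a minimal illustration based on the commonly described form of the algorithm, not the authors' reference implementation: the function name `ruzzo_tompa` and the `[start, end, L, R]` record layout are assumptions made for this example. The right-to-left search is written as a plain scan for clarity, so this sketch does not by itself guarantee the linear-time bound, which relies on additional bookkeeping.

```python
def ruzzo_tompa(scores):
    """Return (start, end) index pairs, end exclusive, of all maximal
    scoring subsequences of `scores` (illustrative sketch)."""
    # Each candidate is [start, end, L, R], where L is the cumulative total
    # before the candidate's first score and R the cumulative total
    # through its last score.
    segments = []
    total = 0
    for i, score in enumerate(scores):
        left_total = total
        total += score
        if score <= 0:
            continue  # non-positive scores never start a candidate
        cand = [i, i + 1, left_total, total]
        while True:
            # Find the rightmost earlier candidate j with L_j < L_cand.
            j = len(segments) - 1
            while j >= 0 and segments[j][2] >= cand[2]:
                j -= 1
            if j < 0 or segments[j][3] >= cand[3]:
                # No such j, or j already reaches at least as high:
                # the candidate stands on its own.
                segments.append(cand)
                break
            # Otherwise extend the candidate left to the start of j,
            # drop j and everything after it, and re-check.
            cand = [segments[j][0], cand[1], segments[j][2], cand[3]]
            del segments[j:]
    return [(start, end) for start, end, _, _ in segments]


print(ruzzo_tompa([4, -5, 3, -3, 1, 2, -2, 2, -2, 1, 5]))
# prints [(0, 1), (2, 3), (4, 11)]
```

For this input the three reported slices are `[4]`, `[3]`, and `[1, 2, -2, 2, -2, 1, 5]`; the last has the largest total (7) and is therefore also a maximum subarray.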

Time Complexity

In theoretical computer science, the time complexity is the computational complexity that describes the amount of computer time it takes to run an algorithm. Time complexity is commonly estimated by counting the number of elementary operations performed by the algorithm, supposing that each elementary operation takes a fixed amount of time to perform. Thus, the amount of time taken and the number of elementary operations performed by the algorithm are taken to be related by a constant factor. Since an algorithm's running time may vary among different inputs of the same size, one commonly considers the worst-case time complexity, which is the maximum amount of time required for inputs of a given size. Less common, and usually specified explicitly, is the average-case complexity, which is the average of the time taken on inputs of a given size (this makes sense because there are only a finite number of possible inputs of a given size). In both cases, the time complexity is gene ...
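
As a small illustration of counting elementary operations, the sketch below counts the comparisons made by a linear search and contrasts the worst case with the average over successful searches. The helper name `linear_search_steps` and the choice of comparisons as the "elementary operation" are assumptions made for this example.

```python
def linear_search_steps(items, target):
    """Return the number of comparisons a linear search performs."""
    steps = 0
    for value in items:
        steps += 1  # one comparison per element inspected
        if value == target:
            return steps
    return steps  # target absent: every element was compared


data = list(range(1, 11))  # input of size n = 10

# Worst case: the maximum over all targets, including one that is absent.
worst = max(linear_search_steps(data, t) for t in data + [0])

# Average case over the ten targets that are present.
average = sum(linear_search_steps(data, t) for t in data) / len(data)

print(worst, average)  # prints 10 5.5
```

The worst case grows in proportion to the input size n, which is what a linear-time bound describes.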

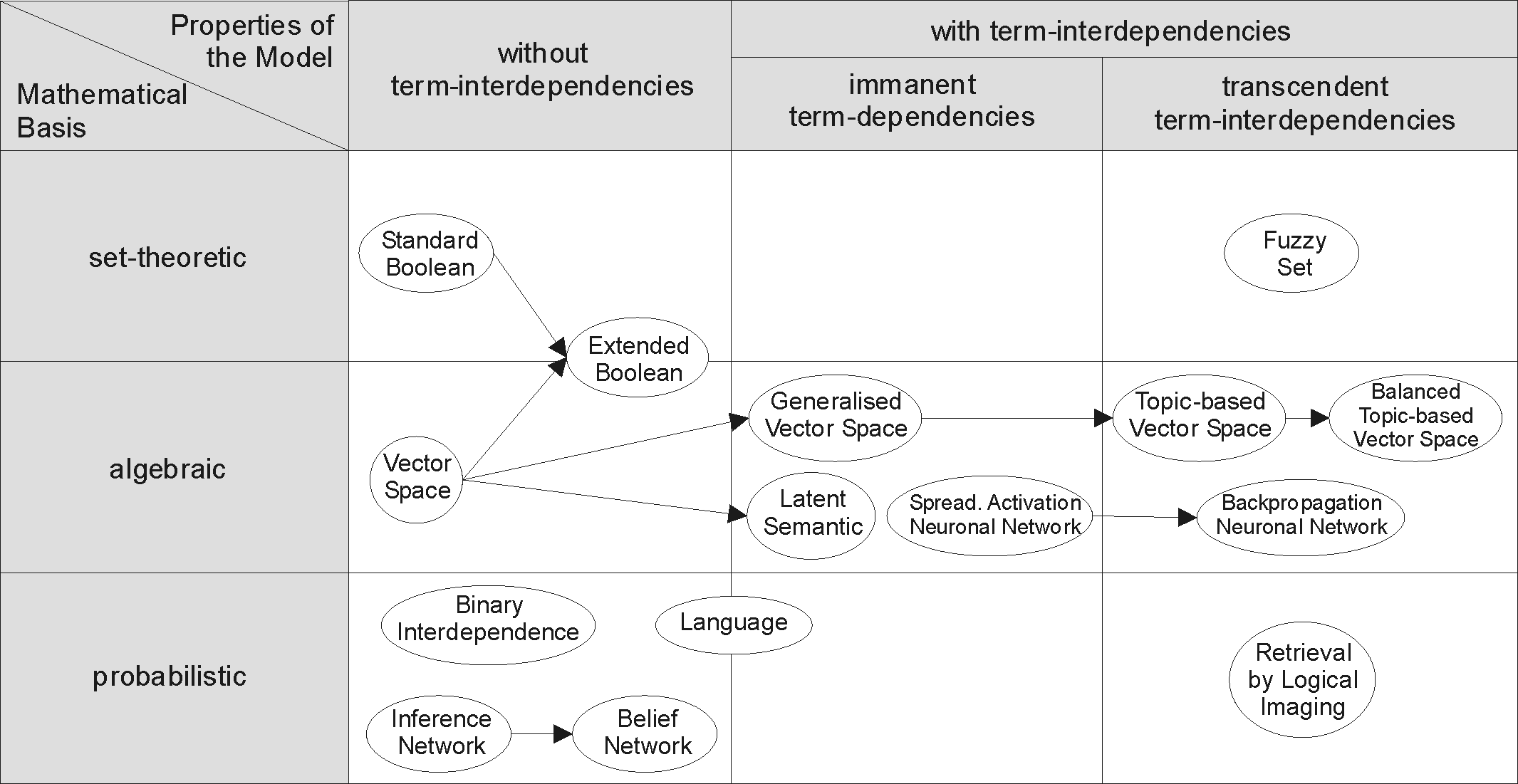

Information Retrieval

Information retrieval (IR) in computing and information science is the task of identifying and retrieving information system resources that are relevant to an information need. The information need can be specified in the form of a search query. In the case of document retrieval, queries can be based on full-text or other content-based indexing. Information retrieval is the science of searching for information in a document, searching for documents themselves, and also searching for the metadata that describes data, and for databases of texts, images or sounds. Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents; it also stores and manages those documents. Web search engines are the most visible IR applications. Overview: An information retrieval process begins when a user enters a query into the sys ...

Optimization Algorithms And Methods

Mathematical optimization (alternatively spelled optimisation) or mathematical programming is the selection of a best element, with regard to some criteria, from some set of available alternatives. It is generally divided into two subfields: discrete optimization and continuous optimization. Optimization problems arise in all quantitative disciplines from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries. In the more general approach, an optimization problem consists of maximizing or minimizing a real function by systematically choosing input values from within an allowed set and computing the value of the function. The generalization of optimization theory and techniques to other formulations constitutes a large area of applied mathematics. Optimization problems: Optimization problems can be divided into two categ ...

Maximum Subarray Problem

In computer science, the maximum sum subarray problem, also known as the maximum segment sum problem, is the task of finding a contiguous subarray with the largest sum, within a given one-dimensional array $A[1 \ldots n]$ of numbers. It can be solved in $O(n)$ time and $O(1)$ space. Formally, the task is to find indices $i$ and $j$ with $1 \leq i \leq j \leq n$, such that the sum $\sum_{x=i}^{j} A[x]$ is as large as possible. (Some formulations of the problem also allow the empty subarray to be considered; by convention, the sum of all values of the empty subarray is zero.) Each number in the input array A could be positive, negative, or zero. For example, for the array of values [−2, 1, −3, 4, −1, 2, 1, −5, 4], the contiguous subarray with the largest sum is [4, −1, 2, 1], with sum 6. Some properties of this problem are: (1) If the array contains all non-negative numbers, then the problem is trivial; a maximum subarray is the entire array. (2) If the array contains all non-positive ...
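
One well-known way to reach the O(n) time and O(1) space bound is Kadane's algorithm, which the excerpt above does not name. The sketch below is an illustrative version for the non-empty formulation; the function name `max_subarray` and the 0-based, inclusive index convention are assumptions made for this example.

```python
def max_subarray(a):
    """Return (best_sum, start, end) for a non-empty contiguous subarray
    of `a` with the largest sum; indices are 0-based and inclusive."""
    if not a:
        raise ValueError("this sketch requires a non-empty array")
    best_sum = cur_sum = a[0]
    best_start = best_end = cur_start = 0
    for i in range(1, len(a)):
        if cur_sum < 0:
            # A negative running sum can only hurt: restart at position i.
            cur_sum = a[i]
            cur_start = i
        else:
            cur_sum += a[i]
        if cur_sum > best_sum:
            best_sum, best_start, best_end = cur_sum, cur_start, i
    return best_sum, best_start, best_end


print(max_subarray([-2, 1, -3, 4, -1, 2, 1, -5, 4]))  # prints (6, 3, 6)
```

The reported indices 3 through 6 select the values 4, −1, 2, 1, matching the example in the text.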

Python (Programming Language)

Python is a high-level, general-purpose programming language. Its design philosophy emphasizes code readability with the use of significant indentation. Python is dynamically type-checked and garbage-collected. It supports multiple programming paradigms, including structured (particularly procedural), object-oriented and functional programming. It is often described as a "batteries included" language due to its comprehensive standard library. Guido van Rossum began working on Python in the late 1980s as a successor to the ABC programming language, and he first released it in 1991 as Python 0.9.0. Python 2.0 was released in 2000. Python 3.0, released in 2008, was a major revision not completely backward-compatible with earlier versions. Python 2.7.18, released in 2020, was the last release of ...

Animation Of Ruzzo-Tompa Algorithm

Animation is a filmmaking technique whereby still images are manipulated to create moving images. In traditional animation, images are drawn or painted by hand on transparent celluloid sheets to be photographed and exhibited on film. Animation has been recognised as an artistic medium, specifically within the entertainment industry. Many animations are either traditional animations or computer animations made with computer-generated imagery (CGI). Stop motion animation, in particular claymation, has continued to exist alongside these other forms. Animation is contrasted with live action, although the two do not exist in isolation. Many moviemakers have produced films that are a hybrid of the two. As CGI increasingly approximates photographic imagery, filmmakers can easily composite 3D animations into their film rather than using practical effects for showy visual effects (VFX). General overview: Computer animation can be very detailed 3D animation, while 2D computer an ...

Quicksort

Quicksort is an efficient, general-purpose sorting algorithm. Quicksort was developed by British computer scientist Tony Hoare in 1959 and published in 1961. It is still a commonly used algorithm for sorting. Overall, it is slightly faster than merge sort and heapsort for randomized data, particularly on larger distributions. Quicksort is a divide-and-conquer algorithm. It works by selecting a "pivot" element from the array and partitioning the other elements into two sub-arrays, according to whether they are less than or greater than the pivot. For this reason, it is sometimes called partition-exchange sort. The sub-arrays are then sorted recursively. This can be done in-place, requiring small additional amounts of memory to perform the sorting. Quicksort is a comparison sort, meaning that it can sort items of any type for which a "less-than" relation (formally, a total order) is defined. It is a comparison-based sort since elements a and b are only swapped in ca ...
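
The pivot-and-partition idea described above can be sketched as an in-place Python routine. This version uses the Lomuto partition scheme with the last element as the pivot, which is one common choice among several and is an assumption of this example rather than something the text specifies.

```python
def quicksort(items, lo=0, hi=None):
    """Sort items[lo..hi] in place (illustrative sketch, Lomuto partition)."""
    if hi is None:
        hi = len(items) - 1
    if lo >= hi:
        return  # zero or one element: already sorted
    pivot = items[hi]  # last element serves as the pivot
    i = lo  # next slot for an element smaller than the pivot
    for j in range(lo, hi):
        if items[j] < pivot:
            items[i], items[j] = items[j], items[i]
            i += 1
    # Put the pivot between the smaller and the not-smaller elements.
    items[i], items[hi] = items[hi], items[i]
    # Recursively sort the two sub-arrays on either side of the pivot.
    quicksort(items, lo, i - 1)
    quicksort(items, i + 1, hi)


data = [3, 6, 1, 8, 2, 9, 2]
quicksort(data)
print(data)  # prints [1, 2, 2, 3, 6, 8, 9]
```

Only the "less-than" comparison is applied to the elements, so the same routine works for any mutually comparable items, such as strings.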

Bursting

Bursting, or burst firing, is an extremely diverse general phenomenon of the activation patterns of neurons in the central nervous system where periods of rapid action potential spiking are followed by quiescent periods much longer than typical inter-spike intervals. Bursting is thought to be important in the operation of robust central pattern generators, the transmission of neural codes, and some neuropathologies such as epilepsy. The study of bursting both directly and in how it takes part in other neural phenomena has been very popular since the beginnings of cellular neuroscience and is closely tied to the fields of neural synchronization, neural coding, plasticity, and attention. Observed bursts are named by the number of discrete action potentials they are composed of: a doublet is a two-spike burst, a triplet three and a quadruplet four. Neurons that are intrinsically prone to bursting behavior are referred to as bursters and this tendency to burst may ...

Data Fusion

Data fusion is the process of integrating multiple data sources to produce more consistent, accurate, and useful information than that provided by any individual data source. Data fusion processes are often categorized as low, intermediate, or high, depending on the processing stage at which fusion takes place. Low-level data fusion combines several sources of raw data to produce new raw data. The expectation is that fused data is more informative and synthetic than the original inputs. For example, sensor fusion is also known as (multi-sensor) data fusion and is a subset of information fusion. The concept of data fusion has origins in the evolved capacity of humans and animals to incorporate information from multiple senses to improve their ability to survive. For example, a combination of sight, touch, smell, and taste may indicate whether a substance is edible. The JDL/DFIG model: In the mid-1980s, the Joint Directors of Laboratories formed the Data Fusion Subpanel (whic ...

Lexical Analysis

Lexical tokenization is the conversion of a text into (semantically or syntactically) meaningful lexical tokens belonging to categories defined by a "lexer" program. In the case of a natural language, those categories include nouns, verbs, adjectives, punctuation, etc. In the case of a programming language, the categories include identifiers, operators, grouping symbols, data types and language keywords. Lexical tokenization is related to the type of tokenization used in large language models (LLMs) but with two differences. First, lexical tokenization is usually based on a lexical grammar, whereas LLM tokenizers are usually probability-based. Second, LLM tokenizers perform a second step that converts the tokens into numerical values. Rule-based programs: A rule-based program that performs lexical tokenization is called a tokenizer or scanner, although scanner is also a term for the first stage of a lexer. A lexer forms the first phase of a compiler frontend in processing. ...
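
A rule-based tokenizer of the kind described above can be sketched with Python's standard `re` module. The token categories in `TOKEN_SPEC` and the function name `tokenize` are hypothetical choices for a toy arithmetic language, made up for this example; a real lexer would follow the lexical grammar of its target language.

```python
import re

# Hypothetical lexical grammar: (category, regular expression) pairs,
# tried in order at each position of the input.
TOKEN_SPEC = [
    ("NUMBER", r"\d+(?:\.\d+)?"),
    ("IDENT", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=]"),
    ("LPAREN", r"\("),
    ("RPAREN", r"\)"),
    ("SKIP", r"\s+"),    # whitespace is matched and then discarded
    ("MISMATCH", r"."),  # any other character is an error
]
TOKEN_RE = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC)
)


def tokenize(text):
    """Return a list of (category, lexeme) pairs for `text`."""
    tokens = []
    for match in TOKEN_RE.finditer(text):
        kind = match.lastgroup  # name of the alternative that matched
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise ValueError(f"unexpected character {match.group()!r}")
        tokens.append((kind, match.group()))
    return tokens


print(tokenize("x = 3.5 * (y + 42)"))
```

Each named group acts as one rule of the lexical grammar, and `match.lastgroup` reports which rule produced the token, so the output pairs every lexeme with its category.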