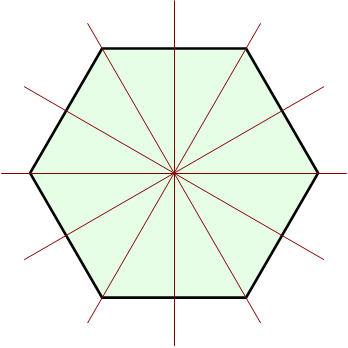

Rotation Group SO(3)

In mechanics and geometry, the 3D rotation group, often denoted SO(3), is the group of all rotations about the origin of three-dimensional Euclidean space \R^3 under the operation of composition. By definition, a rotation about the origin is a transformation that preserves the origin, Euclidean distance (so it is an isometry), and orientation (i.e., ''handedness'' of space). Composing two rotations results in another rotation, every rotation has a unique inverse rotation, and the identity map satisfies the definition of a rotation. Owing to these properties (along with the associativity of composition), the set of all rotations is a group under composition. Every non-trivial rotation is determined by its axis of rotation (a line through the origin) and its angle of rotation. Rotations are not commutative (for example, a rotation ''R'' by 90° in the x-y plane followed by a rotation ''S'' by 90° in the y-z plane is not the same as ''S'' followed by ''R''), making the 3D rotation group a non ...
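
The non-commutativity of rotations can be checked with a small computation. The following is a minimal sketch, assuming that ''R'' and ''S'' are written as 3×3 matrices acting on column vectors in the standard basis; the helper `matmul` and the variable names are illustrative and do not come from the source.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

# R: rotation by 90 degrees in the x-y plane (about the z axis).
R = [[0, -1, 0],
     [1,  0, 0],
     [0,  0, 1]]

# S: rotation by 90 degrees in the y-z plane (about the x axis).
S = [[1, 0,  0],
     [0, 0, -1],
     [0, 1,  0]]

# With column vectors, "R followed by S" is the matrix product S*R.
R_then_S = matmul(S, R)
S_then_R = matmul(R, S)

print(R_then_S)              # [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
print(S_then_R)              # [[0, 0, 1], [1, 0, 0], [0, 1, 0]]
print(R_then_S == S_then_R)  # False: the order of composition matters
```

Integer entries are used so that the comparison is exact; rotations by other angles would need floating-point values and a tolerance.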

Classical Mechanics

Classical mechanics is a physical theory describing the motion of macroscopic objects, from projectiles to parts of machinery, and astronomical objects, such as spacecraft, planets, stars, and galaxies. For objects governed by classical mechanics, if the present state is known, it is possible to predict how they will move in the future (determinism) and how they have moved in the past (reversibility). The earliest development of classical mechanics is often referred to as Newtonian mechanics. It consists of the physical concepts based on the foundational works of Sir Isaac Newton, and the mathematical methods invented by Gottfried Wilhelm Leibniz, Joseph-Louis Lagrange, Leonhard Euler, and others, in the 17th and 18th centuries, to describe the motion of bodies under the influence of a system of forces. Later, more abstract methods were developed, leading to the reformulations of classical mechanics known as Lagrangian mechanics and Hamiltonian mechanics. These advances, ma ...

Smooth Function

In mathematical analysis, the smoothness of a function is a property measured by the number of continuous derivatives it has over some domain, called its ''differentiability class''. At the very minimum, a function could be considered smooth if it is differentiable everywhere (hence continuous). At the other end, it might also possess derivatives of all orders in its domain, in which case it is said to be infinitely differentiable and referred to as a C-infinity function (or C^\infty function). Differentiability class is a classification of functions according to the properties of their derivatives. It is a measure of the highest order of derivative that exists and is continuous for a function. Consider an open set U on the real line and a function f defined on U with real values. Let ''k'' be a non-negative integer. The function f is said to be of ...
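
A small worked example, not taken from the source, may help separate neighbouring classes. The function f(x) = x|x| on the real line has a continuous first derivative but no second derivative at 0, so it has exactly one continuous derivative:

```latex
f(x) = x\lvert x\rvert
\quad\Longrightarrow\quad
f'(x) = 2\lvert x\rvert \ \text{(continuous on } \mathbb{R}\text{)},
\qquad
f''(x) =
\begin{cases}
  2  & x > 0,\\
  -2 & x < 0,
\end{cases}
\qquad
f''(0)\ \text{does not exist.}
```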

Elementary Particle

In particle physics, an elementary particle or fundamental particle is a subatomic particle that is not composed of other particles. Particles currently thought to be elementary include electrons, the fundamental fermions (quarks, leptons, antiquarks, and antileptons, which generally are matter particles and antimatter particles), as well as the fundamental bosons (gauge bosons and the Higgs boson), which generally are force particles that mediate interactions among fermions. A particle containing two or more elementary particles is a composite particle. Ordinary matter is composed of atoms, once presumed to be elementary particles – ''atomos'' meaning "unable to be cut" in Greek – although the atom's existence remained controversial until about 1905, as some leading physicists regarded molecules as mathematical illusions, and matter as ultimately composed of energy. Subatomic constituents of the atom were first identified in the early 1930s; the electron and the proto ...

Group Representation

In the mathematical field of representation theory, group representations describe abstract groups in terms of bijective linear transformations of a vector space to itself (i.e. vector space automorphisms); in particular, they can be used to represent group elements as invertible matrices so that the group operation can be represented by matrix multiplication. In chemistry, a group representation can relate mathematical group elements to symmetric rotations and reflections of molecules. Representations of groups are important because they allow many group-theoretic problems to be reduced to problems in linear algebra, which is well understood. They are also important in physics because, for example, they describe how the symmetry group of a physical system affects the solutions of equations describing that system. The term ''representation of a group'' is also used in a more general sense to mean any "description" of a group as a group of transformations of some mathematical o ...
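
As an illustration of group elements becoming invertible matrices, here is a minimal sketch that represents the cyclic group of integers modulo 4 by rotations of the plane through multiples of 90°. The choice of group, the function name `rho`, and the helper `matmul` are assumptions made for this example only.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

# Matrices for rotations of the plane by 0, 90, 180 and 270 degrees.
ROTATIONS = [
    [[1, 0], [0, 1]],
    [[0, -1], [1, 0]],
    [[-1, 0], [0, -1]],
    [[0, 1], [-1, 0]],
]

def rho(k):
    """Hypothetical representation: send k (mod 4) to rotation by k*90 degrees."""
    return ROTATIONS[k % 4]

# The group operation (addition mod 4) must correspond to matrix multiplication.
is_representation = all(
    matmul(rho(a), rho(b)) == rho((a + b) % 4)
    for a in range(4) for b in range(4)
)
print(is_representation)  # True
```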

Matrix Multiplication

In mathematics, particularly in linear algebra, matrix multiplication is a binary operation that produces a matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices A and B is denoted as AB. Matrix multiplication was first described by the French mathematician Jacques Philippe Marie Binet in 1812, to represent the composition of linear maps that are represented by matrices. Matrix multiplication is thus a basic tool of linear algebra, and as such has numerous applications in many areas of mathematics, as well as in applied mathematics, statistics, physics, economics, and engineering. Computing matrix products is a central operation in all computational applications of linear algebra. This article will use the following notati ...
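
The shape rule can be made concrete with a short sketch: a 2×3 matrix times a 3×2 matrix gives a 2×2 product, and incompatible shapes are rejected. The function `matmul` and the sample matrices are illustrative assumptions, written in plain Python rather than with any particular library.

```python
def matmul(a, b):
    """Return the product of a (m x n) and b (n x p), both given as lists of rows."""
    n = len(a[0])
    if n != len(b):
        raise ValueError("columns of the first matrix must equal rows of the second")
    return [[sum(a[i][k] * b[k][j] for k in range(n))
             for j in range(len(b[0]))]
            for i in range(len(a))]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 x 2

AB = matmul(A, B)    # 2 rows (from A) by 2 columns (from B)
print(AB)            # [[58, 64], [139, 154]]
```

Calling `matmul(A, A)` raises `ValueError`, because a 2×3 matrix cannot be multiplied by another 2×3 matrix.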

Determinant

In mathematics, the determinant is a scalar value that is a function of the entries of a square matrix. It characterizes some properties of the matrix and the linear map represented by the matrix. In particular, the determinant is nonzero if and only if the matrix is invertible and the linear map represented by the matrix is an isomorphism. The determinant of a product of matrices is the product of their determinants (the preceding property is a corollary of this one). The determinant of a matrix A is denoted \det(A), \det A, or |A|. The determinant of a 2\times 2 matrix is \begin{vmatrix} a & b \\ c & d \end{vmatrix} = ad - bc, and the determinant of a 3\times 3 matrix is \begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix} = aei + bfg + cdh - ceg - bdi - afh. The determinant of a matrix can be defined in several equivalent ways. The Leibniz formula expresses the determinant as a sum of signed products of matrix entries such that each summand is the product of different entries, and the number of these summands is n!, the factorial of n (t ...
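
The two closed-form expressions and the product rule can be sketched directly in code. The function names `det2`, `det3` and `matmul` and the sample matrices are assumptions chosen for this example.

```python
def det2(m):
    """Determinant of a 2x2 matrix: ad - bc."""
    (a, b), (c, d) = m
    return a * d - b * c

def det3(m):
    """Determinant of a 3x3 matrix: aei + bfg + cdh - ceg - bdi - afh."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a*e*i + b*f*g + c*d*h - c*e*g - b*d*i - a*f*h

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

A = [[1, 2], [3, 4]]
B = [[0, 1], [5, 6]]
M = [[1, 2, 3], [4, 5, 6], [7, 8, 10]]

print(det2(A))                                   # -2
print(det3(M))                                   # -3
# Determinant of a product equals the product of the determinants.
print(det2(matmul(A, B)) == det2(A) * det2(B))   # True
```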

Identity Matrix

In linear algebra, the identity matrix of size n is the n\times n square matrix with ones on the main diagonal and zeros elsewhere. The identity matrix is often denoted by I_n, or simply by I if the size is immaterial or can be trivially determined by the context. I_1 = \begin{bmatrix} 1 \end{bmatrix} ,\ I_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} ,\ I_3 = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix} ,\ \dots ,\ I_n = \begin{bmatrix} 1 & 0 & 0 & \cdots & 0 \\ 0 & 1 & 0 & \cdots & 0 \\ 0 & 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & 0 & \cdots & 1 \end{bmatrix}. The term unit matrix has also been widely used, but the term ''identity matrix'' is now standard. The term ''unit matrix'' is ambiguous, because it is also used for a matrix of ones and for any unit of the ring of all n\times n matrices. In some fields, such as group theory or quantum mechanics, the identity matrix is sometimes denoted by a boldface one, \mathbf{1}, or called "id" (short for identity). ...
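
A minimal sketch of the construction follows, together with a check of the property that gives the matrix its name: multiplying by it on either side leaves a matrix unchanged. The helpers `identity` and `matmul` and the sample matrix are illustrative assumptions.

```python
def identity(n):
    """Build the n x n matrix with ones on the main diagonal and zeros elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

I3 = identity(3)
A = [[2, -1, 0],
     [4,  3, 5],
     [7,  0, 1]]

print(I3)                                          # [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(matmul(A, I3) == A and matmul(I3, A) == A)   # True
```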

Transpose

In linear algebra, the transpose of a matrix is an operator which flips a matrix over its diagonal; that is, it switches the row and column indices of the matrix A by producing another matrix, often denoted by A^\mathrm{T} (among other notations). The transpose of a matrix was introduced in 1858 by the British mathematician Arthur Cayley. In the case of a logical matrix representing a binary relation R, the transpose corresponds to the converse relation R^\mathrm{T}. The transpose of a matrix A, denoted by A^\mathrm{T} (among several other notations), may be constructed by any one of the following methods: (1) reflect A over its main diagonal (which runs from top-left to bottom-right) to obtain A^\mathrm{T}; (2) write the rows of A as the columns of A^\mathrm{T}; (3) write the columns of A as the rows of A^\mathrm{T}. Formally, the i-th row, j-th column element of A^\mathrm{T} is the j-th row, i-th column element of A: \left[A^\mathrm{T}\right]_{ij} = \left[A\right]_{ji}. If A is an m\times n matrix, then A^\mathrm{T} is an n\times m matrix. In the case of square matrices, ...
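
Writing the rows of a matrix as the columns of its transpose takes one line in code. This is a minimal sketch; the function name `transpose` and the sample matrix are assumptions for illustration.

```python
def transpose(m):
    """Return the transpose: the rows of m become the columns of the result."""
    return [list(col) for col in zip(*m)]

A = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3

At = transpose(A)        # 3 x 2
print(At)                # [[1, 4], [2, 5], [3, 6]]
print(At[2][0] == A[0][2])   # True: entry (i, j) of the transpose is entry (j, i) of A
print(transpose(At) == A)    # True: transposing twice returns the original
```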

Orthogonal Matrix

In linear algebra, an orthogonal matrix, or orthonormal matrix, is a real square matrix whose columns and rows are orthonormal vectors. One way to express this is Q^\mathrm{T} Q = Q Q^\mathrm{T} = I, where Q^\mathrm{T} is the transpose of Q and I is the identity matrix. This leads to the equivalent characterization: a matrix Q is orthogonal if its transpose is equal to its inverse: Q^\mathrm{T} = Q^{-1}, where Q^{-1} is the inverse of Q. An orthogonal matrix Q is necessarily invertible (with inverse Q^{-1} = Q^\mathrm{T}), unitary (Q^{-1} = Q^*), where Q^* is the Hermitian adjoint (conjugate transpose) of Q, and therefore normal (Q^* Q = Q Q^*) over the real numbers. The determinant of any orthogonal matrix is either +1 or −1. As a linear transformation, an orthogonal matrix preserves the inner product of vectors, and therefore acts as an isometry of Euclidean space, such as a rotation, reflection or rotoreflection. In other words, it is a unitary transformation. The set of n\times n orthogonal matrices, under multiplication, forms the group O(n), known as the orthogonal gr ...
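
The defining condition and the two consequences named above (determinant ±1 and preservation of the inner product) can be checked on small examples. The following is a sketch under the assumption of integer entries, so that exact comparison is valid; floating-point matrices would need a tolerance. All function and variable names are illustrative.

```python
def transpose(m):
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def is_orthogonal(q):
    """Check that the transpose of q times q is the identity (exact, for integer entries)."""
    return matmul(transpose(q), q) == identity(len(q))

def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a*e*i + b*f*g + c*d*h - c*e*g - b*d*i - a*f*h

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def apply(m, v):
    """Apply matrix m to column vector v."""
    return [dot(row, v) for row in m]

ROT = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]     # rotation by 90 degrees about z
REF = [[1, 0, 0], [0, 1, 0], [0, 0, -1]]     # reflection in the x-y plane
SHEAR = [[1, 1, 0], [0, 1, 0], [0, 0, 1]]    # not an isometry

print(is_orthogonal(ROT), det3(ROT))         # True 1
print(is_orthogonal(REF), det3(REF))         # True -1
print(is_orthogonal(SHEAR))                  # False

u, v = [1, 2, 3], [4, -5, 6]
print(dot(apply(ROT, u), apply(ROT, v)) == dot(u, v))   # True
```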

Orthonormal Basis

In mathematics, particularly linear algebra, an orthonormal basis for an inner product space ''V'' with finite dimension is a basis for V whose vectors are orthonormal, that is, they are all unit vectors and orthogonal to each other. For example, the standard basis for a Euclidean space \R^n is an orthonormal basis, where the relevant inner product is the dot product of vectors. The image of the standard basis under a rotation or reflection (or any orthogonal transformation) is also orthonormal, and every orthonormal basis for \R^n arises in this fashion. For a general inner product space V, an orthonormal basis can be used to define normalized orthogonal coordinates on V. Under these coordinates, the inner product becomes a dot product of vectors. Thus the presence of an orthonormal basis reduces the study of a finite-dimensional inner product space to the study of \R^n under dot product. Every finite-dimensional inner product space has an orthonormal basis, which may be ob ...
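
The claim that coordinates in an orthonormal basis turn the inner product into a dot product can be sketched as follows. The basis used here is assumed to be the image of the standard basis of \R^3 under a 90° rotation about the z axis; the function names and sample vectors are illustrative.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def is_orthonormal(basis):
    """Every vector has unit length and distinct vectors are orthogonal."""
    n = len(basis)
    return all(dot(basis[i], basis[j]) == (1 if i == j else 0)
               for i in range(n) for j in range(n))

def coordinates(vec, basis):
    """In an orthonormal basis, each coordinate is the dot product with a basis vector."""
    return [dot(vec, b) for b in basis]

# Image of the standard basis under a 90-degree rotation about the z axis.
basis = [[0, 1, 0], [-1, 0, 0], [0, 0, 1]]

v = [2, 3, 5]
w = [1, -4, 3]
cv = coordinates(v, basis)
cw = coordinates(w, basis)

# Rebuild v from its coordinates as a linear combination of the basis vectors.
rebuilt = [sum(c * b[k] for c, b in zip(cv, basis)) for k in range(3)]

print(is_orthonormal(basis))        # True
print(cv, cw)                       # [3, -2, 5] [-4, -1, 3]
print(rebuilt == v)                 # True
print(dot(cv, cw) == dot(v, w))     # True: the inner product is preserved
```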

Basis Of A Vector Space

In mathematics, a set B of vectors in a vector space V is called a basis if every element of V may be written in a unique way as a finite linear combination of elements of B. The coefficients of this linear combination are referred to as components or coordinates of the vector with respect to B. The elements of a basis are called basis vectors. Equivalently, a set B is a basis if its elements are linearly independent and every element of V is a linear combination of elements of B. In other words, a basis is a linearly independent spanning set. A vector space can have several bases; however all the bases have the same number of elements, called the ''dimension'' of the vector space. This article deals mainly with finite-dimensional vector spaces. However, many of the principles are also valid for infinite-dimensional vector spaces. A basis B of a vector space V over a field F (such as the real numbers \R or the complex numbers \C) is a linearly independent subset of V that spans V. This mean ...
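
For a basis that is not orthonormal, the coordinates of a vector come from solving a linear system. The sketch below does this for the plane using Cramer's rule, a technique chosen here for brevity rather than taken from the source; the function name `coordinates_2d` and the sample vectors are assumptions.

```python
from fractions import Fraction

def coordinates_2d(b1, b2, v):
    """Solve c1*b1 + c2*b2 = v for (c1, c2) by Cramer's rule, using exact fractions."""
    det = b1[0] * b2[1] - b2[0] * b1[1]
    if det == 0:
        # Linearly dependent vectors do not span the plane, so they are not a basis.
        raise ValueError("b1 and b2 are linearly dependent")
    c1 = Fraction(v[0] * b2[1] - b2[0] * v[1], det)
    c2 = Fraction(b1[0] * v[1] - v[0] * b1[1], det)
    return c1, c2

b1, b2 = (1, 1), (1, -1)
c1, c2 = coordinates_2d(b1, b2, (3, 1))
print(c1, c2)   # 2 1, since 2*(1, 1) + 1*(1, -1) = (3, 1)
```

The coordinates are unique because the determinant is nonzero; with `b1 = (1, 2)` and `b2 = (2, 4)` the function raises `ValueError` instead.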

Matrix (mathematics)

In mathematics, a matrix (plural matrices) is a rectangular array or table of numbers, symbols, or expressions, arranged in rows and columns, which is used to represent a mathematical object or a property of such an object. For example, \begin{bmatrix} 1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix} is a matrix with two rows and three columns. This is often referred to as a "two by three matrix", a "2\times 3 matrix", or a matrix of dimension 2\times 3. Without further specifications, matrices represent linear maps, and allow explicit computations in linear algebra. Therefore, the study of matrices is a large part of linear algebra, and most properties and operations of abstract linear algebra can be expressed in terms of matrices. For example, matrix multiplication represents composition of linear maps. Not all matrices are related to linear algebra. This is the case, in particular, for incidence matrices and adjacency matrices in graph theory. ''This article focuses on matrices related to linear algebra, and, unle ...