|

Quickprop

Quickprop is an iterative method for determining the minimum of the loss function of an artificial neural network, following an algorithm inspired by the Newton's method. Sometimes, the algorithm is classified to the group of the second order learning methods. It follows a quadratic approximation of the previous gradient step and the current gradient, which is expected to be close to the minimum of the loss function, under the assumption that the loss function is locally approximately square, trying to describe it by means of an upwardly open parabola. The minimum is sought in the vertex of the parabola. The procedure requires only local information of the artificial neuron to which it is applied. The k -th approximation step is given by: \Delta^ \, w_ = \Delta^ \, w_ \left ( \frac \right) Where w_ is the weight of input i of neuron j , and E is the loss function. The Quickprop algorithm is an implementation of the error backpropagation In machine learning, backpropag ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Artificial Neural Network

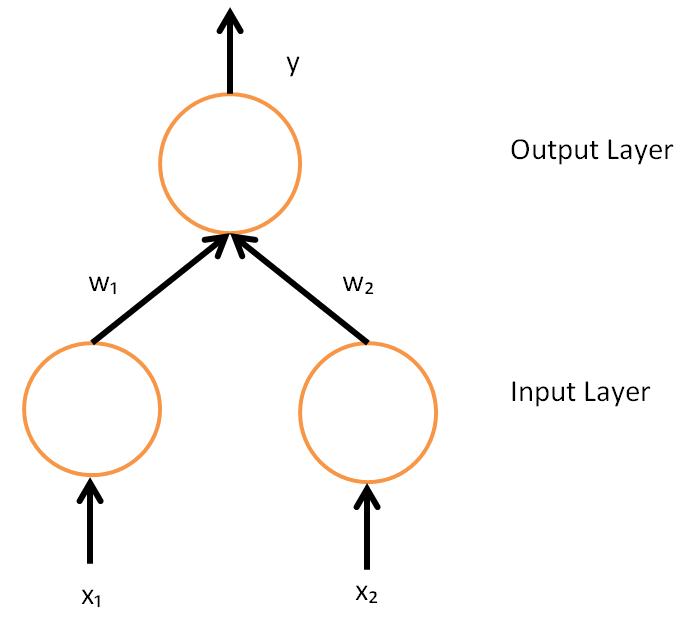

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks. A neural network consists of connected units or nodes called '' artificial neurons'', which loosely model the neurons in the brain. Artificial neuron models that mimic biological neurons more closely have also been recently investigated and shown to significantly improve performance. These are connected by ''edges'', which model the synapses in the brain. Each artificial neuron receives signals from connected neurons, then processes them and sends a signal to other connected neurons. The "signal" is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs, called the '' activation function''. The strength of the signal at each connection is determined by a ''weight'', which adjusts during the learning process. Typically, ne ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Machine Learning Algorithms

The following outline is provided as an overview of, and topical guide to, machine learning: Machine learning (ML) is a subfield of artificial intelligence within computer science that evolved from the study of pattern recognition and computational learning theory.http://www.britannica.com/EBchecked/topic/1116194/machine-learning In 1959, Arthur Samuel defined machine learning as a "field of study that gives computers the ability to learn without being explicitly programmed". ML involves the study and construction of algorithms that can learn from and make predictions on data. These algorithms operate by building a model from a training set of example observations to make data-driven predictions or decisions expressed as outputs, rather than following strictly static program instructions. How can machine learning be categorized? * An academic discipline * A branch of science ** An applied science *** A subfield of computer science **** A branch of artificial intelligenc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Artificial Neural Networks

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks. A neural network consists of connected units or nodes called '' artificial neurons'', which loosely model the neurons in the brain. Artificial neuron models that mimic biological neurons more closely have also been recently investigated and shown to significantly improve performance. These are connected by ''edges'', which model the synapses in the brain. Each artificial neuron receives signals from connected neurons, then processes them and sends a signal to other connected neurons. The "signal" is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs, called the '' activation function''. The strength of the signal at each connection is determined by a ''weight'', which adjusts during the learning process. Typically, neur ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

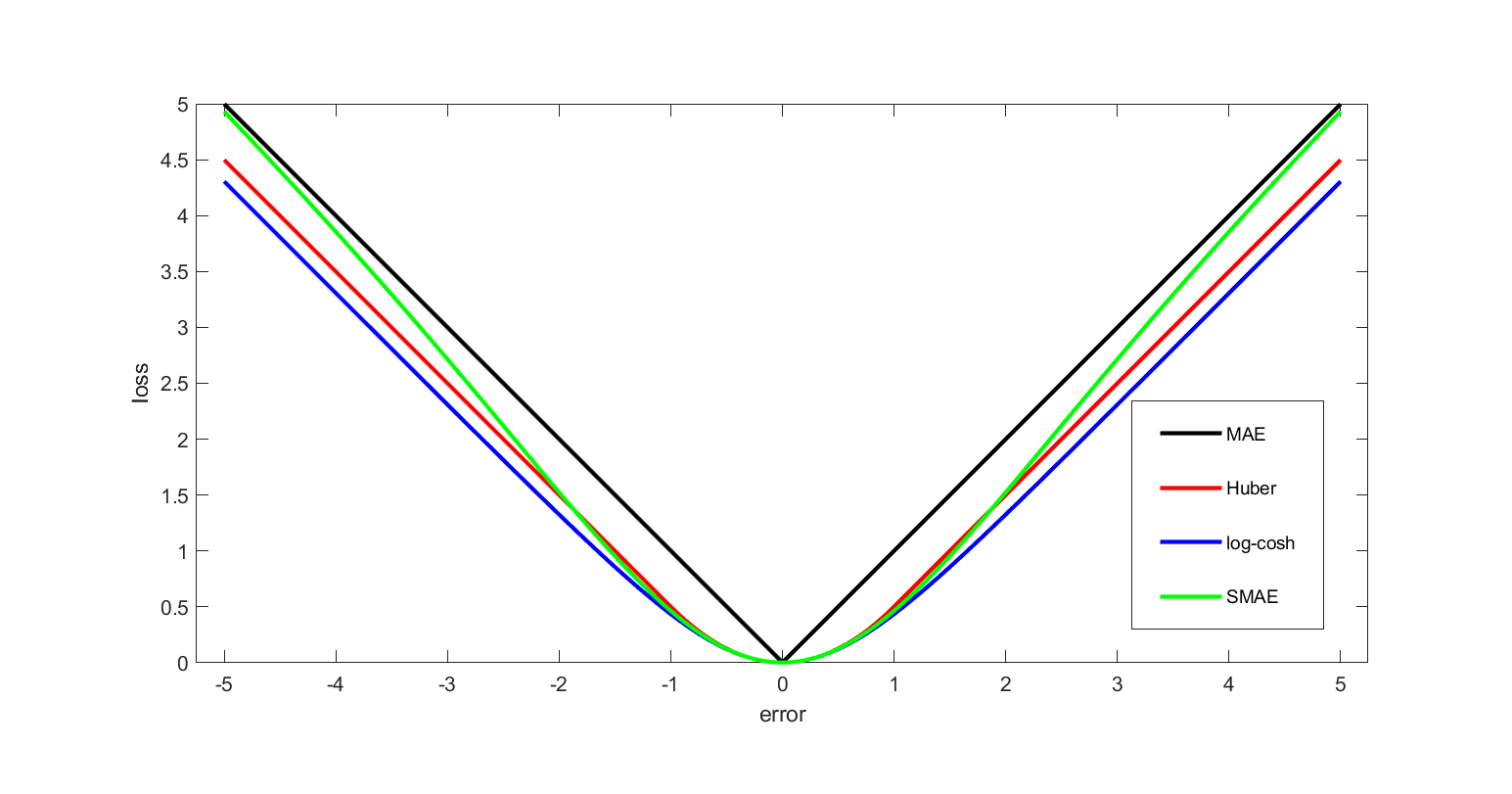

Loss Function

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy. In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Pierre-Simon Laplace, Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Newton's Method

In numerical analysis, the Newton–Raphson method, also known simply as Newton's method, named after Isaac Newton and Joseph Raphson, is a root-finding algorithm which produces successively better approximations to the roots (or zeroes) of a real-valued function. The most basic version starts with a real-valued function , its derivative , and an initial guess for a root of . If satisfies certain assumptions and the initial guess is close, then x_ = x_0 - \frac is a better approximation of the root than . Geometrically, is the x-intercept of the tangent of the graph of at : that is, the improved guess, , is the unique root of the linear approximation of at the initial guess, . The process is repeated as x_ = x_n - \frac until a sufficiently precise value is reached. The number of correct digits roughly doubles with each step. This algorithm is first in the class of Householder's methods, and was succeeded by Halley's method. The method can also be extended t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Gradient

In vector calculus, the gradient of a scalar-valued differentiable function f of several variables is the vector field (or vector-valued function) \nabla f whose value at a point p gives the direction and the rate of fastest increase. The gradient transforms like a vector under change of basis of the space of variables of f. If the gradient of a function is non-zero at a point p, the direction of the gradient is the direction in which the function increases most quickly from p, and the magnitude of the gradient is the rate of increase in that direction, the greatest absolute directional derivative. Further, a point where the gradient is the zero vector is known as a stationary point. The gradient thus plays a fundamental role in optimization theory, where it is used to minimize a function by gradient descent. In coordinate-free terms, the gradient of a function f(\mathbf) may be defined by: df=\nabla f \cdot d\mathbf where df is the total infinitesimal change in f for a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Parabola

In mathematics, a parabola is a plane curve which is Reflection symmetry, mirror-symmetrical and is approximately U-shaped. It fits several superficially different Mathematics, mathematical descriptions, which can all be proved to define exactly the same curves. One description of a parabola involves a Point (geometry), point (the Focus (geometry), focus) and a Line (geometry), line (the Directrix (conic section), directrix). The focus does not lie on the directrix. The parabola is the locus (mathematics), locus of points in that plane that are equidistant from the directrix and the focus. Another description of a parabola is as a conic section, created from the intersection of a right circular conical surface and a plane (geometry), plane Parallel (geometry), parallel to another plane that is tangential to the conical surface. The graph of a function, graph of a quadratic function y=ax^2+bx+ c (with a\neq 0 ) is a parabola with its axis parallel to the -axis. Conversely, every ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Artificial Neuron

An artificial neuron is a mathematical function conceived as a model of a biological neuron in a neural network. The artificial neuron is the elementary unit of an ''artificial neural network''. The design of the artificial neuron was inspired by biological neural circuitry. Its inputs are analogous to excitatory postsynaptic potentials and inhibitory postsynaptic potentials at neural dendrites, or . Its weights are analogous to synaptic weights, and its output is analogous to a neuron's action potential which is transmitted along its axon. Usually, each input is separately weighted, and the sum is often added to a term known as a ''bias'' (loosely corresponding to the threshold potential), before being passed through a nonlinear function known as an activation function. Depending on the task, these functions could have a sigmoid shape (e.g. for binary classification), but they may also take the form of other nonlinear functions, piecewise linear functions, or step fun ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Backpropagation

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example, and does so efficiently, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term ''backpropagation'' refers only to an algorithm for efficiently computing the gradient, not how the gradient is used; but the term is often used loosely to refer to the entire learning algorithm – including how the gradient is used, such as by stochastic gradient descent, or as an intermediate step in a more complicated optimizer, such as Adaptive Moment Estimation. The ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |