
Part-based Models

Part-based models are a broad class of image detection algorithms in which various parts of the image are used separately to determine if and where an object of interest exists. A very popular method among these is the constellation model, which refers to schemes that detect a small number of features and their relative positions and then determine whether the object of interest is present. These models build on the original idea of Fischler and Elschlager of using the relative position of a few template matches, and they evolve in complexity in the work of Perona and others. These models will be covered in the constellation models section. An example may better illustrate what is meant by a constellation model. Say we are trying to detect faces. A constellation model would use smaller part detectors, for instance mouth, nose and eye detectors, and make a judgment about whether an image has a face based on t ...

Constellation Model

The constellation model is a probabilistic, generative model for category-level object recognition in computer vision. Like other part-based models, the constellation model attempts to represent an object class by a set of *N* parts under mutual geometric constraints. Because it considers the geometric relationship between different parts, the constellation model differs significantly from appearance-only, or "bag-of-words", representation models, which explicitly disregard the location of image features. The problem of defining a generative model for object recognition is difficult. The task becomes significantly complicated by factors such as background clutter, occlusion, and variations in viewpoint, illumination, and scale. Ideally, we would like the particular representation we choose to be robust to as many of these factors as possible. In category-level recognition, the problem is even more challenging because of the fundamental problem of intra-class variation. Even if two ...

Face Detection

Face detection is a computer technology, used in a variety of applications, that identifies human faces in digital images. Face detection also refers to the psychological process by which humans locate and attend to faces in a visual scene. Definition and related algorithms: Face detection can be regarded as a specific case of object-class detection. In object-class detection, the task is to find the locations and sizes of all objects in an image that belong to a given class. Examples include upper torsos, pedestrians, and cars. Face detection simply answers two questions: (1) are there any human faces in the collected images or video, and (2) where are the faces located? Face-detection algorithms focus on the detection of frontal human faces. This is analogous to image detection in which the image of a person is matched bit by bit against an image stored in a database. Any change to the facial features in the database will invalidate the matching process. A reliable face-de ...

Maxima And Minima

In mathematical analysis, the maximum and minimum of a function are, respectively, the greatest and least value taken by the function. Known generically as extrema, they may be defined either within a given range (the *local* or *relative* extrema) or on the entire domain (the *global* or *absolute* extrema) of a function. Pierre de Fermat was one of the first mathematicians to propose a general technique, adequality, for finding the maxima and minima of functions. As defined in set theory, the maximum and minimum of a set are the greatest and least elements in the set, respectively. Unbounded infinite sets, such as the set of real numbers, have no minimum or maximum. In statistics, the corresponding concept is the sample maximum and minimum. Definition: A real-valued function *f* defined on a domain *X* has a global (or absolute) maximum point at *x*∗ if *f*(*x*∗) ≥ *f*(*x*) for all *x* in *X*. Similarly, the function has a global (or absolute) minimum point at *x*∗ ...
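The definition of a global maximum and minimum can be checked numerically on a finite sample of the domain. The sketch below is only illustrative: the helper name `global_extrema`, the example function, and the grid are assumptions, and a finite grid only approximates the extrema of a function on a continuous domain.

```python
def global_extrema(f, xs):
    """Return (x_min, x_max): the sample points where f is least and greatest."""
    xs = list(xs)
    x_min = min(xs, key=f)  # f(x_min) <= f(x) for every sampled x
    x_max = max(xs, key=f)  # f(x_max) >= f(x) for every sampled x
    return x_min, x_max


def f(x):
    # Hypothetical example function: a parabola with its vertex at x = 1.
    return (x - 1) ** 2


# Sample the domain X = [-2, 2] on a grid with spacing 0.01.
grid = [i / 100 for i in range(-200, 201)]
x_min, x_max = global_extrema(f, grid)
print(x_min, x_max)
```

On this grid the minimum falls at the vertex and the maximum at the left endpoint of the domain, which shows that a global extremum can sit on the boundary rather than at a stationary point.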

Error Function

In mathematics, the error function (also called the Gauss error function), often denoted by $\operatorname{erf}$, is a function $\operatorname{erf}: \mathbb{C} \to \mathbb{C}$ defined as: $\operatorname{erf} z = \frac{2}{\sqrt{\pi}}\int_0^z e^{-t^2}\,\mathrm{d}t.$ The integral here is a complex contour integral which is path-independent because $\exp(-t^2)$ is holomorphic on the whole complex plane $\mathbb{C}$. In many applications, the function argument is a real number, in which case the function value is also real. In some old texts, the error function is defined without the factor of $\frac{2}{\sqrt{\pi}}$. This nonelementary integral is a sigmoid function that occurs often in probability, statistics, and partial differential equations. In statistics, for non-negative real values of $x$, the error function has the following interpretation: for a real random variable $Y$ that is normally distributed with mean 0 and standard deviation $\frac{1}{\sqrt{2}}$, $\operatorname{erf} x$ is the probability that $Y$ falls in the range $[-x, x]$. ...
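For real arguments, both the integral definition and the statistical interpretation can be checked numerically. The following is a minimal sketch using only the Python standard library; the helper names `erf_midpoint` and `prob_within` are hypothetical. It assumes the usual definition of erf as 2/√π times the integral of exp(−t²) from 0 to x, and the interpretation that erf(x) is the probability that a normal variable with mean 0 and standard deviation 1/√2 falls in [−x, x].

```python
import math
from statistics import NormalDist


def erf_midpoint(x, n=10_000):
    """Approximate erf(x) for real x with the midpoint rule on [0, x]."""
    h = x / n
    total = sum(math.exp(-((k + 0.5) * h) ** 2) for k in range(n))
    return 2.0 / math.sqrt(math.pi) * total * h


def prob_within(x):
    """P(-x <= Y <= x) for Y normal with mean 0 and standard deviation 1/sqrt(2)."""
    y = NormalDist(mu=0.0, sigma=1.0 / math.sqrt(2.0))
    return y.cdf(x) - y.cdf(-x)


for x in (0.0, 0.5, 1.0, 2.0):
    # The three columns should agree up to numerical error.
    print(x, math.erf(x), erf_midpoint(x), prob_within(x))
```

The midpoint rule is chosen only for brevity; `math.erf` is the function to use in practice.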

Template Matching

Template matching is a technique in digital image processing for finding small parts of an image which match a template image. It can be used for quality control in manufacturing, navigation of mobile robots, or edge detection in images. The main challenges in a template matching task are detection of occlusion, when a sought-after object is partly hidden in an image; detection of non-rigid transformations, when an object is distorted or imaged from different angles; sensitivity to illumination and background changes; background clutter; and scale changes. Feature-based approach: The feature-based approach to template matching relies on the extraction of image features, such as shapes, textures, and colors, that match the target image or frame. This approach is usually achieved using neural networks and deep-learning classifiers such as VGG, AlexNet, and ResNet. Convolutional neural networks (CNNs), which many modern classifiers are based on, process an image by passing it th ...
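The basic idea of finding the part of an image that matches a template can be illustrated with a naive sliding-window search. Note that this is not the feature-based approach described above: it is a simpler intensity-based sketch that scores each window by the sum of squared differences (SSD). The function name `match_template` and the toy data are assumptions made for illustration.

```python
def match_template(image, template):
    """Return (row, col, ssd) of the window that best matches the template.

    image and template are 2D lists of numbers; a lower SSD is a better match.
    """
    ih, iw = len(image), len(image[0])
    th, tw = len(template), len(template[0])
    best = None
    for r in range(ih - th + 1):
        for c in range(iw - tw + 1):
            # Sum of squared differences between the template and this window.
            ssd = sum(
                (image[r + dr][c + dc] - template[dr][dc]) ** 2
                for dr in range(th)
                for dc in range(tw)
            )
            if best is None or ssd < best[2]:
                best = (r, c, ssd)
    return best


# Toy grayscale image with the template pattern embedded at row 1, column 1.
image = [
    [0, 0, 0, 0, 0],
    [0, 9, 8, 0, 0],
    [0, 7, 9, 0, 0],
    [0, 0, 0, 0, 0],
]
template = [
    [9, 8],
    [7, 9],
]
row, col, score = match_template(image, template)
print(row, col, score)
```

An exhaustive search like this is sensitive to exactly the challenges listed above (illumination, scale, occlusion), which is part of the motivation for feature-based methods.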

Scale Invariance

In physics, mathematics and statistics, scale invariance is a feature of objects or laws that do not change if scales of length, energy, or other variables, are multiplied by a common factor, and thus represent a universality. The technical term for this transformation is a dilatation (also known as dilation). Dilatations can form part of a larger conformal symmetry.

* In mathematics, scale invariance usually refers to an invariance of individual functions or curves. A closely related concept is self-similarity, where a function or curve is invariant under a discrete subset of the dilations. It is also possible for the probability distributions of random processes to display this kind of scale invariance or self-similarity.
* In classical field theory, scale invariance most commonly applies to the invariance of a whole theory under dilatations. Such theories typically describe classical physical processes with no characteristic length scale.
* In quantum field theory, scale inva ...
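For individual functions, one common way to make this concrete is to check whether rescaling the argument by a factor λ only rescales the function by a power of λ, that is f(λx) = λ^Δ f(x). The sketch below assumes this form of the condition and uses a power law as the standard example; the names `is_scale_invariant`, `power_law`, and `exponential` are hypothetical.

```python
import math


def is_scale_invariant(f, exponent, xs, factors, rel_tol=1e-9):
    """Check f(lam * x) == lam**exponent * f(x) on the given sample points."""
    return all(
        math.isclose(f(lam * x), lam**exponent * f(x), rel_tol=rel_tol)
        for x in xs
        for lam in factors
    )


def power_law(x):
    # A power law has no characteristic scale.
    return 3.0 * x ** -2.0


def exponential(x):
    # An exponential decay has a characteristic scale (here 1).
    return math.exp(-x)


xs = [0.5, 1.0, 2.0, 4.0]
factors = [0.1, 2.0, 10.0]
print(is_scale_invariant(power_law, -2.0, xs, factors))
print(is_scale_invariant(exponential, -2.0, xs, factors))
```

A check on a handful of sample points is only evidence, not a proof, that the scaling relation holds.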

Computer Vision

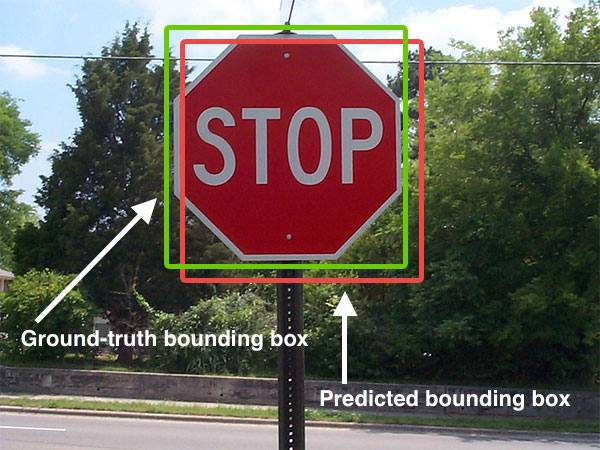

Computer vision tasks include methods for acquiring, processing, analyzing, and understanding digital images, and extraction of high-dimensional data from the real world in order to produce numerical or symbolic information, e.g. in the form of decisions. "Understanding" in this context signifies the transformation of visual images (the input to the retina) into descriptions of the world that make sense to thought processes and can elicit appropriate action. This image understanding can be seen as the disentangling of symbolic information from image data using models constructed with the aid of geometry, physics, statistics, and learning theory. The scientific discipline of computer vision is concerned with the theory behind artificial systems that extract information from images. Image data can take many forms, such as video sequences, views from multiple cameras, multi-dimensional data from a 3D scanner, 3D point clouds ...
