
Principle Of Maximum Entropy

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with the largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information). Another way of stating this: take precisely stated prior data or testable information about a probability distribution function. Consider the set of all trial probability distributions that would encode the prior data. According to this principle, the distribution with maximal information entropy is the best choice. History: The principle was first expounded by E. T. Jaynes in two papers in 1957, where he emphasized a natural correspondence between statistical mechanics and information theory. In particular, Jaynes argued that the Gibbsian method of statistical mechanics is sound by also arguing that the entropy of statistical mechanics and the information entropy of information theory are the same ...
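As a rough illustration of the selection rule described above, the sketch below compares the Shannon entropy of a few trial distributions over six outcomes. It assumes the only prior data is that the probabilities sum to one; the candidate distributions and the names `shannon_entropy`, `candidates`, and `best` are hypothetical and chosen only for this example.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy in nats; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# Hypothetical trial distributions over six outcomes (each sums to 1).
candidates = {
    "uniform": [1 / 6] * 6,
    "skewed": [0.5, 0.1, 0.1, 0.1, 0.1, 0.1],
    "certain": [1.0, 0.0, 0.0, 0.0, 0.0, 0.0],
}

entropies = {name: shannon_entropy(p) for name, p in candidates.items()}

# With normalization as the only constraint, the principle selects the
# candidate with the largest entropy.
best = max(entropies, key=entropies.get)
print(best, entropies)
```

Among these candidates the uniform distribution has the largest entropy, log 6, which is consistent with the principle when nothing beyond normalization is known. Additional testable information, such as a known mean, would restrict the candidate set and generally select a different distribution.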

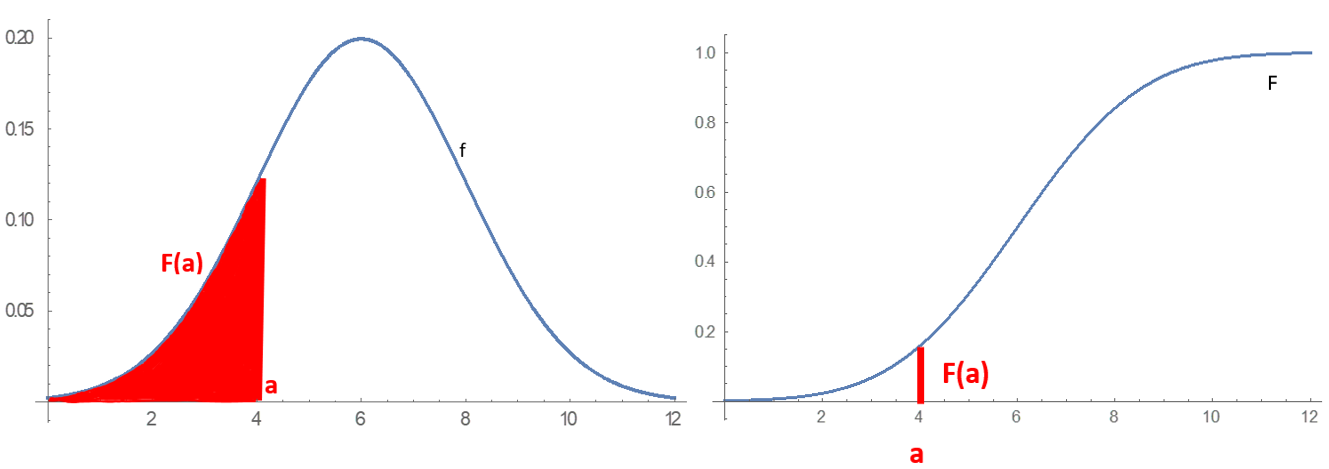

Probability Distribution

In probability theory and statistics, a probability distribution is a function that gives the probabilities of occurrence of possible events for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space). For instance, if ''X'' is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of ''X'' would take the value 0.5 (1 in 2 or 1/2) for ''X'' = heads, and 0.5 for ''X'' = tails (assuming that the coin is fair). More commonly, probability distributions are used to compare the relative occurrence of many different random values. Probability distributions can be defined in different ways and for discrete or for continuous variables. Distributions with special properties or for especially important applications are given specific names. Introduction: A prob ...

Ergodic

In mathematics, ergodicity expresses the idea that a point of a moving system, either a dynamical system or a stochastic process, will eventually visit all parts of the space that the system moves in, in a uniform and random sense. This implies that the average behavior of the system can be deduced from the trajectory of a "typical" point. Equivalently, a sufficiently large collection of random samples from a process can represent the average statistical properties of the entire process. Ergodicity is a property of the system; it is a statement that the system cannot be reduced or factored into smaller components. Ergodic theory is the study of systems possessing ergodicity. Ergodic systems occur in a broad range of systems in physics and in geometry. This can be roughly understood to be due to a common phenomenon: the motion of particles, that is, geodesics on a hyperbolic manifold are divergent; when that manifold is compact, that is, of finite size, those orbits return to the ...
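The claim that average behavior can be read off a single trajectory can be checked numerically on a simple ergodic system. The sketch below uses an irrational rotation of the unit interval, x → (x + α) mod 1, which is not mentioned in the summary above and is chosen here only as an assumed example; the function name, starting point, and interval are likewise hypothetical.

```python
import math

def time_average_in_interval(x0, alpha, lo, hi, steps):
    """Fraction of the first `steps` orbit points of x -> (x + alpha) mod 1
    that fall in the interval [lo, hi)."""
    hits = 0
    for n in range(steps):
        x = (x0 + n * alpha) % 1.0  # n-th point of the orbit starting at x0
        if lo <= x < hi:
            hits += 1
    return hits / steps

alpha = (math.sqrt(5) - 1) / 2  # irrational rotation angle (assumed example)
fraction = time_average_in_interval(x0=0.1, alpha=alpha, lo=0.0, hi=0.25, steps=100_000)

# The space average of the indicator of [0, 0.25) is the interval length, 0.25;
# the time average along one orbit should be close to it.
print(fraction)
```

With a rational α the orbit would be periodic and visit only finitely many points, so the time average would in general no longer match the interval length.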

Natural Language Processing

Natural language processing (NLP) is a subfield of computer science and especially artificial intelligence. It is primarily concerned with providing computers with the ability to process data encoded in natural language and is thus closely related to information retrieval, knowledge representation and computational linguistics, a subfield of linguistics. Major tasks in natural language processing are speech recognition, text classification, natural language understanding, and natural language generation. History: Natural language processing has its roots in the 1950s. Already in 1950, Alan Turing published an article titled "Computing Machinery and Intelligence" which proposed what is now called the Turing test as a criterion of intelligence, though at the time that was not articulated as a problem separate from artificial intelligence. The proposed test includes a task that involves the automated interpretation and generation of natural language ...

Maximum Entropy Inference

In mathematical analysis, the maximum and minimum of a function are, respectively, the greatest and least value taken by the function. Known generically as extrema, they may be defined either within a given range (the ''local'' or ''relative'' extrema) or on the entire domain (the ''global'' or ''absolute'' extrema) of a function. Pierre de Fermat was one of the first mathematicians to propose a general technique, adequality, for finding the maxima and minima of functions. As defined in set theory, the maximum and minimum of a set are the greatest and least elements in the set, respectively. Unbounded infinite sets, such as the set of real numbers, have no minimum or maximum. In statistics, the corresponding concept is the sample maximum and minimum. Definition: A real-valued function ''f'' defined on a domain ''X'' has a global (or absolute) maximum point at ''x''∗, if ''f''(''x''∗) ≥ ''f''(''x'') for all ''x'' in ''X''. Similarly, the function has a global (or absolute) minimum point at ''x''∗, ...

Probability Kinematics

Radical probabilism is a hypothesis in philosophy, in particular epistemology, and probability theory that holds that no facts are known for certain. That view holds profound implications for statistical inference. The philosophy is particularly associated with Richard Jeffrey who wittily characterised it with the ''dictum'' "It's probabilities all the way down." Background: Bayes' theorem states a rule for updating a probability conditioned on other information. In 1967, Ian Hacking argued that in a static form, Bayes' theorem only connects probabilities that are held simultaneously; it does not tell the learner how to update probabilities when new evidence becomes available over time, contrary to what contemporary Bayesians suggested. According to Hacking, adopting Bayes' theorem is a temptation. Suppose that a learner forms probabilities ''P''old(''A'' & ''B'') = ''p'' and ''P''old(''B'') = ''q''. If the learner subsequently learns that ''B'' is ...

Richard Jeffrey

Richard Carl Jeffrey (August 5, 1926 – November 9, 2002) was an American philosopher, logician, and probability theorist. He is best known for developing and championing the philosophy of radical probabilism and the associated heuristic of probability kinematics, also known as Jeffrey conditioning. Life and career: Born in Boston, Massachusetts, Jeffrey served in the U.S. Navy during World War II. As a graduate student he studied under Rudolf Carnap and Carl Hempel. He received his M.A. from the University of Chicago in 1952 and his Ph.D. from Princeton in 1957. After holding academic positions at MIT, City College of New York, Stanford University, and the University of Pennsylvania, he joined the faculty of Princeton in 1974 and became a professor emeritus there in 1999. He was also a visiting professor at the University of California, Irvine. Jeffrey, who died of lung cancer at the age of 76, was known for his sense of humor, which often came through in his breezy ...

Radical Probabilism

Radical probabilism is a hypothesis in philosophy, in particular epistemology, and probability theory that holds that no facts are known for certain. That view holds profound implications for statistical inference. The philosophy is particularly associated with Richard Jeffrey who wittily characterised it with the ''dictum'' "It's probabilities all the way down." Background: Bayes' theorem states a rule for updating a probability conditioned on other information. In 1967, Ian Hacking argued that in a static form, Bayes' theorem only connects probabilities that are held simultaneously; it does not tell the learner how to update probabilities when new evidence becomes available over time, contrary to what contemporary Bayesians suggested. According to Hacking, adopting Bayes' theorem is a temptation. Suppose that a learner forms probabilities ''P''old(''A'' & ''B'') = ''p'' and ''P''old(''B'') = ''q''. If the learner subsequently learns that ''B'' is ...

Journal Of The American Statistical Association

The ''Journal of the American Statistical Association'' is a quarterly peer-reviewed scientific journal published by Taylor & Francis on behalf of the American Statistical Association. It covers work primarily focused on the application of statistics, statistical theory and methods in economic, social, physical, engineering, and health sciences. The journal also includes reviews of books which are relevant to the field. The journal was established in 1888 as the ''Publications of the American Statistical Association''. It was renamed ''Quarterly Publications of the American Statistical Association'' in 1912, obtaining its current title in 1922. Reception: According to the ''Journal Citation Reports'', the journal has a 2023 impac ...

Journal Of Econometrics

The ''Journal of Econometrics'' is a monthly peer-reviewed academic journal covering econometrics. It was established in 1973. The editors-in-chief are Michael Jansson (University of California Berkeley) and Aureo de Paula (University College London). According to the ''Journal Citation Reports'', the journal has a 2023 impact factor of 9.9. The journal covers work dealing with estimation and other methodological aspects of the application of statistical inference to economic data, as well as papers dealing with the application of econometric techniques to economics. Unusually among journals, the title of Fellow of Journal of Econometrics is offered to anyone publishing four or more articles in the Journal. Maasoumi, E. (1992). Fel ...

Channel Coding

In computing, telecommunication, information theory, and coding theory, forward error correction (FEC) or channel coding is a technique used for controlling errors in data transmission over unreliable or noisy communication channels. The central idea is that the sender encodes the message in a redundant way, most often by using an error correction code, or error correcting code (ECC). The redundancy allows the receiver not only to detect errors that may occur anywhere in the message, but often to correct a limited number of errors. Therefore a reverse channel to request re-transmission may not be needed. The cost is a fixed, higher forward channel bandwidth. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950: the Hamming (7,4) code. FEC can be applied in situations where re-transmissions are costly or impossible, such as one-way communic ...
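The idea of redundant encoding that lets the receiver correct a limited number of errors can be sketched with the Hamming (7,4) code mentioned above. The bit layout below (parity bits at positions 1, 2, and 4) is one common convention and is an assumption of this sketch, as are the function names `hamming74_encode` and `hamming74_decode`.

```python
def hamming74_encode(d):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword.
    Assumed layout, positions 1..7: p1, p2, d1, p4, d2, d3, d4."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]

def hamming74_decode(c):
    """Correct at most one flipped bit, then return the 4 data bits."""
    c = list(c)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s4  # 1-based error position, 0 if no error seen
    if syndrome:
        c[syndrome - 1] ^= 1  # flip the erroneous bit back
    return [c[2], c[4], c[5], c[6]]

# Usage: send a message, corrupt one bit in transit, and recover it.
message = [1, 0, 1, 1]
received = hamming74_encode(message)
received[4] ^= 1  # simulate a single-bit channel error
recovered = hamming74_decode(received)
print(recovered == message)
```

Three parity bits are added to every four data bits, which is the fixed bandwidth cost the text refers to. This sketch corrects only single-bit errors; two flipped bits in one codeword would be miscorrected.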

Bayesian Inference

Bayesian inference is a method of statistical inference in which Bayes' theorem is used to calculate a probability of a hypothesis, given prior evidence, and update it as more information becomes available. Fundamentally, Bayesian inference uses a prior distribution to estimate posterior probabilities. Bayesian inference is an important technique in statistics, and especially in mathematical statistics. Bayesian updating is particularly important in the dynamic analysis of a sequence of data. Bayesian inference has found application in a wide range of activities, including science, engineering, philosophy, medicine, sport, and law. In the philosophy of decision theory, Bayesian inference is closely related to subjective probability, often called "Bayesian probability". Introduction to Bayes' rule, formal explanation: Bayesian inference derives the posterior probability as a consequence of two antecedents: a prior probability and a "likelihood function" derive ...
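The update from prior to posterior described above can be sketched for a finite set of hypotheses. The scenario (a coin that is either fair or biased toward heads), the numbers, and the function name `bayes_update` are hypothetical and serve only to illustrate sequential updating.

```python
def bayes_update(prior, likelihood):
    """Return the posterior P(H | data) given a prior P(H) and a
    likelihood P(data | H), both as dicts keyed by hypothesis."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())  # P(data), the normalizing constant
    return {h: v / evidence for h, v in unnormalized.items()}

# Hypothetical setup: two hypotheses about a coin, equally likely a priori.
prior = {"fair": 0.5, "biased": 0.5}
likelihood_heads = {"fair": 0.5, "biased": 0.8}  # P(heads | hypothesis)

# Observe heads twice; the posterior after the first toss is the prior for the second.
posterior = bayes_update(prior, likelihood_heads)
posterior = bayes_update(posterior, likelihood_heads)
print(posterior)
```

Feeding each posterior back in as the next prior is the sequential form of updating; for independent observations it gives the same result as a single update with the joint likelihood of both tosses.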

Uniform Distribution (discrete)

In probability theory and statistics, the discrete uniform distribution is a symmetric probability distribution wherein each of some finite whole number ''n'' of outcome values are equally likely to be observed. Thus every one of the ''n'' outcome values has equal probability 1/''n''. Intuitively, a discrete uniform distribution is "a known, finite number of outcomes all equally likely to happen." A simple example of the discrete uniform distribution comes from throwing a fair six-sided die. The possible values are 1, 2, 3, 4, 5, 6, and each time the die is thrown the probability of each given value is 1/6. If two dice were thrown and their values added, the possible sums would not have equal probability and so the distribution of sums of two dice rolls is not uniform. Although it is common to consider discrete uniform distributions over a contiguous range of integers, such as in this six-sided die example, one can define discrete uniform distributions over any finite set. ...
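The die example above can be verified by enumeration. The sketch below builds the distribution of a single fair die and of the sum of two fair dice using exact fractions; the variable names are illustrative only.

```python
from collections import Counter
from fractions import Fraction

# One fair six-sided die: every face has probability 1/6.
one_die = {face: Fraction(1, 6) for face in range(1, 7)}

# Sum of two fair dice: count how many of the 36 equally likely
# ordered pairs produce each total.
sum_counts = Counter(a + b for a in range(1, 7) for b in range(1, 7))
two_dice_sum = {total: Fraction(n, 36) for total, n in sorted(sum_counts.items())}

for total, p in two_dice_sum.items():
    print(total, p)
```

The single-die probabilities are all equal, while the sums range from probability 1/36 (for 2 and 12) up to 6/36 (for 7), which is why the distribution of the sum is not uniform.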