Outline of Linear Algebra

This is an outline of topics related to linear algebra, the branch of mathematics concerning linear equations and linear maps and their representations in vector spaces and through matrices.

Linear equations

* Linear equation
* System of linear equations
* Determinant
  * Minor
  * Cauchy–Binet formula
* Cramer's rule
* Gaussian elimination
* Gauss–Jordan elimination
* Overcompleteness
* Strassen algorithm

Matrices

* Matrix
* Matrix addition
* Matrix multiplication
* Basis transformation matrix
* Characteristic polynomial
* Trace
* Eigenvalue, eigenvector and eigenspace
  * Cayley–Hamilton theorem
  * Spread of a matrix
  * Jordan normal form
  * Weyr canonical form
* Rank
* Matrix inversion, invertible matrix
  * Pseudoinverse
* Adjugate
* Transpose
  * Dot product
  * Symmetric matrix
  * Orthogonal matrix
  * Skew-symmetric matrix
  * Conjugate transpose
    * Unitary matrix
    * Hermitian matrix, Antihermitian matrix
* Positive-definite, positive-semidefinite matrix
* Pfaffian
* Projection
* Spectral theorem
* Per ...

Linear Equation

In mathematics, a linear equation is an equation that may be put in the form $a_1x_1+\ldots+a_nx_n+b=0$, where $x_1,\ldots,x_n$ are the variables (or unknowns), and $b,a_1,\ldots,a_n$ are the coefficients, which are often real numbers. The coefficients may be considered as parameters of the equation, and may be arbitrary expressions, provided they do not contain any of the variables. To yield a meaningful equation, the coefficients $a_1, \ldots, a_n$ are required not to be all zero. Alternatively, a linear equation can be obtained by equating to zero a linear polynomial over some field, from which the coefficients are taken.

The solutions of such an equation are the values that, when substituted for the unknowns, make the equality true. In the case of just one variable, there is exactly one solution (provided that $a_1\ne 0$), namely $x_1 = -b/a_1$. Often, the term *linear equation* refers implicitly to this particular case, in which the variable is sensibly called the *unknown*. In the case of two ...
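The definition translates directly into a few lines of code. The sketch below is a hypothetical illustration, not taken from the source: the helper names `evaluate_lhs`, `is_solution` and `solve_one_variable` are invented here, and exact `Fraction` arithmetic is an assumed choice to avoid floating-point rounding. A candidate tuple is a solution exactly when the left-hand side evaluates to zero, and the one-variable case returns $-b/a_1$.

```python
from fractions import Fraction

def evaluate_lhs(coeffs, b, xs):
    """Return a_1*x_1 + ... + a_n*x_n + b for the given coefficients and values."""
    if len(coeffs) != len(xs):
        raise ValueError("need exactly one value per variable")
    return sum((a * x for a, x in zip(coeffs, xs)), Fraction(0)) + b

def is_solution(coeffs, b, xs):
    """True when substituting xs for the unknowns makes the equation hold."""
    return evaluate_lhs(coeffs, b, xs) == 0

def solve_one_variable(a1, b):
    """The unique solution of a_1*x_1 + b = 0, which exists when a_1 != 0."""
    if a1 == 0:
        raise ValueError("a_1 must be nonzero")
    return Fraction(-b) / Fraction(a1)

# 2*x_1 + 3*x_2 - 7 = 0 is satisfied by (x_1, x_2) = (2, 1).
print(is_solution([2, 3], -7, [2, 1]))   # True
print(solve_one_variable(3, -6))         # 2
```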

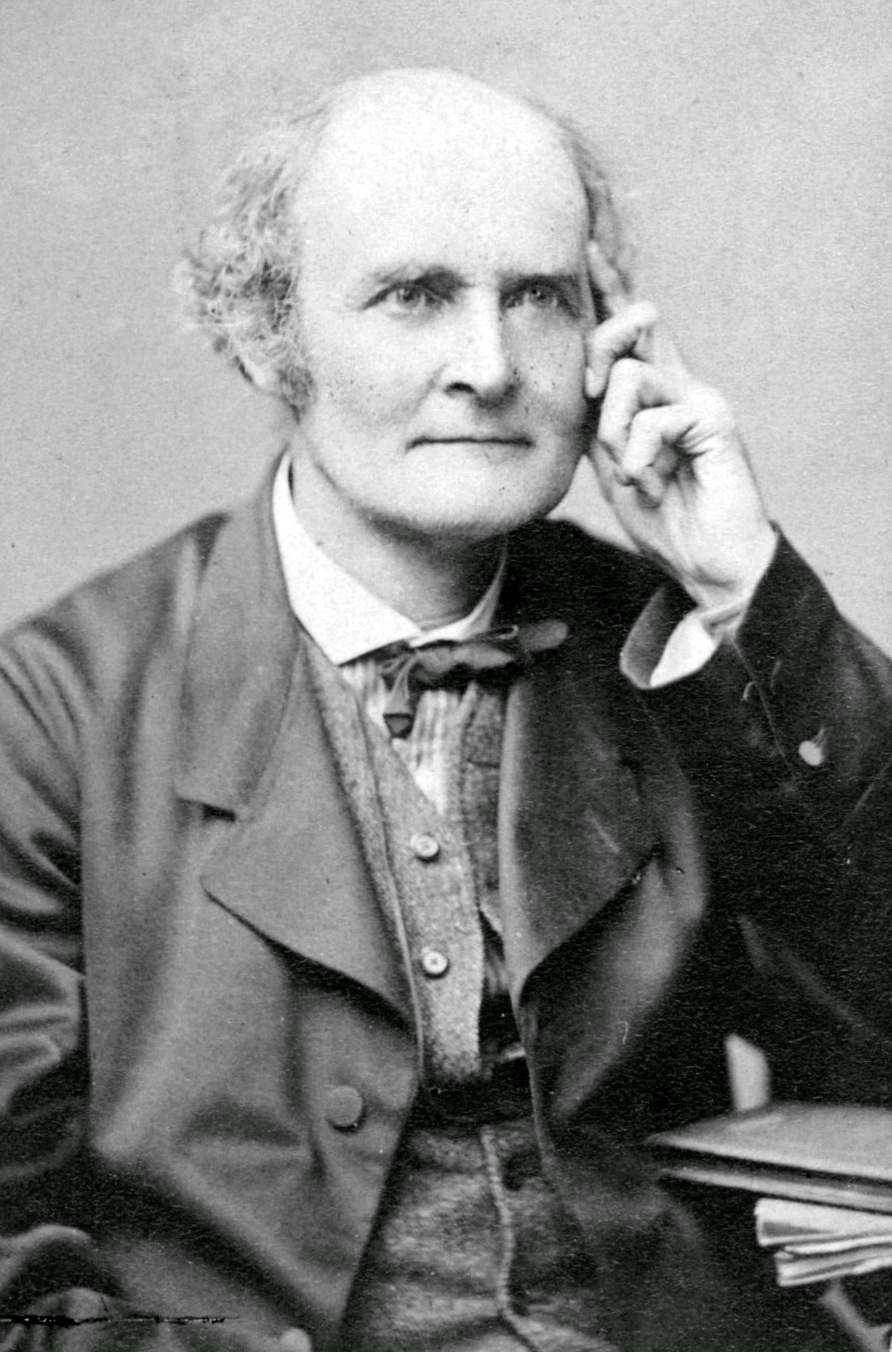

Cayley–Hamilton Theorem

In linear algebra, the Cayley–Hamilton theorem (named after the mathematicians Arthur Cayley and William Rowan Hamilton) states that every square matrix over a commutative ring (such as the real or complex numbers or the integers) satisfies its own characteristic equation. If $A$ is a given $n \times n$ matrix and $I_n$ is the $n \times n$ identity matrix, then the characteristic polynomial of $A$ is defined as $p_A(\lambda)=\det(\lambda I_n-A)$, where $\det$ is the determinant operation and $\lambda$ is a variable for a scalar element of the base ring. Since the entries of the matrix $(\lambda I_n-A)$ are (linear or constant) polynomials in $\lambda$, the determinant is also a degree-$n$ monic polynomial in $\lambda$,
$$p_A(\lambda) = \lambda^n + c_{n-1}\lambda^{n-1} + \cdots + c_1\lambda + c_0.$$
One can create an analogous polynomial $p_A(A)$ in the matrix $A$ instead of the scalar variable $\lambda$, defined as
$$p_A(A) = A^n + c_{n-1}A^{n-1} + \cdots + c_1A + c_0I_n.$$
The Cayley–Hamilton theorem states that this polynomial expression is equal to the zero matrix, which is to say ...
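The theorem can be checked on concrete matrices. The following is a minimal pure-Python sketch under stated assumptions, not a method given in the source: it computes the coefficients $c_0, \ldots, c_{n-1}$ of $\det(\lambda I_n - A)$ with the Faddeev–LeVerrier recurrence (a swapped-in technique; any way of expanding the determinant would do) and evaluates $p_A(A)$ by Horner's rule. The helper names `char_poly` and `eval_matrix_poly` are hypothetical.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product of two n x n matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def identity(n):
    return [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]

def char_poly(A):
    """Coefficients [c_0, ..., c_{n-1}, 1] of p_A(lambda) = det(lambda*I_n - A),
    via the Faddeev-LeVerrier recurrence with exact Fraction arithmetic."""
    n = len(A)
    A = [[Fraction(x) for x in row] for row in A]
    I = identity(n)
    c = [Fraction(0)] * (n + 1)
    c[n] = Fraction(1)                            # p_A is monic
    M = [[Fraction(0)] * n for _ in range(n)]     # M_0 = 0
    for k in range(1, n + 1):
        AM = matmul(A, M)
        # M_k = A M_{k-1} + c_{n-k+1} I
        M = [[AM[i][j] + c[n - k + 1] * I[i][j] for j in range(n)] for i in range(n)]
        AM = matmul(A, M)
        # c_{n-k} = -tr(A M_k) / k
        c[n - k] = -sum(AM[i][i] for i in range(n)) / k
    return c

def eval_matrix_poly(c, A):
    """p(A) = c_n A^n + ... + c_1 A + c_0 I_n, evaluated by Horner's rule."""
    n = len(A)
    I = identity(n)
    P = [[Fraction(0)] * n for _ in range(n)]
    for coeff in reversed(c):                     # highest degree first
        PA = matmul(P, A)
        P = [[PA[i][j] + coeff * I[i][j] for j in range(n)] for i in range(n)]
    return P

A = [[1, 2], [3, 4]]
c = char_poly(A)        # lambda^2 - 5*lambda - 2, i.e. c_0 = -2, c_1 = -5
P = eval_matrix_poly(c, A)
print(all(x == 0 for row in P for x in row))   # True: p_A(A) is the zero matrix
```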

Skew-symmetric Matrix

In mathematics, particularly in linear algebra, a skew-symmetric (or antisymmetric or antimetric) matrix is a square matrix whose transpose equals its negative. That is, it satisfies the condition $A^\textsf{T} = -A$. In terms of the entries of the matrix, if $a_{ij}$ denotes the entry in the $i$-th row and $j$-th column, then the skew-symmetric condition is equivalent to $a_{ji} = -a_{ij}$ for all $i$ and $j$.

Example

The matrix
$$A = \begin{bmatrix} 0 & 2 & -45 \\ -2 & 0 & -4 \\ 45 & 4 & 0 \end{bmatrix}$$
is skew-symmetric because
$$-A = \begin{bmatrix} 0 & -2 & 45 \\ 2 & 0 & 4 \\ -45 & -4 & 0 \end{bmatrix} = A^\textsf{T}.$$

Properties

Throughout, we assume that all matrix entries belong to a field $\mathbb{F}$ whose characteristic is not equal to 2. That is, we assume that $1 + 1 \ne 0$, where 1 denotes the multiplicative identity and 0 the additive identity of the given field. If the characteristic of the field is 2, then a skew-symmetric matrix is the same thing as a symmetric matrix.

* The sum of two skew-symmetric matrices is skew-symmetric.
* A sca ...
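A minimal Python sketch can confirm the example matrix against both forms of the definition. The helper names `transpose` and `is_skew_symmetric` and the second matrix `B` are illustrative, not from the source. Integer entries behave as elements of a field of characteristic 0, so the characteristic-not-2 assumption holds.

```python
def transpose(A):
    """Return A^T: row i of the result is column i of A."""
    return [list(col) for col in zip(*A)]

def is_skew_symmetric(A):
    """Check the entrywise condition a_ji == -a_ij for a square matrix."""
    n = len(A)
    if any(len(row) != n for row in A):
        return False                      # only square matrices qualify
    return all(A[j][i] == -A[i][j] for i in range(n) for j in range(n))

A = [[0, 2, -45],
     [-2, 0, -4],
     [45, 4, 0]]
neg_A = [[-x for x in row] for row in A]
print(transpose(A) == neg_A)    # True: A^T = -A
print(is_skew_symmetric(A))     # True

# The sum of two skew-symmetric matrices is again skew-symmetric.
B = [[0, 1, 0], [-1, 0, 3], [0, -3, 0]]
S = [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]
print(is_skew_symmetric(S))     # True
```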

Orthogonal Matrix

In linear algebra, an orthogonal matrix, or orthonormal matrix, is a real square matrix whose columns and rows are orthonormal vectors. One way to express this is
$$Q^\mathrm{T} Q = Q Q^\mathrm{T} = I,$$
where $Q^\mathrm{T}$ is the transpose of $Q$ and $I$ is the identity matrix. This leads to the equivalent characterization: a matrix $Q$ is orthogonal if its transpose is equal to its inverse,
$$Q^\mathrm{T} = Q^{-1},$$
where $Q^{-1}$ is the inverse of $Q$. An orthogonal matrix $Q$ is necessarily invertible (with inverse $Q^{-1} = Q^\mathrm{T}$), unitary ($Q^{-1} = Q^*$), where $Q^*$ is the Hermitian adjoint (conjugate transpose) of $Q$, and therefore normal ($Q^*Q = QQ^*$) over the real numbers. The determinant of any orthogonal matrix is either +1 or −1. As a linear transformation, an orthogonal matrix preserves the inner product of vectors, and therefore acts as an isometry of Euclidean space, such as a rotation, reflection or rotoreflection. In other words, it is a unitary transformation. The set of $n \times n$ orthogonal matrices, under multiplication, forms the group $\mathrm{O}(n)$, known as the o ...
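The defining identity and the properties listed above can be tested numerically. This is a sketch under stated assumptions, not from the source: matrices are plain Python lists of floats, the tolerance `tol=1e-12` is a hypothetical choice, and the helpers are illustrative. For a square matrix the one-sided check $Q^\mathrm{T} Q = I$ is enough, because a left inverse of a square matrix is also a right inverse.

```python
import math

def matmul(X, Y):
    """Product of an m x k matrix X and a k x n matrix Y (lists of rows)."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def transpose(Q):
    return [list(col) for col in zip(*Q)]

def is_orthogonal(Q, tol=1e-12):
    """Check Q^T Q = I within a floating-point tolerance."""
    n = len(Q)
    if any(len(row) != n for row in Q):
        return False
    P = matmul(transpose(Q), Q)
    return all(abs(P[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))

def matvec(Q, v):
    """Matrix-vector product Qv."""
    return [sum(q * x for q, x in zip(row, v)) for row in Q]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def det2(M):
    """Determinant of a 2 x 2 matrix."""
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]

def rotation(theta):
    """Counterclockwise rotation of the plane by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

R = rotation(0.7)
reflection = [[1.0, 0.0], [0.0, -1.0]]   # reflection across the x-axis
shear = [[1.0, 1.0], [0.0, 1.0]]         # invertible but not orthogonal

print(is_orthogonal(R), is_orthogonal(reflection), is_orthogonal(shear))  # True True False
print(det2(R), det2(reflection))         # approximately +1, and exactly -1.0

# Inner products are preserved: (Rx) . (Ry) equals x . y up to rounding.
x, y = [3.0, -1.0], [2.0, 5.0]
print(math.isclose(dot(matvec(R, x), matvec(R, y)), dot(x, y)))   # True
```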

Symmetric Matrix

In linear algebra, a symmetric matrix is a square matrix that is equal to its transpose. Formally,
$$A \text{ is symmetric} \iff A = A^\textsf{T}.$$
Because equal matrices have equal dimensions, only square matrices can be symmetric. The entries of a symmetric matrix are symmetric with respect to the main diagonal. So if $a_{ij}$ denotes the entry in the $i$th row and $j$th column, then
$$A \text{ is symmetric} \iff a_{ji} = a_{ij}$$
for all indices $i$ and $j$. Every square diagonal matrix is symmetric, since all off-diagonal elements are zero. Similarly, in characteristic different from 2, each diagonal element of a skew-symmetric matrix must be zero, since each is its own negative.

In linear algebra, a real symmetric matrix represents, in an orthonormal basis, a self-adjoint operator on a real inner product space. The corresponding object for a complex inner product space is a Hermitian matrix with complex-valued entries, which is equal to its conjugate transpose. Therefore, in linear algebra over the complex numbers, it is often assumed that a symmetric ...
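The entrywise condition $a_{ji} = a_{ij}$ gives a direct test. A minimal sketch with illustrative helper names and example matrices (none of them from the source), which also exercises the remark that only square matrices can be symmetric:

```python
def transpose(A):
    """A^T: row i of the result is column i of A."""
    return [list(col) for col in zip(*A)]

def is_symmetric(A):
    """A is symmetric iff it is square and A == A^T, i.e. a_ji == a_ij for all i, j."""
    n = len(A)
    return all(len(row) == n for row in A) and A == transpose(A)

D = [[4, 0, 0], [0, -1, 0], [0, 0, 7]]   # diagonal, hence symmetric
S = [[2, 7, 3], [7, 4, 5], [3, 5, 6]]    # mirror-image entries about the diagonal
N = [[1, 2], [3, 4]]                     # a_12 = 2 but a_21 = 3
R = [[1, 2, 3], [2, 4, 5]]               # 2 x 3: not square, so not symmetric

print([is_symmetric(M) for M in (D, S, N, R)])   # [True, True, False, False]
```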

Dot Product

In mathematics, the dot product or scalar product is an algebraic operation that takes two equal-length sequences of numbers (usually coordinate vectors) and returns a single number. (The term *scalar product* means literally "product with a scalar as a result". It is also sometimes used for other symmetric bilinear forms, for example in a pseudo-Euclidean space.) In Euclidean geometry, the dot product of the Cartesian coordinates of two vectors is widely used. It is often called the inner product (or, rarely, the projection product) of Euclidean space, even though it is not the only inner product that can be defined on Euclidean space (see Inner product space for more).

Algebraically, the dot product is the sum of the products of the corresponding entries of the two sequences of numbers, $\mathbf{a}\cdot\mathbf{b} = \sum_{i=1}^{n} a_i b_i$. Geometrically, it is the product of the Euclidean magnitudes of the two vectors and the cosine of the angle $\theta$ between them, $\mathbf{a}\cdot\mathbf{b} = \|\mathbf{a}\|\,\|\mathbf{b}\|\cos\theta$. These definitions are equivalent when using Cartesian coordinates. ...
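Both definitions can be compared directly. In this minimal sketch (helper names `dot`, `norm` and `dot_geometric` are illustrative, not from the source), the angle is supplied by hand rather than computed, and the two results agree only up to floating-point rounding.

```python
import math

def dot(a, b):
    """Algebraic definition: sum of products of corresponding entries."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean magnitude ||a|| = sqrt(a . a)."""
    return math.sqrt(dot(a, a))

def dot_geometric(a, b, theta):
    """Geometric definition: ||a|| ||b|| cos(theta), theta = angle between a and b."""
    return norm(a) * norm(b) * math.cos(theta)

print(dot([1, 2, 3], [4, 5, 6]))   # 32
# (1, 0) and (1, 1) meet at 45 degrees; both definitions give 1 up to rounding.
print(dot([1, 0], [1, 1]), dot_geometric([1, 0], [1, 1], math.pi / 4))
```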

Transpose

In linear algebra, the transpose of a matrix is an operator which flips a matrix over its diagonal; that is, it switches the row and column indices of the matrix $A$ by producing another matrix, often denoted by $A^\mathrm{T}$ (among other notations). The transpose of a matrix was introduced in 1858 by the British mathematician Arthur Cayley. In the case of a logical matrix representing a binary relation $R$, the transpose corresponds to the converse relation $R^\mathrm{T}$.

Transpose of a matrix

Definition

The transpose of a matrix $A$, denoted by $A^\mathrm{T}$ or one of several other notations, may be constructed by any one of the following methods:

1. Reflect $A$ over its main diagonal (which runs from top-left to bottom-right) to obtain $A^\mathrm{T}$.
2. Write the rows of $A$ as the columns of $A^\mathrm{T}$.
3. Write the columns of $A$ as the rows of $A^\mathrm{T}$.

Formally, the $i$-th row, $j$-th column element of $A^\mathrm{T}$ is the $j$-th row, $i$-th column element of $A$:
$$\left[A^\mathrm{T}\right]_{ij} = \left[A\right]_{ji}.$$
If $A$ is an $m \times n$ matrix, then $A^\mathrm{T}$ is an $n \times m$ matrix. In the case of square matrices ...
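The index rule $[A^\mathrm{T}]_{ij} = [A]_{ji}$ maps onto a nested comprehension. A minimal sketch, assuming a matrix stored as a list of equal-length rows (the helper name `transpose` is illustrative):

```python
def transpose(A):
    """Return A^T with [A^T]_ij = [A]_ji; an m x n input yields an n x m output."""
    m = len(A)
    n = len(A[0]) if m else 0
    if any(len(row) != n for row in A):
        raise ValueError("all rows must have the same length")
    # Row j of the result collects the j-th entry of every row of A (column j of A).
    return [[A[i][j] for i in range(m)] for j in range(n)]

A = [[1, 2, 3],
     [4, 5, 6]]              # 2 x 3
T = transpose(A)             # 3 x 2
print(T)                     # [[1, 4], [2, 5], [3, 6]]
print(transpose(T) == A)     # True: transposing twice returns the original
```

The idiom `[list(col) for col in zip(*A)]` produces the same result for rectangular input; the explicit version above keeps the index rule visible.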

Adjugate

In linear algebra, the adjugate or classical adjoint of a square matrix $A$ is the transpose of its cofactor matrix and is denoted by $\operatorname{adj}(A)$. It is also occasionally known as the adjunct matrix or "adjoint", though the latter today normally refers to a different concept, the adjoint operator, which for a matrix is its conjugate transpose.

The product of a matrix with its adjugate gives a diagonal matrix (entries not on the main diagonal are zero) whose diagonal entries are the determinant of the original matrix:
$$A \operatorname{adj}(A) = \det(A)\, I,$$
where $I$ is the identity matrix of the same size as $A$. Consequently, the multiplicative inverse of an invertible matrix can be found by dividing its adjugate by its determinant, $A^{-1} = \operatorname{adj}(A)/\det(A)$.

Definition

The adjugate of $A$ is the transpose of the cofactor matrix $C$ of $A$,
$$\operatorname{adj}(A) = C^\mathsf{T}.$$
In more detail, suppose $R$ is a unital commutative ring and $A$ is an $n \times n$ matrix with entries from $R$. The $(i, j)$-*minor* of $A$, denoted $M_{ij}$, is the determinant ...
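The definition and the identity $A \operatorname{adj}(A) = \det(A)\, I$ can be checked with exact integer arithmetic. This is a sketch, not an efficient algorithm: it assumes the standard cofactor sign $(-1)^{i+j}$ and computes determinants by Laplace expansion, which costs factorial time and only suits small matrices. The helper names are hypothetical.

```python
from fractions import Fraction

def minor_matrix(A, i, j):
    """A with row i and column j deleted."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(A) if r != i]

def det(A):
    """Determinant by cofactor (Laplace) expansion along the first row."""
    if not A:
        return 1                                  # determinant of the 0 x 0 matrix
    return sum((-1) ** j * A[0][j] * det(minor_matrix(A, 0, j)) for j in range(len(A)))

def adjugate(A):
    """adj(A) = C^T, where C_ij = (-1)^(i+j) * M_ij is the cofactor matrix."""
    n = len(A)
    C = [[(-1) ** (i + j) * det(minor_matrix(A, i, j)) for j in range(n)] for i in range(n)]
    return [[C[j][i] for j in range(n)] for i in range(n)]   # transpose of C

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def inverse_via_adjugate(A):
    """A^{-1} = adj(A) / det(A), valid when det(A) != 0."""
    d = det(A)
    if d == 0:
        raise ValueError("matrix is singular (det = 0)")
    return [[Fraction(x) / d for x in row] for row in adjugate(A)]

A = [[1, 2], [3, 4]]
print(adjugate(A))            # [[4, -2], [-3, 1]]
print(det(A))                 # -2
print(matmul(A, adjugate(A))) # [[-2, 0], [0, -2]], i.e. det(A) * I
```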

Pseudoinverse

In mathematics, and in particular in algebra, a generalized inverse (or g-inverse) of an element *x* is an element *y* that has some properties of an inverse element but not necessarily all of them. The purpose of constructing a generalized inverse of a matrix is to obtain a matrix that can serve as an inverse in some sense for a wider class of matrices than invertible matrices. Generalized inverses can be defined in any mathematical structure that involves associative multiplication, that is, in a semigroup. The following describes generalized inverses of a matrix $A$.

A matrix $A^\mathrm{g} \in \mathbb{R}^{n \times m}$ is a generalized inverse of a matrix $A \in \mathbb{R}^{m \times n}$ if $AA^\mathrm{g}A = A$. A generalized inverse exists for an arbitrary matrix, and when a matrix has a regular inverse, this inverse is its unique generalized inverse.

Motivation

Consider the linear system
$$Ax = y,$$
where $A$ is an $n \times m$ matrix and $y \in \mathcal{R}(A)$, the column space of $A$. If $A$ is nonsingular (which implies ...
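The defining condition $AGA = A$ is easy to check, and it already explains the motivation: if $y \in \mathcal{R}(A)$, say $y = Az$, then $A(Gy) = AGAz = Az = y$. The sketch below only *verifies* candidate matrices; it does not compute the Moore–Penrose pseudoinverse or any other canonical g-inverse. The rank-1 example and the candidates `G1`, `G2` are hypothetical choices, which also show that a singular matrix can have more than one generalized inverse.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product of an m x k matrix X and a k x n matrix Y (lists of rows)."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def matvec(M, v):
    """Matrix-vector product Mv."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def is_generalized_inverse(A, G):
    """Defining condition of a generalized inverse: A G A == A."""
    return matmul(matmul(A, G), A) == A

A = [[1, 2],
     [2, 4]]                              # rank 1, so no ordinary inverse exists
G1 = [[1, 0], [0, 0]]                     # hypothetical candidates, checked below
G2 = [[0, 0], [0, Fraction(1, 4)]]
print(is_generalized_inverse(A, G1), is_generalized_inverse(A, G2))   # True True

# For y in the column space R(A), x = G y solves A x = y.
y = matvec(A, [1, 1])                     # [3, 6], a combination of A's columns
x = matvec(G1, y)                         # [3, 0]
print(matvec(A, x) == y)                  # True

# For y outside R(A) the system has no solution, so G y cannot be one.
print(matvec(A, matvec(G1, [1, 0])) == [1, 0])   # False
```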

Invertible Matrix

In linear algebra, an $n$-by-$n$ square matrix $A$ is called invertible (also nonsingular or nondegenerate) if there exists an $n$-by-$n$ square matrix $B$ such that
$$AB = BA = I_n,$$
where $I_n$ denotes the $n$-by-$n$ identity matrix and the multiplication used is ordinary matrix multiplication. If this is the case, then the matrix $B$ is uniquely determined by $A$, and is called the (multiplicative) *inverse* of $A$, denoted by $A^{-1}$. Matrix inversion is the process of finding the matrix $B$ that satisfies the prior equation for a given invertible matrix $A$.

A square matrix that is *not* invertible is called singular or degenerate. A square matrix is singular if and only if its determinant is zero. Singular matrices are rare in the sense that if a square matrix's entries are randomly selected from any finite region on the number line or complex plane, the probability that the matrix is singular is 0; that is, it will "almost never" be singular. Non-square matrices ($m$-by-$n$ matrices for which $m \ne n$) do not have ...
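One standard way to carry out matrix inversion is Gauss–Jordan elimination on the augmented matrix $[A \mid I_n]$; it appears in the outline but is not named in this paragraph, so treat the choice as an assumption. In this sketch (helper names hypothetical, exact `Fraction` arithmetic), failing to find a nonzero pivot signals that $A$ is singular.

```python
from fractions import Fraction

def invert(A):
    """Inverse of a square matrix by Gauss-Jordan elimination on [A | I_n].
    Raises ValueError when A is singular (no nonzero pivot can be found)."""
    n = len(A)
    if any(len(row) != n for row in A):
        raise ValueError("only square matrices can be invertible")
    M = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(A)]
    for col in range(n):
        # Pick any row at or below `col` with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular")
        M[col], M[pivot] = M[pivot], M[col]
        p = M[col][col]
        M[col] = [x / p for x in M[col]]          # scale so the pivot becomes 1
        for r in range(n):
            if r != col and M[r][col] != 0:       # clear the rest of the column
                f = M[r][col]
                M[r] = [a - f * b for a, b in zip(M[r], M[col])]
    return [row[n:] for row in M]                 # right half now holds A^{-1}

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

A = [[2, 1], [1, 1]]
B = invert(A)              # [[1, -1], [-1, 2]] as Fractions
I2 = [[1, 0], [0, 1]]
print(matmul(A, B) == I2 and matmul(B, A) == I2)   # True: AB = BA = I_2
```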

Rank (Matrix Theory)

In linear algebra, the rank of a matrix $A$ is the dimension of the vector space generated (or spanned) by its columns. This corresponds to the maximal number of linearly independent columns of $A$. This, in turn, is identical to the dimension of the vector space spanned by its rows. Rank is thus a measure of the "nondegenerateness" of the system of linear equations and linear transformation encoded by $A$. There are multiple equivalent definitions of rank. A matrix's rank is one of its most fundamental characteristics.

The rank is commonly denoted by $\operatorname{rank}(A)$ or $\operatorname{rk}(A)$; sometimes the parentheses are not written, as in $\operatorname{rank} A$. (Alternative notation includes $\rho(\Phi)$.)

Main definitions

In this section, we give some definitions of the rank of a matrix. Many definitions are possible; see Alternative definitions for several of these.

The column rank of $A$ is the dimension of the column space of $A$, while the row rank of $A$ is the dimension of the row space of $A$. A fundamental result in ...
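A common way to compute rank is Gaussian elimination (listed in the outline, but not named in this section, so take it as an assumed method): the number of pivots left after forward elimination equals the rank. In this minimal sketch with hypothetical helper names, comparing `rank(A)` with `rank(transpose(A))` illustrates the statement above that the column space and row space have the same dimension.

```python
from fractions import Fraction

def transpose(A):
    return [list(col) for col in zip(*A)]

def rank(A):
    """Rank of A as the number of pivots left by Gaussian elimination (exact arithmetic)."""
    M = [[Fraction(x) for x in row] for row in A]
    rows = len(M)
    cols = len(M[0]) if rows else 0
    r = 0                                   # pivots found so far
    for c in range(cols):
        if r == rows:
            break                           # every row already has a pivot
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue                        # column c adds no new pivot
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, rows):        # zero out entries below the pivot
            f = M[i][c] / M[r][c]
            M[i] = [a - f * b for a, b in zip(M[i], M[r])]
        r += 1
    return r

A = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9]]
print(rank(A), rank(transpose(A)))   # 2 2
```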