
Optimality Criterion

In statistics, an optimality criterion provides a measure of the fit of the data to a given hypothesis, to aid in model selection. A model is designated as the "best" of the candidate models if it gives the best value of an objective function measuring the degree to which the criterion used to evaluate the alternative hypotheses is satisfied. The term has been used to identify the different criteria that are used to evaluate a phylogenetic tree. For example, to determine which of two phylogenetic tree topologies is better under the maximum likelihood optimality criterion, one would calculate the maximum likelihood score of each tree and choose the one with the better score. However, different optimality criteria can select different hypotheses, so caution should be exercised before drawing strong conclusions. Many other disciplines use similar criteria or have specific measures geared toward the objectives of the field. Optimality criteria include maximum l ...
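The warning that different criteria can select different hypotheses can be made concrete with a small sketch. The data, the two constant-valued candidate "hypotheses", and the function names below are hypothetical, chosen only for illustration: one outlying observation is enough for a squared-error criterion and an absolute-error criterion to prefer different candidates.

```python
# Hypothetical observations containing one large outlier.
data = [1.0, 2.0, 3.0, 4.0, 100.0]

# Two hypothetical candidate hypotheses, each predicting one constant value.
candidates = {"median_model": 3.0, "mean_model": 22.0}


def sum_squared_residuals(prediction, ys):
    """Criterion 1: sum of squared residuals (lower is better)."""
    return sum((y - prediction) ** 2 for y in ys)


def sum_absolute_residuals(prediction, ys):
    """Criterion 2: sum of absolute residuals (lower is better)."""
    return sum(abs(y - prediction) for y in ys)


def best_candidate(criterion, models, ys):
    """Return the name of the candidate with the lowest criterion score."""
    return min(models, key=lambda name: criterion(models[name], ys))


best_by_squares = best_candidate(sum_squared_residuals, candidates, data)
best_by_absolute = best_candidate(sum_absolute_residuals, candidates, data)
print(best_by_squares)   # mean_model   (7610 vs 9415)
print(best_by_absolute)  # median_model (101 vs 156)
```

Both candidates are scored on the same data; only the criterion changes, and with it the "best" model.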

Statistics

Statistics (from German ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects, such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006). ''The Oxford Dictionary of Statistical Terms''. Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

Hypothesis

A hypothesis (plural hypotheses) is a proposed explanation for a phenomenon. For a hypothesis to be a scientific hypothesis, the scientific method requires that one can test it. Scientists generally base scientific hypotheses on previous observations that cannot satisfactorily be explained with the available scientific theories. Even though the words "hypothesis" and "theory" are often used interchangeably, a scientific hypothesis is not the same as a scientific theory. A working hypothesis is a provisionally accepted hypothesis proposed for further research, in a process beginning with an educated guess or thought. A different meaning of the term ''hypothesis'' is used in formal logic, to denote the antecedent of a proposition; thus in the proposition "If ''P'', then ''Q''", ''P'' denotes the hypothesis (or antecedent) and ''Q'' is called the consequent. ''P'' is the assumption in a (possibly counterfactual) ''What If'' question. The adjective ''hypothetical'', meaning "hav ...

Model Selection

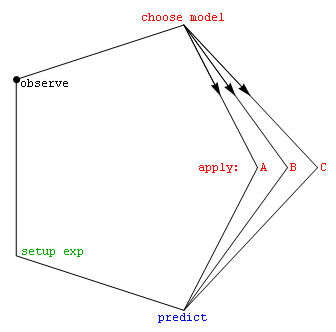

Model selection is the task of selecting a statistical model from a set of candidate models, given data. In the simplest cases, a pre-existing set of data is considered. However, the task can also involve the design of experiments such that the data collected are well suited to the problem of model selection. Given candidate models of similar predictive or explanatory power, the simplest model is most likely to be the best choice (Occam's razor). It has been stated that "The majority of the problems in statistical inference can be considered to be problems related to statistical modeling". Relatedly, it has been said that "How [the] translation from subject-matter problem to statistical model is done is often the most critical part of an analysis". Model selection may also refer to the problem of selecting a few representative models from a large set of computational models for the purpose of decision making or optimization under uncertainty. In its most basic forms, model selection is one ...
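One common way to encode the Occam's-razor preference is to penalize extra parameters. The sketch below uses the Akaike information criterion (AIC), a criterion not named in the text above and swapped in here as an example, to choose between two hypothetical candidates: a constant model and a straight line. It assumes independent Gaussian errors, in which case AIC reduces (up to a constant shared by all candidates) to n·ln(RSS/n) + 2k.

```python
import math


def fit_constant(xs, ys):
    """Candidate 1: y = c. The least-squares estimate of c is the sample mean."""
    c = sum(ys) / len(ys)
    return [c] * len(xs), 1  # fitted values, number of mean parameters


def fit_line(xs, ys):
    """Candidate 2: y = a + b*x, fitted by ordinary least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return [a + b * x for x in xs], 2


def gaussian_aic(ys, fitted, n_mean_params):
    """AIC for a least-squares fit with i.i.d. Gaussian errors, up to an additive
    constant: n*ln(RSS/n) + 2k, where k counts the mean parameters plus one
    for the error variance."""
    n = len(ys)
    rss = sum((y - f) ** 2 for y, f in zip(ys, fitted))
    k = n_mean_params + 1
    return n * math.log(rss / n) + 2 * k


def select_model(xs, ys):
    """Return the name of the candidate with the lowest AIC."""
    scores = {}
    for name, fit in (("constant", fit_constant), ("linear", fit_line)):
        fitted, p = fit(xs, ys)
        scores[name] = gaussian_aic(ys, fitted, p)
    return min(scores, key=scores.get)


xs = list(range(8))
trend_ys = [1.1, 2.9, 5.2, 6.8, 9.1, 11.0, 12.8, 15.2]  # hypothetical, clearly sloped
flat_ys = [5.0, 5.1, 4.9, 5.0, 5.1, 4.9, 5.0, 5.0]      # hypothetical, essentially flat
print(select_model(xs, trend_ys), select_model(xs, flat_ys))
```

The line always fits at least as well as the constant, but on the flat data the improvement in RSS is too small to pay for its extra parameter, so the simpler model is selected.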

Statistical Model

A statistical model is a mathematical model that embodies a set of statistical assumptions concerning the generation of sample data (and similar data from a larger population). A statistical model represents, often in considerably idealized form, the data-generating process. A statistical model is usually specified as a mathematical relationship between one or more random variables and other non-random variables. As such, a statistical model is "a formal representation of a theory" (Herman Adèr quoting Kenneth Bollen). All statistical hypothesis tests and all statistical estimators are derived via statistical models. More generally, statistical models are part of the foundation of statistical inference. Informally, a statistical model can be thought of as a statistical assumption (or set of statistical assumptions) with a certain property: that ...
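As an illustration of a model as a "data-generating process", the sketch below writes down one simple, hypothetical model: a straight line plus independent Gaussian noise, where the x values are fixed (non-random) and the responses are random. The function name and parameters are illustrative assumptions, not taken from the text.

```python
import random


def simulate_linear_gaussian(xs, intercept, slope, sigma, seed=None):
    """Draw one sample from the model Y_i = intercept + slope * x_i + eps_i,
    with eps_i ~ Normal(0, sigma**2) independent across i.
    The x_i are non-random; the Y_i are random variables."""
    rng = random.Random(seed)  # a local generator so results are reproducible
    return [intercept + slope * x + rng.gauss(0.0, sigma) for x in xs]


xs = [0, 1, 2, 3, 4]
sample_a = simulate_linear_gaussian(xs, intercept=1.0, slope=2.0, sigma=0.5, seed=1)
sample_b = simulate_linear_gaussian(xs, intercept=1.0, slope=2.0, sigma=0.5, seed=2)
```

The two samples differ, yet they come from the same model; statistical inference works in the opposite direction, using an observed sample to learn about `intercept`, `slope`, and `sigma`.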

Objective Function

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy. In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economics, for example, this is ...
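The relation between a loss function and its "opposite" can be checked directly: minimizing a loss and maximizing its negation select the same point. The sketch below is a minimal illustration under assumed choices (squared loss, a constant estimate, hypothetical observations, and a coarse grid of candidate values).

```python
def squared_loss(estimate, true_value):
    """Loss: squared difference between an estimate and a value."""
    return (estimate - true_value) ** 2


def average_loss(estimate, observations):
    """Empirical loss of a constant estimate, averaged over observed values."""
    return sum(squared_loss(estimate, y) for y in observations) / len(observations)


def utility(estimate, observations):
    """Objective to maximize: the opposite (negative) of the loss."""
    return -average_loss(estimate, observations)


observations = [2.0, 4.0, 9.0]            # hypothetical data
grid = [i / 10 for i in range(0, 101)]    # candidate estimates 0.0, 0.1, ..., 10.0

best_by_min_loss = min(grid, key=lambda c: average_loss(c, observations))
best_by_max_utility = max(grid, key=lambda c: utility(c, observations))
print(best_by_min_loss, best_by_max_utility)
```

Under squared loss the best constant is the sample mean (here 5.0), whichever of the two equivalent formulations is used.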

Phylogenetic Tree

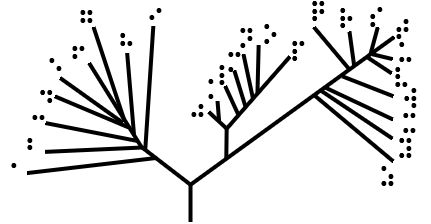

A phylogenetic tree (also phylogeny or evolutionary tree; Felsenstein, J. (2004). ''Inferring Phylogenies''. Sinauer Associates: Sunderland, MA) is a branching diagram, or tree, showing the evolutionary relationships among various biological species or other entities based upon similarities and differences in their physical or genetic characteristics. All life on Earth is part of a single phylogenetic tree, indicating common ancestry. In a ''rooted'' phylogenetic tree, each node with descendants represents the inferred most recent common ancestor of those descendants, and the edge lengths in some trees may be interpreted as time estimates. Each node is called a taxonomic unit. Internal nodes are generally called hypothetical taxonomic units, as they cannot be directly observed. Trees are useful in fields of biology such as bioinformatics, systematics, and phylogenetics. ''Unrooted'' trees illustrate only the relatedness of the leaf nodes and do not require the ancestral root to b ...
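A rooted tree of this kind can be sketched as a small recursive data structure: leaves are observed taxa, internal nodes are hypothetical taxonomic units, and each edge may carry a length. The class, the helper functions, and the choice of the Newick text format for output are illustrative assumptions; the taxa `A`, `B`, `C` and their branch lengths are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A taxonomic unit. Leaves are observed taxa; internal nodes are
    hypothetical taxonomic units (inferred common ancestors)."""
    name: Optional[str] = None
    children: List["Node"] = field(default_factory=list)
    branch_length: Optional[float] = None  # e.g. a time estimate, if available

    def is_leaf(self) -> bool:
        return not self.children


def leaves(node: Node) -> List[str]:
    """Names of the observed taxa below this node, left to right."""
    if node.is_leaf():
        return [node.name]
    return [name for child in node.children for name in leaves(child)]


def count_internal(node: Node) -> int:
    """Number of hypothetical taxonomic units (internal nodes), root included."""
    if node.is_leaf():
        return 0
    return 1 + sum(count_internal(child) for child in node.children)


def to_newick(node: Node) -> str:
    """Serialize the tree in Newick format, e.g. ((A,B),C);"""
    def rec(n: Node) -> str:
        label = n.name or ""
        if n.children:
            label = "(" + ",".join(rec(c) for c in n.children) + ")" + label
        if n.branch_length is not None:
            label += f":{n.branch_length}"
        return label
    return rec(node) + ";"


# Rooted tree in which A and B share a more recent common ancestor than either shares with C.
ab = Node(children=[Node("A", branch_length=0.1), Node("B", branch_length=0.2)], branch_length=0.5)
root = Node(children=[ab, Node("C", branch_length=0.7)])
print(to_newick(root))  # ((A:0.1,B:0.2):0.5,C:0.7);
```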

Maximum Likelihood

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data is most probable. The point in the parameter space that maximizes the likelihood function is called the maximum likelihood estimate. The logic of maximum likelihood is both intuitive and flexible, and as such the method has become a dominant means of statistical inference. If the likelihood function is differentiable, the derivative test for finding maxima can be applied. In some cases, the first-order conditions of the likelihood function can be solved analytically; for instance, the ordinary least squares estimator for a linear regression model maximizes the likelihood when ...
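A minimal worked case, assuming independent Bernoulli trials (a model chosen here for illustration): with k successes in n trials the log-likelihood is ℓ(p) = k·ln p + (n − k)·ln(1 − p). Setting dℓ/dp = k/p − (n − k)/(1 − p) = 0 gives the analytic estimate p̂ = k/n, which the sketch below compares against a brute-force numerical maximization on a grid.

```python
import math


def bernoulli_log_likelihood(p, successes, trials):
    """log L(p) = k*ln(p) + (n - k)*ln(1 - p) for k successes in n trials."""
    return successes * math.log(p) + (trials - successes) * math.log(1 - p)


def mle_grid(successes, trials, steps=1000):
    """Numerically maximize the log-likelihood over a grid of p in (0, 1)."""
    grid = [i / steps for i in range(1, steps)]  # excludes 0 and 1, where log is undefined
    return max(grid, key=lambda p: bernoulli_log_likelihood(p, successes, trials))


k, n = 7, 20                 # hypothetical data: 7 successes in 20 trials
analytic = k / n             # solution of the first-order condition
numeric = mle_grid(k, n)     # grid search over the same likelihood
print(analytic, numeric)
```

The grid search stands in for the numerical optimizers used when no closed-form solution exists; here both routes agree to within the grid resolution.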

Bayesian Probability

Bayesian probability is an interpretation of the concept of probability in which, instead of the frequency or propensity of some phenomenon, probability is interpreted as reasonable expectation representing a state of knowledge or as quantification of a personal belief. The Bayesian interpretation of probability can be seen as an extension of propositional logic that enables reasoning with hypotheses; that is, with propositions whose truth or falsity is unknown. In the Bayesian view, a probability is assigned to a hypothesis, whereas under frequentist inference, a hypothesis is typically tested without being assigned a probability. Bayesian probability belongs to the category of evidential probabilities; to evaluate the probability of a hypothesis, the Bayesian probabilist specifies a prior probability. This, in turn, is then updated to a posterior probability in the light of new, re ...
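The prior-to-posterior update can be sketched with Bayes' theorem over a finite set of hypotheses. The two coin hypotheses, the 50/50 prior, and the observed counts below are all hypothetical.

```python
def posterior(priors, likelihoods):
    """Bayes' theorem over a finite set of hypotheses:
    P(H | D) = P(D | H) * P(H) / sum over H' of P(D | H') * P(H')."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    evidence = sum(unnormalized.values())
    return {h: weight / evidence for h, weight in unnormalized.items()}


def coin_likelihood(p_heads, heads, tails):
    """Probability of one particular sequence with the given numbers of heads and tails."""
    return p_heads ** heads * (1 - p_heads) ** tails


# Hypothetical question: is the coin fair (p = 0.5) or biased toward heads (p = 0.8)?
hypotheses = {"fair": 0.5, "biased": 0.8}
prior = {"fair": 0.5, "biased": 0.5}
heads, tails = 8, 2
likelihood = {h: coin_likelihood(p, heads, tails) for h, p in hypotheses.items()}
post = posterior(prior, likelihood)
print(post)
```

Each hypothesis receives a probability, and the data shift belief toward "biased" (posterior of roughly 0.87) without either hypothesis being rejected outright.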

Maximum Parsimony

In phylogenetics, maximum parsimony is an optimality criterion under which the phylogenetic tree that minimizes the total number of character-state changes (or minimizes the cost of differentially weighted character-state changes) is preferred. Under the maximum-parsimony criterion, the optimal tree will minimize the amount of homoplasy (i.e., convergent evolution, parallel evolution, and evolutionary reversals). In other words, under this criterion, the shortest possible tree that explains the data is considered best. Some of the basic ideas behind maximum parsimony were presented by James S. Farris in 1970 and Walter M. Fitch in 1971. Maximum parsimony is an intuitive and simple criterion, and it is popular for this reason. However, although it is easy to ''score'' a phylogenetic tree (by counting the number of character-state changes), there is no algorithm to quickly ''generate'' the most-parsimonious tree. Instead, the most-parsimonious tree must be sought in "tree space" ...
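Scoring a fixed tree really is cheap. The sketch below uses Fitch's small-parsimony algorithm for unweighted characters on a rooted binary tree, one standard way to count the minimum number of state changes (the text names Fitch but not this specific procedure). Trees are written as nested tuples of taxon names; the four taxa and their two-site sequences are hypothetical.

```python
from typing import Dict, Set, Tuple, Union

# A rooted binary tree: a leaf is a taxon name; an internal node is a pair of subtrees.
Tree = Union[str, Tuple["Tree", "Tree"]]


def fitch_site(tree: Tree, states: Dict[str, str]) -> Tuple[Set[str], int]:
    """Fitch's bottom-up pass for one character. Returns the candidate state set
    at this node and the minimum number of changes in the subtree below it."""
    if isinstance(tree, str):
        return {states[tree]}, 0
    left_set, left_cost = fitch_site(tree[0], states)
    right_set, right_cost = fitch_site(tree[1], states)
    common = left_set & right_set
    if common:
        return common, left_cost + right_cost
    # Disjoint child sets: one extra state change is required at this node.
    return left_set | right_set, left_cost + right_cost + 1


def parsimony_score(tree: Tree, alignment: Dict[str, str]) -> int:
    """Tree length: total minimum number of character-state changes over all sites."""
    n_sites = len(next(iter(alignment.values())))
    total = 0
    for i in range(n_sites):
        column = {taxon: seq[i] for taxon, seq in alignment.items()}
        _, cost = fitch_site(tree, column)
        total += cost
    return total


alignment = {"A": "AC", "B": "AC", "C": "GT", "D": "GC"}  # hypothetical sequences
tree_1 = (("A", "B"), ("C", "D"))
tree_2 = (("A", "C"), ("B", "D"))
print(parsimony_score(tree_1, alignment), parsimony_score(tree_2, alignment))  # 2 3
```

Under maximum parsimony `tree_1` is preferred because it is shorter. Scoring is linear in the size of the tree; the hard part, as the text notes, is searching the enormous space of possible trees.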

Sum of Squared Residuals

In statistics, the residual sum of squares (RSS), also known as the sum of squared estimate of errors (SSE), is the sum of the squares of residuals (deviations of predicted values from actual empirical values of data). It is a measure of the discrepancy between the data and an estimation model, such as a linear regression. A small RSS indicates a tight fit of the model to the data. It is used as an optimality criterion in parameter selection and model selection. For a linear model fitted by ordinary least squares with an intercept term, total sum of squares = explained sum of squares + residual sum of squares. For a proof of this in the multivariate ordinary least squares (OLS) case, see partitioning in the general OLS model. In a model with a single explanatory variable, RSS is given by:

:\operatorname{RSS} = \sum_{i=1}^{n} \left(y_i - f(x_i)\right)^2

where y_i is the ''i''th value of the variable to be predicted, x_i is the ''i''th value of the explanatory variable, and f(x_i) is the predicted value of y_i (also terme ...
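A short sketch, assuming a straight-line model fitted by closed-form ordinary least squares to hypothetical data, computes RSS from the formula above and checks the decomposition TSS = ESS + RSS. It also confirms that an arbitrary alternative line (here y = 2x, chosen for illustration) has a larger RSS than the least-squares line.

```python
def ols_line(xs, ys):
    """Closed-form ordinary least squares fit of y = a + b*x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


def rss(ys, fitted):
    """Residual sum of squares: sum of (y_i - f(x_i))**2."""
    return sum((y - f) ** 2 for y, f in zip(ys, fitted))


def sums_of_squares(xs, ys):
    """Return (TSS, ESS, RSS) for the OLS line through the data."""
    a, b = ols_line(xs, ys)
    fitted = [a + b * x for x in xs]
    my = sum(ys) / len(ys)
    tss = sum((y - my) ** 2 for y in ys)      # total sum of squares
    ess = sum((f - my) ** 2 for f in fitted)  # explained sum of squares
    return tss, ess, rss(ys, fitted)


xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]  # hypothetical data
tss, ess, rss_ols = sums_of_squares(xs, ys)
rss_other = rss(ys, [2.0 * x for x in xs])  # an arbitrary competing line, y = 2x
print(tss, ess + rss_ols, rss_ols, rss_other)
```

The decomposition relies on the fit being OLS with an intercept; for an arbitrary line such as y = 2x it generally does not hold.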

Least Absolute Deviations

Least absolute deviations (LAD), also known as least absolute errors (LAE), least absolute residuals (LAR), or least absolute values (LAV), is a statistical optimality criterion and a statistical optimization technique based on minimizing the ''sum of absolute deviations'' (sum of absolute residuals or sum of absolute errors), or the L1 norm of such values. It is analogous to the least squares technique, except that it is based on ''absolute values'' instead of squared values. It attempts to find a function which closely approximates a set of data by minimizing residuals between points generated by the function and corresponding data points. The LAD estimate also arises as the maximum likelihood estimate if the errors have a Laplace distribution. It was introduced in 1757 by Roger Joseph Boscovich. Suppose that the data set consists of the points (x_i, y_i) with ''i'' = 1, 2, ..., ''n''. We want to find a function ''f'' such that f(x_i) \approx y_i. ...
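For a straight line f(x) = a + bx, the LAD criterion is S(a, b) = Σ|y_i − (a + b·x_i)|. The minimal sketch below relies on a standard property of this problem: some optimal LAD line passes through at least two of the data points. It therefore tries the line through every pair of points with distinct x and keeps the best. This brute-force search is O(n³) and suits only small illustrative data sets; practical solvers typically use linear programming instead. The data, including the outlier, are hypothetical.

```python
from itertools import combinations


def sum_abs_residuals(a, b, xs, ys):
    """LAD criterion S(a, b) = sum of |y_i - (a + b*x_i)|."""
    return sum(abs(y - (a + b * x)) for x, y in zip(xs, ys))


def lad_line(xs, ys):
    """Fit y = a + b*x by least absolute deviations, checking the line through
    every pair of points with distinct x. Returns (a, b, best_score)."""
    best = None
    for i, j in combinations(range(len(xs)), 2):
        if xs[i] == xs[j]:
            continue  # a vertical line is not of the form y = a + b*x
        b = (ys[j] - ys[i]) / (xs[j] - xs[i])
        a = ys[i] - b * xs[i]
        score = sum_abs_residuals(a, b, xs, ys)
        if best is None or score < best[2]:
            best = (a, b, score)
    return best


xs = [0, 1, 2, 3, 4]
ys = [1, 3, 5, 7, 100]  # hypothetical: y = 2x + 1 except for one outlier
a, b, score = lad_line(xs, ys)
print(a, b, score)  # 1.0 2.0 91.0
```

The LAD line follows the four points on y = 2x + 1 and absorbs the outlier in a single residual, whereas a least-squares fit would be pulled toward it.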

Statistical Hypothesis Testing

A statistical hypothesis test is a method of statistical inference used to decide whether the data at hand sufficiently support a particular hypothesis. Hypothesis testing allows us to make probabilistic statements about population parameters. While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth. Modern significance testing is largely the product of Karl Pearson (''p''-value, Pearson's chi-squared test), William Sealy Gosset (Student's t-distribution), and Ronald Fisher ("null hypothesis", analysis of variance, "significance test"), while hypothesis testing was developed by Jerzy Neyman and Egon Pearson (son of Karl). Ronald Fisher began his life in statistics as a Bayesian (Zabell 1992), but Fisher soon grew disenchanted with t ...
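The sex-ratio analyses mentioned above can be framed as a test of whether male and female births are equally likely. A minimal sketch of that kind of calculation is an exact one-sided binomial (sign) test: under the null hypothesis p = p0, the p-value is the probability of observing at least as many "successes" as were seen. The function name and the counts used below are hypothetical.

```python
from math import comb


def binomial_test_greater(successes, trials, p0=0.5):
    """Exact one-sided binomial test of H0: p = p0 against H1: p > p0.
    Returns the p-value P(X >= successes) for X ~ Binomial(trials, p0)."""
    return sum(
        comb(trials, k) * p0 ** k * (1 - p0) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical record: in 15 of 20 years, male births exceeded female births.
p_value = binomial_test_greater(15, 20)
print(p_value)  # 21700 / 2**20, about 0.0207
```

If every one of n independent trials is a success, the p-value reduces to p0 raised to the power n, which shrinks rapidly as n grows.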