
Nullspace Property

In compressed sensing, the nullspace property gives necessary and sufficient conditions for the reconstruction of sparse signals using $\ell_1$-relaxation. The term "nullspace property" originates from Cohen, Dahmen, and DeVore. The nullspace property is often difficult to check in practice, and the restricted isometry property is a more modern condition in the field of compressed sensing.

The technique of $\ell_1$-relaxation

The non-convex $\ell_0$-minimization problem, $\min_x \|x\|_0$ subject to $Ax = b$, is a standard problem in compressed sensing. However, $\ell_0$-minimization is known to be NP-hard in general. As such, the technique of $\ell_1$-relaxation is sometimes employed to circumvent the difficulties of signal reconstruction using the $\ell_0$-norm. In $\ell_1$-relaxation, the ...
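As a rough illustration of why the property is tied to the kernel of $A$, the sketch below checks it in the simplest possible setting. It assumes the standard statement of the nullspace property of order $s$ (for every nonzero $\eta \in \ker(A)$ and every index set $S$ with $|S| \le s$, $\|\eta_S\|_1 < \|\eta_{S^c}\|_1$), which the excerpt above does not spell out, and it assumes that $\ker(A)$ is spanned by a single vector. The function name `satisfies_nsp_1d` is hypothetical.

```python
def satisfies_nsp_1d(kernel_vector, s):
    """Check the nullspace property of order s when ker(A) is one-dimensional.

    Assumed statement: for every nonzero eta in ker(A) and every index set S
    with |S| <= s, ||eta_S||_1 < ||eta_{S^c}||_1.
    """
    magnitudes = sorted((abs(v) for v in kernel_vector), reverse=True)
    if not any(magnitudes):
        raise ValueError("kernel_vector must be nonzero")
    # The worst-case S collects the s largest magnitudes. Rescaling eta
    # multiplies both sides by the same factor, so one spanning vector suffices.
    head = sum(magnitudes[:s])
    tail = sum(magnitudes[s:])
    return head < tail


# A = [[1, 0, -1], [0, 1, -1]] has kernel spanned by (1, 1, 1).
print(satisfies_nsp_1d([1, 1, 1], 1))
print(satisfies_nsp_1d([1, 1, 1], 2))
```

For kernels of higher dimension the condition must hold for infinitely many directions at once, which is one reason the property is hard to verify in practice.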

Compressed Sensing

Compressed sensing (also known as compressive sensing, compressive sampling, or sparse sampling) is a signal processing technique for efficiently acquiring and reconstructing a signal by finding solutions to underdetermined linear systems. It rests on the principle that, through optimization, the sparsity of a signal can be exploited to recover it from far fewer samples than the Nyquist–Shannon sampling theorem requires. Recovery is possible under two conditions. The first is sparsity, which requires the signal to be sparse in some domain. The second is incoherence, which is applied through the restricted isometry property and is sufficient for sparse signals.

Overview

A common goal of the engineering field of signal processing is to reconstruct a signal from a series of sampling measurements. In general, this task is impossible because there is no way to reconstruct a signal during the times that the signa ...

Kernel (Linear Algebra)

In mathematics, the kernel of a linear map, also known as the null space or nullspace, is the linear subspace of the domain of the map which is mapped to the zero vector. That is, given a linear map $L : V \to W$ between two vector spaces $V$ and $W$, the kernel of $L$ is the vector space of all elements $\mathbf{v}$ of $V$ such that $L(\mathbf{v}) = \mathbf{0}$, where $\mathbf{0}$ denotes the zero vector in $W$, or more symbolically:

$$\ker(L) = \{\mathbf{v} \in V \mid L(\mathbf{v}) = \mathbf{0}\}.$$

Properties

The kernel of $L$ is a linear subspace of the domain $V$. In the linear map $L : V \to W$, two elements of $V$ have the same image in $W$ if and only if their difference lies in the kernel of $L$, that is,

$$L(\mathbf{v}_1) = L(\mathbf{v}_2) \quad \text{if and only if} \quad L(\mathbf{v}_1 - \mathbf{v}_2) = \mathbf{0}.$$

From this, it follows that the image of $L$ is isomorphic to the quotient of $V$ by the ...
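For a map given by a matrix, the kernel can be computed by row reduction. The following is a minimal sketch using exact rational arithmetic from the Python standard library; the function name `null_space_basis` and the list-of-rows input format are assumptions made for this illustration, and numerical libraries typically use the singular value decomposition instead.

```python
from fractions import Fraction


def null_space_basis(matrix):
    """Return a basis of ker(A) for a nonempty matrix given as a list of rows."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_cols = len(rows[0])
    pivot_cols = []
    r = 0
    # Bring the matrix to reduced row echelon form.
    for c in range(n_cols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot here: column c is a free column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        pivot_value = rows[r][c]
        rows[r] = [x / pivot_value for x in rows[r]]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivot_cols.append(c)
        r += 1
        if r == len(rows):
            break
    # One basis vector per free column: set it to 1 and solve for the pivots.
    basis = []
    for free in (c for c in range(n_cols) if c not in pivot_cols):
        vec = [Fraction(0)] * n_cols
        vec[free] = Fraction(1)
        for row_idx, pivot_col in enumerate(pivot_cols):
            vec[pivot_col] = -rows[row_idx][free]
        basis.append(vec)
    return basis


A = [[1, 0, -1], [0, 1, -1]]
for v in null_space_basis(A):
    # Every basis vector is mapped to the zero vector.
    assert all(sum(a * x for a, x in zip(row, v)) == 0 for row in A)
    print([str(x) for x in v])
```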

Relaxation (approximation)

In mathematical optimization and related fields, relaxation is a modeling strategy. A relaxation is an approximation of a difficult problem by a nearby problem that is easier to solve. A solution of the relaxed problem provides information about the original problem. For example, a linear programming relaxation of an integer programming problem removes the integrality constraint and so allows non-integer rational solutions. A Lagrangian relaxation of a complicated problem in combinatorial optimization penalizes violations of some constraints, allowing an easier relaxed problem to be solved. Relaxation techniques complement or supplement branch and bound algorithms of combinatorial optimization; linear programming and Lagrangian relaxations are used to obtain bounds in branch-and-bound algorithms for integer programming. The modeling strategy of relaxation should not be confused with iterative methods of relaxation, such as successive over-relaxation (SOR); iterative methods of ...

Restricted Isometry Property

In linear algebra, the restricted isometry property (RIP) characterizes matrices which are nearly orthonormal, at least when operating on sparse vectors. The concept was introduced by Emmanuel Candès and Terence Tao (E. J. Candès and T. Tao, "Decoding by Linear Programming," IEEE Trans. Inf. Th., 51(12): 4203–4215, 2005) and is used to prove many theorems in the field of compressed sensing. There are no known large matrices with bounded restricted isometry constants (computing these constants is strongly NP-hard, and is hard to approximate as well), but the constants of many random matrices have been shown to remain bounded. In particular, it has been shown that, with exponentially high probability, random Gaussian, Bernoulli, and partial Fourier matrices satisfy the RIP with a number of measurements nearly linear in the sparsity level. The current smallest upper bounds for any large rectangular matrices are those for Gaussian matrices. Web forms to evaluate bounds for the Gaussian ensemble ar ...
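To make the cost of computing these constants concrete, here is a brute-force sketch for sparsity level 2 only. It assumes the usual definition, which the excerpt does not state: $\delta_s$ is the smallest $\delta$ with $(1-\delta)\|x\|_2^2 \le \|Ax\|_2^2 \le (1+\delta)\|x\|_2^2$ for all $s$-sparse $x$. The function name `restricted_isometry_constant_2` is hypothetical, and the closed-form eigenvalues of a $2 \times 2$ Gram matrix stand in for a general eigenvalue solver.

```python
import math
from itertools import combinations


def restricted_isometry_constant_2(matrix):
    """Brute-force delta_2 for a matrix given as a list of rows (>= 2 columns)."""
    columns = list(zip(*matrix))

    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    delta = 0.0
    # Every pair of columns is inspected, so the work grows with C(n, 2);
    # for general s it grows with C(n, s).
    for i, j in combinations(range(len(columns)), 2):
        g11 = dot(columns[i], columns[i])
        g22 = dot(columns[j], columns[j])
        g12 = dot(columns[i], columns[j])
        # Eigenvalues of the Gram matrix [[g11, g12], [g12, g22]].
        mean = (g11 + g22) / 2
        radius = math.hypot((g11 - g22) / 2, g12)
        delta = max(delta, abs(mean + radius - 1), abs(mean - radius - 1))
    return delta


s = 1 / math.sqrt(2)
A = [[1.0, 0.0, s],
     [0.0, 1.0, s]]
print(restricted_isometry_constant_2(A))  # close to 1/sqrt(2)
```

The example matrix has unit-norm columns, so the constant is driven entirely by the largest inner product between two distinct columns.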

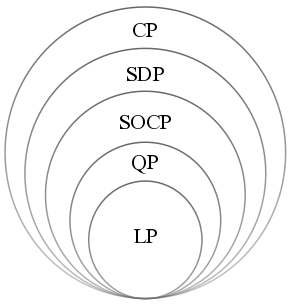

Convex Optimization

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets (or, equivalently, maximizing concave functions over convex sets). Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard. Convex optimization has applications in a wide range of disciplines, such as automatic control systems, estimation and signal processing, communications and networks, electronic circuit design, data analysis and modeling, finance, statistics (optimal experimental design), and structural optimization, where the approximation concept has proven to be efficient. With recent advancements in computing and optimization algorithms, convex programming is nearly as straightforward as linear programming.

Definition

A convex optimization problem is an optimization problem in which the objective function is a convex function and the feasible set is a c ...

NP-hardness

In computational complexity theory, NP-hardness (non-deterministic polynomial-time hardness) is the defining property of a class of problems that are informally "at least as hard as the hardest problems in NP". A simple example of an NP-hard problem is the subset sum problem. A more precise specification is: a problem H is NP-hard when every problem L in NP can be reduced in polynomial time to H; that is, assuming a solution for H takes 1 unit time, H's solution can be used to solve L in polynomial time. As a consequence, finding a polynomial-time algorithm to solve any NP-hard problem would give polynomial-time algorithms for all the problems in NP. Since it is suspected that P≠NP, it is unlikely that such an algorithm exists, although this has not been proven. Moreover, the class P, in which all problems can be solved in polynomial time, is contained in the class NP. Defi ...
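The subset sum problem mentioned above can be sketched as an exhaustive search. This is only an illustration of the exponential search space (up to $2^n$ subsets for $n$ numbers), not an efficient algorithm; the function name `subset_sum` is chosen for this example.

```python
from itertools import combinations


def subset_sum(numbers, target):
    """Return a subset of `numbers` that sums to `target`, or None if none exists."""
    # Try subsets of increasing size; in the worst case all 2**n are visited.
    for size in range(len(numbers) + 1):
        for subset in combinations(numbers, size):
            if sum(subset) == target:
                return list(subset)
    return None


print(subset_sum([3, 34, 4, 12, 5, 2], 9))    # → [4, 5]
print(subset_sum([3, 34, 4], 100))            # → None
```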

Linear Programming

Linear programming (LP), also called linear optimization, is a method to achieve the best outcome (such as maximum profit or lowest cost) in a mathematical model whose requirements are represented by linear relationships. Linear programming is a special case of mathematical programming (also known as mathematical optimization). More formally, linear programming is a technique for the optimization of a linear objective function, subject to linear equality and linear inequality constraints. Its feasible region is a convex polytope, which is a set defined as the intersection of finitely many half spaces, each of which is defined by a linear inequality. Its objective function is a real-valued affine (linear) function defined on this polyhedron. A linear programming algorithm finds a point in the polytope where ...
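The geometric picture (a linear objective over an intersection of half spaces) can be illustrated in two variables by enumerating the vertices of the feasible polygon. This is a deliberately naive sketch, not the simplex method or any production solver; it assumes integer coefficients and a nonempty, bounded feasible region, and the function name `solve_lp_2d` is hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def solve_lp_2d(objective, constraints):
    """Maximize objective . (x, y) subject to a*x + b*y <= c for each (a, b, c).

    Assumes integer coefficients and a nonempty bounded feasible polygon.
    """
    best_point, best_value = None, None
    for (a1, b1, c1), (a2, b2, c2) in combinations(constraints, 2):
        det = a1 * b2 - a2 * b1
        if det == 0:
            continue  # parallel boundary lines do not meet in a single point
        # Cramer's rule for the intersection of the two boundary lines.
        x = Fraction(c1 * b2 - c2 * b1, det)
        y = Fraction(a1 * c2 - a2 * c1, det)
        # Keep the intersection only if it satisfies every constraint.
        if all(a * x + b * y <= c for a, b, c in constraints):
            value = objective[0] * x + objective[1] * y
            if best_value is None or value > best_value:
                best_point, best_value = (x, y), value
    return best_point, best_value


# Maximize 3x + 2y subject to x + y <= 4, x + 3y <= 6, x <= 3, x >= 0, y >= 0.
constraints = [(1, 1, 4), (1, 3, 6), (1, 0, 3), (-1, 0, 0), (0, -1, 0)]
point, value = solve_lp_2d((3, 2), constraints)
print(point, value)
```

For this example the search returns the vertex $(3, 1)$ with objective value 11.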

Triangle Inequality

In mathematics, the triangle inequality states that for any triangle, the sum of the lengths of any two sides must be greater than or equal to the length of the remaining side. This statement permits the inclusion of degenerate triangles, but some authors, especially those writing about elementary geometry, exclude this possibility, thus leaving out the possibility of equality. If $x$, $y$, and $z$ are the lengths of the sides of the triangle, with no side being greater than $z$, then the triangle inequality states that

$$z \leq x + y,$$

with equality only in the degenerate case of a triangle with zero area. In Euclidean geometry and some other geometries, the triangle inequality is a theorem about distances, and it is written using vectors and vector lengths (norms):

$$\|\mathbf{x} + \mathbf{y}\| \leq \|\mathbf{x}\| + \|\mathbf{y}\|,$$

where the length $z$ of the third side has been replaced by the vector sum $\mathbf{x} + \mathbf{y}$. When $x$ and $y$ are real numbers, they can be viewed as vectors in $\mathbb{R}^1$, and the trian ...
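As a quick numerical check of the vector form, the sketch below evaluates both sides for the $\ell_1$ and $\ell_2$ norms. The helper `p_norm` and the sample vectors are chosen for this illustration only, and a single example is of course not a proof.

```python
def p_norm(vector, p):
    """Return the l_p norm of a vector for p >= 1."""
    return sum(abs(v) ** p for v in vector) ** (1 / p)


x = [3.0, -4.0]
y = [1.0, 2.0]
total = [a + b for a, b in zip(x, y)]

for p in (1, 2):
    lhs = p_norm(total, p)
    rhs = p_norm(x, p) + p_norm(y, p)
    print(f"p={p}: ||x+y|| = {lhs:.4f} <= ||x|| + ||y|| = {rhs:.4f}")
```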