
Naum Z. Shor

Naum Zuselevich Shor (Russian: Наум Зуселевич Шор; 1 January 1937 – 26 February 2006) was a Soviet and Ukrainian mathematician specializing in optimization. He made significant contributions to nonlinear and stochastic programming, numerical techniques for non-smooth optimization, discrete optimization problems, matrix optimization, and dual quadratic bounds in multi-extremal programming problems. Shor became a full member of the National Academy of Sciences of Ukraine in 1998.

Subgradient methods. N. Z. Shor is well known for his method of generalized gradient descent with space dilation in the direction of the difference of two successive subgradients (the so-called r-algorithm), which was created in collaboration with Nikolay G. Zhurbenko. The ellipsoid method was re-invigorated by A. S. Nemirovsky and D. B. Yudin, who developed a careful complexity analysis of its approximation properties for problems of convex minimization with real data. However, it ...

National Academy Of Sciences Of Ukraine

The National Academy of Sciences of Ukraine (NASU; Ukrainian: Національна академія наук України, Natsional’na akademiya nauk Ukrayiny, abbr. NAN Ukraine) is a self-governing, state-funded organization in Ukraine that is the main center for the development of science and technology, coordinating a system of research institutes in the country. It is the main research-oriented organization, alongside five other academies in Ukraine that specialize in various scientific disciplines. NAS Ukraine consists of numerous departments, sections, research institutes, scientific centers and various other supporting scientific organizations. The Academy reports annually to the Cabinet of Ministers of Ukraine. The presidium of the academy is located at vulytsia Volodymyrska, 57, across the street from the Building of the Pedagogical Museum, which hosted the Central Council during the independence period of 1917–18. In 1919–1991 it was a repu ...

Rate Of Convergence

In numerical analysis, the order of convergence and the rate of convergence of a convergent sequence are quantities that represent how quickly the sequence approaches its limit. A sequence $(x_n)$ that converges to $x^*$ is said to have order of convergence $q \geq 1$ and rate of convergence $\mu$ if

$$\lim_{n \to \infty} \frac{|x_{n+1} - x^*|}{|x_n - x^*|^q} = \mu.$$

The rate of convergence $\mu$ is also called the asymptotic error constant. Note that this terminology is not standardized, and some authors use "rate" where this article uses "order". In practice, the rate and order of convergence provide useful insights when using iterative methods for calculating numerical approximations. If the order of convergence is higher, then typically fewer iterations are necessary to yield a useful approximation. Strictly speaking, however, the asymptotic behavior of a sequence does not give conclusive information about any finite part of the sequence. Similar concepts are used for discretization methods. The solutio ...
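
As a rough illustration of the definition above, the order $q$ can be estimated numerically from three consecutive errors, since $\log(e_{n+1}/e_n) / \log(e_n/e_{n-1})$ tends to $q$ for well-behaved sequences. The sketch below is an assumption-laden example, not part of the source: the helper names `estimate_order` and `newton_sqrt2` are hypothetical, and Newton's iteration for $\sqrt{2}$ is chosen only because its order is known to be 2.

```python
import math


def estimate_order(xs, limit):
    """Estimate the order q from consecutive errors e_n = |x_n - limit|."""
    errs = [abs(x - limit) for x in xs]
    estimates = []
    for e0, e1, e2 in zip(errs, errs[1:], errs[2:]):
        if min(e0, e1, e2) == 0.0:
            break  # error hit machine zero; the logarithms are undefined
        estimates.append(math.log(e2 / e1) / math.log(e1 / e0))
    return estimates


def newton_sqrt2(x0, steps):
    """Newton's iteration for x^2 - 2 = 0 (illustrative test sequence)."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - (x * x - 2.0) / (2.0 * x))
    return xs


xs = newton_sqrt2(1.0, 4)
orders = estimate_order(xs, math.sqrt(2.0))
print(orders)  # the later estimates should approach 2 (quadratic convergence)
```

Only the later estimates are meaningful, because the definition is asymptotic; the first few values can be far from $q$.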

Linear Programming

Linear programming (LP), also called linear optimization, is a method to achieve the best outcome (such as maximum profit or lowest cost) in a mathematical model whose requirements are represented by linear relationships. Linear programming is a special case of mathematical programming (also known as mathematical optimization). More formally, linear programming is a technique for the optimization of a linear objective function, subject to linear equality and linear inequality constraints. Its feasible region is a convex polytope, which is a set defined as the intersection of finitely many half-spaces, each of which is defined by a linear inequality. Its objective function is a real-valued affine (linear) function defined on this polyhedron. A linear programming algorithm finds a point in the polytope where ...
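
The geometric picture above (a linear objective over an intersection of half-spaces) can be sketched for two variables by brute force: intersect every pair of constraint boundaries, keep the feasible intersection points, and take the best one. This is only an illustration under the assumption that the feasible region is bounded and non-empty; it is not a practical LP algorithm, and the function name `solve_lp_2d` and the sample problem are hypothetical.

```python
from itertools import combinations


def solve_lp_2d(c, A, b, eps=1e-9):
    """Maximize c.x subject to A x <= b in two variables by vertex enumeration.

    Assumes a bounded, non-empty feasible region. Returns (value, (x, y)),
    or None if no feasible vertex is found.
    """
    best = None
    for i, j in combinations(range(len(A)), 2):
        (a1, a2), (a3, a4) = A[i], A[j]
        det = a1 * a4 - a2 * a3
        if abs(det) < eps:
            continue  # parallel boundaries: no unique intersection point
        # Cramer's rule for the 2x2 system formed by the two boundaries.
        x = (b[i] * a4 - a2 * b[j]) / det
        y = (a1 * b[j] - a3 * b[i]) / det
        feasible = all(r[0] * x + r[1] * y <= bk + eps for r, bk in zip(A, b))
        if feasible:
            val = c[0] * x + c[1] * y
            if best is None or val > best[0]:
                best = (val, (x, y))
    return best


# Illustrative problem: maximize 3x + 2y
# subject to x + y <= 4, x + 3y <= 6, x <= 3, x >= 0, y >= 0.
A = [(1, 1), (1, 3), (1, 0), (-1, 0), (0, -1)]
b = [4, 6, 3, 0, 0]
result = solve_lp_2d((3, 2), A, b)
print(result)
```

The enumeration examines every pair of constraints, so its cost grows quickly with the number of constraints and variables; it is shown only to make the vertex structure of the polytope concrete.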

Algorithm

In mathematics and computer science, an algorithm is a finite sequence of rigorous instructions, typically used to solve a class of specific problems or to perform a computation. Algorithms are used as specifications for performing calculations and data processing. More advanced algorithms can perform automated deductions (referred to as automated reasoning) and use mathematical and logical tests to divert the code execution through various routes (referred to as automated decision-making). Using human characteristics as descriptors of machines in metaphorical ways was already practiced by Alan Turing with terms such as "memory", "search" and "stimulus". In contrast, a heuristic is an approach to problem solving that may not be fully specified or may not guarantee correct or optimal results, especially in problem domains where there is no well-defined correct or optimal result. As an effective method, an algorithm ca ...

Leonid Khachiyan

Leonid Genrikhovich Khachiyan (Russian: Леони́д Ге́нрихович Хачия́н; May 3, 1952 – April 29, 2005) was a Soviet and American mathematician and computer scientist. He was most famous for his ellipsoid algorithm (1979) for linear programming, which was the first such algorithm known to have a polynomial running time. Even though this algorithm was shown to be impractical, it has inspired other randomized algorithms for convex programming and is considered a significant theoretical breakthrough.

Early life and education. Khachiyan was born on May 3, 1952, in Leningrad to Armenian parents Genrikh Borisovich Khachiyan, a mathematician and professor of theoretical mechanics, and Zhanna Saakovna Khachiyan, a civil engineer. His grandparents were Karabakh Armenians. He had two brothers: Boris and Yevgeniy (Eugene). His family moved to Moscow in 1961, when he was nine. He received a master's degree from the Moscow Institute of Physics and Technology. In 1978 he ear ...

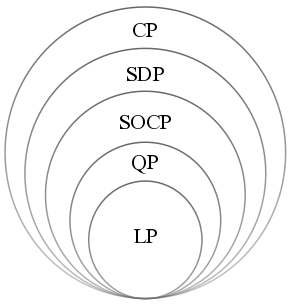

Convex Minimization

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets (or, equivalently, maximizing concave functions over convex sets). Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard. Convex optimization has applications in a wide range of disciplines, such as automatic control systems, estimation and signal processing, communications and networks, electronic circuit design, data analysis and modeling, finance, statistics (optimal experimental design), and structural optimization, where the approximation concept has proven to be efficient. With recent advancements in computing and optimization algorithms, convex programming is nearly as straightforward as linear programming.

Definition. A convex optimization problem is an optimization problem in which the objective function is a convex function and the feasible se ...

Approximation Algorithm

In computer science and operations research, approximation algorithms are efficient algorithms that find approximate solutions to optimization problems (in particular NP-hard problems) with provable guarantees on the distance of the returned solution to the optimal one. Approximation algorithms naturally arise in the field of theoretical computer science as a consequence of the widely believed P ≠ NP conjecture. Under this conjecture, a wide class of optimization problems cannot be solved exactly in polynomial time. The field of approximation algorithms, therefore, tries to understand how closely it is possible to approximate optimal solutions to such problems in polynomial time. In an overwhelming majority of cases, the guarantee of such algorithms is a multiplicative one, expressed as an approximation ratio or approximation factor, i.e., the optimal solution is always guaranteed to be within a (predetermined) multiplicative factor of the returned solution. However, there are ...
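
To make the idea of a multiplicative guarantee concrete, here is a minimal sketch of a classic textbook example that is not taken from the source: the matching-based 2-approximation for minimum vertex cover. The function name `vertex_cover_2approx` and the sample graph are hypothetical. The returned cover is never more than twice the size of an optimal cover, because the edges that trigger an addition share no endpoints and any cover must contain at least one endpoint of each of them.

```python
def vertex_cover_2approx(edges):
    """Return a vertex cover at most twice the optimal size.

    Scans the edges once; whenever an edge is still uncovered,
    both of its endpoints are added to the cover.
    """
    cover = set()
    for u, v in edges:
        if u not in cover and v not in cover:
            cover.update((u, v))
    return cover


# Path graph 0 - 1 - 2 - 3; an optimal cover is {1, 2} (size 2).
edges = [(0, 1), (1, 2), (2, 3)]
cover = vertex_cover_2approx(edges)
print(sorted(cover))
```

On this path the sketch returns all four vertices, which shows that the factor of 2 can actually be attained.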

Analysis Of Algorithms

In computer science, the analysis of algorithms is the process of finding the computational complexity of algorithms: the amount of time, storage, or other resources needed to execute them. Usually, this involves determining a function that relates the size of an algorithm's input to the number of steps it takes (its time complexity) or the number of storage locations it uses (its space complexity). An algorithm is said to be efficient when this function's values are small, or grow slowly compared to the growth in the size of the input. Different inputs of the same size may cause the algorithm to behave differently, so best-case, worst-case and average-case descriptions might all be of practical interest. When not otherwise specified, the function describing the performance of an algorithm is usually an upper bound, determined from the worst-case inputs to the algorithm. The term "analysis of algorithms" was coined by Donald Knuth. Algorithm analysis is an important part of a broader ...
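
The relationship between input size and step count can be observed directly by instrumenting two simple searches. The sketch below is an illustration only; the function names are hypothetical, and "steps" here means loop iterations, which is one of several reasonable ways to count work. Searching for a value larger than every element serves as a worst-case input for both routines.

```python
def linear_search_steps(items, target):
    """Count loop iterations of a linear scan for target."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count loop iterations of binary search on a sorted list."""
    lo, hi, steps = 0, len(items) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


n = 1024
data = list(range(n))
missing = n  # larger than every element: a worst case for both searches
print(linear_search_steps(data, missing), binary_search_steps(data, missing))
```

For this input the linear scan takes a number of steps proportional to n, while binary search takes a number proportional to log n.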

Ellipsoid Method

In mathematical optimization, the ellipsoid method is an iterative method for minimizing convex functions. When specialized to solving feasible linear optimization problems with rational data, the ellipsoid method is an algorithm which finds an optimal solution in a number of steps that is polynomial in the input size. The ellipsoid method generates a sequence of ellipsoids whose volume uniformly decreases at every step, thus enclosing a minimizer of a convex function.

History. The ellipsoid method has a long history. As an iterative method, a preliminary version was introduced by Naum Z. Shor. In 1972, an approximation algorithm for real convex minimization was studied by Arkadi Nemirovski and David B. Yudin (Judin). As an algorithm for solving linear programming problems with rational data, the ellipsoid algorithm was studied by Leonid Khachiyan; Khachiyan's achievement was to prove the polynomial-time solvability of li ...
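
The shrinking-ellipsoid idea described above can be sketched with the standard central-cut update for unconstrained convex minimization. This is a minimal sketch under stated assumptions, not the historical formulation of Shor, Nemirovski and Yudin, or Khachiyan: it assumes dimension n >= 2, a minimizer inside the initial ball, and access to a subgradient oracle. The names `ellipsoid_minimize`, `f` and `subgrad` are hypothetical. The ellipsoid is stored as a center `c` and a matrix `P`, representing the set of x with (x - c)^T P^{-1} (x - c) <= 1.

```python
import math


def ellipsoid_minimize(f, subgrad, center, radius, iters=200):
    """Central-cut ellipsoid method (sketch); requires len(center) >= 2."""
    n = len(center)
    c = list(center)
    # Start from a ball of the given radius: P = radius^2 * I.
    P = [[radius ** 2 if i == j else 0.0 for j in range(n)] for i in range(n)]
    best_x, best_f = list(c), f(c)
    for _ in range(iters):
        g = subgrad(c)
        Pg = [sum(P[i][j] * g[j] for j in range(n)) for i in range(n)]
        gPg = sum(g[i] * Pg[i] for i in range(n))
        if gPg <= 0.0:
            break  # zero subgradient (c is optimal) or P numerically degenerate
        s = math.sqrt(gPg)
        b = [v / s for v in Pg]  # P g / sqrt(g^T P g)
        # Move the center into the half-space that still contains a minimizer.
        c = [c[i] - b[i] / (n + 1) for i in range(n)]
        coef = n * n / (n * n - 1.0)
        P = [[coef * (P[i][j] - 2.0 / (n + 1) * b[i] * b[j]) for j in range(n)]
             for i in range(n)]
        fc = f(c)
        if fc < best_f:
            best_x, best_f = list(c), fc
    return best_x, best_f


def f(x):
    """Illustrative non-smooth convex function with minimizer (1, -0.5)."""
    return abs(x[0] - 1.0) + 2.0 * abs(x[1] + 0.5)


def subgrad(x):
    """One valid subgradient of f at x (0 is used at the kinks)."""
    sign = lambda t: (t > 0) - (t < 0)
    return [float(sign(x[0] - 1.0)), 2.0 * sign(x[1] + 0.5)]


best_x, best_f = ellipsoid_minimize(f, subgrad, [0.0, 0.0], radius=5.0)
print(best_x, best_f)
```

The best point seen so far is tracked separately because the objective value at the ellipsoid center need not decrease monotonically, even though the ellipsoid volume does.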

Quasi-Newton Method

Quasi-Newton methods are methods used to find either zeros or local maxima and minima of functions, as an alternative to Newton's method. They can be used if the Jacobian or Hessian is unavailable or is too expensive to compute at every iteration. The "full" Newton's method requires the Jacobian in order to search for zeros, or the Hessian for finding extrema.

Search for zeros: root finding. Newton's method to find zeros of a function $g$ of multiple variables is given by

$$x_{n+1} = x_n - [J_g(x_n)]^{-1} g(x_n),$$

where $[J_g(x_n)]^{-1}$ is the left inverse of the Jacobian matrix $J_g(x_n)$ of $g$ evaluated at $x_n$. Strictly speaking, any method that replaces the exact Jacobian $J_g(x_n)$ with an approximation is a quasi-Newton method. For instance, the chord method (where $J_g(x_n)$ is replaced by $J_g(x_0)$ for all iterations) is a simple example. The methods given below for optimization refer to an important subclass of quasi-Newton methods, secant methods. Using methods developed to find extrema in order to fi ...
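
The chord method mentioned above is simple enough to sketch for a system of two equations: the Jacobian is evaluated once at the starting point and reused in every step. The example below is a sketch under assumptions; the names `chord_method` and `solve2x2` and the test system are hypothetical, and convergence is only expected when the starting point is close enough to a root.

```python
import math


def solve2x2(A, rhs):
    """Solve a 2x2 linear system A d = rhs by Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    if det == 0.0:
        raise ValueError("singular Jacobian")
    d0 = (rhs[0] * A[1][1] - A[0][1] * rhs[1]) / det
    d1 = (A[0][0] * rhs[1] - A[1][0] * rhs[0]) / det
    return [d0, d1]


def chord_method(g, jacobian, x0, iters=50, tol=1e-12):
    """Newton-like iteration that freezes the Jacobian at x0."""
    J0 = jacobian(x0)  # evaluated once, never updated
    x = list(x0)
    for _ in range(iters):
        gx = g(x)
        if max(abs(v) for v in gx) < tol:
            break
        d = solve2x2(J0, gx)
        x = [x[0] - d[0], x[1] - d[1]]
    return x


def g(x):
    """Illustrative system: circle of radius 2 intersected with the line x = y."""
    return [x[0] ** 2 + x[1] ** 2 - 4.0, x[0] - x[1]]


def jacobian(x):
    """Exact Jacobian of g; the chord method only calls this at x0."""
    return [[2.0 * x[0], 2.0 * x[1]], [1.0, -1.0]]


root = chord_method(g, jacobian, [1.5, 1.5])
print(root)  # expected to be close to (sqrt(2), sqrt(2))
```

Because the Jacobian is never refreshed, each step is cheap, but convergence is typically only linear rather than the quadratic convergence of the full Newton's method.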