
Neural Machine Translation

Neural machine translation (NMT) is an approach to machine translation that uses an artificial neural network to predict the likelihood of a sequence of words, typically modeling entire sentences in a single integrated model. It is the dominant approach today and can produce translations that rival human translations when translating between high-resource languages under specific conditions. However, challenges remain, especially for languages with less high-quality data available, and with domain shift between the data a system was trained on and the texts it is supposed to translate. NMT systems also tend to produce fairly literal translations.

In the translation task, a sentence $\mathbf{x} = x_1, \ldots, x_I$ (consisting of $I$ tokens $x_i$) in the source language is to be translated into a sentence $\mathbf{y} = y_1, \ldots, y_J$ (consisting of $J$ tokens $y_j$) in the target language. The source and target tokens (which in the simple event are used for each other ...
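The phrase "predict the likelihood of a sequence of words" can be illustrated by the chain rule. The score of a whole target sentence given a source sentence is the sum of the log-probabilities of each target token given the source and the tokens before it. The sketch below rests on one assumption: `TOY_MODEL` and `next_token_probs` are hypothetical stand-ins for the neural network, given as a fixed lookup table for a single source sentence. Greedy decoding is shown only as the simplest search strategy.

```python
import math

# Hypothetical toy "model" for ONE fixed source sentence: maps the target prefix
# produced so far to a distribution over the next target token. A real NMT
# system computes these probabilities with a neural network instead.
TOY_MODEL = {
    (): {"the": 0.7, "a": 0.3},
    ("the",): {"cat": 0.8, "dog": 0.2},
    ("the", "cat"): {"sleeps": 0.9, "</s>": 0.1},
    ("the", "cat", "sleeps"): {"</s>": 1.0},
    ("a",): {"cat": 0.5, "dog": 0.5},
}


def next_token_probs(source, prefix):
    """Return p(y_j | y_<j, x); the toy table ignores `source`."""
    return TOY_MODEL.get(tuple(prefix), {"</s>": 1.0})


def sequence_log_prob(source, target):
    """Chain rule: log P(y | x) = sum over j of log p(y_j | y_1..y_{j-1}, x)."""
    total = 0.0
    for j, token in enumerate(target):
        total += math.log(next_token_probs(source, target[:j])[token])
    return total


def greedy_decode(source, max_len=10):
    """Append the single most likely next token until </s> (or max_len)."""
    output = []
    for _ in range(max_len):
        probs = next_token_probs(source, output)
        token = max(probs, key=probs.get)
        output.append(token)
        if token == "</s>":
            break
    return output


translation = greedy_decode("die Katze schläft")
score = sequence_log_prob("die Katze schläft", translation)
```

Greedy decoding is not guaranteed to find the highest-scoring sentence, which is why practical systems commonly use beam search instead.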

Machine Translation

Machine translation is the use of computational techniques to translate text or speech from one language to another, including the contextual, idiomatic and pragmatic nuances of both languages. Early approaches were mostly rule-based or statistical. These methods have since been superseded by neural machine translation and large language models.

The origins of machine translation can be traced back to the work of Al-Kindi, a ninth-century Arabic cryptographer who developed techniques for systematic language translation, including cryptanalysis, frequency analysis, and probability and statistics, which are used in modern machine translation. The idea of machine translation later appeared in the 17th century. In 1629, René Descartes proposed a universal language, with equivalent ideas in different tongues sharing one symbol. The idea of using digital computers for translation of natural languages was proposed as early as 1947 by England's A. D. Booth and Warr ...

CNN Encoder

Cable News Network (CNN) is a multinational news organization operating, most notably, a website and a TV channel headquartered in Atlanta. Founded in 1980 by American media proprietor Ted Turner and Reese Schonfeld as a 24-hour cable news channel, and presently owned by the Manhattan-based media conglomerate Warner Bros. Discovery (WBD), CNN was the first television channel to provide 24-hour news coverage and the first all-news television channel in the United States. As of December 2023, CNN had 68,974,000 television households as subscribers in the United States, according to Nielsen, down from 80 million in March 2021. In June 2021, CNN ranked third in viewership among cable news networks, behind Fox News and MSNBC, averaging 580,000 viewers throughout the day, down 49% from a year earlier, amid sharp declines in viewers across all cable news networks. CNN ranked 14th among all basic cable networks in 2019, then jumped to 7th during a major surge for the thre ...

GPT-4

Generative Pre-trained Transformer 4 (GPT-4) is a multimodal large language model developed by OpenAI and the fourth in its series of GPT foundation models. It was launched on March 14, 2023, and was made publicly available via the paid chatbot product ChatGPT Plus (until being replaced in 2025), via OpenAI's API, and via the free chatbot Microsoft Copilot. GPT-4 is more capable than its predecessor, GPT-3.5. GPT-4 Vision (GPT-4V) is a version of GPT-4 that can process images in addition to text. OpenAI has not revealed technical details and statistics about GPT-4, such as the precise size of the model.

As a transformer-based model, GPT-4 uses a paradigm in which pre-training on both public data and "data licensed from third-party providers" is used to predict the next token. The model was then fine-tuned with reinforcement learning from human and AI feedback for human alignment and policy compliance.

OpenAI introduced the fir ...

Zero-shot Learning

Zero-shot learning (ZSL) is a problem setup in deep learning where, at test time, a learner observes samples from classes which were not observed during training, and needs to predict the class that they belong to. The name is a play on words based on the earlier concept of one-shot learning, in which classification can be learned from only one, or a few, examples. Zero-shot methods generally work by associating observed and non-observed classes through some form of auxiliary information, which encodes observable distinguishing properties of objects. For example, given a set of images of animals to be classified, along with auxiliary textual descriptions of what animals look like, an artificial intelligence model which has been trained to recognize horses, but has never been given a zebra, can still recognize a zebra when it also knows that zebras look like striped horses. This problem is widely studied in computer vision, natural language processing, and machine perception. ...
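The horse/zebra example can be sketched as attribute-based classification. Every class, including the unseen one, is described by a vector of auxiliary attributes. An input is assigned to the class whose description best matches the attributes predicted for it.

This is a minimal sketch under several assumptions:
- The attribute names are hypothetical.
- The "tiger" class is an invented seen class from which the "striped" attribute could have been learned.
- `predicted_attributes` stands for the output of an attribute predictor trained only on seen classes. That predictor is not shown.

```python
import math

# Hypothetical attribute space: (striped, horse_shaped, cat_shaped).
# Auxiliary descriptions exist for every class, including the unseen "zebra".
CLASS_ATTRIBUTES = {
    "horse": (0.0, 1.0, 0.0),  # seen during training
    "tiger": (1.0, 0.0, 1.0),  # seen during training (assumed source of "striped")
    "zebra": (1.0, 1.0, 0.0),  # never seen: known only as "a striped horse"
}


def classify(predicted_attributes, class_attributes=CLASS_ATTRIBUTES):
    """Return the class whose attribute description is nearest (Euclidean distance)."""
    return min(
        class_attributes,
        key=lambda name: math.dist(predicted_attributes, class_attributes[name]),
    )


# An image that a (hypothetical) attribute predictor scores as striped and horse-shaped:
label = classify((0.9, 0.8, 0.1))
```

Euclidean distance is just one simple matching rule. Cosine similarity or a learned compatibility function would fit the same structure.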

GPT-3

Generative Pre-trained Transformer 3 (GPT-3) is a large language model released by OpenAI in 2020. Like its predecessor, GPT-2, it is a decoder-only transformer deep neural network, which supersedes recurrence- and convolution-based architectures with a technique known as "attention". This attention mechanism allows the model to focus selectively on the segments of input text it predicts to be most relevant. GPT-3 has 175 billion parameters, each with 16-bit precision, requiring 350 GB of storage since each parameter occupies 2 bytes. It has a context window size of 2048 tokens and has demonstrated strong "zero-shot" and "few-shot" learning abilities on many tasks. On September 22, 2020, Microsoft announced that it had licensed GPT-3 exclusively. Others can still receive output from its public API, but only Microsoft has access to the underlying model.

According to The Economist, improved algorithms, more powerful computers, and a recent increase in the ...
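The 350 GB figure follows directly from the parameter count and precision: 175 × 10^9 parameters × 16 bits ÷ 8 bits per byte = 350 × 10^9 bytes. The helper below is a hypothetical illustration that reproduces the arithmetic. It counts the weights only, not optimizer state or activations.

```python
def weight_storage_bytes(n_params: int, bits_per_param: int) -> int:
    """Bytes needed to store n_params weights at the given bit precision (weights only)."""
    return n_params * bits_per_param // 8


gpt3_bytes = weight_storage_bytes(175_000_000_000, 16)
print(gpt3_bytes / 1e9)    # decimal gigabytes: 350.0
print(gpt3_bytes / 2**30)  # binary gibibytes, roughly 326
```

The "GB" in the text is the decimal unit. Expressed in binary gibibytes, the same storage is about 326 GiB.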

Generative Model

In statistical classification, two main approaches are called the generative approach and the discriminative approach. These compute classifiers by different approaches, differing in the degree of statistical modelling. Terminology is inconsistent, but three major types can be distinguished:

1. A generative model is a statistical model of the joint probability distribution $P(X, Y)$ on a given observable variable $X$ and target variable $Y$. "Generative classifiers learn a model of the joint probability, $p(x, y)$, of the inputs $x$ and the label $y$, and make their predictions by using Bayes rules to calculate $p(y \mid x)$, and then picking the most likely label $y$." A generative model can be used to "generate" random instances (outcomes) of an observation $x$.
2. A discriminative model is a model of the conditional probability $P(Y \mid X = x)$ of the target $Y$, given an observation $x$. It can be used to "discriminate" the value of the target variable $Y$, given an ...
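A generative classifier predicts by applying Bayes' rule to a joint model and then taking the most likely label. Because it models the joint distribution, it can also sample new (x, y) pairs. The sketch below assumes a hypothetical, hand-written joint distribution over a weather observation and a label, standing in for a learned $P(X, Y)$. Exact fractions keep the posterior arithmetic readable.

```python
import random
from fractions import Fraction

# Hypothetical joint distribution P(x, y) over an observation x and a label y.
JOINT = {
    ("sunny", "play"): Fraction(3, 10),
    ("sunny", "stay"): Fraction(1, 10),
    ("rainy", "play"): Fraction(1, 10),
    ("rainy", "stay"): Fraction(5, 10),
}
LABELS = ("play", "stay")


def posterior(x, joint=JOINT, labels=LABELS):
    """Bayes' rule: p(y | x) = P(x, y) / sum over y' of P(x, y')."""
    evidence = sum(joint[(x, y)] for y in labels)
    return {y: joint[(x, y)] / evidence for y in labels}


def predict(x):
    """Generative classifier: pick the label with the highest p(y | x)."""
    post = posterior(x)
    return max(post, key=post.get)


def generate(n, seed=0):
    """Sample n (x, y) pairs from the joint: the 'generate' use of the model."""
    rng = random.Random(seed)
    pairs = list(JOINT)
    weights = [float(JOINT[p]) for p in pairs]
    return rng.choices(pairs, weights=weights, k=n)
```

For example, `posterior("sunny")` gives `play` a probability of 3/4, since (3/10) / (3/10 + 1/10) = 3/4. A discriminative model would instead fit $p(y \mid x)$ directly, so it has no `generate` counterpart.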

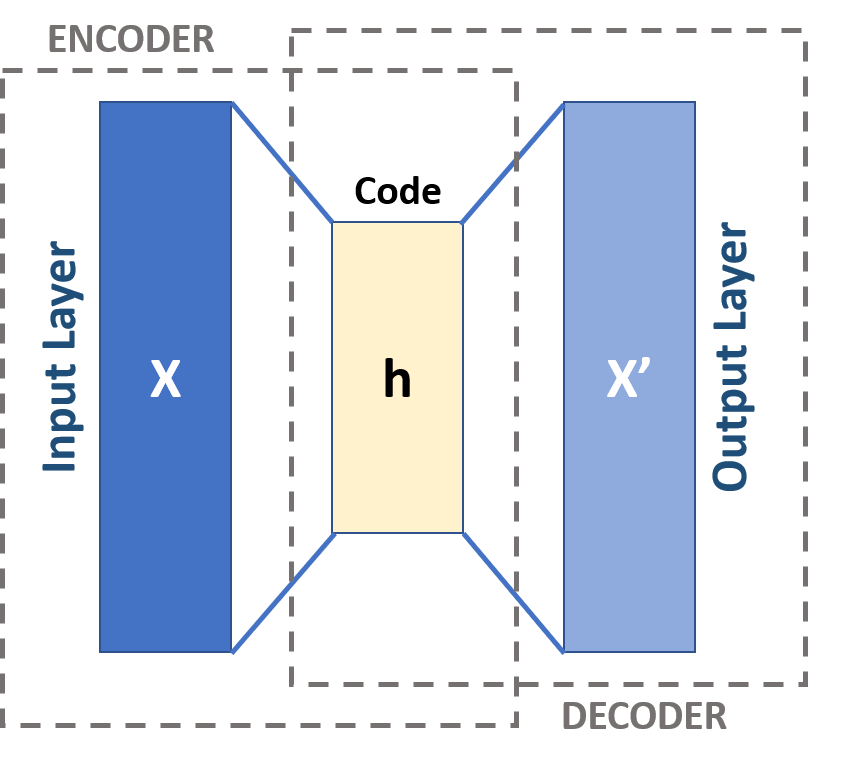

Autoencoder

An autoencoder is a type of artificial neural network used to learn efficient codings of unlabeled data (unsupervised learning). An autoencoder learns two functions: an encoding function that transforms the input data, and a decoding function that recreates the input data from the encoded representation. The autoencoder learns an efficient representation (encoding) for a set of data, typically for dimensionality reduction, to generate lower-dimensional embeddings for subsequent use by other machine learning algorithms. Variants exist which aim to make the learned representations assume useful properties. Examples are regularized autoencoders (sparse, denoising and contractive autoencoders), which are effective in learning representations for subsequent classification tasks, and variational autoencoders, which can be used as generative models. Autoencoders are applied to many problems, including facial recognition, feature detection, anomaly detection, and l ...
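The encoder/decoder pair and the idea of learning a lower-dimensional code can be sketched with the smallest possible autoencoder. It maps 2-D inputs to a 1-D code and back, and is trained by plain gradient descent on the squared reconstruction error.

This is a minimal sketch under stated assumptions:
- The model is linear, with no activation function.
- The encoder and decoder share ("tie") one weight vector `w`.
- The toy data are constructed to lie on a line, so a 1-D code can reconstruct them exactly.

Real autoencoders use nonlinear, multi-layer encoders and decoders.

```python
# Toy data (assumption): 2-D points on the line x2 = 2 * x1, so a 1-D code suffices.
DATA = [(t, 2 * t) for t in (-1.0, -0.5, 0.5, 1.0)]


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def encode(w, x):
    """Encoder: project the 2-D input onto w, giving a 1-D code."""
    return dot(w, x)


def decode(w, z):
    """Decoder (tied weights): map the 1-D code back to 2-D."""
    return [z * wi for wi in w]


def loss(w, data):
    """Mean squared reconstruction error."""
    total = 0.0
    for x in data:
        x_hat = decode(w, encode(w, x))
        total += sum((xi - hi) ** 2 for xi, hi in zip(x, x_hat))
    return total / len(data)


def train(data, w=(0.5, 0.1), lr=0.02, steps=2000):
    """Plain gradient descent on the reconstruction error."""
    w = list(w)
    for _ in range(steps):
        grad = [0.0, 0.0]
        for x in data:
            z = encode(w, x)
            r = [xi - z * wi for xi, wi in zip(x, w)]  # residual x - x_hat
            w_dot_r = dot(w, r)
            # gradient of ||x - (w.x) w||^2 is -2 * (x * (w.r) + (w.x) * r)
            for i in range(2):
                grad[i] += -2.0 * (x[i] * w_dot_r + z * r[i]) / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


w = train(DATA)
reconstruction = decode(w, encode(w, (3.0, 6.0)))  # a new point on the same line
```

With tied linear weights and squared error, the learned `w` ends up as a unit vector along the data's principal direction. This matches the known connection between linear autoencoders and principal component analysis.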

Natural Language Processing

Natural language processing (NLP) is a subfield of computer science and especially artificial intelligence. It is primarily concerned with providing computers with the ability to process data encoded in natural language and is thus closely related to information retrieval, knowledge representation and computational linguistics, a subfield of linguistics. Major tasks in natural language processing are speech recognition, text classification, natural language understanding, and natural language generation.

Natural language processing has its roots in the 1950s. In 1950, Alan Turing published an article titled "Computing Machinery and Intelligence" which proposed what is now called the Turing test as a criterion of intelligence, though at the time that was not articulated as a problem separate from artificial intelligence. The proposed test includes a task that involves the automated interpretation and generation of natural language ...

Fine-tuning (Deep Learning)

In deep learning, fine-tuning is an approach to transfer learning in which the parameters of a pre-trained neural network model are trained on new data. Fine-tuning can be done on the entire neural network, or on only a subset of its layers, in which case the layers that are not being fine-tuned are "frozen" (i.e., not changed during backpropagation). A model may also be augmented with "adapters" that consist of far fewer parameters than the original model, and fine-tuned in a parameter-efficient way by tuning the weights of the adapters and leaving the rest of the model's weights frozen. For some architectures, such as convolutional neural networks, it is common to keep the earlier layers (those closest to the input layer) frozen, as they capture lower-level features, while later layers often discern high-level features that can be more related to the task that the model is trained on. Models that are pre-trained on large, general corpora are usually fine-tuned by reusing their ...
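Freezing means that some parameters still take part in the forward pass but are skipped when gradient updates are applied. The sketch below uses a hypothetical two-"layer" scalar model, where `w1` plays the role of an earlier pre-trained layer and `w2` a later one. It freezes `w1` and fine-tunes only `w2` on a new task, assumed here to be y = 6x. The gradients are written out by hand instead of using a deep learning framework.

```python
# Hypothetical two-"layer" scalar model: h = w1 * x, y_hat = w2 * h.
def forward(params, x):
    return params["w2"] * (params["w1"] * x)


def gradients(params, xs, ys):
    """Gradients of the mean squared error with respect to w1 and w2."""
    g1 = g2 = 0.0
    for x, y in zip(xs, ys):
        err = forward(params, x) - y
        g1 += 2 * err * params["w2"] * x / len(xs)
        g2 += 2 * err * params["w1"] * x / len(xs)
    return {"w1": g1, "w2": g2}


def fine_tune(params, xs, ys, frozen=frozenset(), lr=0.01, steps=300):
    """Gradient descent that never updates parameters named in `frozen`."""
    params = dict(params)  # leave the pre-trained dict untouched
    for _ in range(steps):
        grads = gradients(params, xs, ys)
        for name, g in grads.items():
            if name not in frozen:  # frozen parameters are skipped
                params[name] -= lr * g
    return params


pretrained = {"w1": 2.0, "w2": 1.0}       # assumed result of pre-training
xs = [1.0, 2.0, 3.0]
ys = [6.0 * x for x in xs]                # new task (assumption): y = 6x
tuned = fine_tune(pretrained, xs, ys, frozen={"w1"})
```

Adapters follow the same pattern. Every original parameter goes into `frozen`, and only the small set of added adapter parameters is updated.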

BERT (Language Model)

Bidirectional encoder representations from transformers (BERT) is a language model introduced in October 2018 by researchers at Google. It learns to represent text as a sequence of vectors using self-supervised learning. It uses the encoder-only transformer architecture. BERT dramatically improved the state of the art for large language models. BERT is a ubiquitous baseline in natural language processing (NLP) experiments. BERT is trained by masked token prediction and next sentence prediction. As a result of this training process, BERT learns contextual, latent representations of tokens in their context, similar to ELMo and GPT-2. It found applications in many natural language processing tasks, such as coreference resolution and polysemy resolution. It is an evolutionary step over ELMo, and spawned the study of "BERTology", which attempts to interpret what is learned by BERT. BERT wa ...
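Masked token prediction turns unlabeled text into training examples. Some input positions are corrupted, and the model is asked to recover the original tokens there. The sketch below follows the corruption recipe described in the original BERT paper: about 15% of positions are selected, and of those 80% become `[MASK]`, 10% a random token and 10% stay unchanged.

The simplifications are assumptions:
- Positions are selected by an independent coin flip per token.
- Whitespace tokens stand in for BERT's subword tokens.
- Next sentence prediction is not shown.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Build one masked-token-prediction example.

    Returns (inputs, labels): labels[i] holds the original token at positions
    the model must predict, and None everywhere else.
    """
    inputs = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # not selected: no prediction target here
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = MASK  # 80%: replace with [MASK]
        elif roll < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token
    return inputs, labels


tokens = "the cat sat on the mat".split()
vocab = sorted(set(tokens))
inputs, labels = mask_tokens(tokens, vocab, random.Random(0))
```

The loss is computed only at positions whose label is not `None`. The random and unchanged replacements keep the model from relying on `[MASK]` always marking the positions it must predict.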

Large Language Model

A large language model (LLM) is a language model trained with self-supervised machine learning on a vast amount of text, designed for natural language processing tasks, especially language generation. The largest and most capable LLMs are generative pretrained transformers (GPTs), which are largely used in generative chatbots such as ChatGPT or Gemini. LLMs can be fine-tuned for specific tasks or guided by prompt engineering. These models acquire predictive power regarding syntax, semantics, and ontologies inherent in human language corpora, but they also inherit inaccuracies and biases present in the data they are trained on.

Before the emergence of transformer-based models in 2017, some language models were considered large relative to the computational and data constraints of their time. In the early 1990s, IBM's statistical models pioneered word alignment techniques for machine translation, laying the groundwork for corpus-based language modeling. A sm ...
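"Self-supervised" here means that the labels come from the text itself. Every prefix of a passage is an input, and the token that follows it is the target. The sketch below shows that construction. In place of a neural network it uses a swapped-in toy, a bigram counter, which is far simpler than an LLM but is trained on the same kind of (context, next token) signal. Whitespace splitting stands in for a real tokenizer.

```python
from collections import Counter, defaultdict


def next_token_examples(tokens):
    """Self-supervision: each prefix is an input, the following token is its label."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def train_bigram(tokens):
    """Toy stand-in for an LLM: count which token follows each token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Most frequent continuation seen in training, or None for an unseen token."""
    if token not in counts:
        return None
    return counts[token].most_common(1)[0][0]


corpus = "the cat sat on the mat the cat ran".split()
model = train_bigram(corpus)
```

An LLM conditions on the whole prefix (up to its context window) rather than only the previous token. It also replaces counting with a learned network, but the training pairs are produced the same way.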

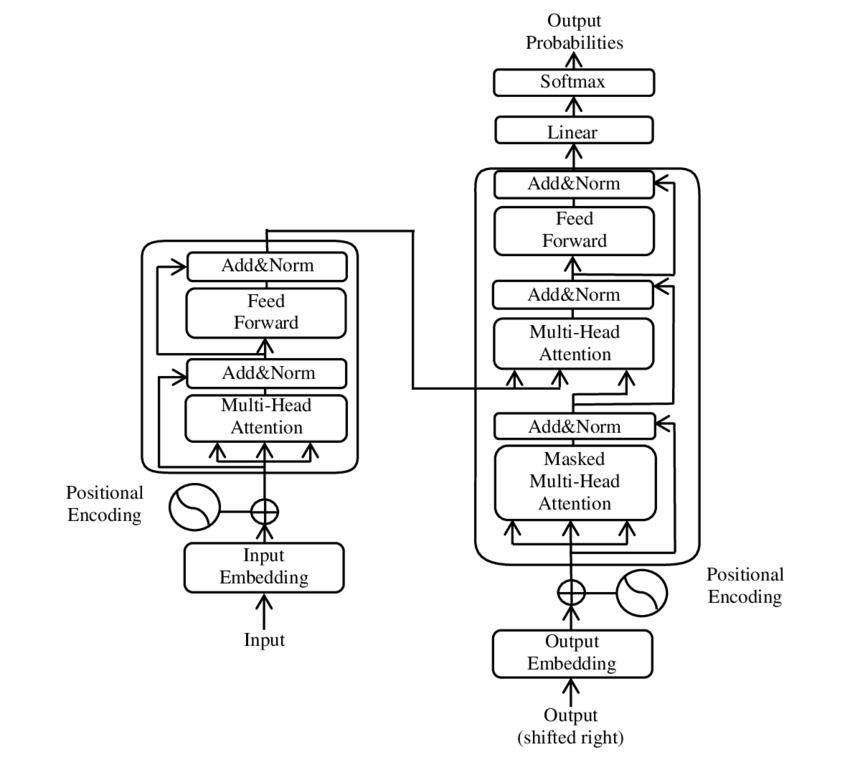

Transformer (Machine Learning Model)

The transformer is a deep learning architecture based on the multi-head attention mechanism, in which text is converted to numerical representations called tokens, and each token is converted into a vector via lookup from a word embedding table. At each layer, each token is then contextualized within the scope of the context window with other (unmasked) tokens via a parallel multi-head attention mechanism, allowing the signal for key tokens to be amplified and less important tokens to be diminished. Transformers have the advantage of having no recurrent units, and therefore require less training time than earlier recurrent neural architectures (RNNs) such as long short-term memory (LSTM). Later variations have been widely adopted for training large language models (LLMs) on large (language) datasets. The modern version of the transformer was proposed in the 2017 paper "Attention Is All You Need" by researchers at Google. Transformers were first developed as an improvement ...
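The two steps named above, embedding lookup and attention over the (unmasked) tokens, can be sketched for a single attention head. Each output vector is a softmax-weighted average of value vectors. The weights come from scaled dot products between a query and the keys, and a causal mask stops a token from attending to later tokens.

The simplifications are assumptions:
- The tiny embedding table is hypothetical.
- The embeddings are used directly as queries, keys and values, with no learned projections.
- Only one head is shown.

A real transformer applies learned linear projections and runs several heads in parallel (multi-head attention), concatenating their outputs. It also adds positional information.

```python
import math

# Hypothetical 2-D embedding table; a real model learns a much larger one.
EMBEDDINGS = {"the": [1.0, 0.0], "cat": [0.0, 1.0], "sat": [1.0, 1.0]}


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values, causal=False):
    """Single-head scaled dot-product attention over lists of vectors."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # masked: no attending to later tokens
            else:
                scores.append(sum(qa * ka for qa, ka in zip(q, k)) / math.sqrt(d))
        weights = softmax(scores)
        outputs.append([sum(w * v[c] for w, v in zip(weights, values))
                        for c in range(len(values[0]))])
        all_weights.append(weights)
    return outputs, all_weights


tokens = ["the", "cat", "sat"]
x = [EMBEDDINGS[t] for t in tokens]  # embedding lookup
out, weights = attention(x, x, x, causal=True)
```

Every row of attention weights sums to 1. Under the causal mask, the first token can only attend to itself, so its output equals its own value vector.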