|

Mixed-precision Arithmetic

Mixed-precision arithmetic is a form of floating-point arithmetic that uses numbers with varying widths in a single operation. Overview A common usage of mixed-precision arithmetic is for operating on inaccurate numbers with a small width and expanding them to a larger, more accurate representation. For example, two half-precision or bfloat16 (16-bit) floating-point numbers may be multiplied together to result in a more accurate single-precision (32-bit) float. In this way, mixed-precision arithmetic approximates arbitrary-precision arithmetic, albeit with a low number of possible precisions. Iterative algorithms (like gradient descent) are good candidates for mixed-precision arithmetic. In an iterative algorithm like square root, a coarse integral guess can be made and refined over many iterations until the error in precision makes it such that the smallest addition or subtraction to the guess is still too coarse to be an acceptable answer. When this happens, the precision can b ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Floating-point Arithmetic

In computing, floating-point arithmetic (FP) is arithmetic on subsets of real numbers formed by a ''significand'' (a Sign (mathematics), signed sequence of a fixed number of digits in some Radix, base) multiplied by an integer power of that base. Numbers of this form are called floating-point numbers. For example, the number 2469/200 is a floating-point number in base ten with five digits: 2469/200 = 12.345 = \! \underbrace_\text \! \times \! \underbrace_\text\!\!\!\!\!\!\!\overbrace^ However, 7716/625 = 12.3456 is not a floating-point number in base ten with five digits—it needs six digits. The nearest floating-point number with only five digits is 12.346. And 1/3 = 0.3333… is not a floating-point number in base ten with any finite number of digits. In practice, most floating-point systems use Binary number, base two, though base ten (decimal floating point) is also common. Floating-point arithmetic operations, such as addition and division, approximate the correspond ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Double Precision

Double-precision floating-point format (sometimes called FP64 or float64) is a floating-point arithmetic, floating-point computer number format, number format, usually occupying 64 Bit, bits in computer memory; it represents a wide range of numeric values by using a floating radix point. Double precision may be chosen when the range or precision of single-precision floating-point format, single precision would be insufficient. In the IEEE 754 standardization, standard, the 64-bit base-2 format is officially referred to as binary64; it was called double in IEEE 754-1985. IEEE 754 specifies additional floating-point formats, including 32-bit base-2 ''single precision'' and, more recently, base-10 representations (decimal floating point). One of the first programming languages to provide floating-point data types was Fortran. Before the widespread adoption of IEEE 754-1985, the representation and properties of floating-point data types depended on the computer manufacturer and compu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Exponential Backoff

Exponential backoff is an algorithm that uses feedback to multiplicatively decrease the rate of some process, in order to gradually find an acceptable rate. These algorithms find usage in a wide range of systems and processes, with radio networks and computer networks being particularly notable. Exponential backoff algorithm An exponential backoff algorithm is a form of closed-loop control system that reduces the rate of a controlled process in response to adverse events. For example, if a smartphone app fails to connect to its server, it might try again 1 second later, then if it fails again, 2 seconds later, then 4, etc. Each time the pause is multiplied by a fixed amount (in this case 2). In this case, the adverse event is failing to connect to the server. Other examples of adverse events include collisions of network traffic, an error response from a service, or an explicit request to reduce the rate (i.e. ''back off''). The rate reduction can be modelled as an exponenti ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Backpropagation

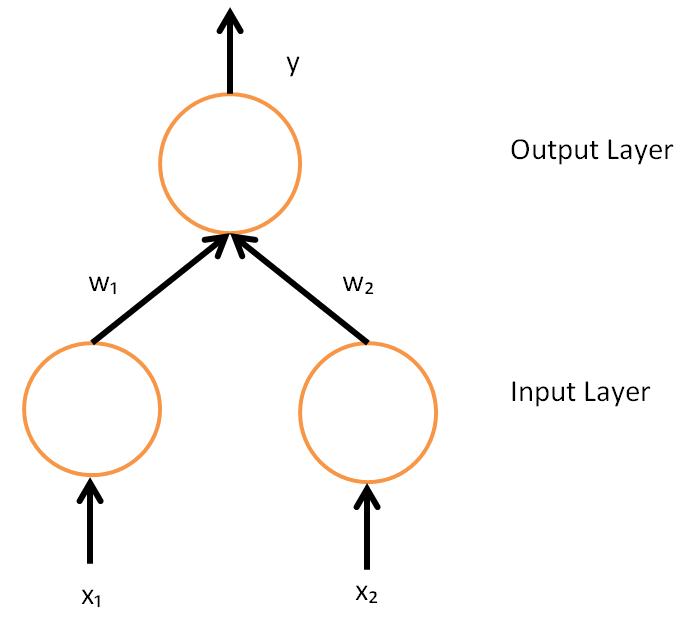

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example, and does so efficiently, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term ''backpropagation'' refers only to an algorithm for efficiently computing the gradient, not how the gradient is used; but the term is often used loosely to refer to the entire learning algorithm – including how the gradient is used, such as by stochastic gradient descent, or as an intermediate step in a more complicated optimizer, such as Adaptive Moment Estimation. The ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Stochastic Gradient Descent

Stochastic gradient descent (often abbreviated SGD) is an Iterative method, iterative method for optimizing an objective function with suitable smoothness properties (e.g. Differentiable function, differentiable or Subderivative, subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems this reduces the very high Computational complexity, computational burden, achieving faster iterations in exchange for a lower Rate of convergence, convergence rate. The basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s. Today, stochastic gradient descent has become an important optimization method in machine learning. Background Both statistics, statistical M-estimation, estimation and ma ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Gradient

In vector calculus, the gradient of a scalar-valued differentiable function f of several variables is the vector field (or vector-valued function) \nabla f whose value at a point p gives the direction and the rate of fastest increase. The gradient transforms like a vector under change of basis of the space of variables of f. If the gradient of a function is non-zero at a point p, the direction of the gradient is the direction in which the function increases most quickly from p, and the magnitude of the gradient is the rate of increase in that direction, the greatest absolute directional derivative. Further, a point where the gradient is the zero vector is known as a stationary point. The gradient thus plays a fundamental role in optimization theory, where it is used to minimize a function by gradient descent. In coordinate-free terms, the gradient of a function f(\mathbf) may be defined by: df=\nabla f \cdot d\mathbf where df is the total infinitesimal change in f for a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Matrix Multiplication

In mathematics, specifically in linear algebra, matrix multiplication is a binary operation that produces a matrix (mathematics), matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices and is denoted as . Matrix multiplication was first described by the French mathematician Jacques Philippe Marie Binet in 1812, to represent the composition of functions, composition of linear maps that are represented by matrices. Matrix multiplication is thus a basic tool of linear algebra, and as such has numerous applications in many areas of mathematics, as well as in applied mathematics, statistics, physics, economics, and engineering. Computing matrix products is a central operation in all computational applications of linear algebra. Not ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

PyTorch

PyTorch is a machine learning library based on the Torch library, used for applications such as computer vision and natural language processing, originally developed by Meta AI and now part of the Linux Foundation umbrella. It is one of the most popular deep learning frameworks, alongside others such as TensorFlow, offering free and open-source software released under the modified BSD license. Although the Python interface is more polished and the primary focus of development, PyTorch also has a C++ interface. A number of pieces of deep learning software are built on top of PyTorch, including Tesla Autopilot, Uber's Pyro, Hugging Face's Transformers, and Catalyst. PyTorch provides two high-level features: * Tensor computing (like NumPy) with strong acceleration via graphics processing units (GPU) * Deep neural networks built on a tape-based automatic differentiation system History In 2001, Torch was written and released under a GPL license. It was a machine-learning li ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Advanced Micro Devices

Advanced Micro Devices, Inc. (AMD) is an American multinational corporation and technology company headquartered in Santa Clara, California and maintains significant operations in Austin, Texas. AMD is a Information technology, hardware and Fabless manufacturing, fabless company that designs and develops List of AMD processors, central processing units (CPUs), List of AMD graphics processing units, graphics processing units (GPUs), field-programmable gate arrays (FPGAs), System on a chip, system-on-chip (SoC), and high-performance computing, high-performance computer solutions. AMD serves a wide range of business and consumer markets, including gaming, data centers, artificial intelligence (AI), and embedded systems. AMD's main products include List of AMD microprocessors, microprocessors, motherboard chipsets, embedded processors, and List of AMD graphics processing units, graphics processors for Server (computing), servers, workstations, personal computers, and embedded syst ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Intel

Intel Corporation is an American multinational corporation and technology company headquartered in Santa Clara, California, and Delaware General Corporation Law, incorporated in Delaware. Intel designs, manufactures, and sells computer components such as central processing units (CPUs) and related products for business and consumer markets. It is one of the world's List of largest semiconductor chip manufacturers, largest semiconductor chip manufacturers by revenue, and ranked in the Fortune 500, ''Fortune'' 500 list of the List of largest companies in the United States by revenue, largest United States corporations by revenue for nearly a decade, from 2007 to 2016 Fiscal year, fiscal years, until it was removed from the ranking in 2018. In 2020, it was reinstated and ranked 45th, being the List of Fortune 500 computer software and information companies, 7th-largest technology company in the ranking. It was one of the first companies listed on Nasdaq. Intel supplies List of I ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Nvidia

Nvidia Corporation ( ) is an American multinational corporation and technology company headquartered in Santa Clara, California, and incorporated in Delaware. Founded in 1993 by Jensen Huang (president and CEO), Chris Malachowsky, and Curtis Priem, it designs and supplies graphics processing units (GPUs), application programming interfaces (APIs) for data science and high-performance computing, and system on a chip units (SoCs) for mobile computing and the automotive market. Nvidia is also a leading supplier of artificial intelligence (AI) hardware and software. Nvidia outsources the manufacturing of the hardware it designs. Nvidia's professional line of GPUs are used for edge-to-cloud computing and in supercomputers and workstations for applications in fields such as architecture, engineering and construction, media and entertainment, automotive, scientific research, and manufacturing design. Its GeForce line of GPUs are aimed at the consumer market and are used in ap ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of Computational statistics, statistical algorithms that can learn from data and generalise to unseen data, and thus perform Task (computing), tasks without explicit Machine code, instructions. Within a subdiscipline in machine learning, advances in the field of deep learning have allowed Neural network (machine learning), neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |