
Lyapunov's Central Limit Theorem

In probability theory, the central limit theorem (CLT) establishes that, in many situations, when independent random variables are summed, their properly normalized sum tends toward a normal distribution even if the original variables themselves are not normally distributed. The theorem is a key concept in probability theory because it implies that probabilistic and statistical methods that work for normal distributions can be applied to many problems involving other types of distributions. The theorem has been revised many times during the formal development of probability theory. Earlier versions date back to 1811, but its modern general form was not stated precisely until 1920, and it thereby serves as a bridge between classical and modern probability theory. If X_1, X_2, \dots, X_n, \dots are random samples drawn from a population with overall mean \mu and finite variance, and if \bar{X}_n is the sample mean of t ...
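
The statement above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the Exponential(1) population, the sample size, the trial count, and the helper name `standardized_sample_means` are all illustrative choices, not part of the source. It standardizes sample means as \sqrt{n}(\bar{X}_n - \mu)/\sigma and checks that they behave roughly like a standard normal variable.

```python
import random
import statistics


def standardized_sample_means(n, trials, seed=0):
    """Return `trials` standardized sample means of n Exponential(1) draws.

    Hypothetical helper for illustration; Exponential(1) is an assumed,
    clearly non-normal population with mean 1 and standard deviation 1.
    """
    rng = random.Random(seed)
    mu, sigma = 1.0, 1.0
    results = []
    for _ in range(trials):
        sample_mean = sum(rng.expovariate(1.0) for _ in range(n)) / n
        # Normalize: sqrt(n) * (sample mean - mu) / sigma
        results.append((sample_mean - mu) * n ** 0.5 / sigma)
    return results


z = standardized_sample_means(n=200, trials=5000)
# For a standard normal variable, about 68% of the mass lies in [-1, 1].
frac_within_one = sum(abs(v) <= 1.0 for v in z) / len(z)
print(statistics.fmean(z), statistics.pstdev(z), frac_within_one)
```

If the normal approximation is reasonable at this sample size, the printed mean should be near 0, the standard deviation near 1, and the fraction near 0.68.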

Probability Theory

Probability theory is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event. Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion). Although it is not possible to perfectly predict random events, much can be said about their behavior. Two major results in probability ...

Random Sample

In statistics, quality assurance, and survey methodology, sampling is the selection of a subset (a statistical sample) of individuals from within a statistical population to estimate characteristics of the whole population. Statisticians attempt to collect samples that are representative of the population in question. Sampling has lower costs and faster data collection than measuring the entire population and can provide insights in cases where it is infeasible to measure an entire population. Each observation measures one or more properties (such as weight, location, colour or mass) of independent objects or individuals. In survey sampling, weights can be applied to the data to adjust for the sample design, particularly in stratified sampling. Results from probability theory and statistical theory are employed to guide the practice. In business and medical research, sampling is widely used for gathering information about a population. Acceptance sampling is used to determine if ...

Covariance Matrix

In probability theory and statistics, a covariance matrix (also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix) is a square matrix giving the covariance between each pair of elements of a given random vector. Any covariance matrix is symmetric and positive semi-definite and its main diagonal contains variances (i.e., the covariance of each element with itself). Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the x and y directions contain all of the necessary information; a 2 \times 2 matrix would be necessary to fully characterize the two-dimensional variation. The covariance matrix of a random vector \mathbf{X} is typically denoted by \operatorname{K}_{\mathbf{X}\mathbf{X}} or \Sigma. Definition. Throughout this article, boldfaced unsubsc ...
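
The two-dimensional example above can be made concrete with a short sketch. The function name `covariance_matrix`, the sample points, and the choice of the n - 1 divisor (the usual sample estimator) are assumptions made for illustration only.

```python
import statistics


def covariance_matrix(rows):
    """Sample covariance matrix (divisor n - 1) of equal-length observations.

    Hypothetical helper: entry [i][j] estimates the covariance between
    coordinates i and j of the underlying random vector.
    """
    n = len(rows)
    d = len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    return [
        [
            sum((r[i] - means[i]) * (r[j] - means[j]) for r in rows) / (n - 1)
            for j in range(d)
        ]
        for i in range(d)
    ]


# Four illustrative points in the plane (made-up data).
points = [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.0)]
cov = covariance_matrix(points)
# For a 2 x 2 symmetric matrix with non-negative diagonal, a non-negative
# determinant is enough to confirm positive semi-definiteness.
det = cov[0][0] * cov[1][1] - cov[0][1] * cov[1][0]
print(cov, det)
```

The diagonal entries are the variances of the x and y coordinates, and the off-diagonal entry carries the information that neither variance captures on its own.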

Random Vector

In probability and statistics, a multivariate random variable or random vector is a list of mathematical variables each of whose value is unknown, either because the value has not yet occurred or because there is imperfect knowledge of its value. The individual variables in a random vector are grouped together because they are all part of a single mathematical system; often they represent different properties of an individual statistical unit. For example, while a given person has a specific age, height and weight, the representation of these features of an unspecified person from within a group would be a random vector. Normally each element of a random vector is a real number. Random vectors are often used as the underlying implementation of various types of aggregate random variables, e.g. a random matrix, random tree, random sequence, stochastic process, etc. More formally, a multivariate random variable is a column vector \mathbf{X} = (X_1,\dots,X_n)^\mathsf{T} (or its ...

Indicator Function

In mathematics, an indicator function or a characteristic function of a subset of a set is a function that maps elements of the subset to one, and all other elements to zero. That is, if A is a subset of some set X, one has \mathbf{1}_A(x)=1 if x\in A, and \mathbf{1}_A(x)=0 otherwise, where \mathbf{1}_A is a common notation for the indicator function. Other common notations are I_A and \chi_A. The indicator function of A is the Iverson bracket of the property of belonging to A; that is, \mathbf{1}_A(x) = [x \in A]. For example, the Dirichlet function is the indicator function of the rational numbers as a subset of the real numbers. Definition. The indicator function of a subset A of a set X is a function \mathbf{1}_A \colon X \to \{0, 1\} defined as \mathbf{1}_A(x) := \begin{cases} 1 & \text{if } x \in A, \\ 0 & \text{if } x \notin A. \end{cases} The Iverson bracket provides the equivalent notation [x \in A], to be used instead of \mathbf{1}_A(x). The function \mathbf{1}_A is sometimes denoted I_A, \chi_A, K_A, or even just A. Nota ...
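
As a minimal sketch of the definition above, an indicator function can be built from any finite subset. The helper name `indicator` and the example sets are illustrative assumptions.

```python
def indicator(subset):
    """Return the indicator function of `subset`: 1 on members, 0 elsewhere."""
    members = frozenset(subset)
    return lambda x: 1 if x in members else 0


A = {2, 4, 6}          # illustrative subset
X = range(1, 8)        # illustrative ambient set {1, ..., 7}
one_A = indicator(A)
values = [one_A(x) for x in X]
print(values)          # [0, 1, 0, 1, 0, 1, 0]
```

Summing the indicator over X counts the elements of A, which is one common reason to work with indicators instead of sets directly.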

Jarl Waldemar Lindeberg

Jarl Waldemar Lindeberg (4 August 1876, Helsinki – 24 December 1932, Helsinki) was a Finnish mathematician known for work on the central limit theorem. Life and work. Lindeberg was the son of a teacher at the Helsinki Polytechnical Institute and showed mathematical talent and interest at an early age. The family was well off, and Jarl Waldemar later preferred to be a reader rather than a full professor. Lindeberg's career centred on the University of Helsinki. His early interests were in partial differential equations and the calculus of variations, but from 1920 he worked in probability and statistics. In 1920 he published his first paper on the central limit theorem. His result was similar to that obtained earlier by Lyapunov, whose work he did not then know. However, their approaches were quite different: Lindeberg's was based on a convolution argument, while Lyapunov used the characteristic function. Two years later Lindeberg used his method to obtain a stronger result: the so-called ...

Moment (mathematics)

In mathematics, the moments of a function are certain quantitative measures related to the shape of the function's graph. If the function represents mass density, then the zeroth moment is the total mass, the first moment (normalized by total mass) is the center of mass, and the second moment is the moment of inertia. If the function is a probability distribution, then the first moment is the expected value, the second central moment is the variance, the third standardized moment is the skewness, and the fourth standardized moment is the kurtosis. The mathematical concept is closely related to the concept of moment in physics. For a distribution of mass or probability on a bounded interval, the collection of all the moments (of all orders, from 0 to \infty) uniquely determines the distribution (Hausdorff moment problem). The same is not true on unbounded intervals (Hamburger moment problem). In the mid-nineteenth century, Pafnuty Chebyshev became the first person to think systematic ...
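
The probabilistic moments named above can be computed directly for a finite data set treated as a uniform discrete distribution. This is a sketch only: the helper names `central_moment` and `standardized_moment` and the data are assumptions chosen for illustration.

```python
def central_moment(data, k):
    """k-th central moment of the empirical (uniform) distribution on `data`."""
    mean = sum(data) / len(data)
    return sum((x - mean) ** k for x in data) / len(data)


def standardized_moment(data, k):
    """k-th central moment divided by the k-th power of the standard deviation."""
    return central_moment(data, k) / central_moment(data, 2) ** (k / 2)


data = [1.0, 2.0, 3.0, 4.0, 5.0]   # illustrative, symmetric about 3
mean = sum(data) / len(data)        # first moment: expected value
variance = central_moment(data, 2)  # second central moment
skewness = standardized_moment(data, 3)
kurtosis = standardized_moment(data, 4)
print(mean, variance, skewness, kurtosis)
```

Because the data are symmetric about their mean, the skewness comes out as zero, while the kurtosis is below the value 3 that a normal distribution would give.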

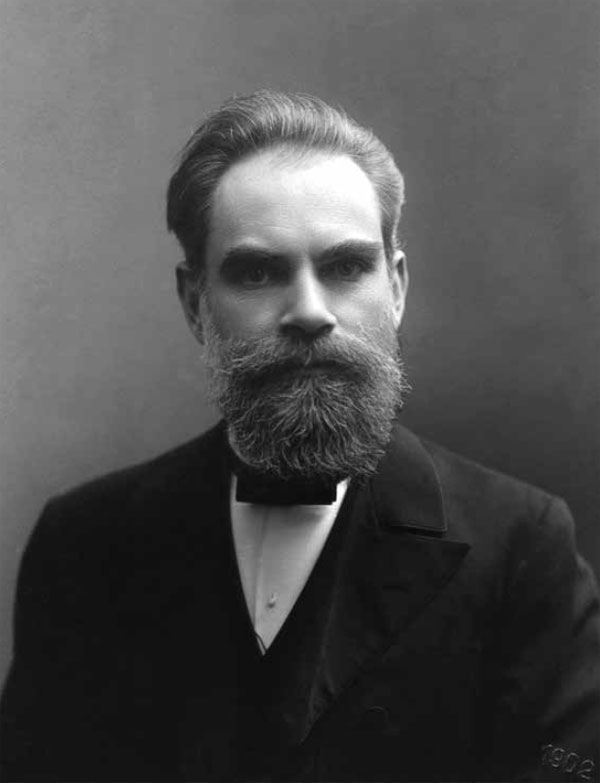

Aleksandr Lyapunov

Aleksandr Mikhailovich Lyapunov (Russian: Алекса́ндр Миха́йлович Ляпуно́в; 6 June 1857 – 3 November 1918) was a Russian mathematician, mechanician and physicist. His surname is variously romanized as Ljapunov, Liapunov, Liapounoff or Ljapunow. He was the son of the astronomer Mikhail Lyapunov and the brother of the pianist and composer Sergei Lyapunov. Lyapunov is known for his development of the stability theory of a dynamical system, as well as for his many contributions to mathematical physics and probability theory. Biography. Early life. Lyapunov was born in Yaroslavl, Russian Empire. His father Mikhail Vasilyevich Lyapunov (1820–1868) was an astronomer employed by the Demidov Lyceum. His brother, Sergei Lyapunov, was a gifted composer and pianist. In 1863, M. V. Lyapunov retired from his scientific career and relocated his family to his wife's estate at Bolobonov, in the Simbirsk province (now Ulyanovsk Oblast). After the death of his father in 18 ...

Supremum

In mathematics, the infimum (abbreviated inf; plural infima) of a subset S of a partially ordered set P is the greatest element in P that is less than or equal to each element of S, if such an element exists. Consequently, the term greatest lower bound (abbreviated as GLB) is also commonly used. The supremum (abbreviated sup; plural suprema) of a subset S of a partially ordered set P is the least element in P that is greater than or equal to each element of S, if such an element exists. Consequently, the supremum is also referred to as the least upper bound (or LUB). The infimum is in a precise sense dual to the concept of a supremum. Infima and suprema of real numbers are common special cases that are important in analysis, and especially in Lebesgue integration. However, the general definitions remain valid in the more abstract setting of order theory where arbitrary partially ordered sets are considered. The concepts of infimum and supremum are close to minimum and max ...

Cumulative Distribution Function

In probability theory and statistics, the cumulative distribution function (CDF) of a real-valued random variable X, or just distribution function of X, evaluated at x, is the probability that X will take a value less than or equal to x. Every probability distribution supported on the real numbers, discrete or "mixed" as well as continuous, is uniquely identified by a right-continuous (upwards continuous), monotonically increasing cumulative distribution function F : \mathbb{R} \rightarrow [0,1] satisfying \lim_{x \to -\infty} F(x)=0 and \lim_{x \to \infty} F(x)=1. In the case of a scalar continuous distribution, it gives the area under the probability density function from minus infinity to x. Cumulative distribution functions are also used to specify the distribution of multivariate random variables. Definition. The cumulative distribution function of a real-valued random variable X is the function given by F_X(x) = \operatorname{P}(X \leq x), where the right-hand side represents the probability that the random variable X takes on a value less tha ...
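
The definition F_X(x) = P(X \leq x) can be sketched for the simplest discrete case, the empirical distribution of a finite sample. The helper name `empirical_cdf` and the sample values are assumptions for illustration; the resulting step function is monotonically increasing and right-continuous, with limits 0 and 1.

```python
from bisect import bisect_right


def empirical_cdf(sample):
    """Return F with F(x) = fraction of sample values less than or equal to x."""
    data = sorted(sample)
    n = len(data)
    # bisect_right counts the entries <= x, which makes F right-continuous.
    return lambda x: bisect_right(data, x) / n


F = empirical_cdf([3, 1, 2, 2])   # illustrative sample
print(F(0), F(1), F(2), F(2.5), F(3))
```

Each jump of F sits at an observed value, and the size of the jump equals the fraction of the sample taking that value (here the jump at 2 is 0.5).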

Convergence In Distribution

In probability theory, there exist several different notions of convergence of random variables. The convergence of sequences of random variables to some limit random variable is an important concept in probability theory, and its applications to statistics and stochastic processes. The same concepts are known in more general mathematics as stochastic convergence and they formalize the idea that a sequence of essentially random or unpredictable events can sometimes be expected to settle down into a behavior that is essentially unchanging when items far enough into the sequence are studied. The different possible notions of convergence relate to how such a behavior can be characterized: two readily understood behaviors are that the sequence eventually takes a constant value, and that values in the sequence continue to change but can be described by an unchanging probability distribution. Background. "Stochastic convergence" formalizes the idea that a sequence of essentially random or ...

Convergence In Probability

In probability theory, there exist several different notions of convergence of random variables. The convergence of sequences of random variables to some limit random variable is an important concept in probability theory, and its applications to statistics and stochastic processes. The same concepts are known in more general mathematics as stochastic convergence and they formalize the idea that a sequence of essentially random or unpredictable events can sometimes be expected to settle down into a behavior that is essentially unchanging when items far enough into the sequence are studied. The different possible notions of convergence relate to how such a behavior can be characterized: two readily understood behaviors are that the sequence eventually takes a constant value, and that values in the sequence continue to change but can be described by an unchanging probability distribution. Background. "Stochastic convergence" formalizes the idea that a sequence of essentially random or ...