Information Entropy

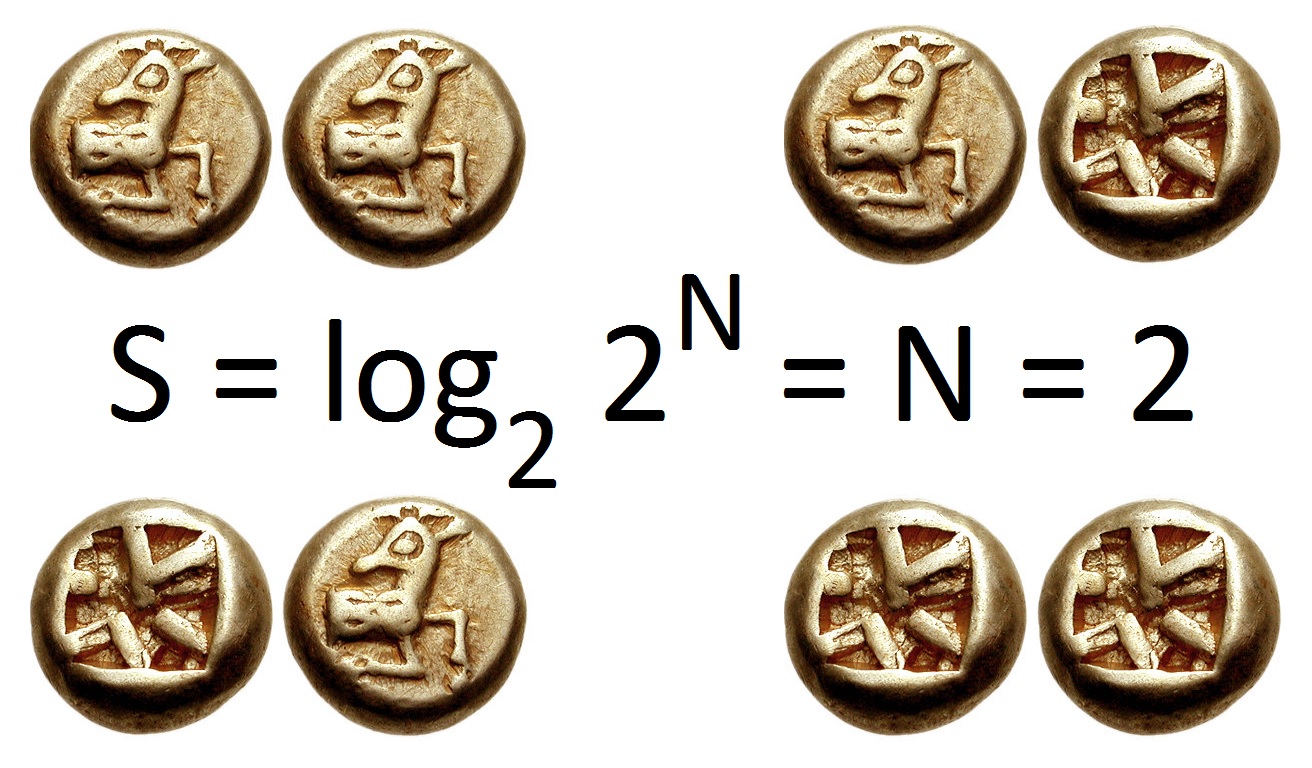

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$, the entropy is $\mathrm{H}(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)]$, where $\Sigma$ denotes the sum over the variable's possible values. The choice of base for $\log$, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), base e gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. Shannon's theory d ...
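The definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `shannon_entropy` and the example distribution are assumptions made here, and the code relies on the usual convention that outcomes with zero probability contribute nothing to the sum.

```python
import math

def shannon_entropy(probs, base=2.0):
    """Return -sum(p * log(p)) for a discrete distribution given as a list."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Skip p == 0 terms: by convention 0 * log(0) is treated as 0.
    return -sum(p * math.log(p, base) for p in probs if p > 0)

# Hypothetical example: four equally likely outcomes.
uniform4 = [0.25, 0.25, 0.25, 0.25]
bits = shannon_entropy(uniform4, base=2)           # entropy in bits (shannons)
nats = shannon_entropy(uniform4, base=math.e)      # entropy in nats
hartleys = shannon_entropy(uniform4, base=10)      # entropy in hartleys (bans, dits)
```

Changing the base only rescales the result by a constant factor, which is why the same distribution can be reported in bits, nats, or hartleys.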

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy. Important sub-fields of information theory include sourc ...
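The coin-versus-die comparison can be checked numerically. The sketch below assumes entropy measured in bits; the helper name `entropy_bits` is chosen here for illustration only.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

coin = [1 / 2] * 2   # fair coin: two equally likely outcomes
die = [1 / 6] * 6    # fair die: six equally likely outcomes

h_coin = entropy_bits(coin)  # equals log2(2)
h_die = entropy_bits(die)    # equals log2(6)

print(f"coin: {h_coin:.3f} bits, die: {h_die:.3f} bits")
```

For a uniform distribution over n outcomes the entropy reduces to log2(n), so the die, with more equally likely outcomes, carries more uncertainty than the coin.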

Noisy-channel Coding Theorem

In information theory, the noisy-channel coding theorem (sometimes Shannon's theorem or Shannon's limit) establishes that for any given degree of noise contamination of a communication channel, it is possible to communicate discrete data (digital information) nearly error-free up to a computable maximum rate through the channel. This result was presented by Claude Shannon in 1948 and was based in part on earlier work and ideas of Harry Nyquist and Ralph Hartley. The Shannon limit or Shannon capacity of a communication channel refers to the maximum rate of error-free data that can theoretically be transferred over the channel if the link is subject to random data transmission errors, for a particular noise level. It was first described by Shannon (1948), and shortly after published in a book by Shannon and Warren Weaver entitled *The Mathematical Theory of Communication* (1949). This founded the modern discipline of information theory. Overview Stated by Claude Sh ...

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, for error detection and correction, or line coding, the means for mapping data onto a signal. ...
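As a small illustration of the lossless case, the sketch below uses Python's standard `zlib` module as one example of a lossless encoder and decoder. The sample input is a made-up, highly redundant byte string; real data will compress by different amounts.

```python
import zlib

# Hypothetical input with a lot of statistical redundancy.
original = b"abracadabra " * 200

compressed = zlib.compress(original)    # encoder: fewer bytes than the input
restored = zlib.decompress(compressed)  # decoder: reverses the process

print(len(original), "bytes ->", len(compressed), "bytes")
print("round trip is exact:", restored == original)
```

Because the compression is lossless, the decoded bytes are identical to the input; only the redundancy in the representation has been removed.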

Information Content

In information theory, the information content, self-information, surprisal, or Shannon information is a basic quantity derived from the probability of a particular event occurring from a random variable. It can be thought of as an alternative way of expressing probability, much like odds or log-odds, but which has particular mathematical advantages in the setting of information theory. The Shannon information can be interpreted as quantifying the level of "surprise" of a particular outcome. As it is such a basic quantity, it also appears in several other settings, such as the length of a message needed to transmit the event given an optimal source coding of the random variable. The Shannon information is closely related to entropy, which is the expected value of the self-information of a random variable, quantifying how surprising the random variable is "on average". This is the average amount of self-information an observer would expect to gain about a random variable when ...
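The relationship between self-information and entropy can be made concrete with a short sketch. It assumes the standard definition of the self-information of an outcome with probability p as -log p; the function name and the three-outcome distribution are hypothetical.

```python
import math

def self_information(p, base=2.0):
    """Surprisal -log(p) of an outcome that occurs with probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log(p, base)

# Hypothetical distribution over three outcomes.
dist = {"a": 0.5, "b": 0.25, "c": 0.25}

# Less likely outcomes are more surprising.
surprisal = {outcome: self_information(p) for outcome, p in dist.items()}

# Entropy is the probability-weighted average of the surprisals.
expected_surprisal = sum(p * surprisal[outcome] for outcome, p in dist.items())
```

Here the outcome with probability 1/2 carries 1 bit and each outcome with probability 1/4 carries 2 bits, so the average works out to 1.5 bits.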

Ternary Numeral System

A ternary numeral system (also called base 3 or trinary) has three as its base. Analogous to a bit, a ternary digit is a trit (trinary digit). One trit is equivalent to $\log_2 3$ (about 1.58496) bits of information. Although ternary most often refers to a system in which the three digits are all non-negative numbers, specifically 0, 1, and 2, the adjective also lends its name to the balanced ternary system, comprising the digits −1, 0 and +1, used in comparison logic and ternary computers. Comparison to other bases: Representations of integer numbers in ternary do not get uncomfortably lengthy as quickly as in binary. For example, decimal 365 or senary 1405 corresponds to binary 101101101 (nine digits) and to ternary 111112 (six digits). However, they are still far less compact than the corresponding representations in bases such as decimal; see below for a compact way to codify ternary using nonary (base 9) and septemvigesimal (base 27). As for rational number ...
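The digit counts quoted for 365 can be reproduced with a short base-conversion routine. This is a sketch using repeated division; the helper `to_base` is a name introduced here and handles only non-negative integers and bases 2 through 10.

```python
import math

def to_base(n, base):
    """Return the digits of non-negative integer n in the given base (2..10)."""
    if n < 0 or not 2 <= base <= 10:
        raise ValueError("n must be >= 0 and base must be in 2..10")
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        n, remainder = divmod(n, base)  # peel off the least significant digit
        digits.append(str(remainder))
    return "".join(reversed(digits))

binary = to_base(365, 2)
ternary = to_base(365, 3)
senary = to_base(365, 6)

# Information carried by one trit, in bits.
bits_per_trit = math.log2(3)
```

The ternary form needs six digits where binary needs nine, consistent with each trit carrying about 1.585 bits.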

Biased Coin

In probability theory and statistics, a sequence of independent Bernoulli trials with probability 1/2 of success on each trial is metaphorically called a fair coin. One for which the probability is not 1/2 is called a biased or unfair coin. In theoretical studies, the assumption that a coin is fair is often made by referring to an ideal coin. John Edmund Kerrich performed experiments in coin flipping and found that a coin made from a wooden disk about the size of a crown and coated on one side with lead landed heads (wooden side up) 679 times out of 1000. In this experiment the coin was tossed by balancing it on the forefinger, flipping it using the thumb so that it spun through the air for about a foot before landing on a flat cloth spread over a table. Edwin Thompson Jaynes claimed that when a coin is caught in the hand, instead of being allowed to bounce, the physical bias in the coin is insignificant compared to the method of the toss, where with sufficient practice a coin c ...
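To connect the biased coin back to entropy, the sketch below estimates the heads probability from Kerrich's reported 679 heads in 1000 tosses and compares the resulting entropy with that of a fair coin. Treating the observed frequency as the true probability is an assumption made here for illustration, and `binary_entropy` is a name chosen for this example.

```python
import math

def binary_entropy(p):
    """Entropy in bits of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no uncertainty
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

# Assumption: use the observed frequency as the heads probability.
p_heads = 679 / 1000

h_fair = binary_entropy(0.5)
h_biased = binary_entropy(p_heads)

print(f"fair coin: {h_fair:.3f} bits, lead-coated coin: {h_biased:.3f} bits")
```

A fair coin attains the maximum of 1 bit per toss; any bias makes the outcome more predictable and lowers the entropy.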

Characterization

Characterization or characterisation is the representation of persons (or other beings or creatures) in narrative and dramatic works. The term character development is sometimes used as a synonym. This representation may include direct methods like the attribution of qualities in description or commentary, and indirect (or "dramatic") methods inviting readers to infer qualities from characters' actions, dialogue, or appearance. Such a personage is called a character. Character is a literary element. History: The term characterization was introduced in the 19th century (Harrison 1998, pp. 51-2). Aristotle promoted the primacy of plot over characters, that is, a plot-driven narrative, arguing in his *Poetics* that tragedy "is a representation, not of men, but of action and life." This view was reversed in the 19th century, when the primacy of the character, that is, a character-driven narrative, was affirmed first with the realist novel, and increasingly later with the i ...

YouTube

YouTube is a global online video sharing and social media platform headquartered in San Bruno, California. It was launched on February 14, 2005, by Steve Chen, Chad Hurley, and Jawed Karim. It is owned by Google, and is the second most visited website, after Google Search. YouTube has more than 2.5 billion monthly users who collectively watch more than one billion hours of videos each day. Videos were being uploaded at a rate of more than 500 hours of content per minute. In October 2006, YouTube was bought by Google for $1.65 billion. Google's ownership of YouTube expanded the site's business model, expanding from generating revenue from advertisements alone, to offering paid content such as movies and exclusive content produced by YouTube. It also offers YouTube Premium, a paid subscription option for watching content without ads. YouTube also approved creators to participate in Google's AdSense program, which seeks to generate more revenue for both parties ...

Differential Entropy

Differential entropy (also referred to as continuous entropy) is a concept in information theory that began as an attempt by Claude Shannon to extend the idea of (Shannon) entropy, a measure of average surprisal of a random variable, to continuous probability distributions. Unfortunately, Shannon did not derive this formula, and rather just assumed it was the correct continuous analogue of discrete entropy, but it is not. The actual continuous version of discrete entropy is the limiting density of discrete points (LDDP). Differential entropy (described here) is commonly encountered in the literature, but it is a limiting case of the LDDP, and one that loses its fundamental association with discrete entropy. In terms of measure theory, the differential entropy of a probability measure is the negative relative entropy from that measure to the Lebesgue measure, where the latter is treated as if it were a probability measure, despite being unnormalized. Definition Let X be a rando ...

Axiom

An axiom, postulate, or assumption is a statement that is taken to be true, to serve as a premise or starting point for further reasoning and arguments. The word comes from an Ancient Greek word meaning 'that which is thought worthy or fit' or 'that which commends itself as evident'. The term has subtle differences in definition when used in the context of different fields of study. As defined in classic philosophy, an axiom is a statement that is so evident or well-established that it is accepted without controversy or question. As used in modern logic, an axiom is a premise or starting point for reasoning. As used in mathematics, the term axiom is used in two related but distinguishable senses: "logical axioms" and "non-logical axioms". Logical axioms are usually statements that are taken to be true within the system of logic they define and are often shown in symbolic form (e.g., (A and B) implies A), while non-logical axioms are actua ...

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks (Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F., "Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning", IEEE Transactions on Vehicular Technology, 2020). A subset of machine learning is closely related to computational statistics, which focuses on making pred ...
