Incompressible String

An incompressible string is a string with Kolmogorov complexity equal to its length, so that it has no shorter encodings (V. Chandru and M. R. Rao, ''Algorithms and Theory of Computation Handbook'', CRC Press 1999, pp. 29-30).

Example

Suppose we have the string 12349999123499991234, and we are using a compression method that works by putting a special character into the string (say '@') followed by a value that points to an entry in a lookup table (or dictionary) of repeating values. Let's imagine we have an algorithm that examines the string in 4-character chunks. Looking at our string, our algorithm might pick out the values 1234 and 9999 to place into its dictionary. Let's say 1234 is entry 0 and 9999 is entry 1. Now the string ...
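
The dictionary scheme described in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the method from the cited handbook: the function names `compress` and `decompress` are hypothetical, the input is assumed never to contain '@', and the dictionary is assumed to hold at most ten entries so that each index fits in one digit.

```python
def compress(text, chunk_size=4, marker="@"):
    """Replace repeated fixed-size chunks with marker + dictionary index."""
    chunks = [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]
    # Only chunks that occur more than once earn a dictionary entry.
    dictionary = []
    for chunk in chunks:
        if chunks.count(chunk) > 1 and chunk not in dictionary:
            dictionary.append(chunk)
    # Assumption: at most ten entries, so every index is a single digit.
    encoded = "".join(
        f"{marker}{dictionary.index(c)}" if c in dictionary else c
        for c in chunks
    )
    return encoded, dictionary


def decompress(encoded, dictionary, marker="@"):
    """Expand each marker + single-digit index back into its stored chunk."""
    out = []
    i = 0
    while i < len(encoded):
        if encoded[i] == marker:
            out.append(dictionary[int(encoded[i + 1])])
            i += 2
        else:
            out.append(encoded[i])
            i += 1
    return "".join(out)


encoded, table = compress("12349999123499991234")
print(encoded, table)
print(decompress(encoded, table))
```

Under these assumptions the 20-character input is encoded in 10 characters, although the dictionary itself must also be stored or transmitted. A string with no repeated chunk, such as 12345678, passes through unchanged, which is the toy analogue of a string this particular scheme cannot shorten.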

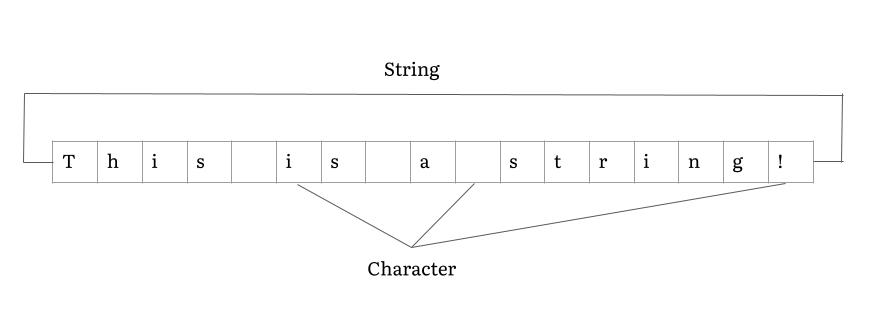

String (Computer Science)

In computer programming, a string is traditionally a sequence of characters, either as a literal constant or as some kind of variable. The latter may allow its elements to be mutated and the length changed, or it may be fixed (after creation). A string is generally considered as a data type and is often implemented as an array data structure of bytes (or words) that stores a sequence of elements, typically characters, using some character encoding. ''String'' may also denote more general arrays or other sequence (or list) data types and structures. Depending on the programming language and precise data type used, a variable declared to be a string may either cause storage in memory to be statically allocated for a predetermined maximum length or employ dynamic allocation to allow it to hold a variable number of elements. When a string appears literally in source code, it is known as a string literal or an anonymous string. In formal languages, which are used in mathematical ...
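
As a small illustration of the distinction between a sequence of characters and the bytes that store it, the following Python sketch compares an immutable `str` with a mutable `bytearray`. The sample text and the choice of UTF-8 are assumptions made for the example; other languages and encodings behave differently.

```python
text = "na\u00efve"                 # string literal: 5 characters, immutable
encoded = text.encode("utf-8")      # the same text as bytes in one encoding
print(len(text), len(encoded))      # character count vs byte count

buffer = bytearray(encoded)         # mutable, variable-length byte array
buffer.extend(b"!")                 # the length can change after creation
decoded = buffer.decode("utf-8")
print(decoded)
```

The character count and the byte count differ here because the one non-ASCII character occupies two bytes in UTF-8.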

Kolmogorov Complexity

In algorithmic information theory (a subfield of computer science and mathematics), the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program (in a predetermined programming language) that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963, and it generalizes classical information theory. The notion of Kolmogorov complexity can be used to state and prove impossibility results akin to Cantor's diagonal argument, Gödel's incompleteness theorem, and Turing's halting problem. In particular, no program ''P'' computing a lower bound for each text's Kolmogorov complexity can return a value essentially larger than ''P'''s own leng ...
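
Kolmogorov complexity itself cannot be computed in general, but any concrete compressor gives an upper bound on description length, up to the fixed size of its decompressor. The sketch below uses Python's standard `zlib` module as a stand-in; the helper name `compressed_size` and the two sample inputs are assumptions made for illustration.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Length in bytes of the zlib encoding of data at maximum compression."""
    return len(zlib.compress(data, 9))


regular = b"1234" * 250                      # 1000 bytes with an obvious pattern
rng = random.Random(0)                       # fixed seed so runs are repeatable
noisy = bytes(rng.getrandbits(8) for _ in range(1000))

print(len(regular), compressed_size(regular))
print(len(noisy), compressed_size(noisy))
```

The patterned input shrinks to a small fraction of its length, while the pseudo-random input does not shrink at all. Note that the pseudo-random bytes still have low Kolmogorov complexity, since this short program generates them; `zlib` simply fails to find that description, which is why a compressor yields only an upper bound.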

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, which is used for error detection and correction, or with line coding, the means for mapping data onto a signal. ...
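
A toy encoder and decoder pair makes the lossless case concrete. Run-length encoding is chosen here purely for illustration and is not named in the text above; the function names `rle_encode` and `rle_decode` are hypothetical.

```python
from itertools import groupby


def rle_encode(text):
    """Encoder: collapse each run of a repeated symbol into (symbol, count)."""
    return [(symbol, len(list(run))) for symbol, run in groupby(text)]


def rle_decode(pairs):
    """Decoder: expand each (symbol, count) pair back into a run."""
    return "".join(symbol * count for symbol, count in pairs)


original = "aaaabbbcca"
pairs = rle_encode(original)
print(pairs)
print(rle_decode(pairs) == original)   # lossless: the round trip is exact
```

The encoder removes redundancy (repeated symbols) and the decoder restores the input exactly. On input without runs the pair list is longer than the text, so this encoding only helps when that particular redundancy is present.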

Lookup Table

In computer science, a lookup table (LUT) is an array that replaces runtime computation with a simpler array indexing operation. The process is termed "direct addressing". LUTs differ from hash tables in that, to retrieve a value v with key k, a hash table stores the value v in the slot h(k), where h is a hash function (that is, k is used to compute the slot), whereas in a LUT the value v is stored in slot k and is thus directly addressable. The savings in processing time can be significant, because retrieving a value from memory is often faster than carrying out an "expensive" computation or input/output operation. The tables may be precalculated and stored in static program storage, calculated (or "pre-fetched") as part of a program's initialization phase (memoization), or even stored in hardware in application-specific platforms. Lookup tables are also used extensively to validate input values by matching against a list of valid (or invalid) items in an array and ...
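
Direct addressing can be sketched with a table that is precomputed once and then indexed by the key itself. The bit-counting task, the table name `BIT_COUNT`, and the function `popcount32` are assumptions chosen for the example.

```python
# Precompute the number of set bits for every possible byte value (0..255).
BIT_COUNT = [bin(value).count("1") for value in range(256)]


def popcount32(n):
    """Count set bits in a 32-bit unsigned integer with four table lookups."""
    return (BIT_COUNT[n & 0xFF]
            + BIT_COUNT[(n >> 8) & 0xFF]
            + BIT_COUNT[(n >> 16) & 0xFF]
            + BIT_COUNT[(n >> 24) & 0xFF])


print(popcount32(0b1011))
```

Each byte value k is used directly as the index into the table, with no hash function in between, and the per-call work is reduced to four array reads and three additions.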

Lossless Compression Algorithms

Lossless compression is a class of data compression that allows the original data to be perfectly reconstructed from the compressed data with no loss of information. Lossless compression is possible because most real-world data exhibits statistical redundancy. By contrast, lossy compression permits reconstruction only of an approximation of the original data, though usually with greatly improved compression rates (and therefore reduced media sizes). By operation of the pigeonhole principle, no lossless compression algorithm can shrink every possible input. For this reason, many different algorithms exist that are designed either with a specific type of input data in mind or with specific assumptions about what kinds of redundancy the uncompressed data are likely to contain. Therefore, compression ratios tend to be better on human- and machine-readable documents and code than on entropic binary data (random bytes). Lossless data compression is used in many ...
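
The pigeonhole argument comes down to a count, which the following sketch makes explicit for bit strings. The function names are hypothetical and the length 8 is an arbitrary choice.

```python
def count_strings(length):
    """Number of distinct bit strings of exactly this length."""
    return 2 ** length


def count_shorter_strings(length):
    """Number of distinct bit strings strictly shorter than this length,
    including the empty string."""
    return sum(2 ** k for k in range(length))   # equals 2**length - 1


n = 8
inputs = count_strings(n)
outputs = count_shorter_strings(n)
print(inputs, outputs)   # 256 inputs, 255 shorter outputs
```

Because there is always one fewer shorter string than there are inputs of length n, a lossless (one-to-one) encoder cannot map every input of length n to a shorter output; at least one input of each length must stay the same size or grow.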