|

Generalized Vector Space Model

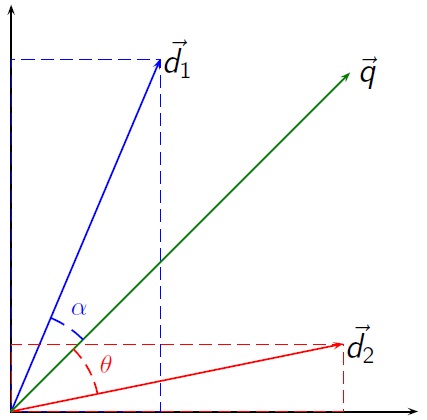

The Generalized vector space model is a generalization of the vector space model used in information retrieval. Wong ''et al.'' presented an analysis of the problems that the pairwise orthogonality assumption of the vector space model (VSM) creates. From here they extended the VSM to the generalized vector space model (GVSM). Definitions GVSM introduces term to term correlations, which deprecate the pairwise orthogonality assumption. More specifically, the factor considered a new space, where each term vector ''ti'' was expressed as a linear combination of ''2n'' vectors ''mr'' where ''r = 1...2n''. For a document ''dk'' and a query ''q'' the similarity function now becomes: :sim(d_k,q) = \frac where ''ti'' and ''tj'' are now vectors of a ''2n'' dimensional space. Term correlation t_i \cdot t_j can be implemented in several ways. For an example, Wong et al. uses the term occurrence frequency matrix obtained from automatic indexing as input to their algorithm. The term occurren ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Vector Space Model

Vector space model or term vector model is an algebraic model for representing text documents (and any objects, in general) as vectors of identifiers (such as index terms). It is used in information filtering, information retrieval, indexing and relevancy rankings. Its first use was in the SMART Information Retrieval System. Definitions Documents and queries are represented as vectors. :d_j = ( w_ ,w_ , \dotsc ,w_ ) :q = ( w_ ,w_ , \dotsc ,w_ ) Each dimension corresponds to a separate term. If a term occurs in the document, its value in the vector is non-zero. Several different ways of computing these values, also known as (term) weights, have been developed. One of the best known schemes is tf-idf weighting (see the example below). The definition of ''term'' depends on the application. Typically terms are single words, keywords, or longer phrases. If words are chosen to be the terms, the dimensionality of the vector is the number of words in the vocabulary (the number of dist ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Information Retrieval

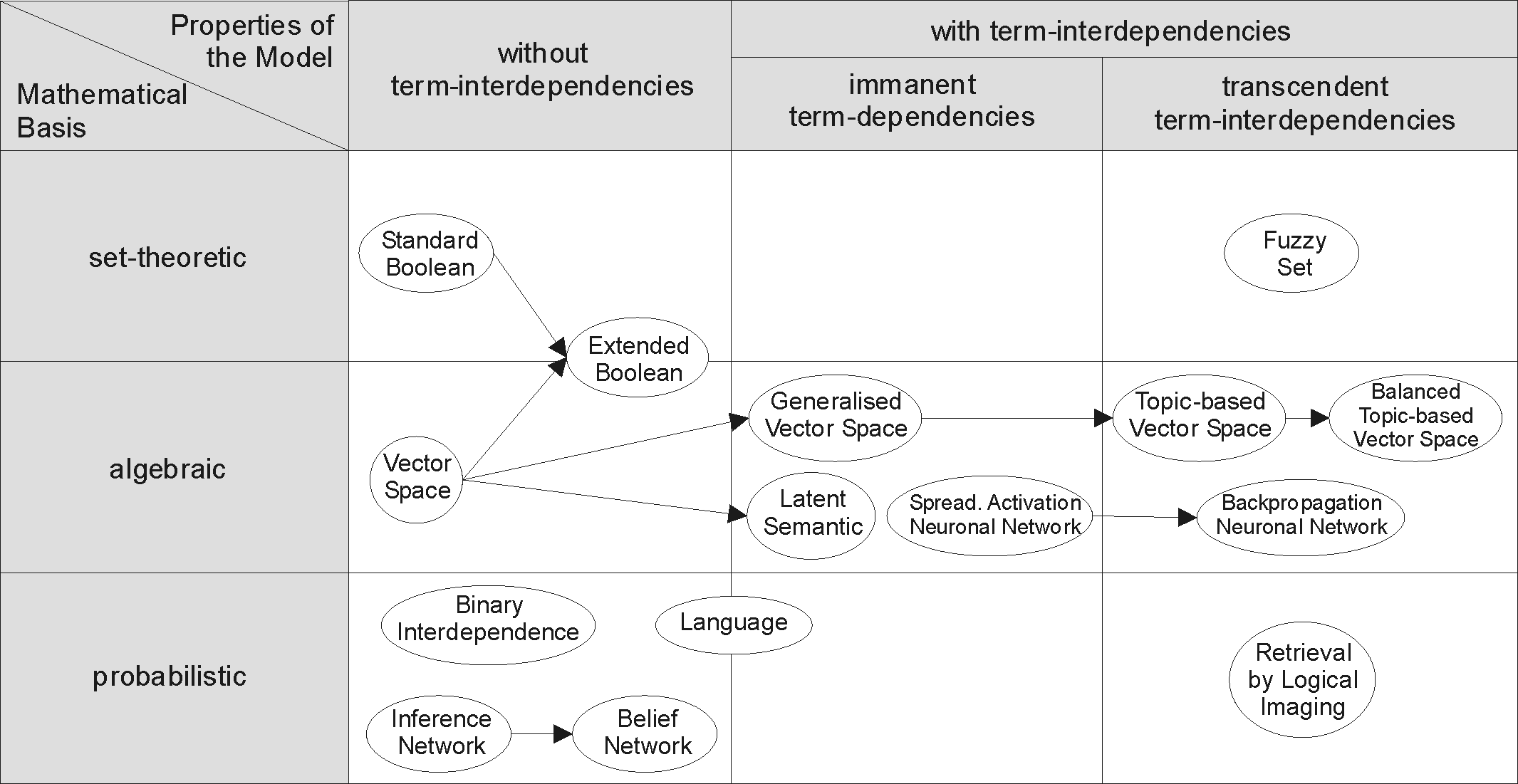

Information retrieval (IR) in computing and information science is the process of obtaining information system resources that are relevant to an information need from a collection of those resources. Searches can be based on full-text or other content-based indexing. Information retrieval is the science of searching for information in a document, searching for documents themselves, and also searching for the metadata that describes data, and for databases of texts, images or sounds. Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents; stores and manages those documents. Web search engines are the most visible IR applications. Overview An information retrieval process begins when a user or searcher enters a query into the system. Queries are formal statements of information needs, for example search strings in web search engines. In inf ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Association For Computing Machinery

The Association for Computing Machinery (ACM) is a US-based international learned society for computing. It was founded in 1947 and is the world's largest scientific and educational computing society. The ACM is a non-profit professional membership group, claiming nearly 110,000 student and professional members . Its headquarters are in New York City. The ACM is an umbrella organization for academic and scholarly interests in computer science ( informatics). Its motto is "Advancing Computing as a Science & Profession". History In 1947, a notice was sent to various people: On January 10, 1947, at the Symposium on Large-Scale Digital Calculating Machinery at the Harvard computation Laboratory, Professor Samuel H. Caldwell of Massachusetts Institute of Technology spoke of the need for an association of those interested in computing machinery, and of the need for communication between them. ..After making some inquiries during May and June, we believe there is ample interest to ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

WordNet

WordNet is a lexical database of semantic relations between words in more than 200 languages. WordNet links words into semantic relations including synonyms, hyponyms, and meronyms. The synonyms are grouped into '' synsets'' with short definitions and usage examples. WordNet can thus be seen as a combination and extension of a dictionary and thesaurus. While it is accessible to human users via a web browser, its primary use is in automatic text analysis and artificial intelligence applications. WordNet was first created in the English language and the English WordNet database and software tools have been released under a BSD style license and are freely available for download from that WordNet website. History and team members WordNet was first created in English only in the Cognitive Science Laboratory of Princeton University under the direction of psychology professor George Armitage Miller starting in 1985 and was later directed by Christiane Fellbaum. The project was ini ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Linked Data

In computing, linked data (often capitalized as Linked Data) is structured data which is interlinked with other data so it becomes more useful through semantic queries. It builds upon standard Web technologies such as HTTP, RDF and URIs, but rather than using them to serve web pages only for human readers, it extends them to share information in a way that can be read automatically by computers. Part of the vision of linked data is for the Internet to become a global database. Tim Berners-Lee, director of the World Wide Web Consortium (W3C), coined the term in a 2006 design note about the Semantic Web project. Linked data may also be open data, in which case it is usually described as Linked Open Data. Principles In his 2006 "Linked Data" note, Tim Berners-Lee outlined four principles of linked data, paraphrased along the following lines: #Uniform Resource Identifiers (URIs) should be used to name and identify individual things. #HTTP URIs should be used to allow these thing ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

DBpedia

DBpedia (from "DB" for "database") is a project aiming to extract structured content from the information created in the Wikipedia project. This structured information is made available on the World Wide Web. DBpedia allows users to semantically query relationships and properties of Wikipedia resources, including links to other related datasets. In 2008, Tim Berners-Lee described DBpedia as one of the most famous parts of the decentralized Linked Data effort. Background The project was started by people at the Free University of Berlin and Leipzig University''DBpedia: A Nucleus for a Web of Open Data'', available a in collaboration with OpenLink Software, and is now maintained by people at the University of Mannheim and Leipzig University. The first publicly available dataset was published in 2007. The data is made available under free licences (CC-BY-SA), allowing others to reuse the dataset; it doesn't however use an open data license to waive the sui generis database ri ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

YAGO (database)

YAGO (Yet Another Great Ontology) is an open source knowledge base developed at the Max Planck Institute for Computer Science in Saarbrücken. It is automatically extracted from Wikipedia and other sources. As of 2019, YAGO3 has knowledge of more than 10 million entities and contains more than 120 million facts about these entities. The information in YAGO is extracted from Wikipedia (e.g., categories, redirects, infoboxes), WordNet (e.g., synsets, hyponymy), and GeoNames. The accuracy of YAGO was manually evaluated to be above 95% on a sample of facts. To integrate it to the linked data cloud, YAGO has been linked to the DBpedia ontology and to the SUMO ontology. YAGO3 is provided in Turtle and tsv formats. Dumps of the whole database are available, as well as thematic and specialized dumps. It can also be queried through various online browsers and through a SPARQL endpoint hosted by OpenLink Software. The source code of YAGO3 is available on GitHub. YAGO has been used in th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Entity Linking

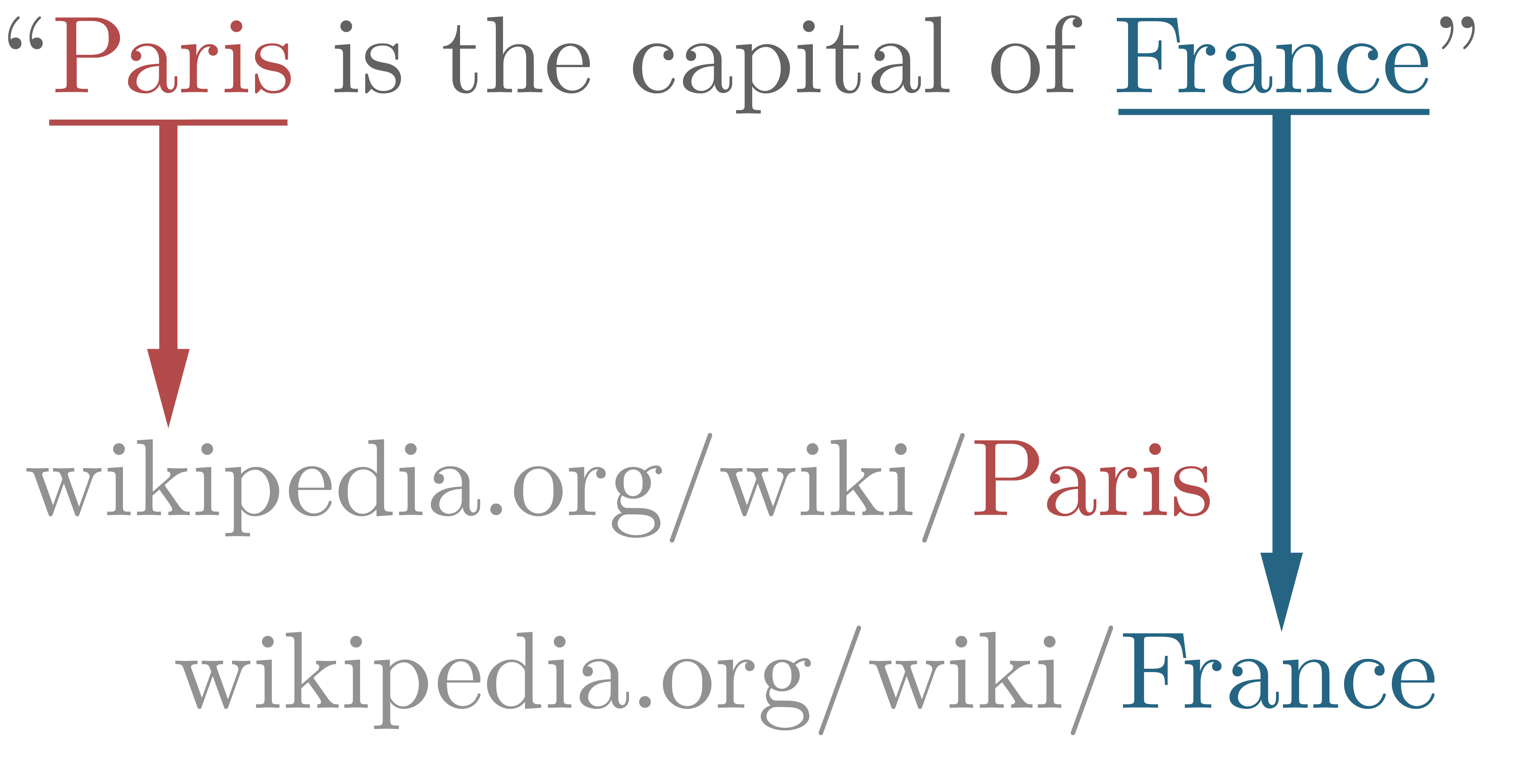

In natural language processing, entity linking, also referred to as named-entity linking (NEL), named-entity disambiguation (NED), named-entity recognition and disambiguation (NERD) or named-entity normalization (NEN) is the task of assigning a unique identity to entities (such as famous individuals, locations, or companies) mentioned in text. For example, given the sentence ''"Paris is the capital of France"'', the idea is to determine that ''"Paris"'' refers to the city of Paris and not to Paris Hilton or any other entity that could be referred to as ''"Paris"''. Entity linking is different from named-entity recognition (NER) in that NER identifies the occurrence of a named entity in text but it does not identify which specific entity it is (see Differences from other techniques). Introduction In entity linking, words of interest (names of persons, locations and companies) are mapped from an input text to corresponding unique entities in a target knowledge base. Words of inte ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |