
Generated Regressor

In least squares estimation problems, one or more regressors specified in the model are sometimes not observable. One way to circumvent this issue is to estimate, or generate, the regressors from observable data. This generated regressor method is also applicable to unobserved instrumental variables. Under some regularity conditions, consistency and asymptotic normality of the least squares estimator are preserved, but the asymptotic variance generally has a different form. Suppose the model of interest is the following: $y_i = g(x_{1i}, x_{2i}, \beta) + u_i$, where $g$ is a conditional mean function whose form is known up to a finite-dimensional parameter $\beta$. Here $x_{2i}$ is not observable, but we know that $x_{2i} = h(w_i, \gamma)$ for some function $h$ known up to the parameter $\gamma$, and a random sample $\{y_i, x_{1i}, w_i\}_{i=1}^n$ is available. Suppose we have a consistent estimator $\hat\gamma$ of $\gamma$ that uses the observations $w_i$. Then $\beta$ can be estimated by (non-linear) least squares using $\hat{x}_{2i} = h(w_i, \hat\gamma)$. Some exam ...
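The two-step procedure described above can be sketched in a few lines of Python. This is a minimal simulation under assumptions that are not in the source: both $g$ and $h$ are taken to be linear, and the first step uses a hypothetical noisy proxy `z` of the unobserved regressor to obtain $\hat\gamma$. All names and parameter values are illustrative.

```python
import random

def ols_two_regressors(y, a, b):
    """Least squares fit of y = c_a * a + c_b * b (no intercept),
    solved from the 2x2 normal equations."""
    saa = sum(ai * ai for ai in a)
    sbb = sum(bi * bi for bi in b)
    sab = sum(ai * bi for ai, bi in zip(a, b))
    say = sum(ai * yi for ai, yi in zip(a, y))
    sby = sum(bi * yi for bi, yi in zip(b, y))
    det = saa * sbb - sab * sab
    return (sbb * say - sab * sby) / det, (saa * sby - sab * say) / det

rng = random.Random(0)
n = 5000
beta1, beta2, gamma = 1.5, -2.0, 0.7  # illustrative true values

w = [rng.gauss(0, 1) for _ in range(n)]
x1 = [rng.gauss(0, 1) for _ in range(n)]
x2 = [gamma * wi for wi in w]              # unobserved: x2 = h(w, gamma), h assumed linear
z = [x2i + rng.gauss(0, 1) for x2i in x2]  # hypothetical noisy proxy used only in step 1
y = [beta1 * a + beta2 * b + rng.gauss(0, 1) for a, b in zip(x1, x2)]

# Step 1: consistent estimate of gamma from a regression of z on w.
gamma_hat = sum(wi * zi for wi, zi in zip(w, z)) / sum(wi * wi for wi in w)

# Step 2: generate the regressor and run least squares of y on (x1, x2_hat).
x2_hat = [gamma_hat * wi for wi in w]
beta1_hat, beta2_hat = ols_two_regressors(y, x1, x2_hat)

print(gamma_hat, beta1_hat, beta2_hat)
```

The point estimates should land near the true values, but the usual second-step standard errors would ignore the sampling error in `gamma_hat`, which is why the asymptotic variance generally takes a different form.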

Least Squares

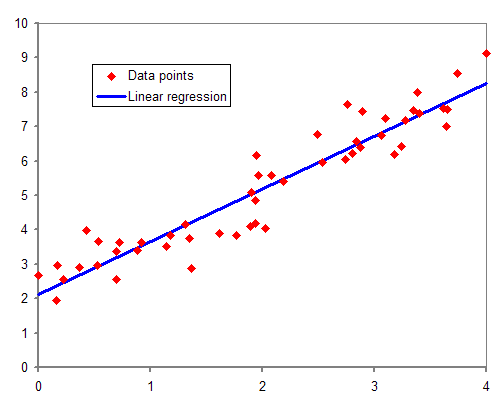

The method of least squares is a standard approach in regression analysis to approximate the solution of overdetermined systems (sets of equations in which there are more equations than unknowns) by minimizing the sum of the squares of the residuals (a residual being the difference between an observed value and the fitted value provided by a model) made in the results of each individual equation. The most important application is in data fitting. When the problem has substantial uncertainties in the independent variable (the ''x'' variable), then simple regression and least-squares methods have problems; in such cases, the methodology required for fitting errors-in-variables models may be considered instead of that for least squares. Least squares problems fall into two categories: linear or ordinary least squares and nonlinear least squares, depending on whether or not the residuals are linear in all unknowns. The linear least-squares problem occurs in statistical regressio ...
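As a small illustration of minimizing the sum of squared residuals in an overdetermined system, the sketch below fits a line to four points (four equations, two unknowns) using the closed-form solution for simple linear regression. The data values and the helper name `fit_line` are made up for the example.

```python
def fit_line(xs, ys):
    """Return (intercept, slope) minimizing the sum of squared residuals
    of the model y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def sum_sq_residuals(xs, ys, intercept, slope):
    """Sum of squared differences between observed and fitted values."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))

xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]  # illustrative data; no line passes through all four points

intercept, slope = fit_line(xs, ys)
ssr = sum_sq_residuals(xs, ys, intercept, slope)
print(intercept, slope, ssr)  # approximately 1.09, 1.94, 0.082
```

Any other choice of intercept and slope gives a larger value of `ssr`, which is the defining property of the least squares fit.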

Regressors

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question. In this sense, some common independent variables are time, space, density, mass, fluid flow rate, and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable). Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that can be attributed a value without attributing a value to any other variable is called ...

Instrumental Variables

In statistics, econometrics, epidemiology and related disciplines, the method of instrumental variables (IV) is used to estimate causal relationships when controlled experiments are not feasible or when a treatment is not successfully delivered to every unit in a randomized experiment. Intuitively, IVs are used when an explanatory variable of interest is correlated with the error term, in which case ordinary least squares and ANOVA give biased results. A valid instrument induces changes in the explanatory variable but has no independent effect on the dependent variable, allowing a researcher to uncover the causal effect of the explanatory variable on the dependent variable. Instrumental variable methods allow for consistent estimation when the explanatory variables (covariates) are correlated with the error terms in a regression model. Such correlation may occur when: (1) changes in the dependent variable change the value of at least one of the covariates ("reverse" causation), ...
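The contrast between ordinary least squares and IV can be illustrated with a short simulation. The sketch below assumes a single explanatory variable `x`, a single instrument `z`, and an unobserved confounder `c` that enters both `x` and the error term; the simple IV estimator used here is the ratio cov(z, y) / cov(z, x). The data-generating process and all names are assumptions chosen for the example.

```python
import random

def cov(a, b):
    """Sample covariance of two equal-length sequences (divisor n)."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    return sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b)) / n

rng = random.Random(1)
n = 20000
beta = 2.0  # illustrative true causal effect of x on y

z = [rng.gauss(0, 1) for _ in range(n)]  # instrument: shifts x, no direct effect on y
c = [rng.gauss(0, 1) for _ in range(n)]  # unobserved confounder
x = [zi + ci + rng.gauss(0, 1) for zi, ci in zip(z, c)]
y = [beta * xi + ci + rng.gauss(0, 1) for xi, ci in zip(x, c)]

# OLS slope: biased because x is correlated with the error term (through c).
beta_ols = cov(x, y) / cov(x, x)

# Simple IV estimator: uses only the variation in x induced by z.
beta_iv = cov(z, y) / cov(z, x)

print(beta_ols, beta_iv)
```

In this setup the OLS slope settles near 2.33 rather than 2, since cov(x, c) / var(x) = 1/3, while the IV estimate stays close to the true value.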

Two-step M-estimator

Two-step M-estimation deals with M-estimation problems that require preliminary estimation to obtain the parameter of interest. It differs from the usual M-estimation problem because the asymptotic distribution of the second-step estimator generally depends on the first-step estimator. Accounting for this change in asymptotic distribution is important for valid inference. The class of two-step M-estimators includes Heckman's sample selection estimator, weighted non-linear least squares, and ordinary least squares with generated regressors (Wooldridge, J.M., Econometric Analysis of Cross Section and Panel Data, MIT Press, Cambridge, Mass.). To fix ideas, let $\{W_i\}_{i=1}^n \subseteq R^d$ be an i.i.d. sample, and let $\Theta$ and $\Gamma$ be subsets of the Euclidean spaces $R^p$ and $R^q$, respectively. Given a function $m(\cdot,\cdot,\cdot): R^d \times \Theta \times \Gamma \rightarrow R$, the two-step M-estimator $\hat\theta$ is defined as: $\hat\theta := \arg\max_{\theta\in\Theta} \frac{1}{n}\sum_{i=1}^n m\bigl(W_i, \theta, \hat\gamma\bigr)$ ...

M-estimators

In statistics, M-estimators are a broad class of extremum estimators for which the objective function is a sample average. Both non-linear least squares and maximum likelihood estimation are special cases of M-estimators. The definition of M-estimators was motivated by robust statistics, which contributed new types of M-estimators. The statistical procedure of evaluating an M-estimator on a data set is called M-estimation. 48 samples of robust M-estimators can be found in a recent review study. More generally, an M-estimator may be defined to be a zero of an estimating function. This estimating function is often the derivative of another statistical function. For example, a maximum-likelihood estimate is the point where the derivative of the likelihood function with respect to the parameter is zero; thus, a maximum-likelihood estimator is a zero of the score function, that is, a critical point of the likelihood. In many applications, such M-estimators can be thought of as estimating characteristics of the popula ...
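To make the "zero of an estimating function" view concrete, the sketch below computes a Huber-type location estimate, a classic robust M-estimator, by iteratively reweighted averaging. It is a simplified illustration: the scale is assumed to be known and equal to 1, the tuning constant `k = 1.345` is a conventional choice, and the function names and data are invented for the example.

```python
import statistics

def huber_psi(r, k):
    """Huber estimating function: linear near zero, clipped at +/- k."""
    return max(-k, min(k, r))

def huber_location(xs, k=1.345, tol=1e-10, max_iter=200):
    """Solve sum(huber_psi(x - mu, k)) = 0 for mu by iteratively
    reweighted averaging, assuming unit scale."""
    mu = statistics.median(xs)  # robust starting value
    for _ in range(max_iter):
        # Weight is psi(r) / r: 1 for small residuals, k / |r| for large ones.
        weights = [1.0 if abs(x - mu) <= k else k / abs(x - mu) for x in xs]
        new_mu = sum(w * x for w, x in zip(weights, xs)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu

data = [9.8, 10.1, 10.0, 9.9, 10.2, 50.0]  # illustrative sample with one gross outlier
estimate = huber_location(data)
psi_sum = sum(huber_psi(x - estimate, 1.345) for x in data)

print(statistics.mean(data), estimate, psi_sum)
```

The sample mean is pulled to about 16.7 by the outlier, whereas the M-estimate stays near 10.27, and the estimating function summed over the sample is zero at the solution up to numerical tolerance.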

Regression Analysis

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ...