Focused Information Criterion

In statistics, the focused information criterion (FIC) is a method for selecting the most appropriate model among a set of competitors for a given data set. Unlike most other model selection strategies, such as the Akaike information criterion (AIC), the Bayesian information criterion (BIC) and the deviance information criterion (DIC), the FIC does not attempt to assess the overall fit of candidate models but focuses directly on the parameter of primary interest in the statistical analysis, say \mu, for which competing models lead to different estimates, say \hat\mu_j for model j. The FIC method consists of first developing an exact or approximate expression for the precision or quality of each estimator, say r_j for \hat\mu_j, and then using data to estimate these precision measures, say \hat r_j. In the end, the model with the best estimated precision is selected. The FIC methodology was developed by Gerda Claeskens and Nils Lid Hjort, first in two 2003 discussion ar ...
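The selection rule described above can be written compactly. The sketch below assumes that "precision" is measured by mean squared error, which is one common choice; the text itself only speaks of a precision or quality measure r_j, so the decomposition into bias and variance is an illustrative assumption.

```latex
% Assumed risk measure: mean squared error of the focus estimator in model j
r_j = \mathbb{E}\,(\hat\mu_j - \mu)^2
    = \bigl(\mathbb{E}\,\hat\mu_j - \mu\bigr)^2 + \operatorname{Var}(\hat\mu_j)

% Selection step: estimate each r_j from data and pick the smallest
\hat{\jmath} = \arg\min_{j} \hat r_j
```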

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

Semiparametric Model

In statistics, a semiparametric model is a statistical model that has parametric and nonparametric components. A statistical model is a parameterized family of distributions \{P_\theta : \theta \in \Theta\} indexed by a parameter \theta.
* A parametric model is a model in which the indexing parameter \theta is a vector in k-dimensional Euclidean space, for some nonnegative integer k. Thus, \theta is finite-dimensional, and \Theta \subseteq \mathbb{R}^k.
* With a nonparametric model, the set of possible values of the parameter \theta is a subset of some space V, which is not necessarily finite-dimensional. For example, we might consider the set of all distributions with mean 0. Such spaces are vector spaces with topological structure, but may not be finite-dimensional as vector spaces. Thus, \Theta \subseteq V for some possibly infinite-dimensional space V.
* With a semiparametric model, the parameter has both a finite-dimensional component and an infinite-dimensional component (often a real-valued functi ...

Cambridge University Press

Cambridge University Press is the university press of the University of Cambridge. Granted letters patent by King Henry VIII in 1534, it is the oldest university press in the world. It is also the King's Printer. Cambridge University Press is a department of the University of Cambridge and is both an academic and educational publisher. It became part of Cambridge University Press & Assessment, following a merger with Cambridge Assessment in 2021. With a global sales presence, publishing hubs, and offices in more than 40 countries, it publishes over 50,000 titles by authors from over 100 countries. Its publishing includes more than 380 academic journals, monographs, reference works, school and uni ...

Shibata Information Criterion, Train Coupler

Shibata may refer to:

Places
* Shibata, Miyagi, a town in Miyagi Prefecture
* Shibata District, Miyagi, a district in Miyagi Prefecture
* Shibata, Niigata, a city in Niigata Prefecture
** Shibata Station (Niigata), a railway station in Niigata Prefecture
* Shibata Station (Aichi), a railway station in Aichi Prefecture

Other uses
* Shibata (surname), a Japanese surname
* Shibata clan, Japanese clan originating in the 12th century
* Shibata coupler, a railway coupling (a mechanism typically placed at each end of a railway vehicle that connects them together to form a train) ...

Hannan–Quinn Information Criterion

In statistics, the Hannan–Quinn information criterion (HQC) is a criterion for model selection. It is an alternative to the Akaike information criterion (AIC) and the Bayesian information criterion (BIC). It is given as \mathrm{HQC} = -2 L_{\max} + 2 k \ln(\ln(n)), where L_{\max} is the log-likelihood, k is the number of parameters, and n is the number of observations. Burnham & Anderson (2002, p. 287) say that HQC, "while often cited, seems to have seen little use in practice". They also note that HQC, like BIC, but unlike AIC, is not an estimator of Kullback–Leibler divergence. Claeskens & Hjort (2008, ch. 4) note that HQC, like BIC, but unlike AIC, is not asymptotically efficient; however, it misses the optimal estimation rate by a very small \ln(\ln(n)) factor. They further point out that whatever method is being used for fine-tuning the criterion will be more important in practice than the term \ln(\ln(n)), since this latter number is small even for very large n; h ...
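The HQC formula above is straightforward to evaluate next to its two alternatives. The following is a minimal sketch: the function name `information_criteria` and the example numbers are made up for illustration, and the AIC and BIC expressions are the standard textbook forms rather than anything stated in this entry.

```python
import math


def information_criteria(log_lik: float, k: int, n: int) -> dict:
    """Return AIC, BIC and HQC for a fitted model.

    log_lik is the maximized log-likelihood, k the number of parameters
    and n the number of observations.
    """
    if n < 3:
        # ln(ln(n)) is only positive for n >= 3.
        raise ValueError("HQC penalty needs n >= 3")
    return {
        "AIC": -2.0 * log_lik + 2.0 * k,
        "BIC": -2.0 * log_lik + k * math.log(n),
        "HQC": -2.0 * log_lik + 2.0 * k * math.log(math.log(n)),
    }


# Hypothetical fit: log-likelihood -120.5, 3 parameters, 200 observations.
crit = information_criteria(log_lik=-120.5, k=3, n=200)
for name, value in crit.items():
    print(f"{name}: {value:.3f}")
```

For this sample size the HQC penalty per parameter, 2 ln(ln(n)), falls between the AIC penalty of 2 and the BIC penalty of ln(n), which illustrates how slowly the ln(ln(n)) term grows.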

Deviance Information Criterion

The deviance information criterion (DIC) is a hierarchical modeling generalization of the Akaike information criterion (AIC). It is particularly useful in Bayesian model selection problems where the posterior distributions of the models have been obtained by Markov chain Monte Carlo (MCMC) simulation. DIC is an asymptotic approximation as the sample size becomes large, like AIC. It is only valid when the posterior distribution is approximately multivariate normal.

Definition

Define the deviance as D(\theta) = -2 \log(p(y \mid \theta)) + C, where y are the data, \theta are the unknown parameters of the model and p(y \mid \theta) is the likelihood function. C is a constant that cancels out in all calculations that compare different models, and which therefore does not need to be known. There are two calculations in common usage for the effective number of parameters of the model. The first is p_D = \overline{D(\theta)} - D(\bar{\theta}), where \bar{\theta} is the expectation of \theta. The second, as ...
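The first effective-parameter calculation can be illustrated with posterior draws. This is a minimal sketch under stated assumptions: the model is a normal mean with known unit variance, the "MCMC output" is simulated directly from the known posterior under a flat prior, C is taken as 0, and the final combination DIC = D(\bar{\theta}) + 2 p_D is the usual definition, which lies beyond the truncated text above. The function names are hypothetical.

```python
import math
import random


def deviance(theta, y, sigma=1.0):
    """D(theta) = -2 * log-likelihood for y_i ~ Normal(theta, sigma^2), with C = 0."""
    log_lik = sum(
        -0.5 * math.log(2.0 * math.pi * sigma ** 2)
        - 0.5 * ((yi - theta) / sigma) ** 2
        for yi in y
    )
    return -2.0 * log_lik


def dic(theta_samples, y):
    """Return (p_D, DIC) computed from posterior draws of theta."""
    mean_dev = sum(deviance(t, y) for t in theta_samples) / len(theta_samples)
    theta_bar = sum(theta_samples) / len(theta_samples)
    dev_at_mean = deviance(theta_bar, y)
    p_d = mean_dev - dev_at_mean          # posterior mean deviance minus deviance at the mean
    return p_d, dev_at_mean + 2.0 * p_d   # assumed standard form of DIC


rng = random.Random(0)
y = [rng.gauss(2.0, 1.0) for _ in range(50)]
# Stand-in for MCMC output: with a flat prior the posterior is Normal(mean(y), 1/n).
y_bar = sum(y) / len(y)
draws = [rng.gauss(y_bar, 1.0 / math.sqrt(len(y))) for _ in range(5000)]
p_d, dic_value = dic(draws, y)
print(f"p_D = {p_d:.3f}, DIC = {dic_value:.3f}")
```

In this one-parameter example p_D should come out close to 1, matching the intuition that it counts the effective number of parameters.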

Proportional Hazards Models

Proportional hazards models are a class of survival models in statistics. Survival models relate the time that passes, before some event occurs, to one or more covariates that may be associated with that quantity of time. In a proportional hazards model, the unique effect of a unit increase in a covariate is multiplicative with respect to the hazard rate. For example, taking a drug may halve one's hazard rate for a stroke occurring, or, changing the material from which a manufactured component is constructed may double its hazard rate for failure. Other types of survival models such as accelerated failure time models do not exhibit proportional hazards. The accelerated failure time model describes a situation where the biological or mechanical life history of an event is accelerated (or decelerated).

Background

Survival models can be viewed as consisting of two parts: the underlying baseline hazard function, often denoted \lambda_0(t), describing how the risk of event per time ...
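The multiplicative effect of a covariate can be made concrete with a small sketch. It assumes the common exponential form \lambda(t \mid x) = \lambda_0(t) \exp(\beta x) with a single covariate; the baseline hazard and the coefficient are invented for illustration.

```python
import math


def hazard(t, x, beta, baseline):
    """Hazard at time t for covariate value x: lambda_0(t) * exp(beta * x)."""
    return baseline(t) * math.exp(beta * x)


def baseline(t):
    """Hypothetical baseline hazard that increases linearly with time."""
    return 0.01 * t


beta = math.log(0.5)  # coefficient chosen so that x = 1 (drug taken) halves the hazard

# The ratio of hazards does not depend on t, which is the proportionality property.
ratio_early = hazard(1.0, 1, beta, baseline) / hazard(1.0, 0, beta, baseline)
ratio_late = hazard(20.0, 1, beta, baseline) / hazard(20.0, 0, beta, baseline)
print(ratio_early, ratio_late)
```

Because the baseline cancels in the ratio, the same factor exp(beta) applies at every time point, whatever shape \lambda_0(t) has.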

Generalized Linear Model

In statistics, a generalized linear model (GLM) is a flexible generalization of ordinary linear regression. The GLM generalizes linear regression by allowing the linear model to be related to the response variable via a ''link function'' and by allowing the magnitude of the variance of each measurement to be a function of its predicted value. Generalized linear models were formulated by John Nelder and Robert Wedderburn as a way of unifying various other statistical models, including linear regression, logistic regression and Poisson regression. They proposed an iteratively reweighted least squares method for maximum likelihood estimation (MLE) of the model parameters. MLE remains popular and is the default method on many statistical computing packages. Other approaches, including Bayesian regression and least squares fitting to variance stabilized responses, have been developed.

Intuition

Ordinary linear regression predicts the expected value of a given unknown quantity ...
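As an illustration of iteratively reweighted least squares, the sketch below fits a Poisson regression with a log link and a single covariate, using only the standard library. It is a simplified teaching version, not the procedure of any particular package: the function name `fit_poisson_irls`, the fixed iteration count and the toy data are all assumptions.

```python
import math


def fit_poisson_irls(x, y, n_iter=25):
    """Fit log E[y] = b0 + b1 * x by iteratively reweighted least squares."""
    b0, b1 = math.log(sum(y) / len(y)), 0.0  # start from the intercept-only fit
    for _ in range(n_iter):
        eta = [b0 + b1 * xi for xi in x]
        mu = [math.exp(e) for e in eta]
        # Working response and weights for the log link with Poisson variance.
        z = [e + (yi - m) / m for e, yi, m in zip(eta, y, mu)]
        w = mu
        # Solve the 2x2 weighted normal equations (X'WX) b = X'Wz.
        s_w = sum(w)
        s_wx = sum(wi * xi for wi, xi in zip(w, x))
        s_wxx = sum(wi * xi * xi for wi, xi in zip(w, x))
        s_wz = sum(wi * zi for wi, zi in zip(w, z))
        s_wxz = sum(wi * xi * zi for wi, xi, zi in zip(w, x, z))
        det = s_w * s_wxx - s_wx ** 2
        b0 = (s_wxx * s_wz - s_wx * s_wxz) / det
        b1 = (s_w * s_wxz - s_wx * s_wz) / det
    return b0, b1


# Toy counts that roughly follow exp(x).
x = [0.0, 1.0, 2.0, 3.0, 4.0]
y = [1, 3, 7, 20, 55]
b0, b1 = fit_poisson_irls(x, y)
print(f"intercept = {b0:.3f}, slope = {b1:.3f}")
```

At the maximum likelihood estimate the residuals y - mu sum to zero, both unweighted and weighted by x, which gives a simple convergence check for this model.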

Covariate

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question. In this sense, some common independent variables are time, space, density, mass, fluid flow rate, and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable). Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that can be attributed a value without attributing a value to any other variable is called an ind ...

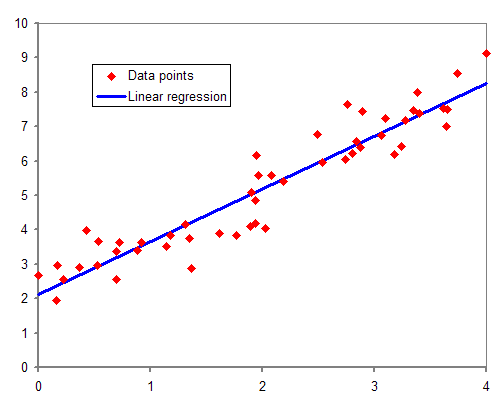

Regression Analysis

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ...
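For a single predictor, the ordinary least squares line has a closed form. The sketch below computes it with the standard library only; the function name `ols_line` and the data points are made up for illustration.

```python
def ols_line(x, y):
    """Return (intercept, slope) of the line minimizing the sum of squared residuals."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Noisy observations scattered around y = 1 + 2x.
x = [1.0, 2.0, 3.0, 4.0]
y = [2.9, 5.2, 6.8, 9.1]
intercept, slope = ols_line(x, y)
print(f"y = {intercept:.2f} + {slope:.2f} x")
```

The fitted value intercept + slope * x0 then serves as the estimate of the conditional expectation of the dependent variable at x = x0.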

Sample Size

Sample size determination is the act of choosing the number of observations or replicates to include in a statistical sample. The sample size is an important feature of any empirical study in which the goal is to make inferences about a population from a sample. In practice, the sample size used in a study is usually determined based on the cost, time, or convenience of collecting the data, and the need for it to offer sufficient statistical power. In complicated studies there may be several different sample sizes: for example, in a stratified survey there would be different sizes for each stratum. In a census, data is sought for an entire population, hence the intended sample size is equal to the population. In experimental design, where a study may be divided into different treatment groups, there may be different sample sizes for each group. Sample sizes may be chosen in ...
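To show how a power requirement translates into a sample size, the sketch below uses the normal-approximation formula for comparing two group means with a two-sided test and known common standard deviation. The scenario, the function name `n_per_group` and the default values for significance level and power are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Observations needed per group to detect a mean difference delta.

    Normal approximation for a two-sided, two-sample comparison of means
    with common standard deviation sigma.
    """
    z = NormalDist().inv_cdf
    n = 2.0 * ((z(1.0 - alpha / 2.0) + z(power)) * sigma / delta) ** 2
    return math.ceil(n)


n = n_per_group(delta=0.5, sigma=1.0)
n_smaller_effect = n_per_group(delta=0.25, sigma=1.0)
print(n, n_smaller_effect)
```

Halving the difference to be detected roughly quadruples the required sample size, which is why the target effect size tends to dominate such calculations.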

Non-parametric Statistics

Nonparametric statistics is the branch of statistics that is not based solely on parametrized families of probability distributions (common examples of parameters are the mean and variance). Nonparametric statistics is based on either being distribution-free or having a specified distribution but with the distribution's parameters unspecified. Nonparametric statistics includes both descriptive statistics and statistical inference. Nonparametric tests are often used when the assumptions of parametric tests are violated.

Definitions

The term "nonparametric statistics" has been imprecisely defined in the following two ways, among others:

Applications and purpose

Non-parametric methods are widely used for studying populations that take on a ranked order (such as movie reviews receiving one to four stars). The use of non-parametric methods may be necessary when data have a ranking but no clear numerical interpretation, such as when assessing preferences. In terms of levels of me ...