
Fisher-Snedecor Distribution

In probability theory and statistics, the ''F''-distribution or ''F''-ratio, also known as Snedecor's ''F'' distribution or the Fisher–Snedecor distribution (after Ronald Fisher and George W. Snedecor), is a continuous probability distribution that arises frequently as the null distribution of a test statistic, most notably in the analysis of variance (ANOVA) and other ''F''-tests.

Definition: The ''F''-distribution with d_1 and d_2 degrees of freedom is the distribution of

: X = \frac{S_1/d_1}{S_2/d_2}

where S_1 and S_2 are independent random variables with chi-square distributions with respective degrees of freedom d_1 and d_2. It follows that the probability density function (pdf) of ''X'' is

: \begin{aligned} f(x; d_1,d_2) &= \frac{\sqrt{\dfrac{(d_1 x)^{d_1}\, d_2^{d_2}}{(d_1 x+d_2)^{d_1+d_2}}}}{x\, \mathrm{B}\!\left(\frac{d_1}{2},\frac{d_2}{2}\right)} \\[5pt] &= \frac{1}{\mathrm{B}\!\left(\frac{d_1}{2},\frac{d_2}{2}\right)} \left(\frac{d_1}{d_2}\right)^{d_1/2} x^{d_1/2 - 1} \left(1+\frac{d_1}{d_2}\, x \right)^{-(d_1+d_2)/2} \end{aligned}

for real ''x'' > 0. Here \mathrm{B} is the beta function. In many applications, the parameters d_1 and d_2 are positive integers, but the distribution is well-def ...
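The definition and the density can be checked against each other numerically. The following is a minimal sketch, not a reference implementation: it assumes the standard density f(x; d_1, d_2) = B(d_1/2, d_2/2)^{-1} (d_1/d_2)^{d_1/2} x^{d_1/2 - 1} (1 + d_1 x/d_2)^{-(d_1+d_2)/2}, and it uses the fact that a chi-squared variate with k degrees of freedom is a gamma variate with shape k/2 and scale 2. All function names are illustrative.

```python
import math
import random


def beta_fn(a, b):
    """Beta function B(a, b), computed through log-gamma for stability."""
    return math.exp(math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b))


def f_pdf(x, d1, d2):
    """F density for x > 0, written in the power form."""
    b = beta_fn(d1 / 2, d2 / 2)
    return ((d1 / d2) ** (d1 / 2) * x ** (d1 / 2 - 1)
            * (1 + d1 * x / d2) ** (-(d1 + d2) / 2) / b)


def f_pdf_sqrt_form(x, d1, d2):
    """The same density written in the square-root form."""
    ratio = (d1 * x) ** d1 * d2 ** d2 / (d1 * x + d2) ** (d1 + d2)
    return math.sqrt(ratio) / (x * beta_fn(d1 / 2, d2 / 2))


def sample_f(d1, d2, rng):
    """Draw X = (S1/d1) / (S2/d2) with S1, S2 independent chi-squared variates."""
    s1 = rng.gammavariate(d1 / 2, 2.0)  # chi-squared with d1 degrees of freedom
    s2 = rng.gammavariate(d2 / 2, 2.0)  # chi-squared with d2 degrees of freedom
    return (s1 / d1) / (s2 / d2)


def cdf_by_midpoint(x, d1, d2, steps=4000):
    """Approximate P(X <= x) by midpoint-rule integration of the density."""
    h = x / steps
    return h * sum(f_pdf((i + 0.5) * h, d1, d2) for i in range(steps))


def empirical_cdf(x, d1, d2, n=20000, seed=0):
    """Fraction of simulated ratios that fall at or below x."""
    rng = random.Random(seed)
    return sum(sample_f(d1, d2, rng) <= x for _ in range(n)) / n
```

For example, `cdf_by_midpoint(1.0, 5, 10)` and `empirical_cdf(1.0, 5, 10)` should agree to within simulation error, which ties the ratio-of-chi-squares definition to the density.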

F-statistics

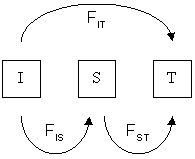

In population genetics, ''F''-statistics (also known as fixation indices) describe the statistically expected level of heterozygosity in a population; more specifically the expected degree of (usually) a reduction in heterozygosity when compared to Hardy–Weinberg expectation. ''F''-statistics can also be thought of as a measure of the correlation between genes drawn at different levels of a (hierarchically) subdivided population. This correlation is influenced by several evolutionary processes, such as genetic drift, founder effect, bottleneck, genetic hitchhiking, meiotic drive, mutation, gene flow, inbreeding, natural selection, or the Wahlund effect, but it was originally designed to measure the amount of allelic fixation owing to genetic drift. The concept of ''F''-statistics was developed during the 1920s by the American geneticist Sewall Wright, who was interested in inbreeding in cattle. However, because complete dominance causes the phenotypes of homozygote dominants ...

Beta Function

In mathematics, the beta function, also called the Euler integral of the first kind, is a special function that is closely related to the gamma function and to binomial coefficients. It is defined by the integral

: \Beta(z_1,z_2) = \int_0^1 t^{z_1-1}(1-t)^{z_2-1}\,dt

for complex number inputs z_1, z_2 such that \Re(z_1), \Re(z_2)>0. The beta function was studied by Leonhard Euler and Adrien-Marie Legendre and was given its name by Jacques Binet; its symbol is a Greek capital beta.

Properties: The beta function is symmetric, meaning that \Beta(z_1,z_2) = \Beta(z_2,z_1) for all inputs z_1 and z_2 (Davis 1972, 6.2.2, p. 258). A key property of the beta function is its close relationship to the gamma function:

: \Beta(z_1,z_2)=\frac{\Gamma(z_1)\,\Gamma(z_2)}{\Gamma(z_1+z_2)}.

The beta function is also closely related to binomial coefficients. When m (or n, by symmetry) is a positive integer, it follows from the definition of the gamma function that (Davis 1972, 6.2.1, p. 258)

: \Beta(m,n) = \frac{(m-1)!\,(n-1)!}{(m+n-1)!} = ...
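The integral definition and the gamma-function identity can be compared numerically. The sketch below is restricted to real, positive arguments (an assumption made for simplicity; the definition itself allows complex inputs) and uses a plain midpoint rule, so it is only meant as a sanity check.

```python
import math


def beta_gamma(z1, z2):
    """Beta function through the identity B = Gamma(z1) Gamma(z2) / Gamma(z1 + z2)."""
    return math.gamma(z1) * math.gamma(z2) / math.gamma(z1 + z2)


def beta_integral(z1, z2, steps=20000):
    """Midpoint-rule approximation of the defining integral over (0, 1)."""
    h = 1.0 / steps
    total = 0.0
    for i in range(steps):
        t = (i + 0.5) * h
        total += t ** (z1 - 1) * (1 - t) ** (z2 - 1)
    return total * h
```

With integer arguments the gamma form reduces to factorials, for instance `beta_gamma(2, 3)` equals 1! 2! / 4! = 1/12.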

Cochran's Theorem

In statistics, Cochran's theorem, devised by William G. Cochran, is a theorem used to justify results relating to the probability distributions of statistics that are used in the analysis of variance.

Statement: Let U_1, ..., U_N be i.i.d. standard normally distributed random variables, and U = [U_1, ..., U_N]^T. Let B^{(1)}, B^{(2)}, \ldots, B^{(k)} be symmetric matrices. Define r_i to be the rank of B^{(i)}. Define Q_i = U^T B^{(i)} U, so that the Q_i are quadratic forms. Further assume \sum_i Q_i = U^T U. Cochran's theorem states that the following are equivalent:

* r_1+\cdots +r_k=N,
* the Q_i are independent,
* each Q_i has a chi-squared distribution with r_i degrees of freedom.

The theorem is often stated with \sum_i A_i = A, where A is idempotent, and with \sum_i r_i = N replaced by \sum_i r_i = \operatorname{rank}(A). But after an orthogonal transform, A = \operatorname{diag}(I_M, 0), and so we reduc ...
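A common illustration, chosen here as an assumption rather than taken from the text, uses k = 2 with B^{(1)} = (1/N) 1 1^T (rank 1) and B^{(2)} = I - B^{(1)} (rank N - 1). Then Q_1 = N \bar{U}^2 and Q_2 = \sum_i (U_i - \bar{U})^2, and the ranks sum to N. The sketch checks the identity Q_1 + Q_2 = U^T U and compares simulated averages with the chi-squared means 1 and N - 1.

```python
import random


def decompose(u):
    """Split U^T U into the two quadratic forms Q1 and Q2."""
    n = len(u)
    mean = sum(u) / n
    q1 = n * mean ** 2                    # U^T B1 U, B1 = (1/N) * all-ones matrix
    q2 = sum((x - mean) ** 2 for x in u)  # U^T B2 U, B2 = I - B1
    return q1, q2


def average_forms(n=5, trials=20000, seed=1):
    """Average Q1 and Q2 over repeated draws of N i.i.d. standard normals."""
    rng = random.Random(seed)
    total1 = total2 = 0.0
    for _ in range(trials):
        u = [rng.gauss(0.0, 1.0) for _ in range(n)]
        q1, q2 = decompose(u)
        total1 += q1
        total2 += q2
    return total1 / trials, total2 / trials
```

This only checks first moments; it does not by itself demonstrate independence of Q_1 and Q_2.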

Statistical Independence

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independence ...
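The gap between pairwise and mutual independence shows up in a small enumerated example. The setup below is an illustrative assumption: two fair coin flips, with events A (first flip is heads), B (second flip is heads), and C (the two flips differ). Exact fractions are used so that the comparisons are not affected by rounding.

```python
from fractions import Fraction
from itertools import product

# Sample space: two fair coin flips, all four outcomes equally likely.
OUTCOMES = list(product([0, 1], repeat=2))


def prob(event):
    """Exact probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for o in OUTCOMES if event(o)), len(OUTCOMES))


def a(o):
    return o[0] == 1      # first flip is heads


def b(o):
    return o[1] == 1      # second flip is heads


def c(o):
    return o[0] != o[1]   # the two flips differ


def is_pairwise_independent(events):
    """Check P(E and F) == P(E) P(F) for every pair of distinct events."""
    return all(
        prob(lambda o, e=e, f=f: e(o) and f(o)) == prob(e) * prob(f)
        for i, e in enumerate(events)
        for f in events[i + 1:]
    )


def triple_factorizes(e, f, g):
    """Check P(E and F and G) == P(E) P(F) P(G)."""
    joint = prob(lambda o: e(o) and f(o) and g(o))
    return joint == prob(e) * prob(f) * prob(g)
```

Here every pair factorizes, but A, B, and C cannot all occur together, so the triple probability is 0 rather than 1/8 and the events are not mutually independent.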

Degrees Of Freedom (statistics)

In statistics, the number of degrees of freedom is the number of values in the final calculation of a statistic that are free to vary. Estimates of statistical parameters can be based upon different amounts of information or data. The number of independent pieces of information that go into the estimate of a parameter is called the degrees of freedom. In general, the degrees of freedom of an estimate of a parameter are equal to the number of independent scores that go into the estimate minus the number of parameters used as intermediate steps in the estimation of the parameter itself. For example, if the variance is to be estimated from a random sample of ''N'' independent scores, then the degrees of freedom is equal to the number of independent scores (''N'') minus the number of parameters estimated as intermediate steps (one, namely, the sample mean) and is therefore equal to ''N'' − 1. Mathematically, degrees of freedom is the number of dimensions of the domain o ...
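The variance example can be made concrete. In the sketch below (names are illustrative), the deviations from the sample mean always sum to zero, so only N - 1 of them are free to vary, which is why the sum of squares is divided by N - 1. The result is compared with `statistics.variance` from the Python standard library, which uses the same divisor.

```python
import statistics


def sample_variance(scores):
    """Return the variance estimate with N - 1 degrees of freedom and the deviations."""
    n = len(scores)
    mean = sum(scores) / n                   # one parameter estimated along the way
    deviations = [x - mean for x in scores]  # constrained to sum to zero
    variance = sum(d * d for d in deviations) / (n - 1)
    return variance, deviations


scores = [2.0, 4.0, 4.0, 5.0, 7.0, 8.0]
variance, deviations = sample_variance(scores)
library_variance = statistics.variance(scores)
```

For these six scores the mean is 5, the squared deviations sum to 24, and both computations give 24 / 5 = 4.8.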

Chi-squared Distribution

In probability theory and statistics, the chi-squared distribution (also chi-square or \chi^2-distribution) with k degrees of freedom is the distribution of a sum of the squares of k independent standard normal random variables. The chi-squared distribution is a special case of the gamma distribution and is one of the most widely used probability distributions in inferential statistics, notably in hypothesis testing and in construction of confidence intervals. This distribution is sometimes called the central chi-squared distribution, a special case of the more general noncentral chi-squared distribution. The chi-squared distribution is used in the common chi-squared tests for goodness of fit of an observed distribution to a theoretical one, the independence of two criteria of classification of qualitative data, and in confidence interval estimation for a population standard deviation of a normal distribution from a sample standard deviation. Many other statistical tests a ...
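Both descriptions of the distribution can be simulated side by side. The sketch assumes the usual parametrization in which a chi-squared variate with k degrees of freedom is a gamma variate with shape k/2 and scale 2, and it compares only sample means (which should be close to k), so it is a rough consistency check rather than a test of the full distribution.

```python
import random


def chi2_from_normals(k, rng):
    """Sum of the squares of k independent standard normal draws."""
    return sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))


def chi2_from_gamma(k, rng):
    """The same distribution drawn as a gamma variate (shape k/2, scale 2)."""
    return rng.gammavariate(k / 2, 2.0)


def sample_mean(draw, k, n=20000, seed=2):
    """Average of n draws produced by the given sampler."""
    rng = random.Random(seed)
    return sum(draw(k, rng) for _ in range(n)) / n
```

For k = 3, `sample_mean(chi2_from_normals, 3)` and `sample_mean(chi2_from_gamma, 3)` should both land near 3.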

Random Variate

In probability and statistics, a random variate or simply variate is a particular outcome of a ''random variable''; other random variates drawn from the same random variable may have different values (random numbers). A random deviate or simply deviate is the difference between a random variate and the distribution's central location (e.g., the mean), often divided by the standard deviation of the distribution (i.e., as a standard score). Random variates are used when simulating processes driven by random influences (stochastic processes). In modern applications, such simulations would derive random variates corresponding to any given probability distribution from computer procedures designed to create random variates corresponding to a uniform distribution, where these procedures would actually provide values chosen from a uniform distribution of pseudorandom numbers. Procedures to generate random variates corresponding to a given distribution are known as p ...
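One way to turn uniform pseudorandom numbers into variates of another distribution is inverse transform sampling. The sketch below picks the exponential distribution as an example (an assumption for illustration; the text does not single out any distribution), whose cumulative distribution function 1 - exp(-rate * x) can be inverted in closed form.

```python
import math
import random


def exponential_from_uniform(u, rate):
    """Map a uniform value u in [0, 1) to an exponential variate by inverting the CDF."""
    return -math.log(1.0 - u) / rate


def draw_exponential(rate, n, seed=3):
    """Generate n exponential variates from uniform pseudorandom numbers."""
    rng = random.Random(seed)
    return [exponential_from_uniform(rng.random(), rate) for _ in range(n)]
```

The uniform value 0.5 maps to the median of the target distribution, ln(2) / rate, and the sample mean of many draws should be close to 1 / rate.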

Confluent Hypergeometric Function

In mathematics, a confluent hypergeometric function is a solution of a confluent hypergeometric equation, which is a degenerate form of a hypergeometric differential equation where two of the three regular singularities merge into an irregular singularity. The term ''confluent'' refers to the merging of singular points of families of differential equations; ''confluere'' is Latin for "to flow together". There are several common standard forms of confluent hypergeometric functions:

* Kummer's (confluent hypergeometric) function M(a, b, z), introduced by Kummer, is a solution to Kummer's differential equation. This is also known as the confluent hypergeometric function of the first kind. There is a different and unrelated Kummer's function bearing the same name.
* Tricomi's (confluent hypergeometric) function U(a, b, z), introduced by Tricomi and sometimes denoted by Ψ(a; b; z), is another solution to Kummer's equation. This is also known as the confluent hypergeometric function of the second kind.
* Whittaker functions (for ...

Biometrika

''Biometrika'' is a peer-reviewed scientific journal published by Oxford University Press for the Biometrika Trust. The editor-in-chief is Paul Fearnhead (Lancaster University). The principal focus of this journal is theoretical statistics. It was established in 1901 and originally appeared quarterly. It changed to three issues per year in 1977 but returned to quarterly publication in 1992.

History: ''Biometrika'' was established in 1901 by Francis Galton, Karl Pearson, and Raphael Weldon to promote the study of biometrics. The history of ''Biometrika'' is covered by Cox (2001). The name of the journal was chosen by Pearson, but Francis Edgeworth insisted that it be spelt with a "k" and not a "c". Since the 1930s, it has been a journal for statistical theory and methodology. Galton's role in the journal was essentially that of a patron; the journal was run by Pearson and Weldon and, after Weldon's death in 1906, by Pearson alone until he died in 1936. In the early days, the American ...

Characteristic Function (probability Theory)

In probability theory and statistics, the characteristic function of any real-valued random variable completely defines its probability distribution. If a random variable admits a probability density function, then the characteristic function is the Fourier transform of the probability density function. Thus it provides an alternative route to analytical results compared with working directly with probability density functions or cumulative distribution functions. There are particularly simple results for the characteristic functions of distributions defined by the weighted sums of random variables. In addition to univariate distributions, characteristic functions can be defined for vector- or matrix-valued random variables, and can also be extended to more generic cases. The characteristic function always exists when treated as a function of a real-valued argument, unlike the moment-generating function. There are relations between the behavior of the characteristic function of a ...
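The Fourier-transform relationship can be checked numerically for a density with a known characteristic function. The sketch below assumes the convention φ(t) = E[exp(itX)] and uses the standard normal density, whose characteristic function is exp(-t²/2). The integration limits and step count are arbitrary choices that happen to be ample for this density.

```python
import cmath
import math


def normal_pdf(x):
    """Standard normal probability density function."""
    return math.exp(-x * x / 2.0) / math.sqrt(2.0 * math.pi)


def characteristic_function(pdf, t, lo=-12.0, hi=12.0, steps=24000):
    """Midpoint-rule approximation of the integral of exp(i t x) pdf(x) dx."""
    h = (hi - lo) / steps
    total = 0j
    for i in range(steps):
        x = lo + (i + 0.5) * h
        total += cmath.exp(1j * t * x) * pdf(x)
    return total * h
```

At t = 0 the result is the total probability, 1; at t = 1 it should be close to exp(-1/2), with a negligible imaginary part because the density is symmetric.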

Beta Prime Distribution

In probability theory and statistics, the beta prime distribution (also known as inverted beta distribution or beta distribution of the second kind; Johnson et al. (1995), p. 248) is an absolutely continuous probability distribution.

Definitions: The beta prime distribution is defined for x > 0 with two parameters ''α'' and ''β'', having the probability density function

: f(x) = \frac{x^{\alpha-1}(1+x)^{-\alpha-\beta}}{B(\alpha,\beta)}

where ''B'' is the beta function. The cumulative distribution function is

: F(x; \alpha,\beta)=I_{\frac{x}{1+x}}\left(\alpha, \beta \right),

where ''I'' is the regularized incomplete beta function. The expected value, variance, and other details of the distribution are given in the sidebox; for \beta>4, the excess kurtosis is

: \gamma_2 = 6\,\frac{\alpha(\alpha+\beta-1)(5\beta-11) + (\beta-1)^2(\beta-2)}{\alpha(\alpha+\beta-1)(\beta-3)(\beta-4)}.

While the related beta distribution is the conjugate prior distribution of the parameter of a Bernoulli distribution expressed as a probability, the beta prime distribution is the conjugate prior distribution of the parameter of a Bernoulli distribution expressed i ...
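The density and the cumulative distribution function can be cross-checked numerically. The sketch below assumes the density f(x) = x^{α-1} (1+x)^{-α-β} / B(α, β) and the CDF F(x) = I_{x/(1+x)}(α, β), and it evaluates both sides with a simple midpoint rule. It is restricted to α ≥ 1 so that the integrands stay bounded near zero; the helper names are illustrative.

```python
import math


def beta_fn(a, b):
    """Beta function via the gamma function."""
    return math.gamma(a) * math.gamma(b) / math.gamma(a + b)


def midpoint(f, lo, hi, steps=20000):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    h = (hi - lo) / steps
    return h * sum(f(lo + (i + 0.5) * h) for i in range(steps))


def beta_prime_pdf(x, alpha, beta):
    """Beta prime density for x > 0."""
    return x ** (alpha - 1) * (1 + x) ** (-alpha - beta) / beta_fn(alpha, beta)


def beta_prime_cdf(x, alpha, beta):
    """CDF obtained by integrating the density from 0 to x."""
    return midpoint(lambda t: beta_prime_pdf(t, alpha, beta), 0.0, x)


def regularized_incomplete_beta(y, a, b):
    """I_y(a, b): the incomplete beta integral on [0, y] divided by B(a, b)."""
    return midpoint(lambda t: t ** (a - 1) * (1 - t) ** (b - 1), 0.0, y) / beta_fn(a, b)
```

For α = β = 1 the density reduces to (1+x)^{-2} and the CDF to x / (1+x), so `beta_prime_cdf(1.0, 1, 1)` should be close to 0.5.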

Excess Kurtosis

In probability theory and statistics, kurtosis (from Greek κυρτός, ''kyrtos'' or ''kurtos'', meaning "curved, arching") is a measure of the "tailedness" of the probability distribution of a real-valued random variable. Like skewness, kurtosis describes a particular aspect of a probability distribution. There are different ways to quantify kurtosis for a theoretical distribution, and there are corresponding ways of estimating it using a sample from a population. Different measures of kurtosis may have different interpretations. The standard measure of a distribution's kurtosis, originating with Karl Pearson, is a scaled version of the fourth moment of the distribution. This number is related to the tails of the distribution, not its peak; hence, the sometimes-seen characterization of kurtosis as "peakedness" is incorrect. For this measure, higher kurtosis corresponds to greater extremity of deviations (or outliers), and not the configuration of data near the mean. It is co ...
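Pearson's measure can be computed directly from central moments. The sketch below uses the simple moment-based version (the fourth central moment divided by the squared second central moment, both with divisor n) and subtracts 3 to obtain excess kurtosis. This is one of several sample estimators, chosen here for clarity rather than for its bias properties.

```python
def excess_kurtosis(data):
    """Moment-based excess kurtosis: m4 / m2**2 - 3, using divisor n for both moments."""
    n = len(data)
    mean = sum(data) / n
    m2 = sum((x - mean) ** 2 for x in data) / n  # second central moment
    m4 = sum((x - mean) ** 4 for x in data) / n  # fourth central moment
    return m4 / m2 ** 2 - 3.0
```

A symmetric two-point sample such as `[-1.0, 1.0]` has m2 = m4 = 1 and therefore excess kurtosis -2, the smallest possible value; samples with occasional extreme deviations give larger values.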