|

Freedman's Paradox

In statistical analysis, Freedman's paradox, named after David Freedman, is a problem in model selection whereby predictor variables with no relationship to the dependent variable can pass tests of significance – both individually via a t-test, and jointly via an F-test for the significance of the regression. Freedman demonstrated (through simulation and asymptotic calculation) that this is a common occurrence when the number of variables is similar to the number of data points. Specifically, if the dependent variable and ''k'' regressors are independent normal variables, and there are ''n'' observations, then as ''k'' and ''n'' jointly go to infinity in the ratio ''k''/''n''=''ρ'', # the ''R''2 goes to ''ρ'', # the F-statistic for the overall regression goes to 1.0, and # the number of spuriously significant regressors goes to ''αk'' where α is the chosen critical probability (probability of Type I error for a regressor). This third result is intuitive because it says tha ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Analysis

Statistical inference is the process of using data analysis to infer properties of an underlying distribution of probability.Upton, G., Cook, I. (2008) ''Oxford Dictionary of Statistics'', OUP. . Inferential statistical analysis infers properties of a population, for example by testing hypotheses and deriving estimates. It is assumed that the observed data set is sampled from a larger population. Inferential statistics can be contrasted with descriptive statistics. Descriptive statistics is solely concerned with properties of the observed data, and it does not rest on the assumption that the data come from a larger population. In machine learning, the term ''inference'' is sometimes used instead to mean "make a prediction, by evaluating an already trained model"; in this context inferring properties of the model is referred to as ''training'' or ''learning'' (rather than ''inference''), and using a model for prediction is referred to as ''inference'' (instead of ''prediction''); ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

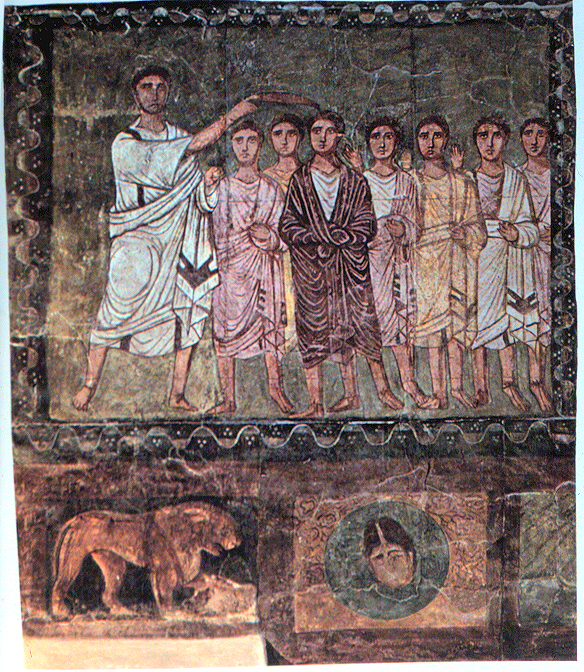

David A

David (; , "beloved one") (traditional spelling), , ''Dāwūd''; grc-koi, Δαυΐδ, Dauíd; la, Davidus, David; gez , ዳዊት, ''Dawit''; xcl, Դաւիթ, ''Dawitʿ''; cu, Давíдъ, ''Davidŭ''; possibly meaning "beloved one". was, according to the Hebrew Bible, the third king of the United Kingdom of Israel. In the Books of Samuel, he is described as a young shepherd and harpist who gains fame by slaying Goliath, a champion of the Philistines, in southern Canaan. David becomes a favourite of Saul, the first king of Israel; he also forges a notably close friendship with Jonathan, a son of Saul. However, under the paranoia that David is seeking to usurp the throne, Saul attempts to kill David, forcing the latter to go into hiding and effectively operate as a fugitive for several years. After Saul and Jonathan are both killed in battle against the Philistines, a 30-year-old David is anointed king over all of Israel and Judah. Following his rise to power, David ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

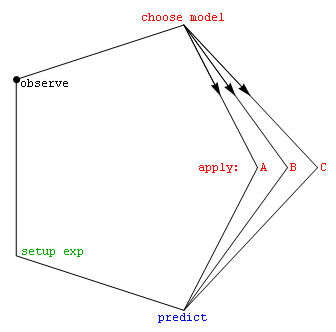

Model Selection

Model selection is the task of selecting a statistical model from a set of candidate models, given data. In the simplest cases, a pre-existing set of data is considered. However, the task can also involve the design of experiments such that the data collected is well-suited to the problem of model selection. Given candidate models of similar predictive or explanatory power, the simplest model is most likely to be the best choice (Occam's razor). state, "The majority of the problems in statistical inference can be considered to be problems related to statistical modeling". Relatedly, has said, "How hetranslation from subject-matter problem to statistical model is done is often the most critical part of an analysis". Model selection may also refer to the problem of selecting a few representative models from a large set of computational models for the purpose of decision making or optimization under uncertainty. Introduction In its most basic forms, model selection is one ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Dependent And Independent Variables

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question. In this sense, some common independent variables are time, space, density, mass, fluid flow rate, and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable). Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that can be attributed a value without attributing a value to any other variable is called an in ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Information Theory

Information theory is the scientific study of the quantification (science), quantification, computer data storage, storage, and telecommunication, communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering (field), information engineering, and electrical engineering. A key measure in information theory is information entropy, entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a dice, die (with six equally likely outcomes). Some other important measures in information theory are mutual informat ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Regression Variable Selection

Regression or regressions may refer to: Science * Marine regression, coastal advance due to falling sea level, the opposite of marine transgression * Regression (medicine), a characteristic of diseases to express lighter symptoms or less extent (mainly for tumors), without disappearing totally * Regression (psychology), a defensive reaction to some unaccepted impulses * Nodal regression, the movement of the nodes of an object in orbit, in the opposite direction to the motion of the object Statistics * Regression analysis, a statistical technique for estimating the relationships among variables. There are several types of regression: ** Linear regression ** Simple linear regression ** Logistic regression ** Nonlinear regression ** Nonparametric regression ** Robust regression ** Stepwise regression * Regression toward the mean, a common statistical phenomenon Computing * Software regression, the appearance of a bug which was absent in a previous revision ** Regression testing ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |