
Extreme Learning Machines

Extreme learning machines are feedforward neural networks for classification, regression, clustering, sparse approximation, compression and feature learning with a single layer or multiple layers of hidden nodes, where the parameters of the hidden nodes (not just the weights connecting inputs to hidden nodes) need not be tuned. These hidden nodes can be randomly assigned and never updated (i.e. they are random projections followed by nonlinear transforms), or can be inherited from their ancestors without being changed. In most cases, the output weights of the hidden nodes are learned in a single step, which essentially amounts to learning a linear model. The name "extreme learning machine" (ELM) was given to such models by their main inventor, Guang-Bin Huang. According to their creators, these models are able to produce good generalization performance and learn thousands of times faster than networks trained using backpropagation. The literature also shows that these models can ou ...
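
The single-step training described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Huang's reference implementation: the input is a scalar, the hidden activation is `tanh`, and the output weights come from ridge-regularized normal equations solved by a small hand-written Gaussian elimination. The names `train_elm`, `solve_linear_system`, `n_hidden`, and `ridge` are all hypothetical.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    # Work on an augmented copy so the caller's data is left untouched.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def train_elm(xs, ys, n_hidden=15, ridge=1e-4, seed=0):
    """Fit a one-hidden-layer ELM on scalar inputs; return a predictor."""
    rng = random.Random(seed)
    # Hidden-node parameters are drawn once at random and never updated.
    hidden = [(rng.uniform(-3, 3), rng.uniform(-1, 1)) for _ in range(n_hidden)]

    def features(x):
        return [math.tanh(w * x + b) for w, b in hidden]

    h = [features(x) for x in xs]
    # Only the output weights are learned, as a linear model on the
    # hidden features: (H^T H + ridge * I) beta = H^T y.
    gram = [
        [sum(row[i] * row[j] for row in h) + (ridge if i == j else 0.0)
         for j in range(n_hidden)]
        for i in range(n_hidden)
    ]
    rhs = [sum(row[i] * y for row, y in zip(h, ys)) for i in range(n_hidden)]
    beta = solve_linear_system(gram, rhs)

    def predict(x):
        return sum(bk * fk for bk, fk in zip(beta, features(x)))

    return predict


if __name__ == "__main__":
    xs = [i / 25 - 1 for i in range(51)]
    model = train_elm(xs, [x * x for x in xs])
    print(model(0.5))
```

The small ridge term is an assumption added here to keep the linear solve well conditioned; a pseudoinverse-based solve would serve the same role.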

Feedforward Neural Network

A feedforward neural network (FNN) is an artificial neural network wherein connections between the nodes do *not* form a cycle. As such, it is different from its descendant, the recurrent neural network. The feedforward neural network was the first and simplest type of artificial neural network devised. In this network, the information moves in only one direction (forward) from the input nodes, through the hidden nodes (if any) and to the output nodes. There are no cycles or loops in the network.

Single-layer perceptron: The simplest kind of neural network is a *single-layer perceptron* network, which consists of a single layer of output nodes; the inputs are fed directly to the outputs via a series of weights. The sum of the products of the weights and the inputs is calculated in each node, and if the value is above some threshold (typically 0) the neuron fires and takes the activated value (typically 1); otherwise it takes the deactivated value (typically -1). Ne ...
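
The firing rule for a single output node can be written out directly. The sketch below is illustrative only; the function name `perceptron_output` and the example weights are assumptions, while the threshold and the activated and deactivated values follow the typical choices named in the text.

```python
def perceptron_output(weights, inputs, threshold=0.0):
    """Return 1 if the weighted input sum exceeds the threshold, else -1."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else -1


if __name__ == "__main__":
    # With weights (1, 1) and threshold 1.5 the node acts like a logical AND
    # on 0/1 inputs (an illustrative choice, not taken from the source).
    for pair in [(0, 0), (0, 1), (1, 0), (1, 1)]:
        print(pair, perceptron_output([1.0, 1.0], pair, threshold=1.5))
```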

Regularization (mathematics)

In mathematics, statistics, finance, and computer science, particularly in machine learning and inverse problems, regularization is a process that changes the resulting answer to be "simpler". It is often used to obtain results for ill-posed problems or to prevent overfitting. Although regularization procedures can be divided in many ways, the following delineation is particularly helpful:

* Explicit regularization occurs whenever one explicitly adds a term to the optimization problem. These terms could be priors, penalties, or constraints. Explicit regularization is commonly employed with ill-posed optimization problems. The regularization term, or penalty, imposes a cost on the optimization function to make the optimal solution unique.
* Implicit regularization is all other forms of regularization. This includes, for example, early stopping, using a robust loss function, and discarding outliers. Implicit regularization is essentially ubiquitous in modern machine learning appr ...
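
As a small illustration of an explicit penalty, consider fitting a single slope `w` in `y ≈ w * x` while adding `lam * w**2` to the squared error. This is the standard ridge penalty, chosen here as an assumed example; the names `ridge_slope` and `lam` are hypothetical. Minimizing the penalized objective gives the closed form used below, and a larger `lam` pulls the slope toward zero.

```python
def ridge_slope(xs, ys, lam=0.0):
    """Minimize sum((y - w*x)**2) + lam * w**2 over the scalar slope w."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    # Setting the derivative to zero gives w = sxy / (sxx + lam).
    return sxy / (sxx + lam)


if __name__ == "__main__":
    xs, ys = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]
    for lam in (0.0, 1.0, 14.0):
        print(lam, ridge_slope(xs, ys, lam))
```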

Random Projection

In mathematics and statistics, random projection is a technique used to reduce the dimensionality of a set of points which lie in Euclidean space. Random projection methods are known for their power, simplicity, and low error rates when compared to other methods. According to experimental results, random projection preserves distances well, but empirical results are sparse. They have been applied to many natural language tasks under the name random indexing.

Dimensionality reduction: Dimensionality reduction, as the name suggests, is the reduction of the number of random variables using various mathematical methods from statistics and machine learning. Dimensionality reduction is often used to reduce the problem of managing and manipulating large data sets. Dimensionality reduction techniques generally use linear transformations in determining the intrinsic dimensionality of the manifold as well as extracting its principal directions. For this purpose there are various related techniques, ...
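
A minimal sketch of the idea, assuming a dense Gaussian projection matrix scaled by `1 / sqrt(d_out)` (one common construction, not necessarily the one any particular source uses). The helper names `random_projection_matrix` and `project` are hypothetical.

```python
import math
import random


def random_projection_matrix(d_in, d_out, seed=0):
    """Draw a d_out x d_in matrix of scaled standard-normal entries."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(d_out)
    return [[rng.gauss(0.0, 1.0) * scale for _ in range(d_in)]
            for _ in range(d_out)]


def project(matrix, point):
    """Map a d_in-dimensional point to d_out dimensions (a linear map)."""
    return [sum(r * p for r, p in zip(row, point)) for row in matrix]


def distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


if __name__ == "__main__":
    rng = random.Random(1)
    a = [rng.random() for _ in range(1000)]
    b = [rng.random() for _ in range(1000)]
    r = random_projection_matrix(1000, 200)
    # The two distances are expected to be close, though not identical.
    print(distance(a, b), distance(project(r, a), project(r, b)))
```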

Reservoir Computing

Reservoir computing is a framework for computation derived from recurrent neural network theory that maps input signals into higher dimensional computational spaces through the dynamics of a fixed, non-linear system called a reservoir. After the input signal is fed into the reservoir, which is treated as a "black box," a simple readout mechanism is trained to read the state of the reservoir and map it to the desired output. The first key benefit of this framework is that training is performed only at the readout stage, as the reservoir dynamics are fixed. The second is that the computational power of naturally available systems, both classical and quantum mechanical, can be used to reduce the effective computational cost.

History: The concept of reservoir computing stems from the use of recursive connections within neural networks to create a complex dynamical system. Schrauwen, Benjamin, David Verstraeten, and Jan Van Campenhout. "An overview of reservoir compu ...

RBF Network

In the field of mathematical modeling, a radial basis function network is an artificial neural network that uses radial basis functions as activation functions. The output of the network is a linear combination of radial basis functions of the inputs and neuron parameters. Radial basis function networks have many uses, including function approximation, time series prediction, classification, and system control. They were first formulated in a 1988 paper by Broomhead and Lowe, both researchers at the Royal Signals and Radar Establishment.

Network architecture: Radial basis function (RBF) networks typically have three layers: an input layer, a hidden layer with a non-linear RBF activation function and a linear output layer. The input can be modeled as a vector of real numbers $\mathbf{x} \in \mathbb{R}^n$. The output of the network is then a scalar function of the input vector, $\varphi : \mathbb{R}^n \to \mathbb{R}$, and is given by $\varphi(\mathbf{x}) = \sum_{i=1}^N a_i \rho(\|\mathbf{x}-\mathbf{c}_i\|$ ...
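
The weighted sum over hidden units can be sketched as follows. The source snippet breaks off before it defines the radial function, so the Gaussian form `exp(-beta * r**2)` used here is an assumption (a common choice), as are the names `rbf_network`, `centers`, `weights`, and `beta`.

```python
import math


def rbf_network(x, centers, weights, beta=1.0):
    """Scalar output: a weighted sum of Gaussian bumps around each center."""
    total = 0.0
    for a_i, c_i in zip(weights, centers):
        # Squared Euclidean distance between the input and this center.
        r2 = sum((xj - cj) ** 2 for xj, cj in zip(x, c_i))
        total += a_i * math.exp(-beta * r2)
    return total


if __name__ == "__main__":
    centers = [[0.0, 0.0], [1.0, 1.0]]
    weights = [2.0, -1.0]
    print(rbf_network([0.5, 0.5], centers, weights))
```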

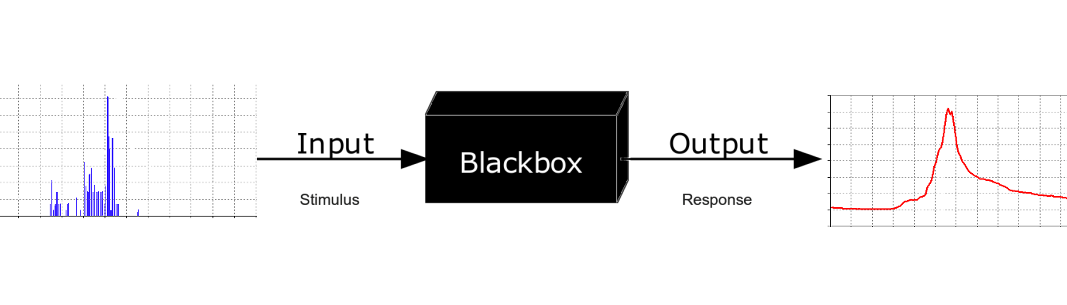

Black-box

In science, computing, and engineering, a black box is a system which can be viewed in terms of its inputs and outputs (or transfer characteristics), without any knowledge of its internal workings. Its implementation is "opaque" (black). The term can be used to refer to many inner workings, such as those of a transistor, an engine, an algorithm, the human brain, or an institution or government. To analyse an open system with a typical "black box approach", only the behavior of the stimulus/response will be accounted for, to infer the (unknown) *box*. The usual representation of this *black box system* is a data flow diagram centered in the box. The opposite of a black box is a system where the inner components or logic are available for inspection, which is most commonly referred to as a white box (sometimes also known as a "clear box" or a "glass box").

History: The modern meaning of the term "black box" seems to have entered the English language around 1945. In electr ...

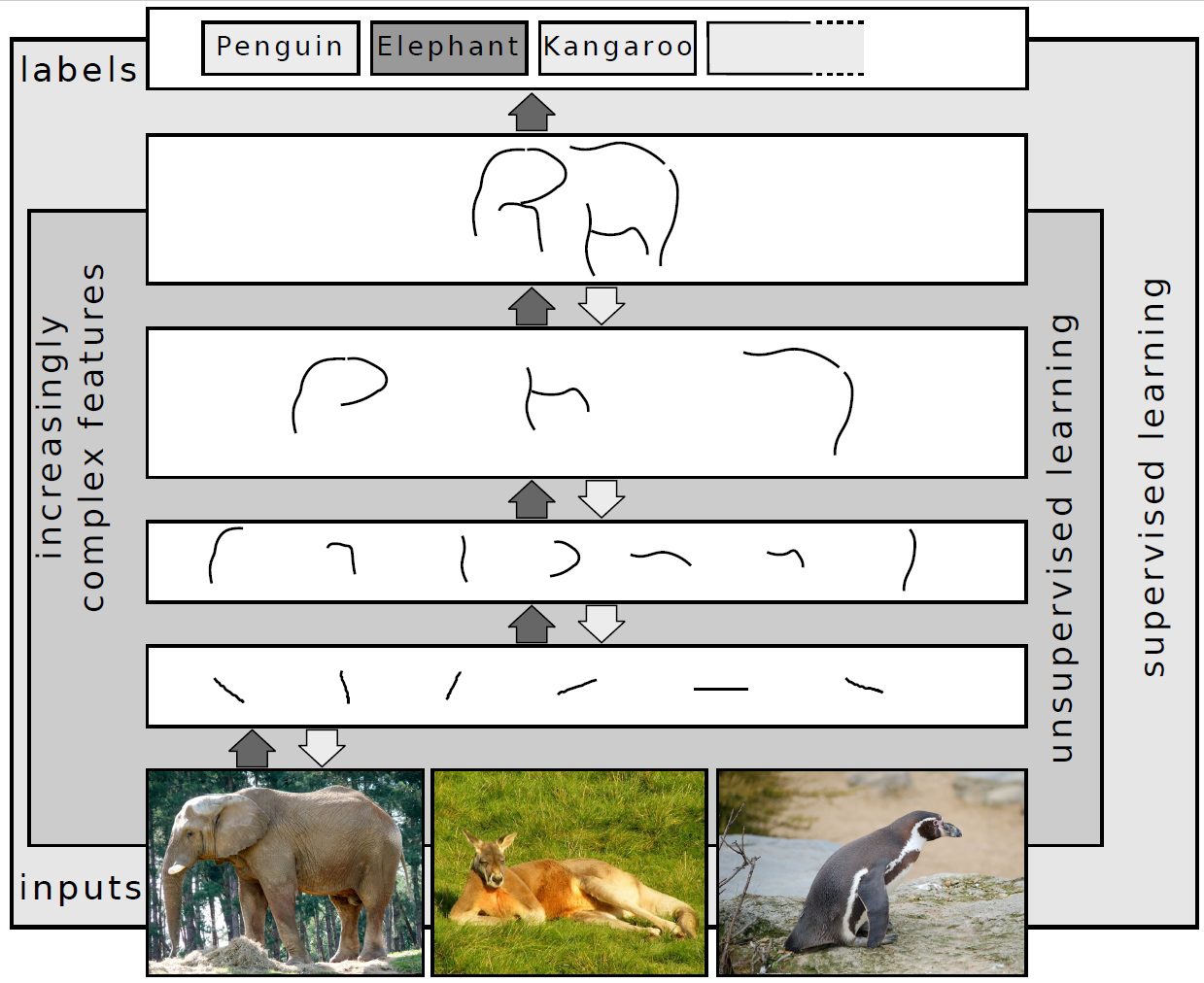

Deep Learning

Deep learning (also known as deep structured learning) is part of a broader family of machine learning methods based on artificial neural networks with representation learning. Learning can be supervised, semi-supervised or unsupervised. Deep-learning architectures such as deep neural networks, deep belief networks, deep reinforcement learning, recurrent neural networks, convolutional neural networks and transformers have been applied to fields including computer vision, speech recognition, natural language processing, machine translation, bioinformatics, drug design, medical image analysis, climate science, material inspection and board game programs, where they have produced results comparable to and in some cases surpassing human expert performance. Artificial neural networks (ANNs) were inspired by information processing and distributed communication nodes in biological systems. ANNs have various differences from biological brains. Specifically, artificial ...

Design Matrix

In statistics and in particular in regression analysis, a design matrix, also known as model matrix or regressor matrix and often denoted by X, is a matrix of values of explanatory variables of a set of objects. Each row represents an individual object, with the successive columns corresponding to the variables and their specific values for that object. The design matrix is used in certain statistical models, e.g., the general linear model. It can contain indicator variables (ones and zeros) that indicate group membership in an ANOVA, or it can contain values of continuous variables. The design matrix contains data on the independent variables (also called explanatory variables) in statistical models which attempt to explain observed data on a response variable (often called a dependent variable) in terms of the explanatory variables. The theory relating to such models makes substantial use of matrix manipulations involving the design matrix: see for example linear regression. A n ...
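
To make the indicator-variable case concrete, the sketch below builds a design matrix in which each row is one object and each column flags membership in one group. It is an illustrative construction only; the function name `indicator_design_matrix` and the alphabetical ordering of the group columns are assumptions.

```python
def indicator_design_matrix(groups):
    """Return (levels, rows): one 0/1 column per distinct group label."""
    levels = sorted(set(groups))
    rows = [[1 if g == level else 0 for level in levels] for g in groups]
    return levels, rows


if __name__ == "__main__":
    levels, x = indicator_design_matrix(["a", "b", "a", "c"])
    print(levels)
    for row in x:
        print(row)
```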

Least-squares Fit

The method of least squares is a standard approach in regression analysis to approximate the solution of overdetermined systems (sets of equations in which there are more equations than unknowns) by minimizing the sum of the squares of the residuals (a residual being the difference between an observed value and the fitted value provided by a model) made in the results of each individual equation. The most important application is in data fitting. When the problem has substantial uncertainties in the independent variable (the *x* variable), then simple regression and least-squares methods have problems; in such cases, the methodology required for fitting errors-in-variables models may be considered instead of that for least squares. Least squares problems fall into two categories: linear or ordinary least squares and nonlinear least squares, depending on whether or not the residuals are linear in all unknowns. The linear least-squares problem occurs in statistical regression ...
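
For the simplest linear case, a straight line `y = intercept + slope * x`, the minimizer of the sum of squared residuals has a well-known closed form. The sketch below applies it; the function names `fit_line` and `sum_squared_residuals` are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def sum_squared_residuals(xs, ys, intercept, slope):
    """The quantity that least squares minimizes."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [1.1, 2.9, 5.2, 6.8]  # four equations, two unknowns
    a, b = fit_line(xs, ys)
    print(a, b, sum_squared_residuals(xs, ys, a, b))
```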

Gaussian Noise

Gaussian noise, named after Carl Friedrich Gauss, is a term from signal processing theory denoting a kind of signal noise that has a probability density function (pdf) equal to that of the normal distribution (which is also known as the Gaussian distribution). In other words, the values that the noise can take are Gaussian-distributed. The probability density function $p$ of a Gaussian random variable $z$ is given by:

$$p_G(z) = \frac{1}{\sigma\sqrt{2\pi}} e^{-\frac{(z-\mu)^2}{2\sigma^2}}$$

where $z$ represents the grey level, $\mu$ the mean grey value and $\sigma$ its standard deviation. A special case is *white Gaussian noise*, in which the values at any pair of times are identically distributed and statistically independent (and hence uncorrelated). In communication channel testing and modelling, Gaussian noise is used as additive white noise to generate additive white Gaussian noise. In telecommunications and computer networking, communication channels can be affected by wideband Gaussian noise coming from many natural sources, su ...
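
A short sketch of both ideas: evaluating the normal density with mean `mu` and standard deviation `sigma`, and adding independent zero-mean Gaussian samples to a signal (the additive white Gaussian noise case). The function names `gaussian_pdf` and `add_white_gaussian_noise` and the example signal are assumptions for illustration.

```python
import math
import random


def gaussian_pdf(z, mu=0.0, sigma=1.0):
    """Density of the normal distribution N(mu, sigma**2) at z."""
    coeff = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    return coeff * math.exp(-((z - mu) ** 2) / (2.0 * sigma ** 2))


def add_white_gaussian_noise(signal, sigma, seed=0):
    """Add an independent N(0, sigma**2) sample to every signal value."""
    rng = random.Random(seed)
    return [s + rng.gauss(0.0, sigma) for s in signal]


if __name__ == "__main__":
    print(gaussian_pdf(0.0))
    clean = [math.sin(0.1 * t) for t in range(10)]
    print(add_white_gaussian_noise(clean, sigma=0.05))
```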