Computerized Classification Test

A computerized classification test (CCT) is, as its name suggests, a test administered by computer for the purpose of classifying examinees. The most common CCT is a mastery test that classifies examinees as "Pass" or "Fail," but the term also includes tests that classify examinees into more than two categories. While the term may generally be considered to refer to all computer-administered tests for classification, it is usually used to refer to tests that are interactively administered or of variable length, similar to computerized adaptive testing (CAT). Like CAT, variable-length CCTs can accomplish the goal of the test (accurate classification) with a fraction of the number of items used in a conventional fixed-form test. A CCT requires several components:
# An item bank calibrated with a psychometric model selected by the test designer
# A starting point
# An item selection algorithm
# A termination criterion and scoring procedure
The startin ...

Test (Student Assessment)

An examination (exam or evaluation) or test is an educational assessment intended to measure a test-taker's knowledge, skill, aptitude, physical fitness, or classification in many other topics (e.g., beliefs). A test may be administered verbally, on paper, on a computer, or in a predetermined area that requires a test taker to demonstrate or perform a set of skills. Tests vary in style, rigor and requirements. There is no general consensus or invariable standard for test formats and difficulty. Often, the format and difficulty of a test depend on the educational philosophy of the instructor, subject matter, class size, policy of the educational institution, and requirements of accreditation or governing bodies. A test may be administered formally or informally. An example of an informal test is a reading test administered by a parent to a child. A formal test might be a final examination administered by a teacher in a classroom or an IQ test administered by a psych ...

Probability Distribution

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of different possible outcomes for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space). For instance, if X is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of X would take the value 0.5 (1 in 2 or 1/2) for X = heads, and 0.5 for X = tails (assuming that the coin is fair). Examples of random phenomena include the weather conditions at some future date, the height of a randomly selected person, the fraction of male students in a school, the results of a survey to be conducted, etc.

Introduction

A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often denoted by \Omega, is the set of all possible outcomes of a random phe ...
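
The fair-coin example above can be written down directly as a mapping from outcomes to probabilities. The following is a minimal sketch, not a general-purpose implementation: the outcome labels `"heads"` and `"tails"` and the helper names `is_valid_distribution` and `event_probability` are chosen here for illustration, and exact fractions are used so that the probabilities sum to exactly 1.

```python
from fractions import Fraction

# Distribution of a fair coin toss: each outcome in the sample space
# is mapped to its probability (labels are illustrative).
fair_coin = {"heads": Fraction(1, 2), "tails": Fraction(1, 2)}


def is_valid_distribution(dist):
    """Check that all probabilities are non-negative and sum to 1."""
    return all(p >= 0 for p in dist.values()) and sum(dist.values()) == 1


def event_probability(dist, event):
    """Probability of an event, i.e. a subset of the sample space."""
    return sum(p for outcome, p in dist.items() if outcome in event)
```

Calling `event_probability(fair_coin, {"heads"})` gives the probability of the event "the coin lands heads", and passing the whole sample space gives the total probability.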

Psychometrics

Psychometrics is a field of study within psychology concerned with the theory and technique of measurement. Psychometrics generally refers to specialized fields within psychology and education devoted to testing, measurement, assessment, and related activities. Psychometrics is concerned with the objective measurement of latent constructs that cannot be directly observed. Examples of latent constructs include intelligence, introversion, mental disorders, and educational achievement. The levels of individuals on nonobservable latent variables are inferred through mathematical modeling based on what is observed from individuals' responses to items on tests and scales. Practitioners are described as psychometricians, although not all who engage in psychometric research go by this title. Psychometricians usually possess specific qualifications such as degrees or certifications, and most are psychologists with advanced graduate training in psychometrics and measurement theory. I ...

Journal Of The Royal Statistical Society

The ''Journal of the Royal Statistical Society'' is a peer-reviewed scientific journal of statistics. It comprises three series and is published by Wiley for the Royal Statistical Society.

History

The Statistical Society of London was founded in 1834, but would not begin producing a journal for four years. From 1834 to 1837, members of the society would read the results of their studies to the other members, and some details were recorded in the proceedings. The first study reported to the society in 1834 was a simple survey of the occupations of people in Manchester, England. Conducted by going door-to-door and inquiring, the study revealed that the most common profession was mill-hands, followed closely by weavers. When founded, the membership of the Statistical Society of London overlapped almost completely with the statistical section of the British Association for the Advancement of Science. In 1837 a volume of ''Transactions of the Statistical Society of London'' were wri ...

Hypothesis Test

A statistical hypothesis test is a method of statistical inference used to decide whether the data at hand sufficiently support a particular hypothesis. Hypothesis testing allows us to make probabilistic statements about population parameters.

History

Early use

While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth.

Modern origins and early controversy

Modern significance testing is largely the product of Karl Pearson (''p''-value, Pearson's chi-squared test), William Sealy Gosset (Student's t-distribution), and Ronald Fisher ("null hypothesis", analysis of variance, "significance test"), while hypothesis testing was developed by Jerzy Neyman and Egon Pearson (son of Karl). Ronald Fisher began his life in statistics as a Bayesian (Zabell 1992), but Fisher ...

Bayesian Decision Theory

In estimation theory and decision theory, a Bayes estimator or a Bayes action is an estimator or decision rule that minimizes the posterior expected value of a loss function (i.e., the posterior expected loss). Equivalently, it maximizes the posterior expectation of a utility function. An alternative way of formulating an estimator within Bayesian statistics is maximum a posteriori estimation.

Definition

Suppose an unknown parameter \theta is known to have a prior distribution \pi. Let \widehat{\theta} = \widehat{\theta}(x) be an estimator of \theta (based on some measurements ''x''), and let L(\theta,\widehat{\theta}) be a loss function, such as squared error. The Bayes risk of \widehat{\theta} is defined as E_\pi(L(\theta, \widehat{\theta})), where the expectation is taken over the probability distribution of \theta: this defines the risk function as a function of \widehat{\theta}. An estimator \widehat{\theta} is said to be a ''Bayes estimator'' if it minimizes the Bayes risk among all estimators. Equivalently, the estimator whic ...
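
To make "minimizes the posterior expected loss" concrete, here is a small sketch under squared-error loss, where the minimizer is the posterior mean (a standard result). Everything specific is an assumption made for illustration: the parameter is restricted to a discrete grid of values, the data are counts of successes and failures with a Bernoulli likelihood, and the function names are hypothetical.

```python
def posterior_on_grid(thetas, prior, successes, failures):
    """Posterior over a discrete grid of theta values (Bernoulli likelihood)."""
    unnorm = [
        p * t**successes * (1 - t) ** failures
        for t, p in zip(thetas, prior)
    ]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def posterior_expected_loss(estimate, thetas, posterior):
    """Posterior expected squared-error loss of a candidate estimate."""
    return sum(p * (t - estimate) ** 2 for t, p in zip(thetas, posterior))


def bayes_estimate_squared_error(thetas, posterior):
    """Under squared-error loss the Bayes estimate is the posterior mean."""
    return sum(t * p for t, p in zip(thetas, posterior))


# Illustrative inputs: three candidate values, uniform prior, 3 successes, 1 failure.
thetas = [0.2, 0.5, 0.8]
prior = [1 / 3, 1 / 3, 1 / 3]
posterior = posterior_on_grid(thetas, prior, successes=3, failures=1)
estimate = bayes_estimate_squared_error(thetas, posterior)
```

Evaluating `posterior_expected_loss` at `estimate` and at nearby values shows that moving away from the posterior mean in either direction increases the expected loss.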

Information

Information is an abstract concept that refers to that which has the power to inform. At the most fundamental level information pertains to the interpretation of that which may be sensed. Any natural process that is not completely random, and any observable pattern in any medium can be said to convey some amount of information. Whereas digital signals and other data use discrete signs to convey information, other phenomena and artifacts such as analog signals, poems, pictures, music or other sounds, and currents convey information in a more continuous form. Information is not knowledge itself, but the meaning that may be derived from a representation through interpretation. Information is often processed iteratively: Data available at one step are processed into information to be interpreted and processed at the next step. For example, in written text each symbol or letter conveys information relevant to the word it is part of, each word conveys information rele ...

Testlet

Multistage testing is an algorithm-based approach to administering tests. It is very similar to computer-adaptive testing in that items are interactively selected for each examinee by the algorithm, but rather than selecting individual items, groups of items are selected, building the test in stages. These groups are called ''testlets'' or ''panels''. While multistage tests could theoretically be administered by a human, the extensive computations required (often using item response theory) mean that multistage tests are administered by computer. The number of stages or testlets can vary. If the testlets are relatively small, such as five items, ten or more could easily be used in a test. Some multistage tests are designed with the ...

Confidence Interval

In frequentist statistics, a confidence interval (CI) is a range of estimates for an unknown parameter. A confidence interval is computed at a designated ''confidence level''; the 95% confidence level is most common, but other levels, such as 90% or 99%, are sometimes used. The confidence level represents the long-run proportion of corresponding CIs that contain the true value of the parameter. For example, out of all intervals computed at the 95% level, 95% of them should contain the parameter's true value. Factors affecting the width of the CI include the sample size, the variability in the sample, and the confidence level. All else being the same, a larger sample produces a narrower confidence interval, greater variability in the sample produces a wider confidence interval, and a higher confidence level produces a wider confidence interval.

Definition

Let X be a random sample from a probability distribution with statistical parameter \theta, which is a quantity to be estimate ...
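
The effect of sample size, variability, and confidence level on interval width can be seen in a short sketch. It assumes one particular construction that the excerpt does not specify: a normal-approximation interval for a population mean, using a z quantile rather than Student's t, so it is only reasonable for moderately large samples. The function name `mean_confidence_interval` and the sample data are illustrative.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(sample, level=0.95):
    """Normal-approximation confidence interval for the population mean.

    Assumption: a z quantile is used in place of Student's t.
    """
    n = len(sample)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # two-sided critical value
    half_width = z * stdev(sample) / math.sqrt(n)
    centre = mean(sample)
    return centre - half_width, centre + half_width


# Illustrative data only.
sample = [4.8, 5.1, 5.0, 4.9, 5.2]
low_95, high_95 = mean_confidence_interval(sample, level=0.95)
low_99, high_99 = mean_confidence_interval(sample, level=0.99)
```

Here the half-width is proportional to the sample standard deviation and to the critical value, and inversely proportional to the square root of the sample size, which matches the three factors listed above.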

Computer

A computer is a machine that can be programmed to carry out sequences of arithmetic or logical operations (computation) automatically. Modern digital electronic computers can perform generic sets of operations known as programs. These programs enable computers to perform a wide range of tasks. A computer system is a nominally complete computer that includes the hardware, operating system (main software), and peripheral equipment needed and used for full operation. This term may also refer to a group of computers that are linked and function together, such as a computer network or computer cluster. A broad range of industrial and consumer products use computers as control systems. Simple special-purpose devices like microwave ovens and remote controls are included, as are factory devices like industrial robots and computer-aided design, as well as general-purpose devi ...

Sequential Probability Ratio Test

The sequential probability ratio test (SPRT) is a specific sequential hypothesis test, developed by Abraham Wald and later proven to be optimal by Wald and Jacob Wolfowitz. Neyman and Pearson's 1933 result inspired Wald to reformulate it as a sequential analysis problem. The Neyman-Pearson lemma, by contrast, offers a rule of thumb for when all the data is collected (and its likelihood ratio known). While originally developed for use in quality control studies in the realm of manufacturing, SPRT has been formulated for use in the computerized testing of human examinees as a termination criterion.

Theory

As in classical hypothesis testing, SPRT starts with a pair of hypotheses, say H_0 and H_1 for the null hypothesis and alternative hypothesis respectively. They must be specified as follows:
:H_0: p=p_0
:H_1: p=p_1
The next step is to calculate the cumulative sum of the log-likelihood ratio, \log \Lambda_i, as new data arrive: with S_0 = 0, then, for i=1,2,...,
:S_i=S_{i-1}+ \log \ ...
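
The cumulative-sum step can be sketched in code for one simple case. This is an illustration under stated assumptions rather than the article's own procedure: the observations are taken to be independent 0/1 outcomes with success probability p, so each log-likelihood ratio is log(p_1/p_0) or log((1-p_1)/(1-p_0)); the stopping thresholds use Wald's standard approximations log(beta/(1-alpha)) and log((1-beta)/alpha), which do not appear in the truncated excerpt; and the names `sprt_bernoulli`, `alpha`, and `beta` are chosen here.

```python
import math


def sprt_bernoulli(observations, p0, p1, alpha=0.05, beta=0.05):
    """Run an SPRT of H0: p = p0 against H1: p = p1 on 0/1 observations.

    Returns (decision, number_of_observations_used).
    """
    # Wald's approximate stopping thresholds (assumed, see lead-in).
    lower = math.log(beta / (1 - alpha))
    upper = math.log((1 - beta) / alpha)

    s = 0.0  # S_0 = 0
    n = 0
    for n, x in enumerate(observations, start=1):
        # S_i = S_{i-1} + log-likelihood ratio of the i-th observation
        if x:
            s += math.log(p1 / p0)
        else:
            s += math.log((1 - p1) / (1 - p0))
        if s >= upper:
            return "accept H1", n
        if s <= lower:
            return "accept H0", n
    # Data ran out before either threshold was crossed.
    return "continue", n
```

In a computerized classification test, a rule of this kind can serve as the termination criterion: item responses are fed in one at a time and testing stops as soon as either threshold is crossed.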

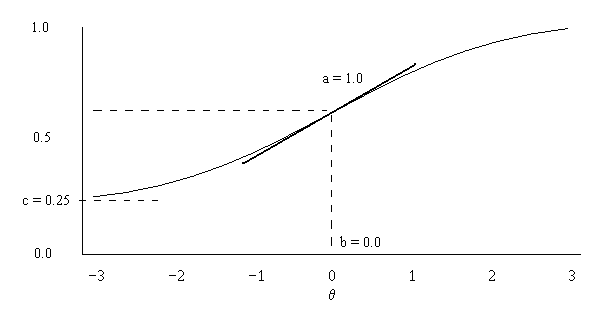

Item Response Theory

In psychometrics, item response theory (IRT) (also known as latent trait theory, strong true score theory, or modern mental test theory) is a paradigm for the design, analysis, and scoring of tests, questionnaires, and similar instruments measuring abilities, attitudes, or other variables. It is a theory of testing based on the relationship between individuals' performances on a test item and the test takers' levels of performance on an overall measure of the ability that item was designed to measure. Several different statistical models are used to represent both item and test taker characteristics. Unlike simpler alternatives for creating scales and evaluating questionnaire responses, it does not assume that each item is equally difficult. This distinguishes IRT from, for instance, Likert scaling, in which "All items are assumed to be replications of each other or in other words items are considered to be parallel instruments". A. van Alphen, R. Halfens, A. Hasman and T. Imbos. ...
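
As one concrete instance of a model that represents both item and test-taker characteristics, the sketch below uses the two-parameter logistic (2PL) model. The excerpt does not name a specific model, so this choice is an assumption, as are the symbols: `theta` for the test taker's ability, `a` for item discrimination, and `b` for item difficulty.

```python
import math


def prob_correct(theta, a, b):
    """2PL model: probability that a test taker with ability theta
    answers an item with discrimination a and difficulty b correctly."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


# Two illustrative items that differ only in difficulty.
easy_item = {"a": 1.0, "b": -1.0}
hard_item = {"a": 1.0, "b": 1.0}

p_easy = prob_correct(0.0, **easy_item)
p_hard = prob_correct(0.0, **hard_item)
```

For the same test taker, the harder item yields a lower probability of a correct response, which is how the model avoids assuming that every item is equally difficult.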