
Coding Theory

Coding theory is the study of the properties of codes and their respective fitness for specific applications. Codes are used for data compression, cryptography, error detection and correction, data transmission and data storage. Codes are studied by various scientific disciplines, such as information theory, electrical engineering, mathematics, linguistics, and computer science, for the purpose of designing efficient and reliable data transmission methods. This typically involves the removal of redundancy and the correction or detection of errors in the transmitted data. There are four types of coding:

1. Data compression (or ''source coding'')
2. Error control (or ''channel coding'')
3. Cryptographic coding
4. Line coding

Data compression attempts to remove unwanted redundancy from the data from a source in order to transmit it more efficiently. For example, ZIP data compression makes data files smaller, for purposes such as reducing Internet traffic. Data compression and er ...
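The redundancy removal described above can be illustrated with a short sketch. It uses Python's standard `zlib` module (DEFLATE, the same family of compression used by ZIP); the helper name `compression_ratio` and the sample input are hypothetical, chosen only for illustration.

```python
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower means more redundancy removed)."""
    if not data:
        raise ValueError("data must be non-empty")
    return len(zlib.compress(data)) / len(data)


# A highly repetitive (redundant) message compresses to a small fraction of its size.
redundant = b"abcabcabc" * 1000
ratio = compression_ratio(redundant)

# Source coding of this kind is lossless: decompression restores the original bytes.
restored = zlib.decompress(zlib.compress(redundant))
```

Input with little redundancy, such as already-compressed or random bytes, would be expected to give a ratio close to (or slightly above) 1.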

Hamming

Hamming may refer to:

* Richard Hamming (1915–1998), American mathematician
* Hamming(7,4), in coding theory, a linear error-correcting code
* Overacting, or acting in an exaggerated way

See also

* Hamming code, error correction in telecommunication
* Hamming distance, a way of defining how different two sequences are
* Hamming weight, the number of non-zero elements in a sequence
* Hamming window, a mathematical function used in signal processing
* Hammond (disambiguation)
* Ham (disambiguation)
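Two of the entries above, Hamming distance and Hamming weight, have direct computational definitions. The following is a minimal sketch; the function names are illustrative and not taken from any particular library.

```python
def hamming_weight(sequence) -> int:
    """Count the non-zero elements of a numeric sequence."""
    return sum(1 for element in sequence if element != 0)


def hamming_distance(a, b) -> int:
    """Count the positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return sum(1 for x, y in zip(a, b) if x != y)


weight = hamming_weight([1, 0, 1, 1, 0])
distance = hamming_distance("karolin", "kathrin")
```

For binary words, the distance between two words equals the weight of their bitwise XOR, which is why the two notions are usually introduced together.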

Joint Source And Channel Coding

In information theory, joint source–channel coding is the encoding of a redundant information source for transmission over a noisy channel, and the corresponding decoding, using a single code instead of the more conventional steps of source coding followed by channel coding. Joint source–channel coding has been proposed and implemented for a variety of situations, including speech and video transmission.

Binary Golay Code

In mathematics and electronics engineering, a binary Golay code is a type of linear error-correcting code used in digital communications. The binary Golay code, along with the ternary Golay code, has a particularly deep and interesting connection to the theory of finite sporadic groups in mathematics. These codes are named in honor of Marcel J. E. Golay, whose 1949 paper introducing them has been called, by E. R. Berlekamp, the "best single published page" in coding theory. There are two closely related binary Golay codes. The extended binary Golay code, ''G''24 (sometimes just called the "Golay code" in finite group theory), encodes 12 bits of data in a 24-bit word in such a way that any 3-bit error can be corrected or any 7-bit error can be detected. The other, the perfect binary Golay code, ''G''23, has codewords of length 23 and is obtained from the extended binary Golay code by deleting one coordinate position (conversely, the extended binary Golay code is obtained from t ...
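The correction and detection figures quoted above follow from a code's minimum Hamming distance. The sketch below assumes the standard minimum distances of 8 for the extended code and 7 for the perfect code; those values are not stated in the excerpt, and the function name is hypothetical.

```python
def error_capability(min_distance: int) -> dict:
    """Return guaranteed correction and detection limits for a block code.

    A code with minimum distance d can correct up to floor((d - 1) / 2)
    errors, or alternatively detect up to d - 1 errors.
    """
    if min_distance < 1:
        raise ValueError("minimum distance must be at least 1")
    return {
        "correctable": (min_distance - 1) // 2,
        "detectable": min_distance - 1,
    }


# Assumed minimum distances: 8 for the extended code, 7 for the perfect code.
extended_golay = error_capability(8)
perfect_golay = error_capability(7)
```

Under these assumptions the extended code corrects 3 errors or detects 7, matching the text, while the perfect code still corrects 3 but is only guaranteed to detect 6.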

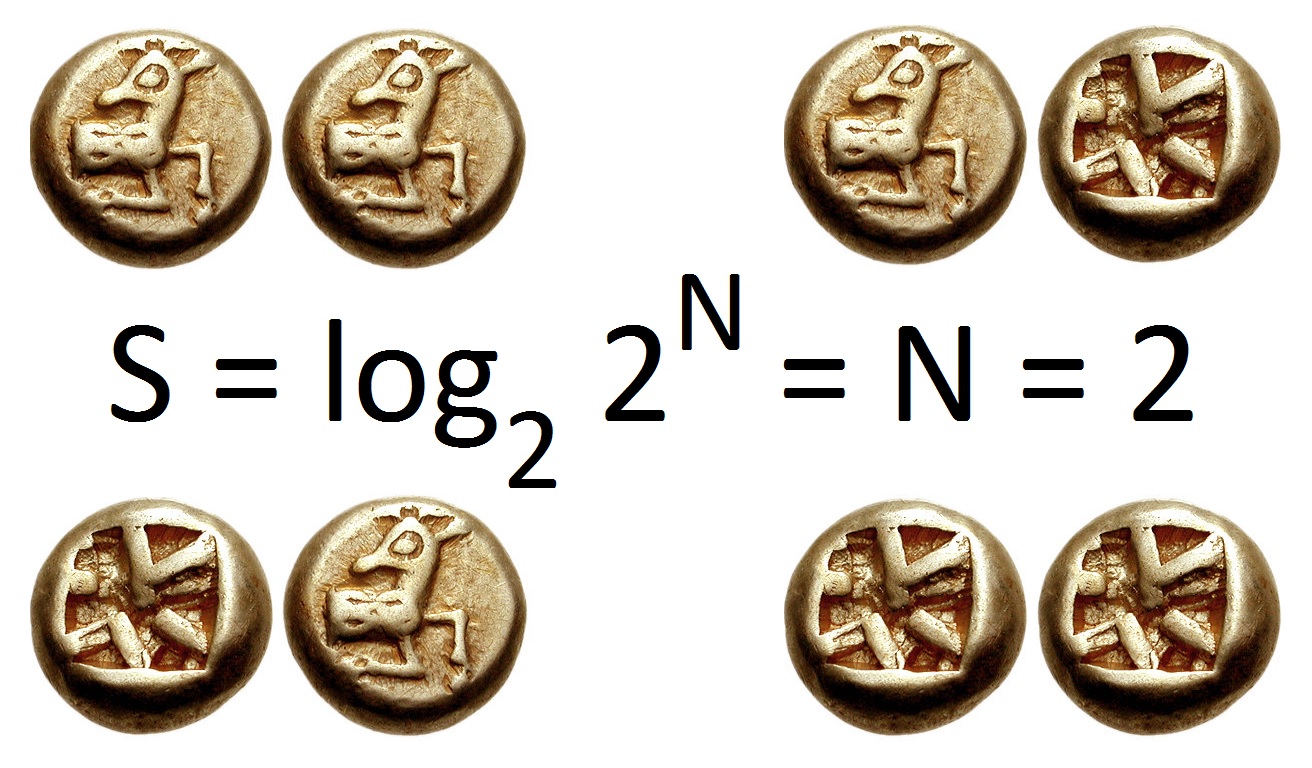

Information Entropy

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$:

$$\mathrm{H}(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)],$$

where $\Sigma$ denotes the sum over the variable's possible values. The choice of base for $\log$, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base ''e'' gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. Shannon's theory d ...
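The entropy definition above translates almost directly into code. This is a minimal sketch for a finite distribution given as a list of probabilities; the function name and the `base` parameter are illustrative, and terms with zero probability are skipped under the usual convention that 0 log 0 = 0.

```python
import math


def shannon_entropy(probabilities, base: float = 2.0) -> float:
    """Compute H(X) = -sum p(x) log p(x) for a discrete distribution."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p = 0 contribute nothing (convention: 0 * log 0 = 0).
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)


fair_coin_bits = shannon_entropy([0.5, 0.5])           # base 2: bits
uniform_eight_bits = shannon_entropy([1 / 8] * 8)      # base 2: bits
fair_coin_nats = shannon_entropy([0.5, 0.5], math.e)   # base e: nats
```

Changing `base` only rescales the result, which is why the same quantity can be reported in bits, nats, or hartleys.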

Norbert Wiener

Norbert Wiener (November 26, 1894 – March 18, 1964) was an American mathematician and philosopher. He was a professor of mathematics at the Massachusetts Institute of Technology (MIT). A child prodigy, Wiener later became an early researcher in stochastic and mathematical noise processes, contributing work relevant to electronic engineering, electronic communication, and control systems. Wiener is considered the originator of cybernetics, the science of communication as it relates to living things and machines, with implications for engineering, systems control, computer science, biology, neuroscience, philosophy, and the organization of society. Norbert Wiener is credited as being one of the first to theorize that all intelligent behavior was the result of feedback mechanisms that could possibly be simulated by machines, an important early step towards the development of modern artificial intelligence.

Biography

Youth

Wiener was born in Columbia, Missouri, the fi ...

Information

Information is an abstract concept that refers to that which has the power to inform. At the most fundamental level information pertains to the interpretation of that which may be sensed. Any natural process that is not completely random, and any observable pattern in any medium can be said to convey some amount of information. Whereas digital signals and other data use discrete signs to convey information, other phenomena and artifacts such as analog signals, poems, pictures, music or other sounds, and currents convey information in a more continuous form. Information is not knowledge itself, but the meaning that may be derived from a representation through interpretation. Information is often processed iteratively: Data available at one step are processed into information to be interpreted and processed at the next step. For example, in written text each symbol or letter conveys information relevant to the word it is part of, each word conveys information rele ...

A Mathematical Theory Of Communication

"A Mathematical Theory of Communication" is an article by mathematician Claude E. Shannon published in ''Bell System Technical Journal'' in 1948. It was renamed ''The Mathematical Theory of Communication'' in the 1949 book of the same name, a small but significant title change made after the generality of the work was realized. It became one of the most cited scientific articles and gave rise to the field of information theory.

Publication

The article was the founding work of the field of information theory. It was later published in 1949 as a book titled ''The Mathematical Theory of Communication'', which was published as a paperback in 1963. The book contains an additional article by Warren Weaver, providing an overview of the theory for a more general audience.

Contents

Shannon's article laid out the basic elements of communication:

* An information source that produces a message
* A transmitter that operates on the message to create a signal which can be sent through a channel ...

Claude Shannon

Claude Elwood Shannon (April 30, 1916 – February 24, 2001) was an American mathematician, electrical engineer, and cryptographer known as a "father of information theory". As a 21-year-old master's degree student at the Massachusetts Institute of Technology (MIT), he wrote his thesis demonstrating that electrical applications of Boolean algebra could construct any logical numerical relationship. Shannon contributed to the field of cryptanalysis for national defense of the United States during World War II, including his fundamental work on codebreaking and secure telecommunications.

Biography

Childhood

The Shannon family lived in Gaylord, Michigan, and Claude was born in a hospital in nearby Petoskey. His father, Claude Sr. (1862–1934), was a businessman and, for a while, a judge of probate in Gaylord. His mother, Mabel Wolf Shannon (1890–1945), was a language teacher, who also served as the principal of Gaylord High School. Claude Sr. was a descendant of New Jer ...

LDPC Code

In information theory, a low-density parity-check (LDPC) code is a linear error-correcting code, a method of transmitting a message over a noisy transmission channel. An LDPC code is constructed using a sparse Tanner graph (a subclass of the bipartite graph). LDPC codes are capacity-approaching codes, which means that practical constructions exist that allow the noise threshold to be set very close to the theoretical maximum (the Shannon limit) for a symmetric memoryless channel. The noise threshold defines an upper bound for the channel noise, up to which the probability of lost information can be made as small as desired. Using iterative belief propagation techniques, LDPC codes can be decoded in time linear in their block length. LDPC codes are finding increasing use in applications requiring reliable and highly efficient information transfer over bandwidth-constrained or return-channel-constrained links in the presence of corrupting noise. Implementation of LDPC codes has ...

Turbo Code

In information theory, turbo codes (originally in French ''Turbocodes'') are a class of high-performance forward error correction (FEC) codes developed around 1990–91, but first published in 1993. They were the first practical codes to closely approach the maximum channel capacity or Shannon limit, a theoretical maximum for the code rate at which reliable communication is still possible given a specific noise level. Turbo codes are used in 3G/4G mobile communications (e.g., in UMTS and LTE) and in (deep space) satellite communications as well as other applications where designers seek to achieve reliable information transfer over bandwidth- or latency-constrained communication links in the presence of data-corrupting noise. Turbo codes compete with low-density parity-check (LDPC) codes, which provide similar performance. The name "turbo code" arose from the feedback loop used during normal turbo code decoding, which was analogized to the exhaust feedback used for engine ...

NASA Deep Space Network

The NASA Deep Space Network (DSN) is a worldwide network of American spacecraft communication ground segment facilities, located in the United States (California), Spain (Madrid), and Australia (Canberra), that supports NASA's interplanetary spacecraft missions. It also performs radio and radar astronomy observations for the exploration of the Solar System and the universe, and supports selected Earth-orbiting missions. DSN is part of the NASA Jet Propulsion Laboratory (JPL).

General information

DSN currently consists of three deep-space communications facilities placed approximately 120 degrees apart around the Earth. They are:

* the Goldstone Deep Space Communications Complex outside Barstow, California. For details of Goldstone's contribution to the early days of space probe tracking, see Project Space Track;
* the Madrid Deep Space Communications Complex, west of Madrid, Spain; and
* the Canberra Deep Space Communication Complex (CDSCC) in the Australian ...

Fading

In wireless communications, fading is the variation of a signal's attenuation with variables such as time, geographical position, and radio frequency. Fading is often modeled as a random process. A fading channel is a communication channel that experiences fading. In wireless systems, fading may be due to multipath propagation (referred to as multipath-induced fading), weather (particularly rain), or shadowing from obstacles affecting the wave propagation (sometimes referred to as shadow fading).

Key concepts

The presence of reflectors in the environment surrounding a transmitter and receiver creates multiple paths that a transmitted signal can traverse. As a result, the receiver sees the superposition of multiple copies of the transmitted signal, each traversing a different path. Each signal copy will experience differences in attenuation, delay and phase shift while traveling from the source to the receiver. This can result in either const ...
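The superposition of signal copies described above can be sketched with complex phasors. This is a simplified narrowband model in which each path is reduced to a hypothetical gain and phase shift; the function name and the example values are assumptions made for illustration only.

```python
import cmath
import math


def received_amplitude(paths) -> float:
    """Return the amplitude of the sum of path contributions.

    `paths` is an iterable of (gain, phase_in_radians) pairs, one per
    propagation path; each pair is modeled as the phasor gain * e^(j * phase).
    """
    return abs(sum(gain * cmath.exp(1j * phase) for gain, phase in paths))


# Two equal-strength copies arriving in phase reinforce each other.
in_phase = received_amplitude([(1.0, 0.0), (1.0, 0.0)])

# The same two copies arriving half a cycle apart nearly cancel.
out_of_phase = received_amplitude([(1.0, 0.0), (1.0, math.pi)])
```

Small changes in path length shift the relative phases, so the received amplitude can swing between these two extremes as the receiver moves or the frequency changes.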