
Concept Search

A concept search (or conceptual search) is an automated information retrieval method that is used to search electronically stored unstructured text (for example, digital archives, email, scientific literature, etc.) for information that is conceptually similar to the information provided in a search query. In other words, the *ideas* expressed in the information retrieved in response to a concept search query are relevant to the ideas contained in the text of the query. Concept search techniques were developed because of limitations imposed by classical Boolean keyword search technologies when dealing with large, unstructured digital collections of text. Keyword searches often return results that include many non-relevant items (false positives) or that exclude too many relevant items (false negatives) because of the effects of synonymy and polysemy. Synonymy means that two or more words in the same language have the same meaning, and polysemy mean ...

Information Retrieval

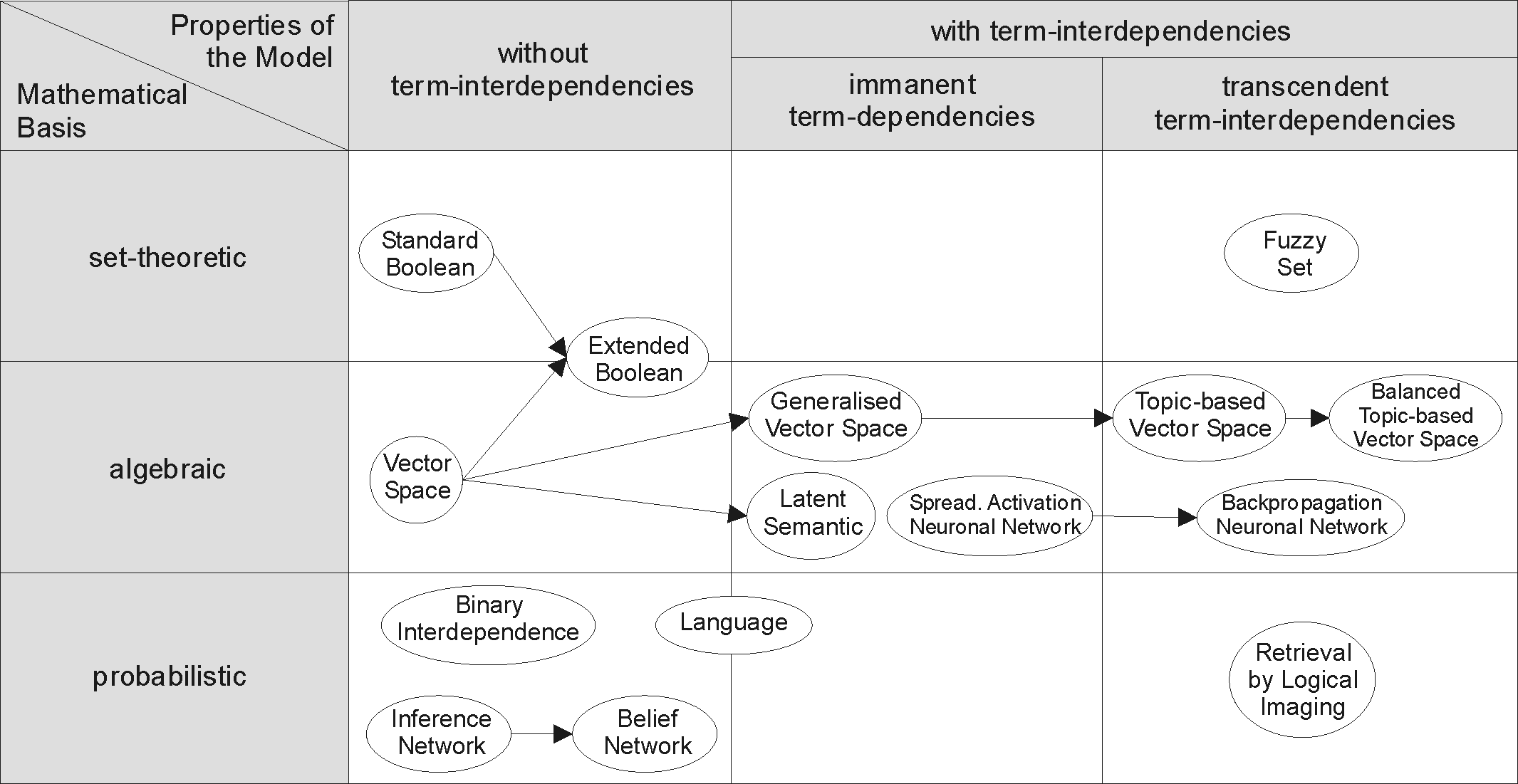

Information retrieval (IR) in computing and information science is the process of obtaining information system resources that are relevant to an information need from a collection of those resources. Searches can be based on full-text or other content-based indexing. Information retrieval is the science of searching for information in a document, searching for documents themselves, and also searching for the metadata that describes data, and for databases of texts, images or sounds. Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents, and that stores and manages those documents. Web search engines are the most visible IR applications. An information retrieval process begins when a user or searcher enters a query into the system. Queries are formal statements of information needs, for example search strings in web search engines. In ...

Word Sense Disambiguation

Word-sense disambiguation (WSD) is the process of identifying which sense of a word is meant in a sentence or other segment of context. In human language processing and cognition, it is usually subconscious/automatic but can often come to conscious attention when ambiguity impairs clarity of communication, given the pervasive polysemy in natural language. In computational linguistics, it is an open problem that affects other computer-related writing, such as discourse, improving relevance of search engines, anaphora resolution, coherence, and inference. Given that natural language requires reflection of neurological reality, as shaped by the abilities provided by the brain's neural networks, computer science has had a long-term challenge in developing the ability in computers to do natural language processing and machine learning. Many techniques have been researched, including dictionary-based methods that use the knowledge encoded in lexical resources, supervised machine l ...

Matrix Decomposition

In the mathematical discipline of linear algebra, a matrix decomposition or matrix factorization is a factorization of a matrix into a product of matrices. There are many different matrix decompositions; each finds use among a particular class of problems. In numerical analysis, for example, different decompositions are used to implement efficient matrix algorithms. For instance, when solving a system of linear equations $A\mathbf{x} = \mathbf{b}$, the matrix $A$ can be decomposed via the LU decomposition. The LU decomposition factorizes a matrix into a lower triangular matrix $L$ and an upper triangular matrix $U$. The systems $L(U\mathbf{x}) = \mathbf{b}$ and $U\mathbf{x} = L^{-1}\mathbf{b}$ require fewer additions and multiplications to solve, compared with the original system $A\mathbf{x} = \mathbf{b}$, though one might require significantly more digits in inexact arithmetic such as floating point. Similarly, the QR decomposition expresses $A$ as $QR$ with $Q$ an orthogonal matrix and $R$ an u ...
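The LU-based solve described above can be sketched in a few lines of Python. This is a minimal illustration, not a production routine: it assumes a square matrix whose pivots are all nonzero (no row exchanges are performed), and the function names `lu_decompose` and `lu_solve` are hypothetical, chosen only for this example.

```python
def lu_decompose(a):
    """Doolittle LU factorization without pivoting, so that a = L * U.

    Assumes `a` is a square list of lists with nonzero pivots.
    """
    n = len(a)
    lower = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    upper = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Row i of U.
        for j in range(i, n):
            upper[i][j] = a[i][j] - sum(lower[i][k] * upper[k][j] for k in range(i))
        # Column i of L (below the unit diagonal).
        for j in range(i + 1, n):
            partial = sum(lower[j][k] * upper[k][i] for k in range(i))
            lower[j][i] = (a[j][i] - partial) / upper[i][i]
    return lower, upper


def lu_solve(lower, upper, b):
    """Solve L(Ux) = b by forward substitution, then back substitution."""
    n = len(b)
    # Forward substitution: L y = b.
    y = [0.0] * n
    for i in range(n):
        y[i] = b[i] - sum(lower[i][k] * y[k] for k in range(i))
    # Back substitution: U x = y.
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(upper[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (y[i] - tail) / upper[i][i]
    return x


lower, upper = lu_decompose([[4.0, 3.0], [6.0, 3.0]])
solution = lu_solve(lower, upper, [10.0, 12.0])
```

Once `lower` and `upper` are computed, they can be reused for any number of right-hand sides, which is where the savings in additions and multiplications come from.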

Sliding Window Based Part-of-speech Tagging

Sliding window based part-of-speech tagging is used to part-of-speech tag a text. A high percentage of words in a natural language are words which, out of context, can be assigned more than one part of speech. The percentage of these ambiguous words is typically around 30%, although it depends greatly on the language. Solving this problem is very important in many areas of natural language processing. For example, in machine translation, changing the part of speech of a word can dramatically change its translation. Sliding window based part-of-speech taggers are programs which assign a single part of speech to a given lexical form of a word by looking at a fixed-size "window" of words around the word to be disambiguated. The two main advantages of this approach are that the tagger can be trained automatically, removing the need to tag a corpus manually, and that the tagger can be implemented as a finite-state automaton (a Mealy machine). Let :\Gamma = \ ...

Latent Semantic Analysis

Latent semantic analysis (LSA) is a technique in natural language processing, in particular distributional semantics, of analyzing relationships between a set of documents and the terms they contain by producing a set of concepts related to the documents and terms. LSA assumes that words that are close in meaning will occur in similar pieces of text (the distributional hypothesis). A matrix containing word counts per document (rows represent unique words and columns represent each document) is constructed from a large piece of text, and a mathematical technique called singular value decomposition (SVD) is used to reduce the number of rows while preserving the similarity structure among columns. Documents are then compared by cosine similarity between any two columns. Values close to 1 represent very similar documents while values close to 0 represent very dissimilar documents. An information retrieval technique using latent semantic structure was patented in 1988 (US Patent 4,839 ...)
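The first and last steps of that pipeline, building the word-by-document count matrix and comparing document columns by cosine similarity, can be sketched as follows. This is a simplified illustration that deliberately omits the SVD rank-reduction step, so it is not LSA itself; the whitespace tokenizer, the toy documents, and the function names are all assumptions made for the example.

```python
import math
from collections import Counter


def term_document_matrix(docs):
    """Return (vocab, matrix): rows are unique words, columns are documents."""
    counts = [Counter(doc.lower().split()) for doc in docs]
    vocab = sorted({word for c in counts for word in c})
    matrix = [[c[word] for c in counts] for word in vocab]
    return vocab, matrix


def column(matrix, j):
    """Extract document j as a vector of word counts."""
    return [row[j] for row in matrix]


def cosine(u, v):
    """Cosine similarity of two count vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


docs = ["cat sat mat", "cat sat hat", "stocks fell sharply"]
vocab, matrix = term_document_matrix(docs)
similar = cosine(column(matrix, 0), column(matrix, 1))    # shares two of three words
unrelated = cosine(column(matrix, 0), column(matrix, 2))  # shares no words
```

On raw counts, two documents that use different words for the same idea score 0; the SVD step in LSA is what lets such documents end up close together in the reduced space.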

WordNet

WordNet is a lexical database of semantic relations between words in more than 200 languages. WordNet links words into semantic relations including synonyms, hyponyms, and meronyms. The synonyms are grouped into *synsets* with short definitions and usage examples. WordNet can thus be seen as a combination and extension of a dictionary and thesaurus. While it is accessible to human users via a web browser, its primary use is in automatic text analysis and artificial intelligence applications. WordNet was first created in the English language, and the English WordNet database and software tools have been released under a BSD-style license and are freely available for download from the WordNet website. WordNet was first created in English only in the Cognitive Science Laboratory of Princeton University under the direction of psychology professor George Armitage Miller starting in 1985 and was later directed by Christiane Fellbaum. The project was ...

Ontology (Information Science)

In computer science and information science, an ontology encompasses a representation, formal naming, and definition of the categories, properties, and relations between the concepts, data, and entities that substantiate one, many, or all domains of discourse. More simply, an ontology is a way of showing the properties of a subject area and how they are related, by defining a set of concepts and categories that represent the subject. Every academic discipline or field creates ontologies to limit complexity and organize data into information and knowledge. Each uses ontological assumptions to frame explicit theories, research and applications. New ontologies may improve problem solving within that domain. Translating research papers within every field is a problem made easier when experts from different countries maintain a controlled vocabulary of jargon between each of their languages. For instance, the definition and ontology of economics is a primary concern in Marxist ...

Controlled Vocabularies

Control may refer to: Basic meanings Economics and business * Control (management), an element of management * Control, an element of management accounting * Comptroller (or controller), a senior financial officer in an organization * Controlling interest, a percentage of voting stock shares sufficient to prevent opposition * Foreign exchange controls, regulations on trade * Internal control, a process to help achieve specific goals typically related to managing risk Mathematics and science * Control (optimal control theory), a variable for steering a controllable system of state variables toward a desired goal * Controlling for a variable in statistics * Scientific control, an experiment in which "confounding variables" are minimised to reduce error * Control variables, variables which are kept constant during an experiment * Biological pest control, a natural method of controlling pests * Control network in geodesy and surveying, a set of reference points of known geospatial ...

Natural Language Processing

Natural language processing (NLP) is an interdisciplinary subfield of linguistics, computer science, and artificial intelligence concerned with the interactions between computers and human language, in particular how to program computers to process and analyze large amounts of natural language data. The goal is a computer capable of "understanding" the contents of documents, including the contextual nuances of the language within them. The technology can then accurately extract information and insights contained in the documents as well as categorize and organize the documents themselves. Challenges in natural language processing frequently involve speech recognition, natural-language understanding, and natural-language generation. Natural language processing has its roots in the 1950s. Already in 1950, Alan Turing published an article titled "Computing Machinery and Intelligence" which proposed what is now called the Turing test as a criterion of intelligence, ...

Artificial Intelligence

Artificial intelligence (AI) is intelligence (perceiving, synthesizing, and inferring information) demonstrated by machines, as opposed to intelligence displayed by animals and humans. Example tasks in which this is done include speech recognition, computer vision, translation between (natural) languages, as well as other mappings of inputs. The *Oxford English Dictionary* of Oxford University Press defines artificial intelligence as: the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages. AI applications include advanced web search engines (e.g., Google), recommendation systems (used by YouTube, Amazon and Netflix), understanding human speech (such as Siri and Alexa), self-driving cars (e.g., Tesla), automated decision-making and competing at the highest level in strategic game systems (such as chess and Go). ...

Co-occurrence

In linguistics, co-occurrence or cooccurrence is an above-chance frequency of occurrence of two terms (also known as coincidence or concurrence) from a text corpus alongside each other in a certain order. Co-occurrence in this linguistic sense can be interpreted as an indicator of semantic proximity or an idiomatic expression. Corpus linguistics and its statistical analyses reveal patterns of co-occurrence within a language and make it possible to work out typical collocations for its lexical items. A *co-occurrence restriction* is identified when linguistic elements never occur together. Analysis of these restrictions can lead to discoveries about the structure and development of a language. Co-occurrence can be seen as an extension of word counting in higher dimensions. Co-occurrence can be quantitatively described using measures like correlation or mutual information. In probability theory and information theory, the mutual information (MI) of two random variables is a measure ...
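One common way to quantify "above-chance" co-occurrence is pointwise mutual information (PMI), a per-pair relative of the mutual information mentioned above. The sketch below is only an illustration under several assumptions: co-occurrence is counted per sentence and ignores word order, tokens are split on whitespace, probabilities are plain relative frequencies, and the function name `pmi_table` is hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def pmi_table(sentences):
    """Map each co-occurring word pair to log2(P(a, b) / (P(a) * P(b))).

    Probabilities are estimated as the fraction of sentences containing
    the word (or both words). Pairs that never co-occur are omitted.
    """
    total = len(sentences)
    word_counts = Counter()
    pair_counts = Counter()
    for sentence in sentences:
        words = sorted(set(sentence.lower().split()))
        word_counts.update(words)
        pair_counts.update(frozenset(pair) for pair in combinations(words, 2))

    table = {}
    for pair, count in pair_counts.items():
        first, second = sorted(pair)
        p_joint = count / total
        p_first = word_counts[first] / total
        p_second = word_counts[second] / total
        table[pair] = math.log2(p_joint / (p_first * p_second))
    return table


corpus = ["strong tea", "strong coffee", "powerful computer", "strong tea please"]
table = pmi_table(corpus)
strong_tea = table[frozenset({"strong", "tea"})]
```

A positive score means the pair appears together more often than independence would predict, while a pair that is absent from the table never co-occurred in the corpus, which is the situation a co-occurrence restriction describes.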