|

Asymptotic Complexity

In computer science, the computational complexity or simply complexity of an algorithm is the amount of resources required to run it. Particular focus is given to computation time (generally measured by the number of needed elementary operations) and memory storage requirements. The complexity of a problem is the complexity of the best algorithms that allow solving the problem. The study of the complexity of explicitly given algorithms is called analysis of algorithms, while the study of the complexity of problems is called computational complexity theory. Both areas are highly related, as the complexity of an algorithm is always an upper bound on the complexity of the problem solved by this algorithm. Moreover, for designing efficient algorithms, it is often fundamental to compare the complexity of a specific algorithm to the complexity of the problem to be solved. Also, in most cases, the only thing that is known about the complexity of a problem is that it is lower than the c ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Computer Science

Computer science is the study of computation, automation, and information. Computer science spans theoretical disciplines (such as algorithms, theory of computation, information theory, and automation) to Applied science, practical disciplines (including the design and implementation of Computer architecture, hardware and Computer programming, software). Computer science is generally considered an area of research, academic research and distinct from computer programming. Algorithms and data structures are central to computer science. The theory of computation concerns abstract models of computation and general classes of computational problem, problems that can be solved using them. The fields of cryptography and computer security involve studying the means for secure communication and for preventing Vulnerability (computing), security vulnerabilities. Computer graphics (computer science), Computer graphics and computational geometry address the generation of images. Progr ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Distributed Computation

A distributed system is a system whose components are located on different networked computers, which communicate and coordinate their actions by passing messages to one another from any system. Distributed computing is a field of computer science that studies distributed systems. The components of a distributed system interact with one another in order to achieve a common goal. Three significant challenges of distributed systems are: maintaining concurrency of components, overcoming the lack of a global clock, and managing the independent failure of components. When a component of one system fails, the entire system does not fail. Examples of distributed systems vary from SOA-based systems to massively multiplayer online games to peer-to-peer applications. A computer program that runs within a distributed system is called a distributed program, and ''distributed programming'' is the process of writing such programs. There are many different types of implementations for t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Multiplication

Multiplication (often denoted by the cross symbol , by the mid-line dot operator , by juxtaposition, or, on computers, by an asterisk ) is one of the four elementary mathematical operations of arithmetic, with the other ones being addition, subtraction, and division. The result of a multiplication operation is called a ''product''. The multiplication of whole numbers may be thought of as repeated addition; that is, the multiplication of two numbers is equivalent to adding as many copies of one of them, the ''multiplicand'', as the quantity of the other one, the ''multiplier''. Both numbers can be referred to as ''factors''. :a\times b = \underbrace_ For example, 4 multiplied by 3, often written as 3 \times 4 and spoken as "3 times 4", can be calculated by adding 3 copies of 4 together: :3 \times 4 = 4 + 4 + 4 = 12 Here, 3 (the ''multiplier'') and 4 (the ''multiplicand'') are the ''factors'', and 12 is the ''product''. One of the main properties of multiplication is ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Big O Notation

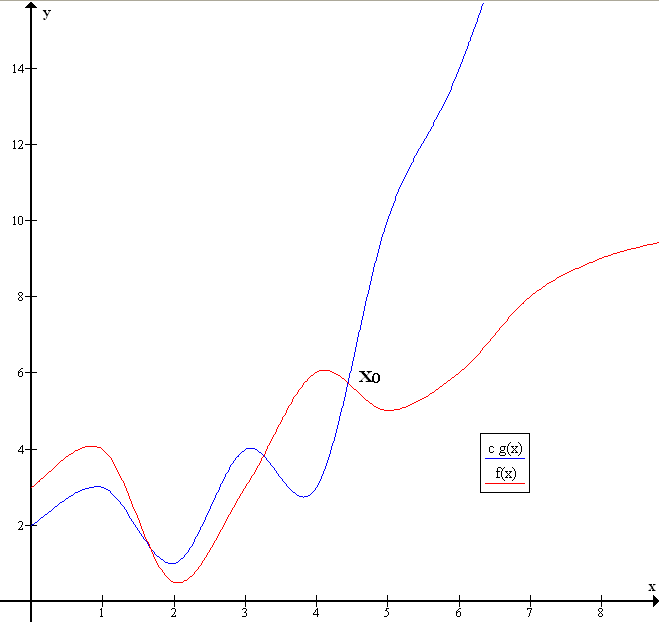

Big ''O'' notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. Big O is a member of a family of notations invented by Paul Bachmann, Edmund Landau, and others, collectively called Bachmann–Landau notation or asymptotic notation. The letter O was chosen by Bachmann to stand for ''Ordnung'', meaning the order of approximation. In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows. In analytic number theory, big O notation is often used to express a bound on the difference between an arithmetical function and a better understood approximation; a famous example of such a difference is the remainder term in the prime number theorem. Big O notation is also used in many other fields to provide similar estimates. Big O notation characterizes functions according to their growth rates: d ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Asymptotic Analysis

In mathematical analysis, asymptotic analysis, also known as asymptotics, is a method of describing limiting behavior. As an illustration, suppose that we are interested in the properties of a function as becomes very large. If , then as becomes very large, the term becomes insignificant compared to . The function is said to be "''asymptotically equivalent'' to , as ". This is often written symbolically as , which is read as " is asymptotic to ". An example of an important asymptotic result is the prime number theorem. Let denote the prime-counting function (which is not directly related to the constant pi), i.e. is the number of prime numbers that are less than or equal to . Then the theorem states that \pi(x)\sim\frac. Asymptotic analysis is commonly used in computer science as part of the analysis of algorithms and is often expressed there in terms of big O notation. Definition Formally, given functions and , we define a binary relation f(x) \sim g(x) \qu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Search Algorithm

In computer science, a search algorithm is an algorithm designed to solve a search problem. Search algorithms work to retrieve information stored within particular data structure, or calculated in the search space of a problem domain, with either discrete or continuous values. algorithms are Although search engines use search algorithms, they belong to the study of information retrieval, not algorithmics. The appropriate search algorithm often depends on the data structure being searched, and may also include prior knowledge about the data. Search algorithms can be made faster or more efficient by specially constructed database structures, such as search trees, hash maps, and database indexes. Search algorithms can be classified based on their mechanism of searching into three types of algorithms: linear, binary, and hashing. Linear search algorithms check every record for the one associated with a target key in a linear fashion. Binary, or half-interval, searches repeatedly ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Sorting

Sorting refers to ordering data in an increasing or decreasing manner according to some linear relationship among the data items. # ordering: arranging items in a sequence ordered by some criterion; # categorizing: grouping items with similar properties. Ordering items is the combination of categorizing them based on equivalent order, and ordering the categories themselves. Sorting information or data In , arranging in an ordered sequence is called "sorting". Sorting is a common operation in many applications, and efficient algorithms to perform it have been developed. The most common uses of sorted sequences are: * making lookup or search efficient; * making merging of sequences efficient. * enable processing of data in a defined order. The opposite of sorting, rearranging a sequence of items in a random or meaningless order, is called shuffling. For sorting, either a weak order, "should not come after", can be specified, or a strict weak order, "should come before" (specif ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Soft O Notation

Big ''O'' notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. Big O is a member of a family of notations invented by Paul Bachmann, Edmund Landau, and others, collectively called Bachmann–Landau notation or asymptotic notation. The letter O was chosen by Bachmann to stand for ''Ordnung'', meaning the order of approximation. In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows. In analytic number theory, big O notation is often used to express a bound on the difference between an arithmetical function and a better understood approximation; a famous example of such a difference is the remainder term in the prime number theorem. Big O notation is also used in many other fields to provide similar estimates. Big O notation characterizes functions according to their growth rates: diff ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Modular Arithmetic

In mathematics, modular arithmetic is a system of arithmetic for integers, where numbers "wrap around" when reaching a certain value, called the modulus. The modern approach to modular arithmetic was developed by Carl Friedrich Gauss in his book ''Disquisitiones Arithmeticae'', published in 1801. A familiar use of modular arithmetic is in the 12-hour clock, in which the day is divided into two 12-hour periods. If the time is 7:00 now, then 8 hours later it will be 3:00. Simple addition would result in , but clocks "wrap around" every 12 hours. Because the hour number starts over at zero when it reaches 12, this is arithmetic ''modulo'' 12. In terms of the definition below, 15 is ''congruent'' to 3 modulo 12, so "15:00" on a 24-hour clock is displayed "3:00" on a 12-hour clock. Congruence Given an integer , called a modulus, two integers and are said to be congruent modulo , if is a divisor of their difference (that is, if there is an integer such that ). Congruence modulo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Exponential Function

The exponential function is a mathematical function denoted by f(x)=\exp(x) or e^x (where the argument is written as an exponent). Unless otherwise specified, the term generally refers to the positive-valued function of a real variable, although it can be extended to the complex numbers or generalized to other mathematical objects like matrices or Lie algebras. The exponential function originated from the notion of exponentiation (repeated multiplication), but modern definitions (there are several equivalent characterizations) allow it to be rigorously extended to all real arguments, including irrational numbers. Its ubiquitous occurrence in pure and applied mathematics led mathematician Walter Rudin to opine that the exponential function is "the most important function in mathematics". The exponential function satisfies the exponentiation identity e^ = e^x e^y \text x,y\in\mathbb, which, along with the definition e = \exp(1), shows that e^n=\underbrace_ for positive i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Gaussian Elimination

In mathematics, Gaussian elimination, also known as row reduction, is an algorithm for solving systems of linear equations. It consists of a sequence of operations performed on the corresponding matrix of coefficients. This method can also be used to compute the rank of a matrix, the determinant of a square matrix, and the inverse of an invertible matrix. The method is named after Carl Friedrich Gauss (1777–1855) although some special cases of the method—albeit presented without proof—were known to Chinese mathematicians as early as circa 179 AD. To perform row reduction on a matrix, one uses a sequence of elementary row operations to modify the matrix until the lower left-hand corner of the matrix is filled with zeros, as much as possible. There are three types of elementary row operations: * Swapping two rows, * Multiplying a row by a nonzero number, * Adding a multiple of one row to another row. (subtraction can be achieved by multiplying one row with -1 and adding ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Integer Matrix

In mathematics, an integer matrix is a matrix whose entries are all integers. Examples include binary matrices, the zero matrix, the matrix of ones, the identity matrix, and the adjacency matrices used in graph theory, amongst many others. Integer matrices find frequent application in combinatorics. Examples :\left(\begin 5 & 2 & 6 & 0\\ 4 & 7 & 3 & 8\\ 5 & 9 & 0 & 4\\ 3 & 1 & 0 & \!\!\!-3\\ 9 & 0 & 2 & 1\end\right) and \left(\begin 1 & 5 & 0\\ 0 & 9 & 2\\ 1 & 7 & 3\end\right) are both examples of integer matrices. Properties Invertibility of integer matrices is in general more numerically stable than that of non-integer matrices. The determinant of an integer matrix is itself an integer, thus the numerically smallest possible magnitude of the determinant of an invertible integer matrix is one, hence where inverses exist they do not become excessively large (see condition number). Theorems from matrix theory that infer properties from determinants thu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |